Scaling Gemini API Requests and Managing Rate Limits in Google Apps Script

Building a basic Gemini API proof-of-concept in Google Workspace is easy, but scaling it to process thousands of records requires a major paradigm shift. Discover how to overcome the challenges of enterprise-grade AI automation and turn your workflows into a massive productivity multiplier.

The Challenge of Scaling Gemini API in Workspace Applications

Integrating the Gemini API into Google Workspace is a massive force multiplier for productivity. Building a proof-of-concept that summarizes a single Google Doc or extracts entities from a specific Gmail thread is relatively straightforward. However, a significant paradigm shift occurs when you attempt to transition from a single-execution script to an enterprise-grade automation that processes thousands of records.

When you scale up (perhaps iterating through a massive Google Sheet to generate bulk personalized emails, or scanning an entire Google Drive folder to classify hundreds of PDFs), you immediately collide with the architectural constraints of both Google Apps Script and the Gemini API. Scaling in this environment isn’t just about writing efficient code; it is about orchestrating distributed workloads within strict execution boundaries, managing state, and respecting hard network limits.

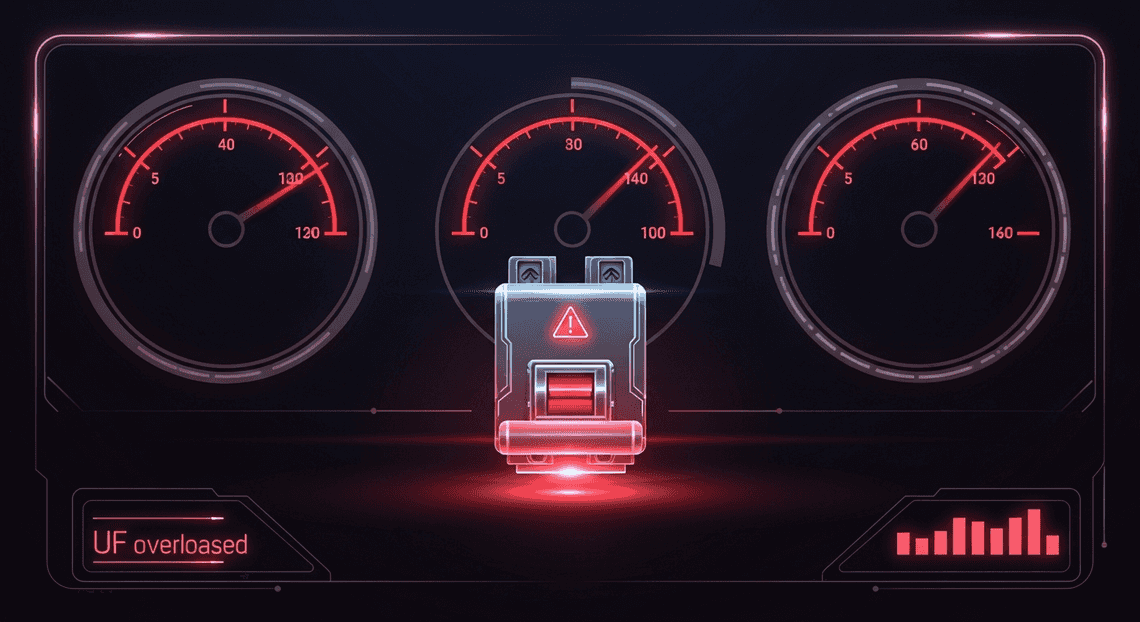

Understanding 429 Too Many Requests Errors

The most immediate roadblock developers face when scaling Gemini API calls is the dreaded HTTP 429 Too Many Requests status code. This error is the API’s built-in circuit breaker, signaling that your application has exceeded its allocated quota and further requests are being temporarily rejected.

To effectively manage 429 errors, you must understand that the Gemini API evaluates rate limits across multiple dimensions simultaneously:

- RPM (Requests Per Minute): The raw number of API calls made within a 60-second rolling window. A simple for loop in Apps Script utilizing UrlFetchApp.fetch() sends requests back to back with no pacing and can quickly exhaust standard-tier RPM limits.

- TPM (Tokens Per Minute): This is often the silent killer in Workspace integrations. Even if you stay well below your RPM limit, sending massive payloads (like full transcripts or multi-page Google Docs) can rapidly consume your TPM quota. Once the token threshold is breached, the API will return a 429 error regardless of your request count.

- RPD (Requests Per Day): The absolute ceiling for daily usage, which varies heavily depending on whether you are using the free tier or a paid Google Cloud project with billing enabled.

In Google Apps Script, unhandled 429 errors are fatal. Because UrlFetchApp throws an exception upon receiving a non-2xx HTTP response by default, hitting a rate limit will abruptly terminate your script. This leaves your Workspace data in an inconsistent state—half of your spreadsheet might be processed, while the other half remains untouched, creating a data integrity nightmare.

Analyzing High Volume Document Processing Bottlenecks

While API rate limits are a primary concern, they are only one part of the scaling equation. When you are processing high volumes of Workspace documents, the execution environment itself introduces severe bottlenecks. Analyzing these chokepoints is critical for designing resilient cloud architectures.

1. The Synchronous Nature of Apps Script

Google Apps Script is inherently synchronous. When you call UrlFetchApp.fetch(geminiEndpoint, options), the V8 engine blocks further execution until the Gemini API returns a response. If you are processing 500 documents and the LLM takes an average of 3 seconds to generate a response for each, your script will spend 25 minutes purely waiting on network I/O.

2. The 6-Minute Execution Limit

This synchronous blocking leads directly to the most infamous Apps Script bottleneck: the maximum execution time limit. Standard Google accounts are capped at 6 minutes per script execution (up to 30 minutes for Google Workspace Enterprise accounts). When processing large batches of documents, you will inevitably hit this wall, resulting in an “Exceeded maximum execution time” error.
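To make the arithmetic concrete, here is a minimal sketch that estimates how many separate executions a batch would need. The function name and the 300-second per-run budget are illustrative assumptions, not values taken from any API.

```javascript
// Rough estimate of how many chained executions a batch needs.
// All inputs are illustrative assumptions, not measured values.
function estimateExecutions(docCount, avgSecondsPerCall, budgetSecondsPerRun) {
  const docsPerRun = Math.floor(budgetSecondsPerRun / avgSecondsPerCall);
  if (docsPerRun < 1) {
    throw new Error('A single call does not fit in the per-run budget.');
  }
  return {
    totalWaitMinutes: (docCount * avgSecondsPerCall) / 60,
    docsPerRun: docsPerRun,
    runsNeeded: Math.ceil(docCount / docsPerRun)
  };
}

// 500 documents at ~3 seconds each, leaving a 5-minute budget per run.
const estimate = estimateExecutions(500, 3, 300);
console.log(JSON.stringify(estimate));
// {"totalWaitMinutes":25,"docsPerRun":100,"runsNeeded":5}
```

With these numbers, the 25 minutes of waiting cannot fit into one 6-minute run and has to be spread across at least five executions.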

3. Payload Extraction and Context Window Overhead

Extracting text from Workspace files is computationally expensive. Converting a complex Google Doc with tables and images into a clean text string, or parsing a large Google Sheet into a JSON array, consumes valuable Apps Script memory and execution time. Furthermore, feeding these large extracted payloads into Gemini’s context window increases the LLM’s processing time, compounding the synchronous wait times mentioned above.

4. State Management and Resumability

Because high-volume processing is guaranteed to hit either a 429 rate limit or a 6-minute timeout, the lack of native state management in Apps Script becomes a critical bottleneck. If a script fails on document 842 out of 1,000, the system must know exactly where to resume on the next execution. Without a robust mechanism to track progress (such as utilizing Properties Service, a dedicated tracking Sheet, or Google Cloud Firestore), developers are forced to restart the entire batch, wasting API quotas and risking infinite failure loops.

Architectural Patterns for API Rate Limiting

When integrating advanced AI models into your workflows, writing the HTTP request is the easy part; ensuring that request survives at scale is where true cloud engineering begins. Bridging Google Apps Script (a serverless environment with rigid execution boundaries) and the Gemini API (a high-demand service with strict throughput limits) requires a shift from simple scripting to robust system design. To prevent cascading failures, data loss, or script timeouts, we must implement architectural patterns that respect the constraints of both environments.

Navigating Quotas in Google Apps Script

To design an effective rate-limiting strategy, you must first map the operational boundaries of your environment. When calling the Gemini API from Google Workspace, you are squeezed between two distinct sets of quotas: the execution limits of Google Apps Script and the rate limits of the Gemini API.

Google Apps Script Constraints:

- Execution Time Limits: The most notorious bottleneck. A standard Apps Script execution is capped at 6 minutes (up to 30 minutes for Google Workspace Enterprise accounts). If your script is processing hundreds of rows in a Google Sheet and waiting for Gemini API responses, you will inevitably hit this wall.

- UrlFetchApp Quotas: Google imposes daily limits on the UrlFetchApp service (typically 20,000 to 100,000 calls per day, depending on your Workspace tier).

- Concurrent Executions: Apps Script limits how many scripts can run simultaneously. Brute-forcing parallel requests via client-side calls can lead to concurrent execution errors.

Gemini API Constraints:

- Requests Per Minute (RPM) & Requests Per Day (RPD): The hard limits on how many individual HTTP calls you can make.

- Tokens Per Minute (TPM): A more dynamic limit based on the volume of text (or multimodal data) you are sending and receiving. You might be well under your RPM limit but still hit a 429 Too Many Requests error if your prompts are massive.

The architectural challenge lies in the intersection of these quotas. If you use Utilities.sleep() aggressively to avoid Gemini’s RPM limits, you artificially inflate your script’s runtime, virtually guaranteeing you will crash into Apps Script’s 6-minute execution limit. Navigating this requires moving away from synchronous, linear loops and adopting a decoupled, state-aware approach.

Designing a Resilient Request Pipeline

To build a pipeline capable of processing thousands of Gemini API requests without failing, we must borrow concepts from enterprise cloud architecture—specifically decoupling, state management, and intelligent retry logic. Here are the core patterns to implement in your Google Apps Script environment:

1. Exponential Backoff with Jitter

When you hit a Gemini API rate limit (HTTP 429), immediately retrying the request will only exacerbate the problem. Instead, implement an exponential backoff algorithm. This pattern pauses the script for progressively longer intervals (e.g., 1s, 2s, 4s) before retrying. Adding “jitter” (a randomized variance to the sleep time) prevents the “thundering herd” problem if multiple Apps Script instances are running concurrently.

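    function fetchWithBackoff(url, options, maxRetries = 5) {
      // Mute HTTP exceptions so the status code can be inspected directly
      // rather than matched against the exception message.
      const params = Object.assign({}, options, { muteHttpExceptions: true });
      for (let i = 0; i < maxRetries; i++) {
        const response = UrlFetchApp.fetch(url, params);
        const code = response.getResponseCode();
        if (code >= 200 && code < 300) return response;
        if (code !== 429 && code < 500) {
          // Fail fast on non-retryable errors (e.g., 400 Bad Request)
          throw new Error("Non-retryable error: HTTP " + code);
        }
        if (i < maxRetries - 1) {
          // Exponential delay (1s, 2s, 4s, ...) plus up to 1s of jitter
          const sleepTime = Math.pow(2, i) * 1000 + Math.round(Math.random() * 1000);
          Utilities.sleep(sleepTime);
        }
      }
      throw new Error("Max retries exceeded");
    }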

2. The State Machine & Time-Driven Continuation

To defeat the 6-minute execution limit, your script must become a state machine. Instead of attempting to process an entire dataset in one run, the script should process a batch, monitor its own execution time, and gracefully pause before the timeout occurs.

- Use Date.now() at the start of your script.

- After each Gemini API call, check the elapsed time.

- If the elapsed time approaches 4.5 to 5 minutes, save the current index/state using the PropertiesService or by writing a “status” column directly into a Google Sheet.

- Programmatically create a Time-Driven Trigger (ScriptApp.newTrigger()) to fire a new instance of the script 1 minute later to pick up exactly where the last execution left off, then terminate the current execution.

3. Caching to Conserve TPM/RPM

Never ask the Gemini API the same question twice. If your workflow involves categorizing data or generating responses based on repetitive inputs, wrap your API calls in the Apps Script CacheService. By hashing the prompt and checking the cache before invoking UrlFetchApp, you drastically reduce your RPM and TPM consumption, saving your quotas for unique payloads.
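A minimal sketch of this caching pattern follows. The names cachedGenerate and bytesToHex are hypothetical helpers, and the cache and digest are passed in as parameters so the logic does not depend on any particular service. In Apps Script you would likely pass CacheService.getScriptCache() and a function that wraps Utilities.computeDigest(Utilities.DigestAlgorithm.SHA_256, prompt).

```javascript
// Convert the signed byte array returned by a digest function
// (e.g., Utilities.computeDigest in Apps Script) into a hex string.
function bytesToHex(bytes) {
  return bytes.map(function (b) {
    return (b & 0xff).toString(16).padStart(2, '0');
  }).join('');
}

// Return the cached answer when the same prompt was seen before;
// otherwise call generate(prompt) once and store the result.
//   cache:  object with get(key) and put(key, value, seconds)
//   digest: function(string) -> array of bytes
function cachedGenerate(prompt, generate, cache, digest, ttlSeconds) {
  const key = 'gemini:' + bytesToHex(digest(prompt));
  const hit = cache.get(key);
  if (hit !== null && hit !== undefined) {
    return hit;
  }
  const result = generate(prompt);
  // 21600 seconds (6 hours) is the longest expiration CacheService accepts.
  cache.put(key, result, ttlSeconds || 21600);
  return result;
}
```

Hashing keeps the key short regardless of prompt length. Note that CacheService caps each cached value at 100 KB, so very long responses would need a different store.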

4. Cloud-Native Offloading (The Enterprise Pattern)

If your workload scales beyond what Apps Script triggers and PropertiesService can reliably handle, it is time to offload the queue management to Google Cloud Platform (GCP). In this pattern, Apps Script acts merely as the ingestion layer. It reads the Google Workspace data and pushes the payloads to Google Cloud Pub/Sub or Cloud Tasks.

From there, a Google Cloud Function or Cloud Run service—configured with strict concurrency limits matching your Gemini API quotas—consumes the queue at a controlled rate. This completely removes the Apps Script 6-minute timeout from the equation and provides enterprise-grade observability, dead-letter queues, and guaranteed delivery for your AI workloads.
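As a rough illustration of the ingestion side, the sketch below builds a Pub/Sub publish request in the shape UrlFetchApp expects. The function name buildPublishRequest, the project and topic IDs, and the payload shape are all assumptions made for the example. The encoder and access token are passed in so the function has no hard dependency on Apps Script services.

```javascript
// Build the URL and UrlFetchApp-style options for a Pub/Sub publish call.
// projectId, topicId, and the payload shape are placeholders.
//   base64Encode: function(string) -> base64 string
function buildPublishRequest(projectId, topicId, payloads, accessToken, base64Encode) {
  const url = 'https://pubsub.googleapis.com/v1/projects/' + projectId +
      '/topics/' + topicId + ':publish';
  // Pub/Sub expects each message body as base64-encoded data.
  const messages = payloads.map(function (payload) {
    return { data: base64Encode(JSON.stringify(payload)) };
  });
  return {
    url: url,
    options: {
      method: 'post',
      contentType: 'application/json',
      headers: { Authorization: 'Bearer ' + accessToken },
      payload: JSON.stringify({ messages: messages }),
      muteHttpExceptions: true
    }
  };
}
```

In Apps Script, one plausible call is buildPublishRequest(projectId, topicId, rows, ScriptApp.getOAuthToken(), Utilities.base64Encode), followed by UrlFetchApp.fetch(req.url, req.options). This assumes the script's OAuth scopes and linked Cloud project permit publishing to the topic.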

Implementing Exponential Backoff Algorithms

When scaling your interactions with the Gemini API, hitting rate limits—often represented by HTTP 429 (Too Many Requests) status codes—is not a matter of if, but when. As your Google Apps Script processes larger datasets or serves more users, the volume of outbound requests can easily outpace the allocated quota. To handle these bottlenecks gracefully without crashing your application or discarding valuable data, implementing an exponential backoff algorithm is an absolute necessity.

The Core Logic Behind Exponential Backoff

At its heart, exponential backoff is a standard error-handling strategy for network applications. When a request fails due to rate limiting or a transient server error, the script does not immediately retry the request. Immediate retries would only further overwhelm the API endpoint and guarantee subsequent failures.

Instead, the algorithm dictates that the client should wait for a specific period before trying again. If the second attempt fails, the wait time is increased exponentially. For example, your script might wait 1 second before the first retry, 2 seconds before the second, 4 seconds before the third, and 8 seconds before the fourth.

Mathematically, with the attempt number starting at 0 for the first retry, the delay is calculated as:

Wait Time = Base Delay × (2 ^ Attempt Number)

To make this algorithm truly robust in a distributed environment, cloud engineers introduce a concept called jitter. Jitter adds a randomized millisecond offset to the calculated wait time. If multiple instances of your script hit a rate limit simultaneously, a strict exponential backoff would cause them all to retry at the exact same moment, creating a “thundering herd” effect. Jitter spreads these retries out, significantly increasing the likelihood of a successful API call.
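The formula and the jitter offset can be sketched as a single pure function. The name backoffDelayMs, the upper cap on the delay, and the injectable random source are assumptions added for the example. The cap is not part of the formula above; it simply stops the delay from growing without bound.

```javascript
// Delay before retry number `attempt` (0-based): base * 2^attempt,
// capped at maxDelayMs, plus random jitter in [0, maxJitterMs).
// `random` is injectable so the schedule can be inspected deterministically.
function backoffDelayMs(attempt, baseDelayMs, maxDelayMs, maxJitterMs, random) {
  const rand = random || Math.random;
  const exponential = Math.min(baseDelayMs * Math.pow(2, attempt), maxDelayMs);
  return exponential + Math.floor(rand() * maxJitterMs);
}

// Schedule with jitter disabled, matching the 1s, 2s, 4s, 8s example.
const schedule = [0, 1, 2, 3].map(function (attempt) {
  return backoffDelayMs(attempt, 1000, 30000, 500, function () { return 0; });
});
console.log(schedule.join(', ')); // 1000, 2000, 4000, 8000
```

Omitting the last argument falls back to Math.random, so each concurrent execution draws a different offset.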

Leveraging Utilities.sleep for Execution Delays

Unlike Node.js or Python, where you might use asynchronous functions like setTimeout or asyncio.sleep to pause execution without blocking the main thread, Google Apps Script operates in a strictly synchronous environment. To pause execution in Apps Script, we rely on the built-in Utilities.sleep(milliseconds) method.

When Utilities.sleep() is invoked, the script halts entirely for the specified duration. While this perfectly serves the requirement of delaying our Gemini API retries, it introduces a critical Google Workspace constraint: the 6-minute execution limit.

Standard Google Apps Script executions are forcefully terminated if they run longer than 6 minutes (or 30 minutes for Google Workspace Enterprise accounts). Because Utilities.sleep() counts directly against this execution quota, your backoff parameters must be carefully tuned. You cannot allow infinite retries, nor can you allow the exponential delay to grow too large, or your script will time out and fail entirely. A maximum of 4 to 5 retries with a base delay of 1,000 to 2,000 milliseconds is generally the sweet spot for Apps Script.
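To see why those parameters fit, a small sketch can add up the worst-case sleep time for a single request that needs every retry. The function name worstCaseSleepMs and the 500 ms jitter ceiling are assumptions for illustration.

```javascript
// Upper bound on total time spent sleeping if every retry is needed:
// sum of base * 2^k for k = 0..maxRetries-1, plus maximum jitter per retry.
function worstCaseSleepMs(maxRetries, baseDelayMs, maxJitterMs) {
  const exponentialTotal = baseDelayMs * (Math.pow(2, maxRetries) - 1);
  return exponentialTotal + maxRetries * maxJitterMs;
}

console.log(worstCaseSleepMs(5, 1000, 500)); // 33500  (about 34 seconds)
console.log(worstCaseSleepMs(5, 2000, 500)); // 64500  (about 65 seconds)
console.log(worstCaseSleepMs(8, 2000, 500)); // 514000 (over 8 minutes)
```

Five retries cost at most about a minute of sleep per request, while eight retries at a 2-second base would by themselves exceed a 6-minute execution.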

Building a Robust Retry Mechanism

To implement this in your Google Apps Script projects, it is best practice to create a generic wrapper function. This allows you to encapsulate the UrlFetchApp call, the error checking, and the Utilities.sleep() logic into a single, reusable block of code.

Here is a production-ready implementation of an exponential backoff retry mechanism tailored for the Gemini API:

    /**
     * Executes an HTTP request with exponential backoff and jitter.
     *
     * @param {string} url - The Gemini API endpoint.
     * @param {Object} options - The UrlFetchApp options object.
     * @param {number} [maxRetries=5] - Maximum number of retry attempts.
     * @returns {GoogleAppsScript.URL_Fetch.HTTPResponse} The successful API response.
     */
    function fetchGeminiWithBackoff(url, options, maxRetries = 5) {
      const baseDelay = 1000; // 1 second base delay

      // Enforce muteHttpExceptions to handle status codes manually.
      // Copy the options so the caller's object is not modified.
      const params = Object.assign({}, options, { muteHttpExceptions: true });

      for (let attempt = 0; attempt <= maxRetries; attempt++) {
        const response = UrlFetchApp.fetch(url, params);
        const statusCode = response.getResponseCode();

        // 2xx - Return the response immediately
        if (statusCode >= 200 && statusCode < 300) {
          return response;
        }

        // Check for Rate Limits (429) or Server Errors (5xx)
        if (statusCode === 429 || statusCode >= 500) {
          if (attempt === maxRetries) {
            throw new Error(`Gemini API failed after ${maxRetries} retries. Status: ${statusCode}. Response: ${response.getContentText()}`);
          }

          // Calculate exponential backoff with jitter
          const exponentialDelay = baseDelay * Math.pow(2, attempt);
          const jitter = Math.floor(Math.random() * 500); // 0 to 499 ms of randomness
          const totalDelay = exponentialDelay + jitter;

          console.warn(`[Retry ${attempt + 1}/${maxRetries}] Rate limit or server error (${statusCode}). Sleeping for ${totalDelay}ms...`);

          // Pause script execution
          Utilities.sleep(totalDelay);
        } else {
          // For client errors (e.g., 400 Bad Request, 401 Unauthorized, 403 Forbidden),
          // do not retry as the request itself is malformed or unauthorized.
          throw new Error(`Fatal API Error. Status: ${statusCode}. Response: ${response.getContentText()}`);
        }
      }
    }

Key features of this implementation:

- muteHttpExceptions: true: By default, UrlFetchApp throws a fatal exception on any 4xx or 5xx error. By muting exceptions, we capture the HTTP response object and inspect the status code programmatically, giving us granular control over which errors trigger a retry.

- Targeted Retries: The script only backs off and retries for 429 (Rate Limit) and 5xx (Server Error) codes. If you send a malformed payload resulting in a 400 Bad Request, the script fails fast, saving valuable execution time.

- Integrated Jitter: The Math.random() * 500 term ensures that concurrent script executions won’t perfectly sync up their retry attempts, smoothing out the load on the Gemini API.
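For completeness, here is a hedged sketch of how the url and options for such a wrapper might be built, and how the response body might be read. The helper names buildGeminiRequest and parseGeminiResponse are hypothetical, and the model name is supplied by the caller. The request and response shapes follow the Gemini REST generateContent format as I understand it, so verify them against the current API reference.

```javascript
// Build the endpoint URL and UrlFetchApp-style options for generateContent.
// The model name and API key are placeholders supplied by the caller.
function buildGeminiRequest(model, prompt, apiKey) {
  return {
    url: 'https://generativelanguage.googleapis.com/v1beta/models/' +
        encodeURIComponent(model) + ':generateContent',
    options: {
      method: 'post',
      contentType: 'application/json',
      headers: { 'x-goog-api-key': apiKey },
      payload: JSON.stringify({ contents: [{ parts: [{ text: prompt }] }] })
    }
  };
}

// Pull the generated text and token usage out of a parsed response body.
// Returns null text when the response carries no candidate parts.
function parseGeminiResponse(body) {
  const candidate = body.candidates && body.candidates[0];
  const parts = (candidate && candidate.content && candidate.content.parts) || [];
  const text = parts.map(function (p) { return p.text || ''; }).join('');
  return {
    text: parts.length ? text : null,
    totalTokens: body.usageMetadata ? body.usageMetadata.totalTokenCount : null
  };
}
```

A typical flow would pass req.url and req.options to the retry wrapper, then call parseGeminiResponse(JSON.parse(response.getContentText())). Keeping the API key in script properties rather than in source is a sensible default.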

Advanced Queue Management and Request Batching

When scaling interactions with the Gemini API, you are inevitably caught between two immovable constraints: the strict rate limits of the Gemini API (measured in Requests Per Minute and Tokens Per Minute) and the execution time limits of Google Apps Script (typically 6 minutes per execution). Overcoming these bottlenecks requires moving beyond simple synchronous loops and implementing advanced queueing, batching, and state management architectures.

Structuring Rate Limiting Queues

In a serverless environment like Google Apps Script, memory is ephemeral. To build a resilient rate-limiting queue, you must decouple the generation of a request from its execution using a persistent storage layer.

For lightweight to medium workloads, Google Sheets or the PropertiesService act as excellent queue backends. For enterprise-grade scaling, integrating Google Cloud Firestore via its REST API is highly recommended. Regardless of the storage medium, a robust queue structure should contain the following metadata for each Gemini request:

- Request ID: A unique identifier (UUID) for idempotency.

- Payload: The actual prompt and model configuration.

- Status: PENDING, PROCESSING, COMPLETED, or FAILED.

- Retry Count: To implement exponential backoff for 429 Too Many Requests errors.

- Estimated Token Count: Crucial for staying under Gemini’s TPM (Tokens Per Minute) limits.

To process this queue without violating rate limits, implement a “Token Bucket” or “Leaky Bucket” algorithm within your script. Before the processor pulls a request from the PENDING queue, it checks a centralized timestamp (stored in CacheService for fast read/writes) to calculate how many requests or tokens have been consumed in the current rolling window. If the limit is approaching, the script intentionally sleeps using Utilities.sleep() or defers execution, ensuring you never overwhelm the Gemini endpoint.
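One way to sketch the rolling-window check is a small sliding-window limiter that tracks both requests and estimated tokens. This is a simplified stand-in for a token bucket, not a full implementation. The name createRateLimiter, the event shape, and the explicit clock argument are assumptions. Persisting the snapshot (for example as JSON in CacheService) is left to the caller.

```javascript
// Sliding-window limiter: tracks requests and estimated tokens used in the
// last windowMs and reports how long to wait before the next call fits.
// The limits are placeholders; use your tier's actual quotas.
function createRateLimiter(maxRequests, maxTokens, windowMs, savedEvents) {
  let events = (savedEvents || []).slice(); // entries: { time, tokens }

  function prune(now) {
    events = events.filter(function (e) { return now - e.time < windowMs; });
  }

  return {
    // Milliseconds until a request of `tokens` size fits; 0 if it fits now.
    waitTimeMs: function (tokens, now) {
      if (tokens > maxTokens) {
        throw new Error('Request is larger than the whole token budget.');
      }
      prune(now);
      let usedRequests = events.length;
      let usedTokens = events.reduce(function (sum, e) { return sum + e.tokens; }, 0);
      let wait = 0;
      // Walk through events in order of expiry until both budgets have room.
      for (let i = 0; i < events.length; i++) {
        if (usedRequests < maxRequests && usedTokens + tokens <= maxTokens) break;
        wait = events[i].time + windowMs - now;
        usedRequests -= 1;
        usedTokens -= events[i].tokens;
      }
      return wait;
    },
    // Call after each dispatched request.
    record: function (tokens, now) {
      events.push({ time: now, tokens: tokens });
    },
    // Plain array suitable for JSON.stringify and later restoration.
    snapshot: function () {
      return events.slice();
    }
  };
}
```

In a queue processor, the returned wait time could be passed to Utilities.sleep() when it is short, or used to defer the item to the next execution when it is long.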

Batching Requests for Maximum Throughput

Sending requests sequentially to the Gemini API is highly inefficient, as network latency compounds with every call. To maximize throughput, you must leverage request batching.

Google Apps Script provides a powerful, often underutilized method for concurrent network requests: UrlFetchApp.fetchAll(). By grouping your queued Gemini requests into an array of HTTP request objects, you can dispatch them simultaneously.

However, batching requires careful orchestration to avoid triggering immediate rate limits:

- Chunking by Quota: Instead of batching an arbitrary number of requests, dynamically size your batches based on the Gemini model’s specific limits. If your tier allows 60 RPM, and you have 100 queued items, chunk them into batches of 10-15 requests.

- Token-Aware Batching: Calculate the estimated token size of the prompts in your batch. If a single batch exceeds the TPM limit, subdivide it further.

- Handling Partial Failures: When using UrlFetchApp.fetchAll(), some requests in the array may succeed while others return a 429 or 500 error (set muteHttpExceptions: true on each request so that these responses are returned instead of throwing). Your script must parse the array of HTTP responses, map them back to the original Request IDs, and update the queue accordingly, marking successes as COMPLETED and returning failures to PENDING with an incremented retry count.
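The chunking and partial-failure bookkeeping can be sketched with two pure helpers. The names chunk and applyBatchResults, the queue item fields, and the maxRetries threshold are illustrative assumptions. The sketch relies on fetchAll() returning responses in the same order as the requests.

```javascript
// Split a queue into fixed-size batches.
function chunk(items, size) {
  const batches = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Map status codes back to queue items by position and return updated
// copies. Retryable failures go back to PENDING until maxRetries is
// exceeded; client errors are marked FAILED immediately.
function applyBatchResults(items, statusCodes, maxRetries) {
  return items.map(function (item, i) {
    const code = statusCodes[i];
    if (code >= 200 && code < 300) {
      return Object.assign({}, item, { status: 'COMPLETED' });
    }
    if (code === 429 || code >= 500) {
      const retryCount = item.retryCount + 1;
      return Object.assign({}, item, {
        status: retryCount > maxRetries ? 'FAILED' : 'PENDING',
        retryCount: retryCount
      });
    }
    return Object.assign({}, item, { status: 'FAILED' });
  });
}
```

In Apps Script, the status codes would come from something like UrlFetchApp.fetchAll(requests).map(function (r) { return r.getResponseCode(); }), where each request object carries muteHttpExceptions: true.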

Managing State Across Multiple Script Executions

Because Google Apps Script terminates executions after 6 minutes (or 30 minutes for Workspace Enterprise accounts), processing a large queue of Gemini requests in a single run is impossible. The solution is the Chained Trigger Pattern, which requires meticulous state management across multiple script executions.

To implement this, your queue processor must be strictly time-aware. At the start of the execution, record the start time using Date.now(). After processing each batch, calculate the elapsed time. Once the script approaches the safe threshold (e.g., 4.5 to 5 minutes), it must initiate a graceful shutdown sequence:

- Commit State: Write the final status of all processed requests back to your persistent storage (Sheets, Properties, or Firestore).

- Set Resumption Pointers: Save the index or ID of the next item to be processed in the PropertiesService.

- Schedule the Next Run: Use the ScriptApp service to programmatically create a new time-driven trigger set to fire a minute or two in the future (e.g., ScriptApp.newTrigger("processQueue").timeBased().after(60000).create();).

- Clean Up: Delete the current trigger that initiated the execution to prevent infinite trigger proliferation, and terminate the script cleanly.
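The scheduling and clean-up steps can be combined in one helper, sketched below. The name rescheduleHandler is hypothetical, and the ScriptApp service is passed in as a parameter so the logic can be exercised with a stub. Note that this version removes every existing trigger for the handler, which is only appropriate if the handler is not also attached to a recurring trigger you want to keep.

```javascript
// Replace any existing triggers for handlerName with a single one-off
// trigger that fires after delayMs. Returns how many triggers were removed.
//   scriptApp: the Apps Script ScriptApp service (or a stub with the
//              same methods when running outside Apps Script)
function rescheduleHandler(scriptApp, handlerName, delayMs) {
  let removed = 0;
  scriptApp.getProjectTriggers().forEach(function (trigger) {
    if (trigger.getHandlerFunction() === handlerName) {
      scriptApp.deleteTrigger(trigger);
      removed += 1;
    }
  });
  scriptApp.newTrigger(handlerName).timeBased().after(delayMs).create();
  return removed;
}
```

Inside Apps Script, the call would look like rescheduleHandler(ScriptApp, 'processQueue', 60 * 1000), made just before the current execution returns.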

By maintaining state externally and chaining executions, your Google Apps Script can effectively run indefinitely in the background, continuously chewing through massive queues of Gemini API requests while perfectly respecting both Google Cloud quotas and Apps Script limitations.

Deploying High Volume Jobs Successfully

Transitioning a Gemini API integration from a lightweight proof-of-concept to a production-grade pipeline requires a fundamental shift in architecture. When you are operating within Google Apps Script (GAS), you are bound by strict quotas, most notably the 6-minute execution time limit (or 30 minutes for Google Workspace Enterprise accounts) and the Gemini API’s specific Requests Per Minute (RPM) and Tokens Per Minute (TPM) limits. Deploying high-volume jobs successfully means designing for idempotency, stateful execution, and robust telemetry.

Processing Thousands of Workspace Documents Daily

To process thousands of Google Docs, Sheets, or Gmail threads daily without hitting execution timeouts or overwhelming the Gemini API, you must abandon the single-pass execution model. Instead, adopt a “Chunk and Chain” architectural pattern. This involves breaking your massive workload into smaller, manageable batches and using time-driven triggers to chain executions together.

Here is how a Cloud Engineer approaches high-volume document processing in Apps Script:

- State Management: You need a reliable way to track progress. Use the PropertiesService (specifically ScriptProperties) or a dedicated control tab in a Google Sheet to store a “continuation token” or the index of the last processed document. If the script is interrupted, the next run knows exactly where to resume.

- Execution Time Monitoring: Do not wait for Google Apps Script to forcefully terminate your script. Implement a time-check mechanism within your processing loop. If the script approaches the 5-minute mark, gracefully halt the loop, save the current state, and programmatically spawn a new time-driven trigger to pick up the remaining queue.

- Batching for Rate Limits: If your Gemini API tier allows for 60 RPM, trying to process 100 documents in a tight for loop will result in 429 Too Many Requests errors. Implement a queue system that processes documents in batches that align with your quota, utilizing Utilities.sleep() strategically to pace the requests, or distributing the workload across the hour using staggered triggers.

Here is a conceptual framework for a stateful, time-aware processing loop:

    function processDocumentQueue() {
      const startTime = Date.now();
      const MAX_EXECUTION_TIME = 5 * 60 * 1000; // 5 minutes safe buffer
      const scriptProperties = PropertiesService.getScriptProperties();
      let currentIndex = parseInt(scriptProperties.getProperty('LAST_PROCESSED_INDEX') || '0', 10);

      // Assume getPendingDocuments() fetches an array of Workspace File IDs
      const pendingDocs = getPendingDocuments();

      while (currentIndex < pendingDocs.length) {
        // 1. Check remaining time
        if (Date.now() - startTime > MAX_EXECUTION_TIME) {
          console.info(`Approaching execution limit. Saving state at index ${currentIndex}.`);
          scriptProperties.setProperty('LAST_PROCESSED_INDEX', currentIndex.toString());
          scheduleNextRun(); // Programmatically create a trigger for 1 minute from now
          return;
        }

        // 2. Process the document with Gemini
        try {
          const docId = pendingDocs[currentIndex];
          callGeminiApiForDocument(docId);
          currentIndex++;
        } catch (error) {
          // Handle specific rate limit errors (e.g., exponential backoff)
          if (isRateLimitError(error)) {
            console.warn('Rate limit hit. Pausing execution.');
            Utilities.sleep(10000); // 10-second pause, then retry the same document
          } else {
            // Save progress so the next run does not reprocess completed documents
            scriptProperties.setProperty('LAST_PROCESSED_INDEX', currentIndex.toString());
            throw error; // Escalate unexpected errors
          }
        }
      }

      // Clear state upon successful completion of the entire queue
      scriptProperties.deleteProperty('LAST_PROCESSED_INDEX');
      console.info('All documents processed successfully.');
    }

Monitoring Performance and Logging Errors

When processing thousands of documents asynchronously, silent failures are your biggest enemy. A robust logging and monitoring strategy is non-negotiable for enterprise deployments. Fortunately, Google Apps Script integrates natively with Google Cloud Logging (formerly Stackdriver), providing a powerful suite of tools to monitor your Gemini API workloads.

To effectively monitor performance and log errors, implement the following practices:

- Structured Logging: Avoid generic Logger.log() statements. Instead, use the console class (console.info(), console.warn(), console.error()) to pipe structured JSON payloads directly into Google Cloud Logging. Include metadata such as the Document ID, execution attempt number, and token usage metrics returned by the Gemini API. This allows you to query your logs in the GCP Console using the Logs Explorer (e.g., filtering by specific document IDs or error codes).

- Granular Error Handling: Wrap your Gemini API HTTP requests in try...catch blocks and categorize the errors. A 429 (Rate Limit) requires a different operational response (backoff and retry) than a 400 (Bad Request, perhaps due to a payload exceeding the context window) or a 500 (Internal Server Error). Log the full response body of the error to capture Gemini’s specific diagnostic messages.

- Performance Telemetry: Track the latency of your Gemini API calls. By logging the start and end times of the UrlFetchApp.fetch() requests, you can calculate the average processing time per document. This telemetry is vital for fine-tuning your batch sizes and optimizing your time-driven triggers. If you notice latency spiking, it may indicate network congestion or an opportunity to switch to a lighter Gemini model (e.g., from Gemini 1.5 Pro to 1.5 Flash) for simpler tasks.

- Automated Alerting: Relying on manual log reviews is inefficient. Use Google Cloud Monitoring to set up Log-Based Alerts. For example, you can configure an alert to send a webhook to a Slack channel or trigger a PagerDuty incident if the script logs more than five console.error() events containing the phrase “Gemini API” within a 10-minute window. Alternatively, for a purely Apps Script-native approach, implement a fallback catch block that uses MailApp.sendEmail() to notify the administrator of critical pipeline failures.
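The logging and telemetry practices above might be combined as in the following sketch. The helper names buildLogEntry and logGeminiCall and all field names are illustrative assumptions. Passing an object to the console methods is what lets Cloud Logging store the entry as a structured payload.

```javascript
// Build one structured log entry per Gemini call. Field names are
// illustrative; pick whatever is convenient to filter on in Logs Explorer.
function buildLogEntry(docId, attempt, statusCode, startMs, endMs, usageMetadata) {
  return {
    message: 'Gemini API call',
    docId: docId,
    attempt: attempt,
    statusCode: statusCode,
    latencyMs: endMs - startMs,
    totalTokens: usageMetadata ? usageMetadata.totalTokenCount : null
  };
}

// Choose the severity from the HTTP status: 2xx is informational,
// 429 is expected under load (warning), anything else is an error.
// `logger` defaults to the global console.
function logGeminiCall(entry, logger) {
  const out = logger || console;
  if (entry.statusCode >= 200 && entry.statusCode < 300) {
    out.info(entry);
  } else if (entry.statusCode === 429) {
    out.warn(entry);
  } else {
    out.error(entry);
  }
}
```

Capturing Date.now() immediately before and after the fetch call gives the two timestamps, and the usageMetadata object comes from the parsed Gemini response body when one is present.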

Scale Your Custom Architecture

While Google Apps Script is an incredible tool for prototyping and automating lightweight workflows, it inherently comes with rigid boundaries. When your Gemini API request volume surges, you will inevitably hit the ceiling of Apps Script’s quotas, whether it is the 6-minute execution timeout, the daily UrlFetchApp limits, or the lack of native asynchronous processing. To truly scale your AI integrations, you need to evolve your architecture by bridging Google Workspace with the robust infrastructure of Google Cloud Platform (GCP).

Scaling a custom architecture requires shifting from a synchronous, monolithic script to a decoupled, event-driven microservices model. Here is how you can elevate your system to handle enterprise-grade Gemini API workloads:

- Decouple with Cloud Tasks or Pub/Sub: Instead of forcing Apps Script to wait for the Gemini API to generate a response (which risks hitting the 6-minute timeout), use Apps Script simply as an event trigger. Send the payload to Google Cloud Pub/Sub or Cloud Tasks. This allows your system to queue thousands of requests asynchronously, managing retries and backoffs automatically without locking up your Workspace UI.

- Offload Compute to Cloud Run or Cloud Functions: Move the actual API execution logic out of Apps Script. By deploying containerized microservices on Cloud Run or serverless Cloud Functions, you gain access to massive concurrency, longer timeout limits (up to 60 minutes on Cloud Run), and the ability to write your logic in Node.js, Python, or Go.

- Implement Distributed Rate Limiting: When running concurrent requests, a simple Utilities.sleep() in Apps Script is no longer sufficient. By integrating Google Cloud Memorystore (Redis), you can implement sophisticated distributed rate-limiting algorithms (like token buckets or sliding windows) to ensure your distributed workers never exceed Gemini’s Requests Per Minute (RPM) or Tokens Per Minute (TPM) quotas.

- Upgrade to Vertex AI: If you are using the public Gemini API, scaling up might require transitioning to Vertex AI on Google Cloud. Vertex AI provides enterprise SLAs, higher baseline quotas, and the ability to purchase Provisioned Throughput, ensuring your applications have guaranteed capacity during peak loads.

By treating Google Apps Script as the frontend interface (via Google Sheets, Docs, or Gmail) and Google Cloud as the backend engine, you create a highly resilient, scalable architecture capable of processing millions of AI requests seamlessly.

Book a Solution Discovery Call with Vo Tu Duc

Transitioning from a basic Apps Script macro to a fully decoupled, cloud-native AI architecture can be complex. If your organization is struggling with API rate limits, execution timeouts, or unpredictable scaling costs, it is time to bring in an expert.

Vo Tu Duc is a recognized specialist in Google Cloud Engineering, Google Workspace automation, and advanced AI integrations. With deep expertise in bridging the gap between everyday business tools and enterprise-grade cloud infrastructure, Vo Tu Duc can help you design a system that is both cost-effective and highly scalable.

During a Solution Discovery Call, we will dive deep into your specific use case. Here is what you can expect:

- Architecture Audit: A comprehensive review of your current Google Apps Script workflows and bottleneck identification.

- Quota & Rate Limit Strategy: Custom strategies to manage Gemini API RPM/TPM limits, including queueing, caching, and exponential backoff designs.

- GCP Migration Roadmap: Actionable steps to offload heavy processing to Cloud Run, Pub/Sub, and Vertex AI while keeping your Workspace users happy.

- ROI & Cost Estimation: A transparent look at the cloud costs associated with scaling your AI operations and how to optimize them.

Stop letting quotas dictate your productivity. [Click here to book your Solution Discovery Call with Vo Tu Duc] and start building a resilient, scalable AI architecture today.
