Architecting an Event-Driven Workspace with Pub/Sub, Firebase, and Gemini

Building point-to-point Google Workspace integrations might work for simple tasks, but they quickly become architectural bottlenecks when scaling or adding compute-heavy services like LLMs. Discover why rethinking your workflow architecture is the key to building robust, future-proof applications.

The Challenge of Tightly Coupled Workspace Integrations

When engineering custom workflows for Google Workspace, the default approach for many developers is to build point-to-point, tightly coupled integrations. In this traditional model, an action within a Workspace application (such as receiving an email in Gmail, updating a row in Google Sheets, or generating a new Google Doc) triggers a direct, synchronous call to a backend service or an external API.

While this tightly coupled approach might suffice for simple, low-volume tasks, it quickly becomes an architectural bottleneck as your application scales or integrates with complex, compute-heavy services like Large Language Models (LLMs). Wiring your Workspace UI or Apps Script triggers directly to your backend processing logic creates a fragile ecosystem where a failure or delay in one component cascades immediately to the end user.

Limitations of Direct API Calls in Google Workspace

Relying on direct, synchronous API calls within the Google Workspace ecosystem introduces several critical engineering constraints:

-

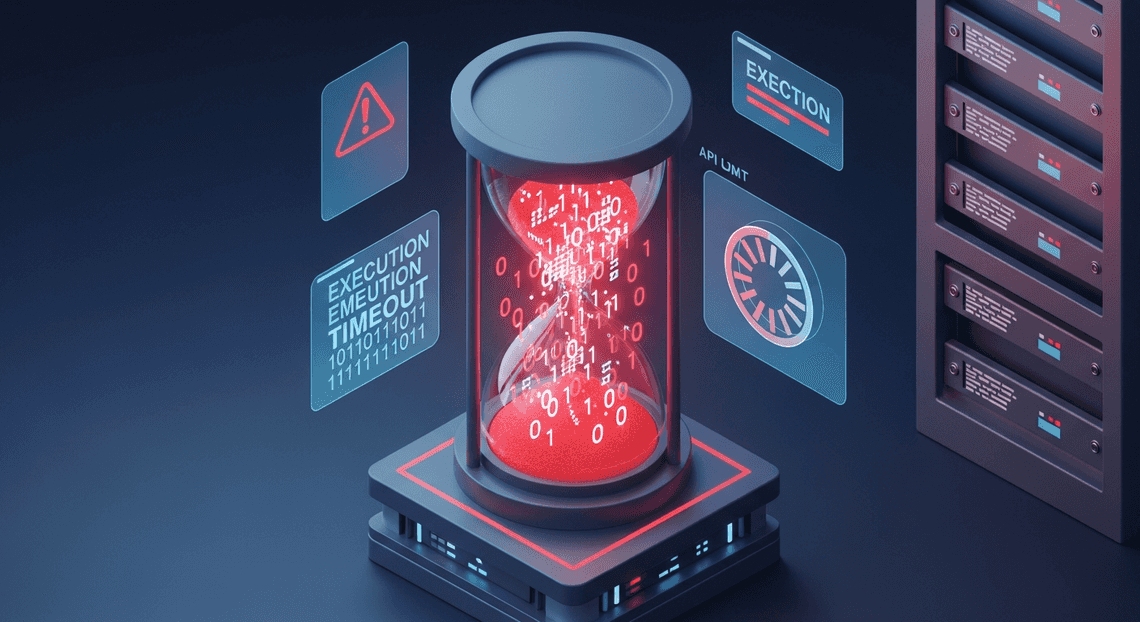

Execution Timeouts: Automated Discount Code Management System environments have strict execution limits. For instance, AI Powered Cover Letter Automation Engine generally enforces a 6-minute maximum execution time for standard scripts, and a highly restrictive 30-second limit for Add-on UI interactions and custom functions. If you are making a direct API call to a generative AI model like Gemini to analyze a massive document, the processing time can easily exceed these UI thresholds, resulting in ungraceful timeouts and broken workflows.

-

Quota Exhaustion and Throttling: Direct API calls leave your architecture vulnerable to traffic spikes. If a bulk operation updates 1,000 rows in a Google Sheet, and each row triggers a synchronous API call, you will rapidly hit Google Cloud or third-party API rate limits (HTTP 429 Too Many Requests). Handling exponential backoff within a synchronous execution thread is inefficient and often exacerbates timeout issues.

-

Poor User Experience (UX): Synchronous integrations block the user interface. When a user clicks a custom menu item to generate an AI summary, a direct API call forces them to stare at a spinning loading indicator until the entire process completes. If the backend takes 15 seconds to respond, the user experience feels sluggish and unresponsive.

-

Complex Error Handling: In a tightly coupled system, if the destination server is temporarily down, the Workspace script itself must manage the retry logic, state persistence, and failure notifications. This bloats the integration code and makes maintenance a nightmare.
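To make the timeout and backoff constraints concrete, here is a small arithmetic sketch. The helper name `totalBackoffMs`, the 1-second base delay, and the five retries are illustrative assumptions, not values taken from any Google quota page; only the 30-second limit comes from the text above.

```javascript
// Hypothetical helper: total time spent sleeping across exponential-backoff retries.
function totalBackoffMs(retries, baseMs) {
  let total = 0;
  for (let attempt = 0; attempt < retries; attempt++) {
    total += baseMs * 2 ** attempt; // 1x, 2x, 4x, ... the base delay
  }
  return total;
}

// The 30-second limit cited above for custom functions and add-on interactions.
const UI_LIMIT_MS = 30 * 1000;

// Five retries starting at one second: 1 + 2 + 4 + 8 + 16 seconds of waiting.
const waitMs = totalBackoffMs(5, 1000);
console.log(`Sleeping alone consumes ${waitMs} ms`);
console.log(`Exceeds the UI limit: ${waitMs > UI_LIMIT_MS}`);
```

Under these assumptions, the waiting time alone passes the 30-second budget before any real work is counted, which is why retries are better handled outside the synchronous execution.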

Why Cloud Architects Need Event-Driven Decoupling

To build enterprise-grade, resilient Workspace extensions, Cloud Architects must shift from synchronous procedural calls to an Event-Driven Architecture (EDA). Decoupling the system means separating the producer of the event (Google Workspace) from the consumer that processes it (your backend services and Gemini).

By introducing an event broker—such as Google Cloud Pub/Sub—architects can fundamentally transform how systems interact:

- Asynchronous Processing: Instead of waiting for a response, the Workspace integration simply publishes a lightweight message to a Pub/Sub topic (e.g., `document-created-event`) and immediately returns a success state to the user. The UI remains snappy, and the heavy lifting is offloaded to the background.

- Built-in Fault Tolerance: If the Gemini API experiences a temporary latency spike, or if your backend service goes down for deployment, no data is lost. The event broker retains the messages and automatically retries delivery based on configurable backoff policies.

- Independent Scalability: Event-driven decoupling allows your backend workers to scale independently of the Workspace UI. If a massive influx of Workspace events occurs, your consumer services (like Cloud Run or Cloud Functions) can scale out to process the queue at an optimal rate without overwhelming downstream APIs.

- Frictionless Extensibility: A decoupled architecture embraces the “fan-out” pattern. If you later decide that every time a document is summarized by Gemini, a log should also be written to BigQuery and a notification sent to Google Chat, you simply add new subscribers to the existing event topic. You don’t need to touch or redeploy the original Workspace integration code.

By embracing event-driven decoupling, architects replace brittle, synchronous chains with robust, reactive ecosystems capable of handling the asynchronous nature of modern AI integrations.
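As a rough sketch of the publishing side, the function below builds the HTTP request for the Pub/Sub REST `topics.publish` method. The project ID, topic ID, and event fields are hypothetical placeholders. The sketch uses Node's `Buffer` so it can run locally; inside Apps Script you would presumably swap in `Utilities.base64Encode`, send the request with `UrlFetchApp.fetch`, and attach a bearer token from `ScriptApp.getOAuthToken()` (with the Pub/Sub OAuth scope declared in the manifest).

```javascript
// Builds the REST request for the Pub/Sub `topics.publish` method.
// projectId, topicId, and the event shape are hypothetical placeholders.
function buildPublishRequest(projectId, topicId, event) {
  const json = JSON.stringify(event);
  return {
    url: `https://pubsub.googleapis.com/v1/projects/${projectId}/topics/${topicId}:publish`,
    method: "POST",
    contentType: "application/json",
    body: JSON.stringify({
      messages: [
        {
          // Pub/Sub expects the message payload as a base64-encoded string
          data: Buffer.from(json, "utf8").toString("base64"),
          // Attributes are string key/value pairs that subscribers can filter on
          attributes: { eventType: String(event.type) },
        },
      ],
    }),
  };
}

const request = buildPublishRequest("demo-project", "document-created-event", {
  type: "document.created",
  documentId: "abc123",
});
console.log(request.url);
```

The producer's only responsibility is to hand this small message to the broker; everything slower happens in subscribers.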

Designing the Event-Driven Message Bus Architecture

Transitioning from traditional, synchronous polling mechanisms to a reactive, event-driven model requires a robust architectural foundation. When integrating Google Workspace with external intelligence like Gemini and real-time state management like Firebase, the architecture must be capable of handling unpredictable spikes in traffic, avoiding data loss, and maintaining strict decoupling between services. The solution lies in designing a highly scalable event-driven message bus that acts as the central nervous system for your enterprise data flow.

High-Level System Topology

At its core, our system topology relies on a strictly decoupled producer-consumer model, leveraging Google Cloud’s serverless ecosystem to minimize operational overhead. The architecture is composed of five distinct layers:

- The Event Source (Producers): Google Workspace acts as the primary event generator. Whether it is a new email arriving in Gmail, a file being uploaded to Google Drive, or a message being posted in Google Chat, these actions trigger state changes.

- The Ingestion & Routing Layer (Message Bus): Google Cloud Pub/Sub sits directly downstream from Workspace, capturing these state changes as discrete event messages.

- The Orchestration & Processing Layer (Consumers): Serverless compute instances (typically Cloud Functions or Cloud Run services) are subscribed to the Pub/Sub topics. These services act as the connective tissue, receiving messages from the bus, parsing the payload, and executing business logic.

- The Intelligence Layer: The serverless processors invoke the Gemini API. Depending on the event, Gemini might summarize a long email thread, extract action items from a newly uploaded Drive document, or generate an automated response for Google Chat.

- The State & Delivery Layer: Once Gemini returns its output, the serverless processor writes the enriched data to Firebase (using Firestore for document storage and Firebase Cloud Messaging or Realtime Database for client synchronization). This ensures the end-user’s UI is updated without requiring a page refresh.

This unidirectional flow of data—from Workspace to Pub/Sub, through Serverless/Gemini, and finally to Firebase—ensures that no single component acts as a bottleneck. If the Gemini API experiences latency, or if Firebase is undergoing maintenance, the message bus simply buffers the events, preserving the integrity of the system.
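The buffering behavior described above can be illustrated with a toy, in-memory model. `BufferingBus` is a hypothetical stand-in written for this sketch, not the Pub/Sub client library, and the `geminiAvailable` flag simply simulates a downstream outage.

```javascript
// In-memory stand-in for a message bus; not the Pub/Sub client library.
class BufferingBus {
  constructor() {
    this.pending = []; // messages that have not been acknowledged yet
  }
  publish(message) {
    this.pending.push(message);
  }
  // Offers each pending message to the consumer and keeps any that fail.
  deliver(consumer) {
    const stillPending = [];
    for (const message of this.pending) {
      try {
        consumer(message); // returning normally counts as an ACK
      } catch (err) {
        stillPending.push(message); // a throw counts as a NACK
      }
    }
    this.pending = stillPending;
  }
}

const bus = new BufferingBus();
const stored = []; // stands in for the Firebase state layer
let geminiAvailable = false; // simulated downstream outage

const consumer = (message) => {
  if (!geminiAvailable) throw new Error("Gemini unavailable");
  stored.push({ id: message.id, summary: `summary of ${message.id}` });
};

bus.publish({ id: "evt-1" });
bus.deliver(consumer); // consumer fails, so the message stays buffered
const bufferedDuringOutage = bus.pending.length;

geminiAvailable = true;
bus.deliver(consumer); // the retry succeeds and the buffer drains
console.log({ bufferedDuringOutage, remaining: bus.pending.length, stored });
```

The producer never notices the outage; the event simply waits in the bus until a consumer can acknowledge it.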

Role of GCP Pub/Sub as the Central Ingestion Layer

In an enterprise Google Workspace environment, event volumes are rarely consistent. A company-wide announcement might trigger thousands of email events in seconds, while off-hours might see almost zero activity. Google Cloud Pub/Sub is uniquely positioned to handle this volatility, serving as the central ingestion layer for our architecture.

Pub/Sub provides asynchronous messaging that decouples the services producing events (Google Workspace) from the services processing them. Here is why Pub/Sub is the critical linchpin in this design:

- Native Workspace Integration: Several Google Workspace APIs, such as the Gmail API (through `users.watch`) and the Google Workspace Events API (for resources such as Google Chat and Meet), offer native, first-party support for delivering notifications directly to a Pub/Sub topic. For these sources, this eliminates the need to build and maintain custom webhook receivers or polling scripts just to get data out of Workspace. Other sources, such as Drive API change notifications, are delivered to an HTTPS webhook, which can then publish to the topic.

- The Fan-Out Pattern: Pub/Sub allows us to implement a “fan-out” architecture. A single event published to a topic (e.g., `workspace-drive-uploads`) can be routed to multiple independent subscriptions. One subscription might trigger a Cloud Function that sends the document to Gemini for summarization, while another subscription triggers a separate service that updates an audit log in BigQuery. Both processes happen concurrently without impacting one another.

- Guaranteed At-Least-Once Delivery: When dealing with critical business data, dropping events is unacceptable. Pub/Sub guarantees at-least-once delivery of messages. If a downstream Cloud Run service crashes while waiting for a response from Gemini, Pub/Sub will not receive an acknowledgment (ACK) and will automatically redeliver the message, ensuring the event is eventually processed.

- Elastic Scalability and Buffering: Pub/Sub absorbs the shock of sudden traffic spikes. By acting as a highly durable buffer, it protects downstream services from being overwhelmed. You can configure message retention policies and Dead Letter Queues (DLQs) to capture and store unprocessable events for later debugging, ensuring your ingestion layer remains resilient even when downstream anomalies occur.

By positioning Pub/Sub directly behind Google Workspace, we create an ingestion layer that is globally available, elastically scalable, and well suited to feeding real-time data into advanced AI and frontend systems.
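The fan-out pattern from the list above can be sketched with a toy model in which one topic feeds independent subscriptions. The `Topic` class and the subscription names are hypothetical and exist only for this illustration; the real service tracks acknowledgments per subscription in a comparable way.

```javascript
// Toy model of one topic feeding independent subscriptions.
// This is an illustration only, not the @google-cloud/pubsub client.
class Topic {
  constructor() {
    this.subscriptions = new Map(); // subscription name -> handler
  }
  subscribe(name, handler) {
    this.subscriptions.set(name, handler);
  }
  // Gives every subscription its own copy of the message.
  publish(message) {
    const outcomes = {};
    for (const [name, handler] of this.subscriptions) {
      try {
        handler({ ...message });
        outcomes[name] = "acked";
      } catch (err) {
        outcomes[name] = "retry"; // only this subscription would redeliver
      }
    }
    return outcomes;
  }
}

const uploads = new Topic();
const summaries = [];

uploads.subscribe("gemini-summarizer", (m) => {
  summaries.push(`summary:${m.fileId}`);
});
uploads.subscribe("bigquery-audit", () => {
  throw new Error("BigQuery unavailable"); // simulated failure
});

const deliveryOutcomes = uploads.publish({ fileId: "file-42" });
console.log(deliveryOutcomes, summaries);
```

In this model the failing audit subscriber does not prevent the summarizer from completing, which is the isolation property the fan-out pattern is meant to provide.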

Managing State and Real-Time Sync with Firebase

In an event-driven architecture, decoupling your services is a massive advantage for scalability and resilience. However, this asynchronous nature introduces a distinct challenge: how do you communicate the results of background processes—like a Pub/Sub message triggering a complex Gemini AI task—back to the end user without introducing clunky polling mechanisms?

This is where Firebase steps in as the crucial bridge between our asynchronous Google Cloud backend and our synchronous user experience. By leveraging Firebase, we can transform a disconnected series of backend events into a fluid, reactive application.

Firestore as the Intermediary State Store

To manage the flow of data between Google Workspace, Pub/Sub, Gemini, and the client, we need a highly scalable, low-latency database to act as our intermediary state store. Cloud Firestore is uniquely positioned for this role.

Instead of having our frontend wait on an HTTP request while Gemini processes a lengthy Google Doc or analyzes an email thread, we adopt a “fire-and-forget” pattern. The frontend initiates a request (or a Workspace event triggers one automatically), and the backend immediately acknowledges receipt. As our backend Cloud Functions or Cloud Run services process the data via Pub/Sub and Gemini, they write their progress and final outputs directly to Firestore.

Firestore excels here for several reasons:

- Flexible NoSQL Document Model: Workspace data is inherently hierarchical and varied. Firestore allows us to store complex nested objects (like an original document snippet alongside Gemini’s generated summary and metadata) without rigid schema constraints.

- State Tracking: We can easily implement state machines within our documents. A document might start with a `status` of `PENDING`, update to `PROCESSING` when Gemini begins inference, and finally transition to `COMPLETED` when the AI-generated insights are safely written to the database.

- Decoupled Architecture: The backend services don’t need to know anything about the client. Their only job is to process the Pub/Sub event and update the corresponding Firestore document. This separation of concerns makes your Cloud Engineering topology much cleaner and easier to maintain.
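One way to keep the status field honest is to validate every transition before writing it. The sketch below is a minimal guard, assuming the three statuses named above plus an `ERROR` state; the transition table itself is an assumption about how such a pipeline might behave, not a Firestore feature.

```javascript
// Hypothetical transition table for the status field of an event document.
const ALLOWED_TRANSITIONS = new Map([
  ["PENDING", ["PROCESSING", "ERROR"]],
  ["PROCESSING", ["COMPLETED", "ERROR"]],
  ["COMPLETED", []], // terminal state
  ["ERROR", []], // terminal state
]);

// Returns the requested status if the move is legal, otherwise throws.
function nextStatus(current, requested) {
  const allowed = ALLOWED_TRANSITIONS.get(current);
  if (!allowed) {
    throw new Error(`Unknown status: ${current}`);
  }
  if (!allowed.includes(requested)) {
    throw new Error(`Illegal transition: ${current} -> ${requested}`);
  }
  return requested;
}

let status = "PENDING";
status = nextStatus(status, "PROCESSING");
status = nextStatus(status, "COMPLETED");
console.log(status);
```

A backend worker could call a guard like this before each Firestore update so that a late or duplicated event cannot move a finished document back to an earlier state.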

Implementing Firebase Listeners for Seamless UI Updates

Having our data safely stored and updated in Firestore is only half the battle; the real magic happens when we push those updates to the client. Traditional REST architectures require the client to repeatedly poll the server to check if Gemini has finished its processing. This wastes bandwidth, drains battery life, and results in a sluggish user experience.

Firebase solves this elegantly through its real-time synchronization capabilities. By implementing Firebase listeners, the frontend keeps a persistent streaming connection open to Firestore. When a backend service updates a document’s state, Firestore pushes the updated snapshot to any subscribed clients.

Using the onSnapshot method in the Firebase Client SDK, we can bind our UI components directly to the database state. Here is a conceptual look at how this is implemented on the frontend:

    import { doc, onSnapshot } from "firebase/firestore";
    import { db } from "./firebase-config";

    // Subscribe to a specific workspace event document
    const eventId = "workspace-doc-update-12345";

    const unsub = onSnapshot(doc(db, "workspace_events", eventId), (snapshot) => {
      // The document may not exist yet if the backend has not written it
      if (!snapshot.exists()) return;
      const data = snapshot.data();

      if (data.status === "PENDING" || data.status === "PROCESSING") {
        // Show a loading spinner or skeleton UI
        renderLoadingState("Gemini is analyzing your document...");
      } else if (data.status === "COMPLETED") {
        // Render the AI-generated insights as soon as they arrive
        renderInsights(data.gemini_summary);
      } else if (data.status === "ERROR") {
        // Handle any backend failures gracefully
        renderError(data.error_message);
      }
    });

    // Call unsub() when the view is torn down to stop listening

With this listener in place, the UI updates seamlessly. A user might edit a Google Doc, which fires a Workspace event to Pub/Sub, triggering a Gemini summarization task. The user watches their dashboard transition from “Processing” to a rich, AI-generated summary as soon as the backend writes the result, all without a single page refresh. This real-time reactivity is what transforms a standard cloud application into a modern, collaborative workspace tool.
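Keeping the status-to-UI mapping in a pure function makes the listener easy to test without a browser or a Firestore connection. The sketch below is one possible shape; `viewStateFor` and the `view` names are hypothetical, while the field names `status`, `gemini_summary`, and `error_message` follow the listener example above.

```javascript
// Maps a Firestore document's data to a plain description of what to render.
// Returns an "empty" view when the document is missing or has an unknown status.
function viewStateFor(data) {
  if (!data) return { view: "empty" };
  switch (data.status) {
    case "PENDING":
    case "PROCESSING":
      return { view: "loading", message: "Gemini is analyzing your document..." };
    case "COMPLETED":
      return { view: "insights", summary: data.gemini_summary };
    case "ERROR":
      return { view: "error", message: data.error_message || "Unknown error" };
    default:
      return { view: "empty" };
  }
}

console.log(viewStateFor({ status: "COMPLETED", gemini_summary: "Three action items found." }));
```

The snapshot callback can then shrink to a single call that passes `snapshot.data()` to this function and hands the result to the rendering layer.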

Integrating Gemini API and Apps Script for Intelligent Workflows

With our event-driven foundation securely established using Pub/Sub and Firebase, the next architectural leap is injecting intelligence and business utility into the pipeline. Capturing events in real-time is only half the battle; the true value lies in understanding those events and routing the insights to the tools your teams use daily. By bridging the generative power of the Gemini API with the deep Google Workspace integration of Google Apps Script, we can transform raw event streams into automated, intelligent workflows.

Processing Cloud Events with Gemini AI

When a system event, customer interaction, or database mutation triggers a Pub/Sub message, the raw payload is often unstructured or lacks immediate business context. This is where Gemini steps in as the analytical engine of our architecture.

Typically, a Google Cloud Function acts as the subscriber to our Pub/Sub topic or Firebase event. Inside this function, we intercept the event payload and pass it to the Gemini API for processing. The goal here isn’t just to generate text, but to perform tasks like sentiment analysis, entity extraction, or complex data categorization on the fly.

To ensure the output is programmatic and reliable for downstream systems, we leverage Gemini’s ability to return structured JSON. Here is an example of how a Node.js Cloud Function might process an incoming Pub/Sub event containing customer feedback:

    const { GoogleGenerativeAI, SchemaType } = require("@google/generative-ai");

    // Initialize the Gemini API client
    const genAI = new GoogleGenerativeAI(process.env.GEMINI_API_KEY);

    exports.processEventWithGemini = async (message, context) => {
      try {
        // Decode the Pub/Sub message payload
        const rawData = Buffer.from(message.data, "base64").toString();
        const eventPayload = JSON.parse(rawData);

        // Define the expected JSON schema for Gemini's output
        const responseSchema = {
          type: SchemaType.OBJECT,
          properties: {
            sentiment: { type: SchemaType.STRING, description: "Positive, Negative, or Neutral" },
            summary: { type: SchemaType.STRING, description: "A one-sentence summary of the event" },
            urgency: { type: SchemaType.INTEGER, description: "Urgency score from 1 to 5" },
            actionItems: { type: SchemaType.ARRAY, items: { type: SchemaType.STRING } },
          },
          required: ["sentiment", "summary", "urgency"],
        };

        const model = genAI.getGenerativeModel({
          model: "gemini-1.5-pro",
          generationConfig: {
            responseMimeType: "application/json",
            responseSchema: responseSchema,
          },
        });

        const prompt = `Analyze the following system event/feedback and extract the required insights: ${JSON.stringify(eventPayload)}`;

        const result = await model.generateContent(prompt);
        const aiProcessedData = JSON.parse(result.response.text());
        console.log("Gemini Analysis Complete:", aiProcessedData);

        // Next step: Send aiProcessedData to Google Workspace
        await pushToAppsScript(aiProcessedData, eventPayload.id);
      } catch (error) {
        console.error("Error processing event with Gemini:", error);
        // Rethrow so the invocation fails and Pub/Sub can redeliver the message
        // (this requires retries to be enabled for the function)
        throw error;
      }
    };

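By enforcing a strict responseSchema, we constrain Gemini to return JSON in a known shape, so it behaves like a predictable microservice within our event-driven architecture, turning the chaos of raw events into structured, actionable intelligence. The schema governs structure rather than correctness, so downstream code should still validate the values it receives.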
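A lightweight check on the parsed response can catch values that fit the schema's types but not its intent, such as an urgency of 9. The validator below is a minimal sketch, assuming the four fields from the schema above; the function name `validateAnalysis` and the error wording are hypothetical.

```javascript
const SENTIMENTS = ["Positive", "Negative", "Neutral"];

// Checks a parsed Gemini response against the constraints described in the schema.
// Returns { ok, errors } instead of throwing so the caller decides how to react.
function validateAnalysis(candidate) {
  if (candidate === null || typeof candidate !== "object") {
    return { ok: false, errors: ["response is not an object"] };
  }
  const errors = [];
  if (!SENTIMENTS.includes(candidate.sentiment)) {
    errors.push("sentiment must be Positive, Negative, or Neutral");
  }
  if (typeof candidate.summary !== "string" || candidate.summary.trim() === "") {
    errors.push("summary must be a non-empty string");
  }
  if (!Number.isInteger(candidate.urgency) || candidate.urgency < 1 || candidate.urgency > 5) {
    errors.push("urgency must be an integer from 1 to 5");
  }
  // actionItems is optional, but when present it must be an array of strings
  if (
    candidate.actionItems !== undefined &&
    !(Array.isArray(candidate.actionItems) &&
      candidate.actionItems.every((item) => typeof item === "string"))
  ) {
    errors.push("actionItems must be an array of strings");
  }
  return { ok: errors.length === 0, errors };
}

console.log(validateAnalysis({ sentiment: "Negative", summary: "Checkout failed.", urgency: 9 }));
```

A subscriber could run this check right after `JSON.parse` and treat a failed validation as a permanent error rather than retrying the same prompt.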

Pushing Processed Data to Google Sheets via Apps Script

Once Gemini has enriched the event data, it needs to be surfaced to human operators. Google Sheets is a ubiquitous, highly collaborative interface for this. While you could use the Google Sheets REST API directly from the Cloud Function, routing the data through a Google Apps Script project deployed as a Web App offers distinct advantages. Apps Script provides a secure, serverless environment that natively understands Workspace objects, allowing you to easily add complex formatting, trigger email notifications, or interact with other Workspace tools (like Docs or Calendar) in a single execution.

First, we create an Apps Script project bound to our target Google Sheet and deploy it as a Web App. The script utilizes the doPost(e) function to act as a webhook receiver for our Cloud Function:

    // Google Apps Script: Deployed as a Web App
    function doPost(e) {
      try {
        // Parse the incoming JSON payload from the Cloud Function
        const payload = JSON.parse(e.postData.contents);

        // Look up the target sheet in the spreadsheet this script is bound to
        const sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("Event Logs");
        if (!sheet) {
          throw new Error('Sheet "Event Logs" not found');
        }

        // actionItems is optional in the Gemini schema, so default to an empty list
        const actionItems = Array.isArray(payload.actionItems) ? payload.actionItems : [];

        // Append the AI-processed data as a new row
        sheet.appendRow([
          new Date(),             // Timestamp
          payload.eventId,        // Original Event ID
          payload.sentiment,      // Gemini: Sentiment
          payload.urgency,        // Gemini: Urgency Score
          payload.summary,        // Gemini: Summary
          actionItems.join(", ")  // Gemini: Action Items
        ]);

        // Optional: Add conditional formatting or trigger a Chat webhook if urgency is high
        if (payload.urgency >= 4) {
          // Logic to alert the team via Google Chat
        }

        return ContentService.createTextOutput(JSON.stringify({ status: "success" }))
          .setMimeType(ContentService.MimeType.JSON);
      } catch (error) {
        return ContentService.createTextOutput(JSON.stringify({ status: "error", message: error.toString() }))
          .setMimeType(ContentService.MimeType.JSON);
      }
    }

Back in our Google Cloud Function, completing the pipeline is as simple as making an HTTP POST request to the deployed Apps Script Web App URL.

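    // Node.js helper function inside the Cloud Function
    // (uses the global fetch available in Node.js 18 and later)
    async function pushToAppsScript(aiData, eventId) {
      const appsScriptUrl = process.env.APPS_SCRIPT_WEB_APP_URL;

      const payload = {
        eventId: eventId,
        ...aiData
      };

      const response = await fetch(appsScriptUrl, {
        method: "POST",
        headers: { "Content-Type": "application/json" },
        body: JSON.stringify(payload)
      });

      if (!response.ok) {
        throw new Error(`Failed to push to Apps Script: ${response.statusText}`);
      }

      // doPost reports caught errors in the JSON body while still answering HTTP 200,
      // so check the body as well as the HTTP status
      const result = await response.json();
      if (result.status !== "success") {
        throw new Error(`Apps Script reported an error: ${result.message}`);
      }

      console.log("Successfully pushed processed event to Google Sheets.");
    }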

This integration effectively closes the loop. A raw event occurs in your infrastructure, flows through Pub/Sub, is intelligently analyzed and structured by Gemini, and is instantly appended to a Google Sheet via Apps Script—all in near real-time, without any manual intervention.

Deployment, Scaling, and Security Considerations

Transitioning an event-driven architecture from a functional proof-of-concept to a production-grade system requires a fundamental shift in mindset. When connecting Google Workspace, Cloud Pub/Sub, Firebase, and Gemini, you are building a highly asynchronous, distributed pipeline. In the real world, networks partition, APIs rate-limit, and duplicate events fire. To ensure your architecture remains robust, scalable, and secure under load, we must address the realities of distributed systems and zero-trust security models.

Handling Idempotency and Message Retries

Google Cloud Pub/Sub guarantees at-least-once delivery. While this ensures no Workspace events (like a new email or a modified document) are ever lost, it explicitly means your subscriber applications will occasionally receive duplicate messages. If your pipeline isn’t idempotent, a single Google Drive update could trigger multiple identical prompts to Gemini, resulting in duplicate processing costs and redundant data written to Firebase.

To build a truly idempotent pipeline, you must design your subscribers to safely process the same event multiple times without changing the final system state.

1. State-Based Deduplication with Firestore:

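The most effective way to handle idempotency in this stack is to leverage Firestore (Firebase’s database) as a transactional state store, keyed by a stable identifier for each event. Drive and Calendar push notifications carry X-Goog-Channel-ID and X-Goog-Message-Number headers, which together identify a notification (X-Goog-Resource-ID identifies the watched resource, not the individual notification). Alternatively, Pub/Sub assigns each published message a unique messageId, which stays the same across redeliveries of that message.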

Before invoking the Gemini API, your Cloud Function or Cloud Run service should initiate a Firestore transaction:

- Attempt to create a document in a `processed_events` collection using the `messageId` as the document ID.

- If the document already exists, the transaction fails, indicating a duplicate. The function can safely acknowledge (ACK) the Pub/Sub message and terminate.

- If the document is created successfully, proceed with the Gemini invocation and subsequent Firebase updates.
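The steps above can be sketched with an in-memory stand-in for the `processed_events` collection. `ProcessedEvents`, `handleOnce`, and the `"already-exists"` error code are hypothetical names for this illustration. With the Firestore Admin SDK, `DocumentReference.create()` offers comparable create-if-absent behavior, but its exact error shape should be checked against the SDK documentation.

```javascript
// In-memory stand-in for a `processed_events` collection.
class ProcessedEvents {
  constructor() {
    this.docs = new Map();
  }
  // Mirrors create-if-absent semantics: throws when the ID already exists.
  create(id, data) {
    if (this.docs.has(id)) {
      const err = new Error(`Document ${id} already exists`);
      err.code = "already-exists"; // placeholder code for this sketch
      throw err;
    }
    this.docs.set(id, data);
  }
  delete(id) {
    this.docs.delete(id);
  }
}

// Runs `work` at most once per messageId, even if the message is redelivered.
function handleOnce(store, messageId, work) {
  try {
    store.create(messageId, { status: "PROCESSING" });
  } catch (err) {
    if (err.code === "already-exists") return "duplicate"; // safe to ACK
    throw err;
  }
  try {
    work();
  } catch (err) {
    store.delete(messageId); // release the claim so a redelivery can retry
    throw err;
  }
  return "processed";
}

const store = new ProcessedEvents();
let geminiCalls = 0;
const first = handleOnce(store, "msg-1", () => { geminiCalls += 1; });
const second = handleOnce(store, "msg-1", () => { geminiCalls += 1; });
console.log(first, second, geminiCalls);
```

Releasing the claim when the work fails is a deliberate choice in this sketch: without it, a message that failed once would be treated as a duplicate on redelivery and never processed.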

2. Intelligent Retry Mechanisms:

Transient errors, such as a temporary Gemini API rate limit (HTTP 429) or a brief network hiccup, are inevitable. Your subscriber should negatively acknowledge (NACK) the message or throw an exception, triggering Pub/Sub’s built-in retry mechanism. Configure the subscription’s retry policy with exponential backoff so that redeliveries do not hammer downstream services during an outage.

3. Dead Letter Queues (DLQs):

Not all errors are transient. A malformed Workspace payload or an unprocessable Gemini prompt will cause a “poison pill” scenario, where the message fails continuously, consuming compute resources and blocking the queue. To prevent infinite retry loops, configure a Dead Letter Topic on your Pub/Sub subscription. After a defined threshold of failed delivery attempts (e.g., 5 attempts), Pub/Sub will automatically route the failing message to the DLQ, allowing your engineering team to inspect and debug the payload without halting the rest of the pipeline.
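The retry and dead-letter settings discussed above live on the subscription. The function below builds an options object using the field names of the Pub/Sub Subscription resource (`retryPolicy` and `deadLetterPolicy`). The project and topic IDs are hypothetical, the backoff values are illustrative, and the object is shaped on the assumption that it will be passed to the Node.js client when creating the subscription.

```javascript
// Builds subscription options carrying a retry policy and a dead-letter policy.
// projectId and deadLetterTopicId are hypothetical placeholders.
function buildSubscriptionOptions(projectId, deadLetterTopicId, maxDeliveryAttempts = 5) {
  // Pub/Sub accepts between 5 and 100 delivery attempts for dead-lettering
  if (!Number.isInteger(maxDeliveryAttempts) || maxDeliveryAttempts < 5 || maxDeliveryAttempts > 100) {
    throw new RangeError("maxDeliveryAttempts must be an integer between 5 and 100");
  }
  return {
    retryPolicy: {
      minimumBackoff: { seconds: 10 }, // first redelivery waits at least this long
      maximumBackoff: { seconds: 600 }, // backoff stops growing at this ceiling
    },
    deadLetterPolicy: {
      deadLetterTopic: `projects/${projectId}/topics/${deadLetterTopicId}`,
      maxDeliveryAttempts,
    },
  };
}

console.log(JSON.stringify(buildSubscriptionOptions("demo-project", "workspace-events-dlq"), null, 2));
```

For dead-lettering to work, the Pub/Sub service account typically also needs permission to publish to the dead-letter topic and to subscribe to the source subscription, so that is worth verifying when messages do not arrive in the DLQ.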

Securing the Data Pipeline Across GCP and Workspace

Data flowing from Google Workspace through GCP and into Firebase often contains highly sensitive organizational information. Securing this pipeline requires a defense-in-depth approach, establishing strict boundaries at every integration point.

1. Securing the Workspace Ingestion Point:

When Workspace sends push notifications to your webhook (e.g., a Cloud Function acting as a Pub/Sub publisher), you must verify the authenticity of the payload.

- Domain Verification: Google requires you to prove ownership of the domain hosting the webhook.

- Channel Tokens: When setting up the Workspace watch request, set the channel’s `token` field to a hard-to-guess secret. Google echoes this value back in the `X-Goog-Channel-Token` header of every notification. Your receiving webhook must validate it on every incoming request to ensure the payload originated from your authorized Workspace subscription, rejecting any spoofed requests.
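A webhook can perform the token check with a constant-time comparison so that response timing does not leak how much of the token matched. The sketch below assumes the notification carries the token in the `X-Goog-Channel-Token` header and that the framework lowercases header names, as Node's HTTP server does. The function name and the example token are hypothetical.

```javascript
const { createHash, timingSafeEqual } = require("crypto");

// Returns true only when the notification carries the expected channel token.
function isTrustedNotification(headers, expectedToken) {
  const received = headers["x-goog-channel-token"];
  if (typeof received !== "string" || typeof expectedToken !== "string" || expectedToken === "") {
    return false;
  }
  // Hash both values so the buffers have equal length, which timingSafeEqual requires
  const receivedDigest = createHash("sha256").update(received).digest();
  const expectedDigest = createHash("sha256").update(expectedToken).digest();
  return timingSafeEqual(receivedDigest, expectedDigest);
}

const expected = "example-secret-token"; // in practice, load this from a secret store
console.log(isTrustedNotification({ "x-goog-channel-token": "example-secret-token" }, expected));
console.log(isTrustedNotification({ "x-goog-channel-token": "spoofed" }, expected));
```

The ingestion function would call this before publishing to Pub/Sub and reject the request (for example, with a 4xx response) when it returns false.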

2. Principle of Least Privilege (IAM):

Avoid using default compute service accounts. Every component in your architecture must operate with a dedicated, narrowly scoped Service Account:

- The Ingestion Function needs only roles/pubsub.publisher on the specific topic.

- The Processing Function needs roles/pubsub.subscriber, roles/datastore.user (for Firestore idempotency checks), and roles/aiplatform.user (if utilizing Gemini via Vertex AI). It should have absolutely no access to other cloud resources.

3. Secret Management:

If you are using the Gemini Developer API (AI Studio) rather than Vertex AI, you will need to manage API keys. Never hardcode these keys or commit them in plain-text configuration. Store the Gemini API keys and Workspace OAuth client secrets in Google Cloud Secret Manager, and have your serverless functions read them at runtime (for example, by exposing a secret to the function as an environment variable such as GEMINI_API_KEY), so that they remain encrypted at rest and access is strictly audited.

4. Firebase Security Rules:

The final leg of the pipeline involves pushing the AI-generated insights to the end-user via Firebase. Because Firebase allows direct client-side access to the database, robust Firebase Security Rules are non-negotiable. Ensure that the Cloud Function writes the generated Gemini response into a Firestore document that includes an owner_id or workspace_user_email field. Your Security Rules must then enforce that authenticated frontend clients can only read documents where their Firebase Authentication UID matches the document’s owner field, preventing cross-tenant data leaks.

Next Steps for Your Cloud Architecture

Transitioning to an event-driven architecture using Google Cloud Pub/Sub, Firebase, and Gemini is not just a technological upgrade; it is a fundamental shift in how your organization processes data, automates workflows, and interacts with Google Workspace. Now that you understand the mechanics of decoupling services, establishing real-time state synchronization, and leveraging AI-driven automation, it is time to translate these concepts into a tangible, actionable roadmap for your own environment.

Audit Your Current Infrastructure

Before deploying event triggers or wiring up Gemini models to your Workspace data, you must establish a clear baseline of your existing environment. A comprehensive infrastructure audit is the critical first step in identifying the friction points that an event-driven model can resolve.

When conducting your architectural review, focus on the following core areas:

- Identify Synchronous Bottlenecks: Map out your current Google Workspace integrations, Add-ons, and background processes. Look for APIs that suffer from latency due to synchronous, blocking calls. These tight couplings are your prime candidates for Pub/Sub integration, allowing you to offload heavy processing to asynchronous worker services.

- Evaluate State Management and Polling: Analyze how state is currently shared across your applications. If your architecture relies on constant API polling to check for updates in Drive, Gmail, or Sheets, you are wasting valuable compute resources and API quotas. Document these areas to replace them with Firebase Realtime Database or Firestore listeners.

- Assess AI Readiness: Gemini thrives on context. Evaluate your data pipelines to ensure that your data streams are clean, structured, and securely accessible. Event-driven AI requires robust, real-time data ingestion, so verify that your current logging and event-routing mechanisms can support high-throughput AI inference.

- Review Security and IAM: An event-driven architecture expands your surface area for internal service-to-service communication. Rigorously review your Identity and Access Management (IAM) policies. Ensure that service accounts interacting with Workspace APIs, Pub/Sub topics, and Firebase adhere strictly to the principle of least privilege.

Book a GDE Discovery Call with Vo Tu Duc

Architecting a highly scalable, event-driven ecosystem requires deep engineering expertise to avoid common distributed system pitfalls—such as message duplication, race conditions, or inefficient AI token usage. To accelerate your transformation and ensure your architecture is built on best practices, the most effective next step is to consult with a recognized industry expert.

Take the guesswork out of your cloud journey by booking a discovery call with Vo Tu Duc, a recognized Google Developer Expert (GDE). With extensive, hands-on experience in Google Cloud, Google Workspace, and advanced cloud engineering, Vo Tu Duc can provide the strategic direction your team needs.

During this specialized discovery session, you will:

- Deconstruct Your Audit: Review the findings from your infrastructure audit and identify the highest-impact areas for modernization.

- Tailor the Architecture: Explore custom strategies for seamlessly integrating Pub/Sub, Firebase, and Gemini into your specific, proprietary workflows.

- Optimize for Scale and Cost: Gain actionable, expert recommendations on optimizing your cloud spend, securing your event pipelines, and future-proofing your Workspace integrations.

Do not navigate the complexities of modern cloud architecture alone. Leverage the insights of a GDE to ensure your event-driven Workspace is built on a flawless, scalable foundation.
