Export Apps Script Logs to Cloud Logging for Enterprise Monitoring

While workspace automations drive modern enterprise productivity, their silent background failures can lead to compounding data errors and missed SLAs. Discover how to solve the observability bottleneck and keep your mission-critical scripts running flawlessly.

The Challenge of Monitoring Workspace Automations

Google Workspace automations powered by Google Apps Script are the unsung heroes of modern enterprise productivity. From synchronizing user directories and automating complex onboarding workflows to generating dynamic financial reports, these scripts frequently handle mission-critical business logic. However, as organizations scale their reliance on Apps Script, a significant operational bottleneck emerges: observability.

When automations run silently in the background, a quiet failure can lead to compounding data inconsistencies, missed Service Level Agreements (SLAs), and frustrated end-users. Managing a sprawling ecosystem of standalone, container-bound, and published add-on scripts requires a robust monitoring strategy. Treating these automations as “set-and-forget” scripts is a recipe for technical debt; they must be monitored with the same rigor as any other cloud-native application.

Limitations of the Default Apps Script Dashboard

For individual developers or small-scale workflows, the default Apps Script Executions dashboard serves as a convenient starting point. It provides basic insights into script runs, execution times, and immediate failure states. Yet, when evaluated through the lens of enterprise cloud engineering, its limitations become glaringly apparent:

- Ephemeral Log Retention: The native dashboard retains execution logs for a very limited window (typically 7 days for basic executions). This short lifecycle makes historical auditing, compliance tracking, and long-term trend analysis nearly impossible.

- Siloed Visibility: Each Apps Script project maintains its own isolated execution history. If your organization relies on dozens or hundreds of scripts distributed across different Google Workspace accounts and shared drives, there is no centralized “single pane of glass” to monitor the fleet.

- Inadequate Search and Filtering: Debugging complex, intermittent issues requires advanced querying capabilities. The default interface lacks the query syntax needed to filter logs by specific JSON payloads, custom severity levels, or correlation IDs across multiple executions.

- Lack of Proactive Alerting: While native Apps Script provides basic daily email digests for failed triggers, it lacks the real-time alerting mechanisms required by modern IT teams. You cannot easily route critical, context-rich errors to incident management tools like PagerDuty, Jira, or dedicated Slack channels based on specific error thresholds.

The Need for Enterprise Grade Visibility and Health Tracking

To elevate Workspace automations from fragile background tasks to resilient, enterprise-grade microservices, organizations must adopt standard cloud observability practices. This transition requires moving beyond reactive debugging to proactive health tracking.

Enterprise-grade visibility means having real-time, aggregated insights into script performance, execution durations, and error rates across the entire Workspace environment. Cloud engineers and IT administrators need the ability to define custom log metrics, establish Service Level Indicators (SLIs), and trigger automated alerts the moment an anomaly is detected—long before a script failure impacts downstream business operations.

Furthermore, strict compliance and security mandates often require immutable, long-term log storage that can be audited or ingested into a Security Information and Event Management (SIEM) system. Achieving this level of operational maturity means bridging the gap between Google Workspace and professional cloud infrastructure, ensuring that every automation’s heartbeat is tracked, measured, and secured.

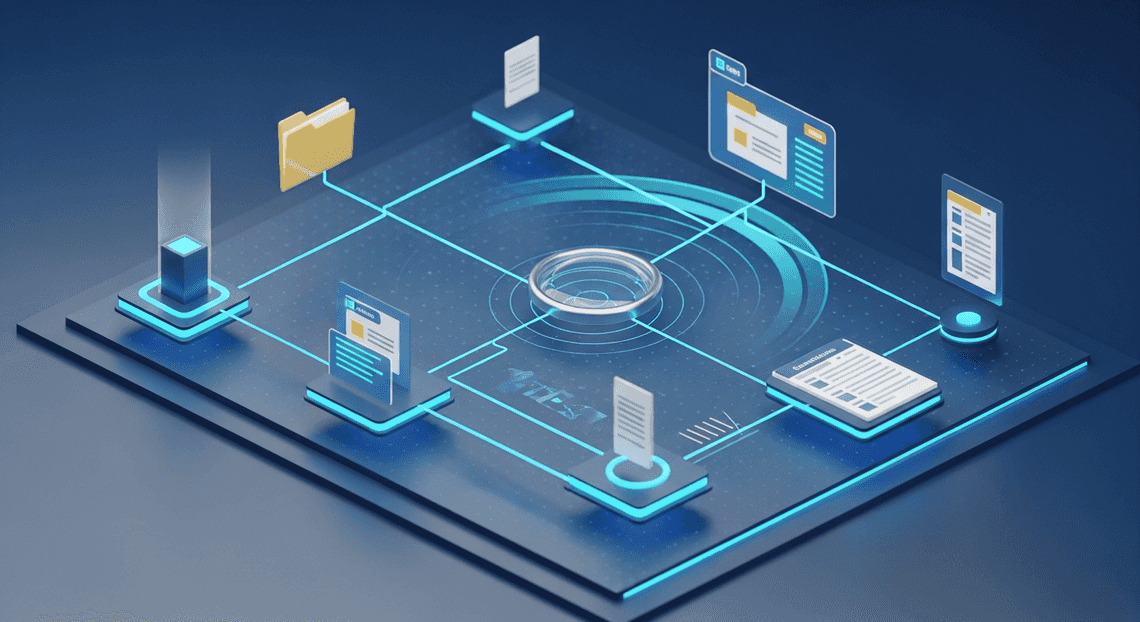

Architecture Overview for Apps Script and Cloud Logging

To build an enterprise-grade monitoring solution, you must first understand the underlying architecture that connects Google Apps Script to Google Cloud. By default, Apps Script operates in a somewhat isolated environment, designed for simplicity and rapid development. However, when you need to monitor execution health, track security events, or audit script performance across an entire organization, that default sandbox is no longer sufficient.

The architecture required for enterprise monitoring relies on explicitly bridging your serverless Apps Script environment with the robust, centralized logging infrastructure of Google Cloud. This bridge transforms isolated script executions into highly visible, queryable, and actionable data streams.

Understanding the Standard Google Cloud Project Integration

Every Google Apps Script project is backed by a Google Cloud project. Out of the box, Google provisions a Default Cloud Project for your script. This default project is entirely managed by Google; it is hidden from your Google Cloud Console, meaning you have no access to its Identity and Access Management (IAM) policies, billing configurations, or advanced APIs.

To unlock enterprise monitoring, you must transition your script from this hidden environment to a Standard Google Cloud Project. This integration is the foundational pillar of the logging architecture.

By linking your Apps Script file to a Standard GCP Project (using the GCP Project Number), you effectively move the script’s execution context into your organization’s managed cloud perimeter. This architectural shift provides several critical capabilities:

- API Enablement: You can explicitly enable the Cloud Logging API, allowing your script to transmit high-volume log payloads.

- IAM & Security: You gain granular control over who can view, route, and manage the logs generated by your scripts using standard GCP IAM roles (e.g., roles/logging.viewer or roles/logging.admin).

- Centralized Governance: Scripts scattered across various Google Workspace accounts can be linked to a single, centralized GCP project, aggregating logs into a unified pane of glass.

Data Flow from Apps Script to Stackdriver

Once the Standard GCP Project integration is established, the telemetry pipeline becomes active. Understanding the data flow from the Apps Script runtime to Cloud Logging (historically known as Stackdriver) is essential for designing effective alerts and log sinks.

The lifecycle of a log message follows a distinct, asynchronous path:

- Log Generation (The Runtime): The flow begins inside the Apps Script V8 runtime. It is crucial to note the difference between logging methods here. While Logger.log() writes to the legacy Apps Script dashboard, using the console class (e.g., console.log(), console.info(), console.error()) instructs the runtime to format the output as a JSON payload specifically designed for Stackdriver.

- Transmission (Cloud Logging API): Upon execution, the Apps Script runtime automatically captures these standard output and error streams. It attaches essential metadata (such as the execution ID, timestamp, severity level, and the specific function name) and securely transmits the payload via the Cloud Logging API to your linked Standard GCP Project.

- Ingestion & Indexing (Stackdriver): The logs arrive in your GCP project and are ingested by the Cloud Logging backend. They are categorized under the specific monitored resource type app_script_function. This categorization allows engineers to easily filter logs generated by Workspace extensions versus logs from other cloud resources like Compute Engine or Cloud Run.

- Routing & Action (Log Router): Once inside Stackdriver, the logs hit the Log Router. This is where enterprise architecture truly shines. Using inclusion and exclusion filters, the Log Router evaluates the incoming Apps Script data flow in real-time. From here, logs can be:

  - Stored in standard Log Buckets for immediate troubleshooting and alerting.

  - Routed to BigQuery for long-term analytics and performance auditing.

  - Streamed to Pub/Sub, triggering downstream event-driven architectures or forwarding to third-party SIEM tools (like Splunk or Datadog).

  - Archived in Cloud Storage to meet strict data retention and compliance mandates.

This seamless data flow ensures that every critical event occurring within your Workspace environment is captured, enriched, and routed with the same reliability as your core cloud infrastructure.

Configuring Cloud Logging for Apps Script

To unlock enterprise-grade observability for your Google Workspace automations, you must bridge the gap between the isolated Apps Script environment and the broader Google Cloud ecosystem. By default, every Apps Script project is bound to a hidden, Google-managed “default” Cloud project. While this suffices for basic development, it restricts your access to advanced tools like Cloud Logging, Log Router, and custom IAM policies. To route your logs effectively, you must first reconfigure your script to use a Standard Google Cloud Project.

Assigning a Standard Google Cloud Project to Your Script

Linking a standard Google Cloud Project (GCP) to your Apps Script is the foundational step for enterprise monitoring. This allows your script’s execution data to flow directly into a project where you have full administrative control.

Here is how to make the switch:

- Obtain the Project Number: Navigate to the Google Cloud Console and select the project you intend to use for centralized logging. Go to the project dashboard and copy the Project Number (Note: You need the numeric Project Number, not the alphanumeric Project ID).

- Access Apps Script Settings: Open your Apps Script project. On the left-hand navigation menu, click on the gear icon (Project Settings).

- Change the GCP Project: Scroll down to the Google Cloud Platform (GCP) Project section. You will see a message indicating the script is currently using a default project. Click the Change project button.

- Link the Project: Paste the Project Number you copied earlier into the input field and click Set project.

Pro-Tip for Cloud Engineers: Ensure that your user account has the necessary IAM permissions (specifically resourcemanager.projects.get, typically included in the Editor or Owner roles) on the target GCP project; otherwise, the linkage will fail. Additionally, ensure the Google Apps Script API is enabled within your target GCP project.

Writing Custom Log Entries Using Console Methods

Once your standard GCP project is linked, your script is ready to transmit logs. However, the method you use to write these logs matters. While legacy Apps Script developers often rely on Logger.log(), enterprise logging requires the use of the console class.

The console methods natively integrate with Cloud Logging and automatically map to standard syslog severity levels, making it much easier to filter and trigger alerts based on log severity. Furthermore, the console class supports structured logging (JSON), which is critical for querying specific payload fields in Cloud Logging.

Here is an example of how to implement structured, severity-based logging in your Apps Script:

function processEnterpriseData() {
  const executionId = Utilities.getUuid();
  const userEmail = Session.getEffectiveUser().getEmail();

  // INFO level log: Standard operational events
  console.info({
    message: "Data processing initiated.",
    executionId: executionId,
    user: userEmail,
    action: "START_PROCESSING"
  });

  try {
    // Simulate a business logic warning
    const dataSize = 500;
    if (dataSize > 400) {
      // WARNING level log: Unexpected behavior that isn't fatal
      console.warn({
        message: "Payload size exceeds optimal threshold.",
        executionId: executionId,
        size: dataSize,
        threshold: 400
      });
    }

    // Simulate an error
    throw new Error("Failed to connect to the external CRM API.");
  } catch (error) {
    // ERROR level log: Fatal execution failures
    console.error({
      message: "Data processing failed due to an exception.",
      executionId: executionId,
      errorName: error.name,
      errorMessage: error.message,
      stackTrace: error.stack
    });
  }
}

By passing JavaScript objects directly into the console methods, Cloud Logging will automatically parse the output as a jsonPayload, allowing you to write complex queries against custom fields like jsonPayload.executionId or jsonPayload.user.
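Repeating executionId and other context fields in every call, as the example above does, gets tedious across a large script. One way to reduce that repetition is a small wrapper that merges shared fields into each entry. This is a minimal sketch, not part of the Apps Script API: createLogger, baseFields, and sink are hypothetical names, and sink defaults to the global console so the entries still reach Cloud Logging as single objects.

```javascript
/**
 * Builds a logger that stamps every entry with shared context fields.
 * `createLogger`, `baseFields`, and `sink` are hypothetical names for this sketch.
 * `sink` defaults to the global console used in the example above.
 */
function createLogger(baseFields, sink = console) {
  const write = (method, message, extra = {}) => {
    // A single object argument keeps the entry structured (one payload per call).
    sink[method]({ ...baseFields, ...extra, message });
  };
  return {
    info: (message, extra) => write('info', message, extra),
    warn: (message, extra) => write('warn', message, extra),
    // Flattens an Error into plain fields so they can be queried individually.
    error: (message, err, extra = {}) =>
      write('error', message, {
        ...extra,
        errorName: err && err.name,
        errorMessage: err && err.message,
        stackTrace: err && err.stack,
      }),
  };
}

// Usage inside Apps Script (the 'crm-sync' label is an illustrative value):
// const log = createLogger({ executionId: Utilities.getUuid(), script: 'crm-sync' });
// log.info('Data processing initiated.', { action: 'START_PROCESSING' });
```

Accepting the sink as a parameter also makes the wrapper easy to exercise outside Apps Script with a stub object.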

Verifying Log Exports in the Cloud Logging Explorer

After executing your script, you need to verify that the logs are successfully routing to your GCP project and being parsed correctly. The Cloud Logging Explorer is your primary interface for this validation.

- Navigate to the Logs Explorer: In the Google Cloud Console, ensure you have selected the standard project linked to your script. Go to Logging > Logs Explorer.

- Filter by Resource Type: Apps Script logs are categorized under a specific resource type. In the Query builder, enter the following query to isolate your script’s logs:

resource.type="app_script_function"

- Refine the Query: You can further refine your search to look for specific severities or structured data fields you defined in your code. For example, to find all warnings and errors related to a specific user (the address below is a placeholder), you could run:

resource.type="app_script_function"
severity>=WARNING
jsonPayload.user="user@example.com"

- Inspect the Log Entries: Click on any log entry in the results to expand it. You should see the jsonPayload object containing your structured data, alongside metadata such as the timestamp, insertId, and resource.labels.function_name.

Confirming the presence and structure of these logs in the Logs Explorer guarantees that your Apps Script environment is successfully integrated with Google Cloud, paving the way for advanced log routing, metric extraction, and alerting.
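If your team runs the same kinds of queries repeatedly, it can help to generate the filter text rather than retype it. The sketch below assembles the same three-line query shown above from parameters. buildLogFilter and its options are hypothetical names, and it assumes every custom field lives under jsonPayload, as in the earlier logging example.

```javascript
/**
 * Assembles a Logs Explorer filter for Apps Script entries.
 * `buildLogFilter`, `minSeverity`, and `fields` are hypothetical names for this sketch.
 */
function buildLogFilter({ minSeverity, fields = {} } = {}) {
  // Every query starts from the Apps Script monitored resource type.
  const lines = ['resource.type="app_script_function"'];
  if (minSeverity) {
    lines.push(`severity>=${minSeverity}`);
  }
  for (const [key, value] of Object.entries(fields)) {
    // Escape backslashes and double quotes so the value stays one quoted string.
    const escaped = String(value).replace(/\\/g, '\\\\').replace(/"/g, '\\"');
    lines.push(`jsonPayload.${key}="${escaped}"`);
  }
  // Separate lines in a filter are combined with an implicit AND.
  return lines.join('\n');
}

// Example: warnings and errors for one (placeholder) user.
// buildLogFilter({ minSeverity: 'WARNING', fields: { user: 'user@example.com' } });
```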

Setting Up Real Time Alerts with Cloud Monitoring

Exporting your Google Apps Script logs to Google Cloud is only the first half of the observability equation. In an enterprise environment, passively storing logs isn’t enough; you need proactive observability to detect and respond to failures before your users report them. By integrating Cloud Logging with Cloud Monitoring, you can transform static log entries into dynamic, real-time alerts. This ensures your operations team is immediately notified whenever a mission-critical Apps Script automation fails.

Creating Log Based Metrics for Execution Errors

To trigger an alert based on a log entry, we first need to translate our log data into a measurable time-series metric. Google Cloud achieves this through Log-based Metrics.

A log-based metric continuously scans incoming log entries against a predefined filter and increments a counter every time a match is found. For our Apps Script monitoring, we want to create a counter metric that tracks execution errors.

Here is how to configure it:

- Navigate to Logging > Log-based Metrics in the Google Cloud Console.

- Click Create Metric.

- Set the Metric Type to Counter. This will count the number of log entries matching our criteria.

- Under Details, give your metric a descriptive name, such as apps_script_execution_errors, and provide a brief description.

- In the Filter selection section, input a query that isolates your Apps Script errors. A robust enterprise filter should look like this:

resource.type="app_script_function"
severity>=ERROR

Pro-tip for Cloud Engineers: If you want to monitor a specific, highly critical Apps Script project rather than all scripts in the GCP project, you can narrow this filter down by appending the script ID: labels.script_id="YOUR_SCRIPT_ID". Confirm the exact label key by expanding one of your own log entries in the Logs Explorer before relying on it.

- Click Create Metric.

Keep in mind that log-based metrics are not retroactive. The metric will only begin counting errors from the moment it is created. You can test it by intentionally forcing an error in your Apps Script code and watching the metric populate in the Cloud Monitoring Metrics Explorer.
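To force an error for that test without touching production logic, a dedicated function that writes one ERROR-level entry is usually enough. This is a minimal sketch: emitMetricTestError and the synthetic field are hypothetical names, and the marker field is only there so the test entry is easy to recognize or exclude later.

```javascript
/**
 * Writes one ERROR-level entry so a newly created log-based metric has something to count.
 * `emitMetricTestError` and the `synthetic` field are hypothetical names for this sketch.
 */
function emitMetricTestError(sink = console) {
  const entry = {
    message: 'Synthetic error to validate the log-based metric.',
    synthetic: true, // marker so test entries can be told apart from real failures
    emittedAt: new Date().toISOString(),
  };
  // console.error produces an ERROR-severity entry, which matches severity>=ERROR.
  sink.error(entry);
  return entry;
}
```

Run it once from the Apps Script editor after the metric exists, then look for the data point in Metrics Explorer.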

Configuring Alert Policies and Notification Channels

With your custom metric actively counting errors, the next step is to configure an Alert Policy that dictates when to trigger an alarm and who to notify.

Setting up the Alert Policy:

- Navigate to Monitoring > Alerting and click Create Policy.

- Click Select a metric, uncheck the “Active” toggle to see newly created metrics, and search for the log-based metric you just created (e.g., logging.googleapis.com/user/apps_script_execution_errors).

- Configure the Condition. For critical enterprise scripts, you typically want to know immediately if a single failure occurs. Set the rolling window to 5 minutes, the rolling window function to Count, and the condition threshold to trigger if the value is Any time series > 0.

Configuring Notification Channels:

An alert is useless if it goes to an unmonitored inbox. Google Cloud Monitoring supports a wide array of Notification Channels designed to fit into your existing incident management workflows.

- In the Alert Policy creation flow, move to the Notifications and name step.

- Click Manage Notification Channels (this opens in a new tab). Here, you can authorize and configure endpoints such as:

  - Email: Best for non-critical, daily summary alerts.

  - Slack / Google Chat: Ideal for alerting DevOps or Workspace administration channels in real-time.

  - PagerDuty / Opsgenie: Essential for critical, tier-1 Apps Script automations that require waking up an on-call engineer.

  - Webhooks: Useful for triggering automated remediation scripts or logging the incident into a custom ITSM tool like ServiceNow.

- Once your channels are configured, return to your Alert Policy and select them from the dropdown.

Finally, fill out the Documentation section of the alert. This markdown-supported field is what your engineers will see when the alert fires. A well-architected alert should include a runbook link, the purpose of the failing Apps Script, and a direct URL to the Cloud Logging Logs Explorer with the relevant time range pre-selected, drastically reducing the Mean Time To Resolution (MTTR).
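The Logs Explorer link for that Documentation field can be generated rather than hand-built. The sketch below assumes the commonly seen console URL shape, in which the filter travels as a `;query=` segment and the project as a `?project=` parameter; verify that shape against a link copied from your own console. buildLogsExplorerUrl is a hypothetical helper, and the time-range parameter is deliberately left out because its exact format is not confirmed here.

```javascript
/**
 * Builds a Logs Explorer deep link for an alert's documentation text.
 * `buildLogsExplorerUrl` is a hypothetical helper; the URL shape is an assumption
 * based on links copied from the Cloud Console, so verify it before relying on it.
 */
function buildLogsExplorerUrl(projectId, filter) {
  const base = 'https://console.cloud.google.com/logs/query';
  // The filter is percent-encoded so quotes, newlines, and operators survive in the URL.
  return `${base};query=${encodeURIComponent(filter)}?project=${encodeURIComponent(projectId)}`;
}

// Example with a placeholder project ID:
// buildLogsExplorerUrl('my-logging-project', 'resource.type="app_script_function"\nseverity>=ERROR');
```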

Scaling Your Workspace Automation Architecture

As your organization’s reliance on Google Workspace automation grows, what starts as a handful of standalone Apps Script macros often evolves into a complex web of mission-critical automations. Scaling this architecture requires a fundamental shift from decentralized, ad-hoc script management to an enterprise-grade engineering mindset. By bridging Google Workspace with Google Cloud Platform (GCP), you unlock the ability to treat your Apps Script projects like any other microservice in your cloud ecosystem.

Centralized observability through Cloud Logging is the foundational step in this transition. It enables your cloud engineering teams to monitor execution health, track cross-system integrations, and proactively identify bottlenecks before they impact business operations. However, as your automation footprint expands, so does the telemetry data it generates, requiring a strategic approach to infrastructure management.

Best Practices for Log Retention and Cost Management

While exporting Apps Script logs to Cloud Logging provides unparalleled visibility, it can also introduce unexpected costs if not managed correctly. Cloud Logging bills based on the volume of logs ingested and the duration they are retained. To maintain a scalable and cost-effective architecture, cloud engineers should implement the following best practices:

- Implement Strict Log Leveling: Train your developers to use Apps Script’s console service judiciously. Differentiate clearly between console.log() (recorded at DEBUG severity), console.info(), console.warn(), and console.error(). In a production environment, you should configure your log routers to exclude verbose debug information, which significantly reduces ingestion volume while preserving critical error data.

- Leverage Log Routers and Sinks: Not all logs need to be kept in hot storage. Use Cloud Logging sinks to route critical, actionable logs to default log buckets for real-time alerting and metrics generation. For long-term compliance and historical auditing, route high-volume, low-priority logs directly to Google Cloud Storage (GCS) or BigQuery, which offer vastly cheaper long-term storage rates.

- Define Custom Retention Policies: By default, the _Default log bucket in Cloud Logging retains logs for 30 days. If your enterprise requires longer retention for compliance, or shorter retention for cost savings, create custom log buckets with tailored retention periods that align strictly with your data governance policies.

- Set Up Exclusion Filters: Identify “noisy” scripts that generate high volumes of repetitive, low-value telemetry. Create exclusion filters at the GCP project level to discard these specific log entries at the routing layer before they incur ingestion charges.
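Exclusion filters act after a log entry has been written. A complementary option, not described above, is to suppress low-severity entries in the script itself so they are never emitted. The sketch below shows one way to do that with a level threshold. createLeveledLogger and LEVELS are hypothetical names, and the mapping of console.log to DEBUG severity follows the Apps Script console reference.

```javascript
// Hypothetical level ordering for this sketch; higher numbers are more severe.
const LEVELS = { debug: 0, info: 1, warn: 2, error: 3 };

/**
 * Returns a logger that drops entries below `minLevel` before they are written.
 * `createLeveledLogger` is a hypothetical helper; `sink` defaults to the global console.
 */
function createLeveledLogger(minLevel, sink = console) {
  const threshold = LEVELS[minLevel];
  if (threshold === undefined) {
    throw new Error(`Unknown log level: ${minLevel}`);
  }
  const emit = (level, method) => (payload) => {
    // Entries below the threshold are skipped, so they never reach Cloud Logging.
    if (LEVELS[level] >= threshold) sink[method](payload);
  };
  return {
    debug: emit('debug', 'log'), // console.log is the DEBUG-severity method
    info: emit('info', 'info'),
    warn: emit('warn', 'warn'),
    error: emit('error', 'error'),
  };
}

// Example: keep only warnings and errors in production.
// const log = createLeveledLogger('warn');
// log.debug({ message: 'row parsed' });          // skipped
// log.error({ message: 'CRM sync failed' });     // written
```

The threshold could be read from a script property so that verbose logging can be switched back on during an incident without a code change.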

Audit Your Business Needs with a GDE Discovery Call

Navigating the intersection of Google Workspace and Google Cloud Platform requires specialized expertise. As your automation architecture scales, ensuring that your infrastructure is secure, performant, and cost-optimized becomes a complex challenge. If your enterprise is struggling with fragmented scripts, runaway cloud costs, or a lack of centralized governance, consider seeking external validation.

Booking a discovery call with a Google Developer Expert (GDE) in Google Workspace or Cloud Engineering can provide you with a strategic roadmap tailored to your specific business needs. During a comprehensive discovery audit, a GDE can help your team:

- Evaluate Current Architecture: Assess your existing Apps Script deployments, execution quotas, and their integration points within your GCP environment.

- Identify Security Gaps: Ensure your GCP service accounts, OAuth scopes, and IAM permissions follow the principle of least privilege, mitigating the risk of unauthorized data access.

- Optimize Cloud Spend: Review your Cloud Logging configurations, log sinks, and overall GCP resource utilization to eliminate architectural waste.

- Design for Scale: Blueprint a future-proof architecture that seamlessly transitions heavy workloads from Apps Script to advanced GCP services like Cloud Run, Pub/Sub, and Cloud Functions.

Investing in an expert audit ensures your Workspace automation ecosystem remains a powerful, scalable asset rather than a compounding source of technical debt.

