Automating Free Text Assignment Grading with Gemini Pro

As growing enrollments expand the modern classroom, the manual grading of free-text assignments is pushing higher education to its breaking point. Discover why this traditional approach has become an unsustainable operational bottleneck for overwhelmed faculty and teaching assistants.

The Challenge of Manual Grading in Higher Education

Higher education institutions are built on the foundation of rigorous assessment, but the mechanics of evaluating student performance have historically failed to scale. When dealing with free-text assignments—such as essays, short-answer responses, and complex case studies—the evaluation process remains overwhelmingly manual. From a systems engineering perspective, manual grading is a severe operational bottleneck. It relies entirely on non-scalable human compute, creating inefficiencies that ripple throughout the academic ecosystem. As enrollment numbers grow and distance-learning expands the modern classroom, the traditional approach to assessing unstructured text is rapidly reaching its breaking point.

Time Constraints for Faculty and Teaching Assistants

To understand the magnitude of this bottleneck, we simply have to look at the math. If a professor or Teaching Assistant (TA) manages a cohort of 200 students, and a single free-text assignment takes a modest 15 minutes to thoroughly read, evaluate, and annotate, that equals 50 hours of continuous, high-focus work per assignment cycle.

Faculty members are already pulled in multiple directions: conducting primary research, publishing papers, securing grant funding, and delivering lectures. TAs, who are often graduate students themselves, must juggle this grading burden alongside their own demanding coursework and thesis research. The sheer volume of unstructured text data generated by students creates an unsustainable workload that leads to burnout and delayed feedback loops.

In cloud architecture, we know that delayed telemetry and feedback degrade overall system performance; in education, delayed feedback severely hinders a student’s ability to learn, iterate, and improve before the next assessment. Educators desperately need to offload this “undifferentiated heavy lifting” so they can redirect their bandwidth toward high-value, synchronous interactions like one-on-one mentoring, active discussions, and dynamic curriculum development.

The Need for Consistent Rubric Evaluation

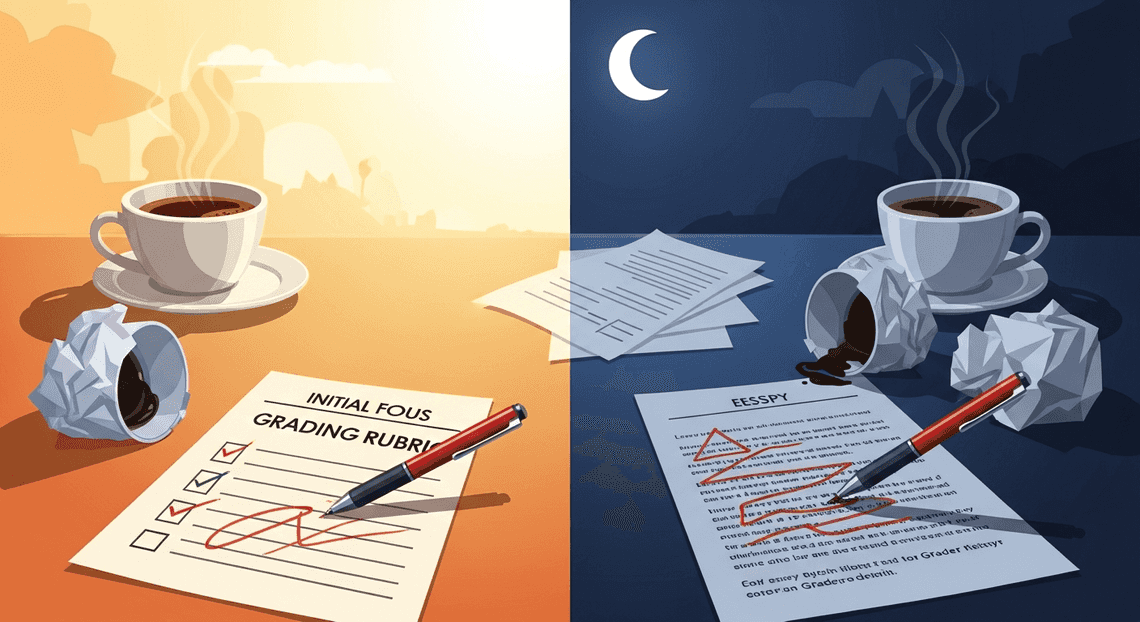

Beyond the massive time investment, manual grading introduces the highly unpredictable variable of human inconsistency. Evaluating nuanced, free-text responses against a multi-dimensional rubric requires immense cognitive stamina. As a human grader works through a stack of a hundred essays, decision fatigue inevitably sets in. A paper evaluated at 8:00 AM over a fresh cup of coffee is often judged through a different lens—and perhaps with a different level of strictness—than a paper evaluated at 11:30 PM.

Furthermore, large university courses frequently distribute grading workloads across a decentralized team of TAs. Despite rigorous calibration meetings and highly detailed rubrics, “grader drift” is a well-documented phenomenon. One TA might implicitly penalize grammatical issues more heavily, while another might over-index on conceptual depth, leading to an inequitable grading experience for the student body.

Maintaining stateless, objective consistency across hundreds of complex evaluations is a classic failure point of distributed human processing. To ensure academic fairness and integrity, institutions need an evaluation mechanism capable of applying complex rubric criteria with the exact same unwavering precision on the first assignment as it does on the thousandth.

Designing the Automated Grading Assistant Logic

To build an effective automated grading assistant, we need a robust orchestration layer that seamlessly bridges Automatically create new folders in Google Drive, generate templates in new folders, fill out text automatically in new files, and save info in Google Sheets and Google Cloud. The logic relies on a distinct, event-driven pipeline: ingesting the student’s work, processing the text through the Gemini Pro model using a standardized grading framework, and delivering the generated insights directly back to the student. By leveraging AI Powered Cover Letter Automation Engine or a lightweight Google Cloud Function, we can automate this entire lifecycle, transforming a traditionally manual, time-consuming chore into an intelligent, scalable workflow.

Syncing Student Assignment Documents to Google Drive

The first step in our automation pipeline is centralizing the student submissions. Whether your students are submitting their free-text assignments via Google Classroom, a custom web portal, or a third-party Learning Management System (LMS) via LTI integration, the objective is to route these files into a designated, secure Google Drive folder.

Using the Google Drive API, we can establish an automated synchronization mechanism. For instance, a webhook triggered by an LMS submission can invoke a Google Cloud Function that uploads, copies, or moves the submitted document into a strictly structured directory hierarchy (e.g., Course_Name/Semester/Assignment_Name/Student_ID).

If you are operating natively within the Google ecosystem, Google Classroom automatically handles this folder structuring for you. The critical engineering requirement at this stage is ensuring that all incoming files are converted to and stored as native Google Docs (application/vnd.google-apps.document). This standardization is vital, as it drastically simplifies the text extraction and manipulation processes required for the subsequent phases of our pipeline.

Evaluating Submissions Against a Master Rubric Document

Once the documents are securely housed and organized in Google Drive, the core intelligence of our application takes over. To evaluate a free-text assignment fairly, consistently, and without hallucination, Gemini Pro requires two critical pieces of context: the student’s raw submission and the master rubric.

Using the Google Docs API, our automation script extracts the text payload from both the student’s document and a pre-defined Master Rubric Document. We then construct a highly structured, few-shot prompt to send to the Gemini Pro model via Google Cloud Building Self Correcting Agentic Workflows with Vertex AI.

Prompt Engineering for Reliable Autonomous Workspace Agents is the linchpin of this step. We instruct Gemini Pro to adopt the persona of an objective, constructive academic grader. The prompt template programmatically injects the master rubric—detailing the grading criteria, point distributions, and qualitative expectations—followed by the student’s extracted text. By utilizing Gemini Pro’s expansive context window and advanced semantic understanding, the model cross-references the student’s arguments, formatting, and comprehension against the strict constraints of the rubric. We configure the model’s generation parameters (such as setting a low temperature like 0.2) to ensure the output remains highly deterministic, focused, and strictly aligned with the provided grading criteria.

Appending Actionable AI Feedback to the Original Assignment

Generating the feedback is only half the battle; delivering it seamlessly in the student’s flow of work is what truly drives educational value. Instead of exporting scores to a detached spreadsheet or sending an impersonal email, our logic writes the AI-generated evaluation directly back into the student’s original document.

Once Vertex AI returns the structured response from Gemini Pro (ideally formatted as JSON for easy parsing), we utilize the Google Docs API’s batchUpdate method to modify the document. The script programmatically inserts a page break at the end of the student’s text, followed by a stylized “AI Evaluation & Feedback” section.

This appended section includes the final calculated score, a granular breakdown of points awarded per rubric criterion, and actionable AI feedback. By leveraging the Docs API to apply specific text styles—such as bolding criteria headers or highlighting critical areas for improvement—we ensure the feedback is highly readable. Providing constructive, specific suggestions directly within their workspace creates an immediate feedback loop, enhancing the student’s learning experience while entirely removing the administrative overhead for the educator.

Exploring the Technical Stack

To build a seamless, zero-touch grading pipeline, we need to bridge the gap between where students submit their work and where the artificial intelligence resides. This requires a robust orchestration of AC2F Streamline Your Google Drive Workflow services paired with Google Cloud’s state-of-the-art generative AI. By combining the accessibility of Genesis Engine AI Powered Content to Video Production Pipeline with the reasoning power of large language models, we can create a highly efficient workflow. Let’s break down the core components that make this automation possible.

Managing Folders and Files with DriveApp

The foundation of any Automated Client Onboarding with Google Forms and Google Drive. document automation begins with file management. In our grading architecture, Architecting Multi Tenant AI Workflows in Google Apps Script’s DriveApp service acts as the primary file orchestrator. When an assignment deadline passes, educators shouldn’t have to manually download, organize, or open dozens of individual files. Instead, we use DriveApp to programmatically navigate through a designated Google Drive folder containing all the student submissions.

By utilizing methods like DriveApp.getFolderById() and iterating through the contents with getFilesByType(MimeType.GOOGLE_DOCS), our script systematically identifies every document awaiting review. DriveApp handles the heavy lifting of the file system logic—using FileIterator objects to process submissions one by one in a highly scalable loop. Furthermore, this service can be configured to dynamically move graded assignments into a separate “Reviewed” folder or adjust sharing permissions to lock the document from further student edits, ensuring a clean and secure workspace.

Leveraging Gemini 3 Pro for Advanced Text Parsing

The true brain of this operation is Gemini 3 Pro. Grading free-text assignments—such as essays, short-answer responses, or technical explanations—requires far more than simple keyword matching. It demands a deep understanding of context, nuance, logical flow, and argumentation.

By integrating the Gemini API via Google Cloud (Vertex AI), we can pass the extracted text from each student’s assignment alongside a carefully crafted system prompt that includes the specific grading rubric. Gemini 3 Pro excels in this environment due to its massive context window and advanced semantic reasoning capabilities. It evaluates the student’s text against the provided criteria, identifies areas of strength and weakness, and generates constructive, personalized feedback.

To ensure our pipeline remains robust and programmatically sound, we utilize Gemini’s structured output capabilities. By instructing the model to return its evaluation as a strictly formatted JSON payload, our script can effortlessly parse out the final numeric score, the rubric breakdown, and the qualitative feedback without relying on fragile string-matching techniques.

Automating Document Edits with DocsApp

Once Gemini 3 Pro has generated the grade and feedback, that data needs to be delivered back to the student in a meaningful way. This is where Google Apps Script’s DocumentApp (often referred to as DocsApp in automation workflows) comes into play to close the loop.

Rather than exporting the grades to a separate spreadsheet or sending an impersonal email, we use this service to inject the AI-generated insights directly into the student’s original submission. Using DocumentApp.openById(), the script accesses the specific Google Doc currently being processed. From there, it manipulates the Document Object Model (DOM) of the file. We can programmatically append a new “Instructor Feedback” section at the bottom of the document, insert a formatted table containing the rubric breakdown, or highlight specific passages. This automated editing process ensures that students receive their grades and actionable feedback directly within the context of their own work, completing the automated grading cycle without requiring a single manual keystroke from the educator.

Implementing the Grading Workflow

With the theoretical foundation and rubric design out of the way, it is time to build the actual automation pipeline. By leveraging Google Apps Script as our orchestration layer, we can seamlessly bridge Automated Discount Code Management System applications—like Google Sheets or Google Classroom—with the powerful natural language processing capabilities of Gemini Pro.

Setting Up the Google Apps Script Environment

Google Apps Script (GAS) provides a serverless JavaScript environment that natively integrates with your Automated Email Journey with Google Sheets and Google Analytics. For this workflow, we will assume you are collecting free-text assignments in a Google Sheet (a common output for Google Forms).

-

Initialize the Project: Open your Google Sheet containing the student submissions. Navigate to Extensions > Apps Script. This opens a new project bound to your spreadsheet.

-

Configure the Manifest: By default, GAS hides the manifest file. Click the gear icon (Project Settings) and check the box for “Show ‘appsscript.json’ manifest file in editor”. Ensure your manifest includes the necessary OAuth scopes to read the spreadsheet and connect to external APIs:

{

"timeZone": "America/New_York",

"dependencies": {},

"exceptionLogging": "STACKDRIVER",

"oauthScopes": [

"https://www.googleapis.com/auth/spreadsheets",

"https://www.googleapis.com/auth/script.external_request"

],

"runtimeVersion": "V8"

}

- Structure Your Code: In the GAS editor, rename the default

Code.gstoMain.gs. It is best practice to modularize your code. Create additional script files such asGeminiAPI.gsfor handling the HTTP requests andGradingLogic.gsfor prompt construction and response parsing.

Connecting the Gemini API to Your Workspace

To allow Apps Script to communicate with Gemini Pro, you need to securely configure your API credentials and set up the HTTP request using the UrlFetchApp service.

First, obtain your Gemini API key from Google AI Studio or your Google Cloud Console. Never hardcode API keys directly into your script. Instead, use the Apps Script Properties Service to store it securely:

-

Go to Project Settings in the Apps Script editor.

-

Scroll down to Script Properties and click Add script property.

-

Set the property name to

GEMINI_API_KEYand paste your key as the value.

Next, write the integration function in your GeminiAPI.gs file. This function will construct the payload and handle the POST request to the Gemini endpoint:

function callGeminiPro(promptText) {

const apiKey = PropertiesService.getScriptProperties().getProperty('GEMINI_API_KEY');

if (!apiKey) throw new Error("API Key is missing in Script Properties.");

// Using the gemini-1.5-pro model for complex reasoning tasks

const endpoint = `https://generativelanguage.googleapis.com/v1beta/models/gemini-1.5-pro:generateContent?key=${apiKey}`;

const payload = {

"contents": [{

"parts": [{

"text": promptText

}]

}],

"generationConfig": {

"temperature": 0.1, // Low temperature for deterministic grading

"responseMimeType": "application/json" // Enforce JSON output for easy parsing

}

};

const options = {

"method": "post",

"contentType": "application/json",

"payload": JSON.stringify(payload),

"muteHttpExceptions": true

};

try {

const response = UrlFetchApp.fetch(endpoint, options);

const jsonResponse = JSON.parse(response.getContentText());

if (response.getResponseCode() !== 200) {

Logger.log("API Error: " + response.getContentText());

return null;

}

// Extract the generated text from the Gemini response structure

return jsonResponse.candidates[0].content.parts[0].text;

} catch (error) {

Logger.log("Fetch failed: " + error.toString());

return null;

}

}

Testing the Rubric Logic on Sample Assignments

Before running the script across hundreds of student submissions, you must validate that Gemini Pro interprets your grading rubric accurately. The key to reliable automated grading is strict prompt engineering. By instructing Gemini to return a structured JSON object, we eliminate the need to parse messy, unstructured text.

In your GradingLogic.gs file, create a test function that combines a sample grading rubric with a dummy student submission:

function testGradingRubric() {

const sampleRubric = `

You are an expert grader. Grade the following student submission based on this rubric:

1. Accuracy (0-5 points): Is the historical information factually correct?

2. Clarity (0-5 points): Is the argument easy to follow and well-structured?

Provide your evaluation strictly as a JSON object with the following keys:

"accuracy_score", "accuracy_feedback", "clarity_score", "clarity_feedback", "total_score".

`;

const sampleSubmission = `

The Industrial Revolution began in the late 18th century in Great Britain.

It marked a shift from hand production methods to machines, new chemical manufacturing,

and iron production processes. However, it was mostly driven by the invention of the internet.

`;

const fullPrompt = `${sampleRubric}\n\nStudent Submission:\n"""${sampleSubmission}"""`;

Logger.log("Sending prompt to Gemini Pro...");

const rawResult = callGeminiPro(fullPrompt);

if (rawResult) {

try {

// Parse the returned JSON string

const gradingData = JSON.parse(rawResult);

Logger.log("Grading Successful!");

Logger.log("Total Score: " + gradingData.total_score);

Logger.log("Accuracy Feedback: " + gradingData.accuracy_feedback);

Logger.log("Clarity Feedback: " + gradingData.clarity_feedback);

} catch (e) {

Logger.log("Failed to parse JSON. Raw output was: " + rawResult);

}

}

}

Run the testGradingRubric function from the Apps Script editor and check the Execution Log. You should see Gemini correctly penalizing the accuracy score due to the anachronistic mention of the internet, while perhaps awarding points for the clear structure of the first two sentences. If the model’s feedback is too lenient or too harsh, tweak the wording of your sampleRubric and re-run the test until the logic aligns perfectly with your pedagogical standards.

Scale Your Technical Architecture

Transitioning your Gemini Pro grading assistant from a local proof-of-concept to a production-ready, enterprise-grade application requires a robust cloud engineering strategy. When dealing with hundreds or thousands of free-text assignments, synchronous API calls will inevitably lead to timeouts, bottlenecks, and a poor user experience. To handle this, you need to leverage the full power of Google Cloud Platform (GCP) to build an event-driven, scalable architecture.

The foundation of this scalable system relies on decoupling the ingestion of assignments from the actual grading process. By utilizing Google Cloud Pub/Sub, you can create an asynchronous messaging queue. When a student submits an assignment, an event is published to a Pub/Sub topic. This event then triggers a highly scalable serverless container running on Cloud Run, which executes the Gemini Pro grading logic via Vertex AI.

Because Cloud Run automatically scales up to handle massive spikes in submissions—such as right before a midnight deadline—and scales down to zero during inactive periods, your infrastructure remains both highly available and cost-effective. Furthermore, storing the generated feedback, rubric scores, and metadata in a NoSQL database like Firestore or a relational database like Cloud SQL ensures that grading histories are securely maintained, easily queryable, and ready for analytics.

Expanding the Assistant for Complex Educational Workflows

A scalable architecture does more than just handle volume; it unlocks the ability to build deeply integrated, complex educational workflows within the Automated Google Slides Generation with Text Replacement ecosystem. By wrapping your Gemini Pro logic in secure APIs, you can transform the grading assistant from a standalone script into a seamless part of the daily teaching experience.

Here is how you can expand the system to handle advanced workflows:

-

Seamless Google Classroom Integration: Utilizing the Google Classroom API, your Cloud Run service can automatically detect when an assignment status changes to “Turned In.” The system can then fetch the document, process it through Gemini Pro, and push the AI-generated score and feedback directly back into the Classroom gradebook as a draft for the teacher to review.

-

Human-in-the-Loop (HITL) Feedback via Google Docs: AI should augment educators, not replace them. By integrating the Google Docs API, your architecture can insert Gemini Pro’s feedback as targeted comments directly into the margins of the student’s Google Doc. This allows teachers to review, edit, or expand upon the AI’s suggestions before finalizing the grade, ensuring high-quality, personalized feedback.

-

Multi-Modal Document Processing: Educational submissions aren’t always plain text. Students often submit PDFs, scanned handwritten notes, or slide decks. By combining Google Drive API for file retrieval, Cloud Document AI for OCR, and Gemini Pro’s advanced multi-modal capabilities, your pipeline can ingest, parse, and grade diverse file types without breaking a sweat.

-

Longitudinal Student Analytics: By routing the structured grading data (e.g., grammar scores, critical thinking metrics, rubric adherence) into BigQuery, educational institutions can build Looker Studio dashboards. This allows educators to track a student’s writing progress over an entire semester, identifying systemic areas where the whole class might be struggling.

Book a GDE Discovery Call with Vo Tu Duc

Designing and deploying a scalable, AI-driven educational platform requires navigating complex architectural decisions, from optimizing Vertex AI prompt quotas to securing student data with strict IAM policies. If you are looking to implement Gemini Pro into your institution’s grading workflows or need to scale an existing Google Cloud architecture, expert guidance can save you months of development time.

Take the guesswork out of your cloud engineering journey by booking a discovery call with Vo Tu Duc, a recognized Google Developer Expert (GDE) in Google Cloud and Automated Order Processing Wordpress to Gmail to Google Sheets to Jobber.

During this customized session, you will:

-

Review your current technical architecture and identify scaling bottlenecks.

-

Explore best practices for integrating Vertex AI (Gemini Pro) securely with Automated Payment Transaction Ledger with Google Sheets and PayPal APIs.

-

Discuss tailored strategies for cost-optimization, event-driven design, and data governance.

Ready to transform your educational technology stack? [Click here to book your GDE Discovery Call with Vo Tu Duc today] and start building a smarter, more scalable future for your educators and students.

Stop Doing Manual Work. Scale with AI.

Want to turn these blog concepts into production-ready reality for your team?

Table Of Contents

Portfolios

Related Posts

Quick Links

Legal Stuff