Architecting a Predictive Maintenance Agent with BigQuery and Vertex AI

A single hour of unplanned downtime can cost modern manufacturers millions of dollars in lost yield, scrapped materials, and missed deadlines. Discover the true financial and operational impact of unexpected equipment failure—and why stopping the bleed is critical to your bottom line.

The Cost of Unplanned Downtime in Modern Manufacturing

In the highly optimized world of modern manufacturing, unplanned downtime is the ultimate adversary. When a critical asset—whether it’s a robotic welding arm, a massive industrial centrifuge, or a precision CNC machine—fails unexpectedly, the financial impact extends far beyond the cost of the replacement parts. Industry benchmarks routinely show that a single hour of halted production can cost facilities hundreds of thousands, if not millions, of dollars.

This financial bleed is multifaceted. It encompasses lost production yield, idled labor, wasted raw materials (especially in process manufacturing where temperature-sensitive batches must be scrapped), and severe supply chain cascading effects that lead to missed Service Level Agreements (SLAs). Furthermore, catastrophic equipment failures often pose significant safety hazards to floor personnel. While modern factory floors are heavily instrumented with Supervisory Control and Data Acquisition (SCADA) systems and IoT sensors generating terabytes of telemetry, the tragic irony is that most of this data is either siloed, overwritten, or simply ignored until a post-mortem analysis is required.

Shifting from Reactive to Predictive Maintenance

Historically, industrial maintenance has relied on two primary paradigms: reactive and preventive. Reactive maintenance, often termed “run-to-failure,” is essentially a gamble. You operate the machinery until it breaks, resulting in maximum downtime and expensive emergency repairs. Preventive maintenance attempts to solve this by scheduling servicing based on calendar time or operational hours. While safer, it is highly inefficient; organizations end up replacing perfectly healthy components, wasting labor and parts while still occasionally missing anomalous failures that occur between scheduled checks.

Predictive maintenance represents a paradigm shift from a heuristic-based approach to a purely data-driven one. By continuously analyzing the telemetry emitted by machinery—such as vibration frequencies, thermal fluctuations, acoustic anomalies, and torque resistance—we can identify the subtle, degrading signatures of an impending failure long before a human operator could detect them.

Instead of asking, “What just broke?” or “Is it time for a checkup?”, a predictive maintenance strategy uses machine learning to answer, “What is the remaining useful life (RUL) of this specific bearing?” This shift not only maximizes asset utilization and lifespan but also transforms maintenance teams from reactive firefighters into proactive strategists. However, making this leap requires an architecture capable of turning a firehose of raw sensor data into actionable foresight.

Core Requirements for an IoT Data Pipeline

To successfully power a predictive maintenance agent, the underlying cloud architecture must be engineered to handle the unique challenges of industrial IoT workloads. Building this foundation requires satisfying several strict technical requirements:

- High-Throughput, Decoupled Ingestion: Industrial environments generate massive volumes of high-frequency time-series data. The pipeline must feature a highly available, globally scalable messaging layer capable of ingesting millions of events per second from edge gateways without dropping packets or creating bottlenecks.

- Scalable, Unified Storage: The architecture must seamlessly bridge the gap between real-time streaming data (needed for instant anomaly detection) and massive historical datasets (needed for training robust machine learning models). The data warehouse must natively support time-partitioning and complex analytical queries over petabytes of telemetry.

- Seamless AI/ML Integration: The friction between data engineering and data science must be eliminated. The pipeline requires a unified environment where feature engineering can happen directly where the data resides, and where training, deploying, and monitoring predictive models does not require moving data across disparate systems.

- Low-Latency Inference and Actionability: A prediction is only valuable if it is acted upon in time. The system must support real-time model serving, scoring incoming sensor data on the fly to trigger automated alerts, generate maintenance work orders, or even send shutdown signals back to the edge before catastrophic damage occurs.

- Resilience and Fault Tolerance: Because this pipeline monitors mission-critical physical infrastructure, the cloud architecture itself must be highly available, fault-tolerant, and capable of handling network partitions or intermittent connectivity from edge devices.

Designing the Predictive Maintenance Architecture

Building a predictive maintenance agent requires more than just training a machine learning model; it demands a resilient, end-to-end architecture capable of ingesting high-velocity telemetry, processing massive datasets, and executing autonomous actions. To move from reactive repairs to proactive, AI-driven maintenance, we must design a system that seamlessly bridges data engineering, machine learning, and workflow automation. Let’s break down how we architect this ecosystem on Google Cloud.

High-Level System Design

The architecture follows a robust, event-driven pipeline enhanced by an agentic reasoning layer. At its core, the system is divided into four distinct operational phases:

- Data Ingestion & Streaming: Real-time sensor data (such as temperature, vibration, acoustic emissions, and RPMs) from industrial equipment is captured at the edge and streamed securely into the cloud environment.

- Storage & Analytics: The raw telemetry is aggregated, transformed, and stored in an enterprise data warehouse. In this layer, historical maintenance logs, ERP data, and real-time streams converge to create a unified, single source of truth.

- Intelligence & Inference: Machine learning models continuously analyze the aggregated data to detect anomalies, predict equipment failure probabilities, and estimate the Remaining Useful Life (RUL) of critical components.

- Agentic Action: This is where the system evolves from a standard ML pipeline into an “agent.” Instead of merely generating a dashboard alert, an AI agent interprets the model’s predictions, cross-references parts inventory, and autonomously orchestrates maintenance workflows.

This decoupled, modular design ensures that each layer can scale independently, maintaining high performance whether you are monitoring a single factory floor or a global fleet of assets.

Choosing the Right Google Cloud Components

To bring this high-level design to life, we rely on a carefully selected stack of Google Cloud and Google Workspace services. Each component is chosen for its serverless scalability, deep interoperability, and managed nature, allowing us to focus on engineering intelligence rather than managing underlying infrastructure.

- Cloud Pub/Sub & Cloud Dataflow (The Nervous System): For real-time data ingestion, Cloud Pub/Sub provides a highly scalable, globally distributed message bus that reliably captures high-throughput IoT telemetry. Cloud Dataflow (based on Apache Beam) consumes these streams in real-time, performing windowing, noise filtering, and aggregations before sinking the clean data into our warehouse.

- BigQuery (The Analytical Core): BigQuery acts as the data foundation of our architecture. As a fully managed, serverless data warehouse, it handles petabytes of machine telemetry. Crucially, its built-in BigQuery ML (BQML) and its integration with Vertex AI allow us to run predictive models directly against the data using standard SQL, drastically reducing data movement and accelerating time-to-insight.

- Vertex AI (The Cognitive Engine): Vertex AI is where our predictive and agentic logic lives. We utilize Vertex AI’s unified platform to train and deploy custom models for anomaly detection. Furthermore, we leverage Vertex AI’s generative AI capabilities (like Gemini) to build the reasoning agent. Using orchestration frameworks like LangChain on Vertex AI, the agent can reason over prediction outputs, query external databases, and decide on the optimal next action.

- Cloud Run & Cloud Functions (The Actuators): When the Vertex AI agent determines that a machine requires imminent servicing, it triggers microservices hosted on Cloud Run or Cloud Functions. These serverless compute environments execute the actual business logic, such as calling external ERP or service-management APIs (like SAP or ServiceNow) to automatically generate work orders and order replacement parts.

- Google Workspace APIs (The Collaborative Hub): To keep human operators in the loop, the agent integrates deeply with Google Workspace. Critical, time-sensitive alerts are routed directly to dedicated Google Chat spaces via webhooks. Simultaneously, automated maintenance schedules and inventory reports are dynamically generated and updated in Google Sheets via Apps Script, ensuring maintenance crews have real-time, collaborative access to actionable intelligence.

Building the Ingestion Layer with Pub/Sub and BigQuery

In any predictive maintenance architecture, your machine learning models are only as good as the data feeding them. For a modern factory floor generating thousands of sensor readings per second, you need an ingestion layer that is resilient, highly available, and capable of handling massive throughput without breaking a sweat. This is where the combination of Google Cloud Pub/Sub and BigQuery shines, providing a serverless, fully managed pathway from the edge directly to the data warehouse.

Streaming Factory Sensor Data Reliably

Factory equipment—whether it is CNC machines, robotic arms, or industrial HVAC systems—continuously emits telemetry data such as temperature, vibration, pressure, and acoustic frequencies. To capture this firehose of information reliably, we use Cloud Pub/Sub as our global messaging bus.

Pub/Sub acts as a highly scalable shock absorber. It decouples the data producers (the factory sensors and IoT gateways) from the data consumers (our analytics and ML pipelines). If a downstream system experiences a spike in load or goes offline for maintenance, Pub/Sub retains unacknowledged messages (for up to seven days by default) and redelivers them once the consumer recovers. Delivery is at-least-once, so consumers should tolerate the occasional duplicate.
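At-least-once delivery means a consumer can receive the same message twice. A minimal sketch of an idempotent handler follows, assuming each message carries a unique `message_id` (Pub/Sub assigns one to every published message). The `DedupingConsumer` class and its in-memory set are hypothetical and for illustration only; a production consumer would more likely use a bounded cache or a keyed store.

```python
from typing import Callable, Dict, List, Set


class DedupingConsumer:
    """Hypothetical wrapper that drops redeliveries of an already-processed message."""

    def __init__(self, handler: Callable[[Dict], None]) -> None:
        self._handler = handler
        self._seen: Set[str] = set()  # unbounded here; bound it in real use

    def receive(self, message: Dict) -> bool:
        """Process the message once; return False if it was a duplicate."""
        message_id = message["message_id"]
        if message_id in self._seen:
            return False
        self._handler(message)
        # Mark as seen only after the handler succeeds, so a failure can be retried.
        self._seen.add(message_id)
        return True


processed: List[Dict] = []
consumer = DedupingConsumer(processed.append)
first = consumer.receive({"message_id": "m-1", "equipment_id": "pump-04A"})
redelivered = consumer.receive({"message_id": "m-1", "equipment_id": "pump-04A"})
```

The second call is ignored, so downstream aggregates are not double-counted when Pub/Sub redelivers.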

Historically, streaming data from Pub/Sub into BigQuery required standing up an Apache Beam pipeline via Cloud Dataflow. While Dataflow is incredibly powerful for complex, in-flight data transformations and windowing, it can be architectural overkill if your primary goal is simply to land raw telemetry data.

To optimize this, we can leverage Pub/Sub BigQuery Subscriptions. This native feature allows Pub/Sub to write messages directly into a BigQuery table without any intermediate compute layer. By configuring a direct BigQuery subscription, we drastically reduce architectural complexity, minimize ingestion latency, and cut down on infrastructure costs. The raw sensor payloads are streamed directly from the factory floor into our analytics engine in near real-time, ready for processing.
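As a rough sketch of what configuring such a subscription can look like from Python, the snippet below builds the request and, separately, sends it with the `google-cloud-pubsub` client. The project, topic, subscription, and table names are placeholders. It also assumes the topic has a schema whose fields match the table columns (hence `use_topic_schema`) and that the Pub/Sub service account has been granted write access to the table.

```python
from typing import Any, Dict


def build_bigquery_subscription_request(
    project_id: str, topic_id: str, subscription_id: str, table: str
) -> Dict[str, Any]:
    """Build the request for a Pub/Sub subscription that writes to BigQuery."""
    return {
        "name": f"projects/{project_id}/subscriptions/{subscription_id}",
        "topic": f"projects/{project_id}/topics/{topic_id}",
        "bigquery_config": {
            "table": table,            # "project.dataset.table"
            "use_topic_schema": True,  # map topic schema fields to table columns
        },
    }


def create_subscription(request: Dict[str, Any]) -> None:
    """Send the request; needs google-cloud-pubsub installed and credentials set up."""
    from google.cloud import pubsub_v1  # lazy import so the builder runs without it

    subscriber = pubsub_v1.SubscriberClient()
    with subscriber:
        subscriber.create_subscription(request=request)


# Placeholder identifiers, not taken from a real deployment.
request = build_bigquery_subscription_request(
    "my-project", "factory-telemetry", "telemetry-to-bq", "my-project.iot.raw_telemetry"
)
```

Keeping the request builder separate from the API call makes the configuration easy to review and unit test before anything is created in the project.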

Structuring Time-Series Data for Analytics

Once the data lands in BigQuery, how it is structured dictates both the performance of your queries and your overall cloud spend. Sensor data is inherently time-series data; it grows rapidly, is append-only, and is almost always queried based on specific time windows.

To optimize BigQuery for predictive maintenance workflows, we must strategically implement table partitioning and clustering:

- Time-Unit Column Partitioning: We partition the raw telemetry table by the event timestamp (a TIMESTAMP column). This is critical. With daily partitions, when Vertex AI pipelines or data engineers query the last 24 hours of vibration data to detect anomalies, BigQuery scans only the partitions that overlap that window (at most two days) rather than reading the entire multi-terabyte historical dataset. This drastically reduces query costs and execution time.

- Clustering: While partitioning divides the data by time, clustering organizes the data within those partitions based on the contents of specific columns. We cluster the table by equipment_id and sensor_type. When a predictive maintenance model needs to extract the historical baseline specifically for “Motor Assembly A,” BigQuery can skip the blocks that hold other equipment and read only the relevant ones.

A robust, scalable schema for this raw ingestion table typically looks like this:

- event_timestamp (TIMESTAMP): The exact moment the reading occurred at the edge.

- ingest_timestamp (TIMESTAMP): The time the message entered the pipeline (for example, the Pub/Sub publish time), used to track pipeline latency.

- equipment_id (STRING): The unique identifier for the machine or asset.

- sensor_type (STRING): The category of the reading (e.g., ‘vibration’, ‘temperature’, ‘rpm’).

- reading_value (FLOAT64): The actual telemetry metric.

- device_metadata (JSON): A flexible payload for varying device-specific attributes.

By pairing BigQuery’s native JSON data type for the metadata column with strongly typed columns for the most frequently queried fields, we get a workable balance. We maintain strict schema enforcement for our core analytics while retaining the flexibility to ingest new sensor attributes (like firmware versions or dynamic calibration settings) without requiring constant schema migrations. This structured foundation is what Vertex AI needs to begin its predictive work.
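To make the layout concrete, here is one way the table and a partition-friendly query could be written, kept as Python strings so they can be passed to whichever BigQuery client you use. The table name `my-project.iot.raw_telemetry` and the `@equipment_id` query parameter are placeholders; the columns, partitioning, and clustering follow the schema described above.

```python
def build_telemetry_ddl(table: str) -> str:
    """Return BigQuery DDL for a day-partitioned, clustered raw telemetry table."""
    return f"""
CREATE TABLE IF NOT EXISTS `{table}` (
  event_timestamp  TIMESTAMP,
  ingest_timestamp TIMESTAMP,
  equipment_id     STRING,
  sensor_type      STRING,
  reading_value    FLOAT64,
  device_metadata  JSON
)
PARTITION BY DATE(event_timestamp)
CLUSTER BY equipment_id, sensor_type
""".strip()


def build_last_24h_query(table: str) -> str:
    """Return a query whose filters line up with the partition and cluster columns."""
    return f"""
SELECT event_timestamp, sensor_type, reading_value
FROM `{table}`
WHERE event_timestamp >= TIMESTAMP_SUB(CURRENT_TIMESTAMP(), INTERVAL 24 HOUR)
  AND equipment_id = @equipment_id
""".strip()


ddl = build_telemetry_ddl("my-project.iot.raw_telemetry")
query = build_last_24h_query("my-project.iot.raw_telemetry")
```

Filtering on `event_timestamp` lets BigQuery prune partitions, and filtering on `equipment_id` lets it take advantage of clustering within the partitions that remain.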

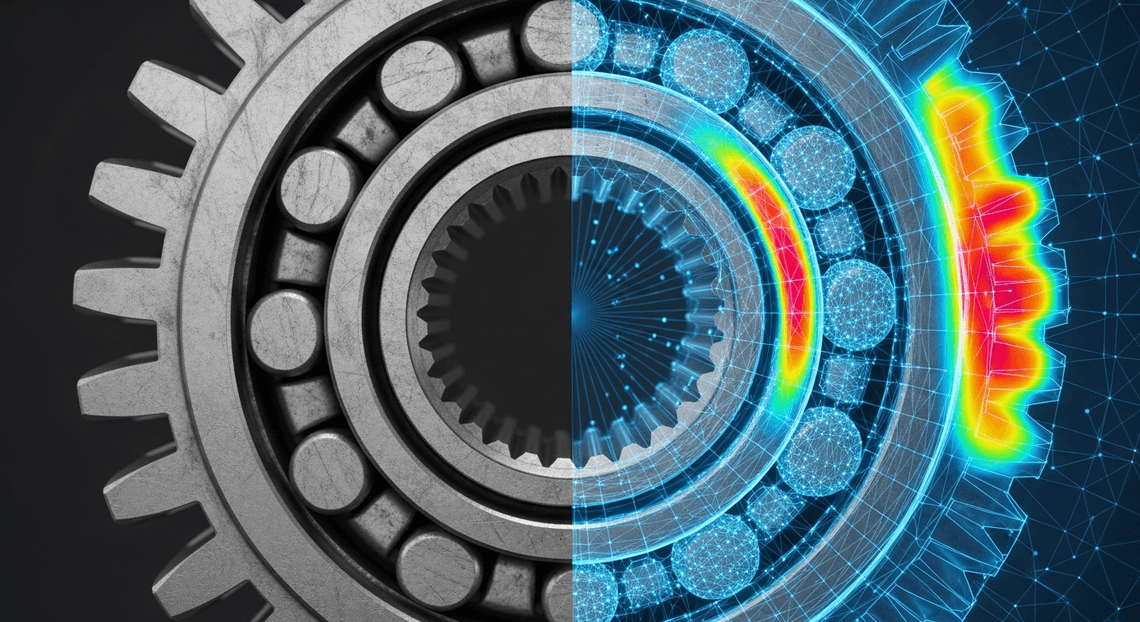

Training the Regression Model in Vertex AI

With our historical maintenance records and raw IoT data securely centralized, the next step is to build a model capable of predicting the Remaining Useful Life (RUL) of our machinery. Because RUL is a continuous variable—representing the number of hours or cycles before a machine is likely to fail—we need to train a robust regression model.
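Before any training can happen, each historical reading needs an RUL label. A minimal sketch, assuming run-to-failure history where every reading can be matched to the recorded failure time of its machine; the function name and the sample timestamps are illustrative only.

```python
from datetime import datetime, timedelta
from typing import List


def rul_hours(event_times: List[datetime], failure_time: datetime) -> List[float]:
    """Label each reading with the hours remaining until the recorded failure."""
    labels = []
    for t in event_times:
        if t > failure_time:
            raise ValueError("reading is later than the failure it is labeled against")
        labels.append((failure_time - t).total_seconds() / 3600.0)
    return labels


# Made-up example: readings taken 48, 24, 1, and 0 hours before a failure.
failure = datetime(2024, 1, 10, 12, 0)
readings = [failure - timedelta(hours=h) for h in (48, 24, 1, 0)]
labels = rul_hours(readings, failure)
```

These labels become the regression target; machines that have not failed yet need separate handling (for example, exclusion or censoring), which this sketch does not cover.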

Vertex AI provides a unified ML platform that seamlessly bridges the gap between data storage and model execution. For this architecture, we can leverage Vertex AI’s Custom Training pipelines using frameworks like XGBoost or TensorFlow, or opt for AutoML Tabular if we want Google’s infrastructure to handle hyperparameter tuning and neural architecture search automatically. By pointing Vertex AI directly to our prepared BigQuery dataset, we eliminate the need to move massive amounts of data, ensuring a secure and highly performant training pipeline.

Feature Engineering for Sensor Telemetry

Raw sensor telemetry is inherently noisy, highly granular, and often lacks the contextual framing required for a machine learning model to detect subtle degradation patterns. Before we feed our data into the regression model, rigorous feature engineering is paramount.

In predictive maintenance, the goal is to capture the state and trajectory of a machine’s health. To achieve this, we must transform raw time-series data (such as temperature, vibration, and pressure) into informative features:

- Rolling Aggregations: Using BigQuery’s OVER window functions, we can calculate sliding window statistics. A 24-hour rolling mean of motor temperature or a 7-day rolling standard deviation of vibration can expose gradual overheating or increasing mechanical looseness that a single data point would miss.

- Lag Features: Introducing lag variables (e.g., the pressure reading from exactly one hour ago, or one day ago) allows the regression model to understand the rate of change (derivatives) over time.

- Signal Processing Metrics: For high-frequency vibration data, calculating the Root Mean Square (RMS), kurtosis, or peak-to-peak amplitude provides a mathematical representation of mechanical fatigue.

- Operational Context: Merging sensor data with operational metrics (such as the current load, RPM, or the time since the last maintenance cycle) gives the model the necessary context to differentiate between normal heavy-load operations and actual anomalous wear-and-tear.
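The feature types above can be prototyped locally before committing them to SQL. The sketch below uses plain Python on small in-memory lists as a stand-in for the BigQuery window functions; the function names and sample values are illustrative, and the windows are counted in samples rather than hours.

```python
import math
from typing import List, Optional, Sequence


def rolling_mean(values: Sequence[float], window: int) -> List[Optional[float]]:
    """Trailing mean over the last `window` samples; None until the window fills."""
    out: List[Optional[float]] = []
    for i in range(len(values)):
        if i + 1 < window:
            out.append(None)
        else:
            out.append(sum(values[i + 1 - window : i + 1]) / window)
    return out


def lag_delta(values: Sequence[float], lag: int) -> List[Optional[float]]:
    """Change relative to the sample `lag` steps earlier (a discrete rate of change)."""
    return [None if i < lag else values[i] - values[i - lag] for i in range(len(values))]


def rms(values: Sequence[float]) -> float:
    """Root mean square of the signal."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def peak_to_peak(values: Sequence[float]) -> float:
    """Difference between the largest and smallest sample."""
    return max(values) - min(values)


def kurtosis(values: Sequence[float]) -> float:
    """Fourth standardized moment; undefined (division by zero) for a constant signal."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m4 / (m2 * m2)


# Made-up sample readings.
temperature = [70.0, 72.0, 74.0, 80.0]
vibration = [1.0, -1.0, 1.0, -1.0]
temp_mean_2 = rolling_mean(temperature, 2)
temp_delta_1 = lag_delta(temperature, 1)
```

Once the definitions are settled, the same calculations translate to `AVG(...) OVER (...)` and `LAG(...) OVER (...)` expressions so they run where the data lives.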

To maintain consistency between training and production, these engineered features should be registered in the Vertex AI Feature Store. This ensures that the same feature definitions used to train our regression model are served at low latency to our predictive agent during real-time inference, which guards against training-serving skew.

Deploying the Predictive Endpoint

Training a highly accurate model is only half the battle; operationalizing it is where the business value is realized. Once our regression model has achieved satisfactory evaluation metrics (such as a low Root Mean Squared Error and a high R-squared value), we register it within the Vertex AI Model Registry.
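For reference, both metrics are simple to compute by hand: RMSE is the square root of the mean squared error, and R-squared is one minus the ratio of the residual sum of squares to the total sum of squares. A small sketch with made-up RUL values (in hours):

```python
import math
from typing import Sequence


def rmse(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Root mean squared error, in the same units as the target."""
    n = len(actual)
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / n)


def r_squared(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot


# Illustrative values only, not results from a real model.
actual_rul = [100.0, 80.0, 60.0, 40.0]
predicted_rul = [98.0, 82.0, 58.0, 42.0]
error = rmse(actual_rul, predicted_rul)
fit = r_squared(actual_rul, predicted_rul)
```

Because RMSE is expressed in hours here, it can be compared directly against the lead time a maintenance crew needs, which is often a more useful acceptance test than R-squared alone.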

From the registry, we deploy the model to a Vertex AI Endpoint to serve online predictions. This endpoint acts as the brain of our Predictive Maintenance Agent. When configuring the deployment, Cloud Engineering best practices dictate that we carefully select our compute resources and scaling policies:

- Compute Selection: For tabular regression models (like XGBoost or Random Forest), standard CPU instances (e.g., n2-standard-4) are typically sufficient and cost-effective. If the model relies on deep neural networks with complex feature crosses, attaching an NVIDIA T4 GPU might be necessary to meet latency Service Level Objectives (SLOs).

- Autoscaling: We configure minimum and maximum replica counts based on expected traffic. By setting a target CPU utilization threshold (e.g., 60%), Vertex AI will automatically spin up additional nodes during peak operational hours and scale down to the minimum replica count during off-hours, optimizing cloud spend.

- Agent Integration: The Predictive Maintenance Agent interacts with this REST/gRPC endpoint via secure, authenticated API calls. As real-time telemetry flows into the system, the agent retrieves the latest engineered features from the Feature Store, constructs the JSON payload, and invokes the endpoint. The endpoint returns the predicted RUL, allowing the agent to trigger automated alerts, schedule maintenance tickets, or shut down machinery before a catastrophic failure occurs.
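The agent-side logic can be sketched without any cloud dependencies. Online prediction requests wrap feature rows in an `instances` list; the exact shape of each instance depends on how the model was trained, so the feature names below are assumptions. The action names and hour thresholds in `choose_action` are placeholders to be tuned per asset, not recommended values.

```python
from typing import Dict, List


def build_predict_request(features: Dict[str, float]) -> Dict[str, List[Dict[str, float]]]:
    """Wrap one feature row in the {"instances": [...]} body of a prediction request."""
    return {"instances": [features]}


def choose_action(predicted_rul_hours: float) -> str:
    """Map a predicted RUL to an action; thresholds here are hypothetical."""
    if predicted_rul_hours < 4:
        return "shutdown"
    if predicted_rul_hours < 72:
        return "create_work_order"
    if predicted_rul_hours < 336:
        return "alert"
    return "none"


# Hypothetical feature names for a single machine.
body = build_predict_request({"temp_mean_24h": 71.5, "vibration_rms": 0.82})
action = choose_action(48.0)
```

Keeping the decision rule in a small pure function makes it easy to review with the maintenance team and to test independently of the deployed endpoint.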

Automating Alerts via Google Chat API

A predictive maintenance model is only as valuable as the actions it drives. Once Vertex AI identifies an anomaly or predicts an impending equipment failure based on your BigQuery telemetry, that insight must be delivered to the right people instantly. Relying on engineers to constantly monitor a dashboard introduces latency that can lead to costly downtime. By integrating the Google Chat API, we can bridge the gap between our cloud intelligence and the plant floor, pushing proactive notifications directly into the communication channels your teams already use.

Setting Up Webhooks for Real-Time Notifications

For one-way alerting—where our cloud architecture pushes information to a Chat space—Incoming Webhooks offer the most lightweight and efficient integration path. When your Vertex AI pipeline or a scheduled BigQuery ML job flags a high probability of failure, it can publish an event to Pub/Sub. A lightweight Cloud Function can then consume this event and trigger the webhook.

To set this up, navigate to the target space in Google Chat, click the space name to open the dropdown menu, select Apps & integrations, and click Manage webhooks. Generate a new webhook and securely store the resulting URL in Google Cloud Secret Manager.

Here is a streamlined example of how a Python-based Cloud Function can dispatch an alert to that webhook using the widely used requests library:

    import base64
    import json
    import os

    import requests
    from google.cloud import secretmanager


    def get_webhook_url(secret_id, project_id):
        """Read the Chat webhook URL from Secret Manager."""
        client = secretmanager.SecretManagerServiceClient()
        name = f"projects/{project_id}/secrets/{secret_id}/versions/latest"
        response = client.access_secret_version(request={"name": name})
        return response.payload.data.decode("UTF-8")


    def send_chat_alert(event, context):
        """Background function triggered by a Pub/Sub message."""
        # Extract prediction data from the Pub/Sub event.
        # The message body arrives base64-encoded in event["data"].
        pubsub_message = json.loads(base64.b64decode(event["data"]).decode("utf-8"))

        # Newer runtimes do not set GCP_PROJECT automatically;
        # define it as an environment variable at deploy time.
        webhook_url = get_webhook_url("CHAT_WEBHOOK_URL", os.environ.get("GCP_PROJECT"))

        # Basic text payload (to be upgraded to a Card)
        message_payload = {
            "text": (
                "⚠️ *Predictive Maintenance Alert:* Anomaly detected on "
                f"Equipment {pubsub_message['equipment_id']}."
            )
        }

        # json= serializes the payload and sets the Content-Type header.
        response = requests.post(webhook_url, json=message_payload, timeout=10)

        if response.status_code != 200:
            print(f"Failed to send alert: {response.status_code}, {response.text}")
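For local testing it helps to know the shape of the incoming event: a Pub/Sub-triggered background function receives the message body base64-encoded under the `data` key. The helpers below are hypothetical names for building and decoding such an event without deploying anything.

```python
import base64
import json
from typing import Any, Dict


def make_pubsub_event(payload: Dict[str, Any]) -> Dict[str, str]:
    """Build a fake event shaped like the one a Pub/Sub trigger passes in."""
    raw = json.dumps(payload).encode("utf-8")
    return {"data": base64.b64encode(raw).decode("utf-8")}


def decode_pubsub_event(event: Dict[str, str]) -> Dict[str, Any]:
    """Reverse the encoding: base64 -> UTF-8 text -> JSON object."""
    return json.loads(base64.b64decode(event["data"]).decode("utf-8"))


event = make_pubsub_event({"equipment_id": "Pump-Centrifugal-04A"})
decoded = decode_pubsub_event(event)
```

A fake event like this can be passed to the alert function in a unit test, with the Secret Manager lookup and the HTTP call mocked out.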

This establishes the plumbing, but a simple text string is rarely enough for a busy plant engineer who needs to make split-second operational decisions.

Formatting Actionable Alerts for Plant Engineers

To prevent alert fatigue and empower immediate action, notifications must provide rich context. Google Chat supports Card Messages (specifically Card V2), which allow you to structure data visually and embed interactive UI elements like buttons and key-value pairs.

An actionable alert for a plant engineer should answer three immediate questions: What is failing? How certain are we? What do I do next?

By formatting the payload as a Card, we can highlight the specific machine ID, the Vertex AI confidence score, the predicted time-to-failure (TTF), and provide deep links to your operational dashboards or Work Order management system.

Here is how you structure the JSON payload to generate a highly actionable Card V2 message:

    {
      "cardsV2": [
        {
          "cardId": "maintenance_alert_card",
          "card": {
            "header": {
              "title": "Critical Equipment Alert",
              "subtitle": "Vertex AI Predictive Maintenance",
              "imageUrl": "https://developers.google.com/chat/images/quickstart-app-avatar.png",
              "imageType": "CIRCLE"
            },
            "sections": [
              {
                "header": "Diagnostic Details",
                "widgets": [
                  {
                    "decoratedText": {
                      "startIcon": { "knownIcon": "SETTINGS" },
                      "topLabel": "Equipment ID",
                      "text": "Pump-Centrifugal-04A"
                    }
                  },
                  {
                    "decoratedText": {
                      "startIcon": { "knownIcon": "CLOCK" },
                      "topLabel": "Predicted Time to Failure",
                      "text": "<font color=\"#ff0000\"><b>Under 4 Hours</b></font>"
                    }
                  },
                  {
                    "decoratedText": {
                      "startIcon": { "knownIcon": "STAR" },
                      "topLabel": "Model Confidence",
                      "text": "94.2%"
                    }
                  }
                ]
              },
              {
                "widgets": [
                  {
                    "buttonList": {
                      "buttons": [
                        {
                          "text": "View BigQuery Dashboard",
                          "onClick": {
                            "openLink": {
                              "url": "https://lookerstudio.google.com/your-dashboard-link"
                            }
                          }
                        },
                        {
                          "text": "Create Work Order",
                          "onClick": {
                            "openLink": {
                              "url": "https://your-cmms-system.com/new-order?eq=04A"
                            }
                          }
                        }
                      ]
                    }
                  }
                ]
              }
            ]
          }
        }
      ]
    }

When the Cloud Function posts this JSON to the webhook, the plant engineer receives a beautifully formatted, color-coded card. Instead of hunting down the machine’s status in a separate system, they can immediately assess the severity and click “Create Work Order” to dispatch a maintenance crew before the failure ever occurs. This seamless handoff from BigQuery and Vertex AI to human operators is the hallmark of a mature, cloud-native predictive maintenance architecture.
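In practice the card is generated from the prediction rather than hard-coded. A minimal sketch of a builder that produces the same cardsV2 structure from a few fields; the function name, parameters, and example URLs are assumptions, and icons and the header image are omitted for brevity.

```python
import json
from typing import Any, Dict


def build_alert_card(
    equipment_id: str,
    ttf_text: str,
    confidence_pct: float,
    dashboard_url: str,
    work_order_url: str,
) -> Dict[str, Any]:
    """Assemble a cardsV2 payload for one maintenance alert."""

    def detail(label: str, text: str) -> Dict[str, Any]:
        return {"decoratedText": {"topLabel": label, "text": text}}

    def link_button(text: str, url: str) -> Dict[str, Any]:
        return {"text": text, "onClick": {"openLink": {"url": url}}}

    details = [
        detail("Equipment ID", equipment_id),
        detail("Predicted Time to Failure", f"<b>{ttf_text}</b>"),
        detail("Model Confidence", f"{confidence_pct:.1f}%"),
    ]
    buttons = [
        link_button("View BigQuery Dashboard", dashboard_url),
        link_button("Create Work Order", work_order_url),
    ]
    return {
        "cardsV2": [
            {
                "cardId": "maintenance_alert_card",
                "card": {
                    "header": {
                        "title": "Critical Equipment Alert",
                        "subtitle": "Vertex AI Predictive Maintenance",
                    },
                    "sections": [
                        {"header": "Diagnostic Details", "widgets": details},
                        {"widgets": [{"buttonList": {"buttons": buttons}}]},
                    ],
                },
            }
        ]
    }


# Placeholder URLs for illustration.
card = build_alert_card(
    "Pump-Centrifugal-04A", "Under 4 Hours", 94.2,
    "https://example.com/dashboard", "https://example.com/work-orders/new",
)
payload_json = json.dumps(card)
```

The resulting dictionary can be sent as the JSON body of the webhook request in place of the plain text message.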

Scaling Your Maintenance Architecture

Transitioning a predictive maintenance agent from a successful proof-of-concept to a global, enterprise-grade solution requires a deliberate shift in architectural thinking. When your deployment scales from monitoring a single factory floor to overseeing thousands of distributed assets, the volume, velocity, and variety of your telemetry data can grow by orders of magnitude. Fortunately, the native integration between BigQuery and Vertex AI provides a robust, serverless foundation designed to handle this level of elastic scale. To ensure your architecture remains highly available, cost-effective, and accurate, you must implement rigorous engineering standards across your data pipelines and MLOps lifecycle.

Best Practices for Production Deployments

Deploying a predictive maintenance agent into a live industrial environment means your model’s predictions will directly impact operational uptime and safety. To build a resilient production system on Google Cloud, adhere to the following best practices:

- Optimize BigQuery for High-Velocity Telemetry: As IoT sensors stream gigabytes of data per minute, naive querying will quickly become cost-prohibitive. Implement Table Partitioning (typically by the TIMESTAMP of the sensor reading) and Clustering (by equipment_id or facility_location). This drastically reduces the amount of data scanned during Vertex AI feature extraction and batch prediction jobs.

- Implement Robust MLOps with Vertex AI Pipelines: Predictive maintenance models are highly susceptible to data drift: machine wear-and-tear patterns change, and sensor calibrations drift over time. Use Vertex AI Pipelines to establish a Continuous Training (CT) loop. Configure Vertex AI Model Monitoring to automatically detect training-serving skew or prediction drift, triggering an automated retraining pipeline when performance degrades below your defined threshold.

- Decouple Ingestion from Inference: Use Cloud Pub/Sub and Dataflow to ingest real-time sensor streams. For immediate anomaly detection (e.g., a sudden temperature spike indicating imminent failure), route a subset of this stream directly to a deployed Vertex AI Endpoint. For long-term predictive maintenance (e.g., predicting a bearing failure in 30 days), route the data to BigQuery for scheduled Vertex AI Batch Predictions.

- Right-Size Vertex AI Endpoints: When deploying models for real-time inference, configure auto-scaling on your Vertex AI Endpoints. Set minimum replica counts to handle baseline traffic and maximum replica counts to absorb sudden bursts in telemetry data, so that capacity beyond the baseline is paid for only while it is needed.

- Enforce Zero-Trust Security: Industrial data is highly sensitive. Wrap your BigQuery datasets and Vertex AI resources inside VPC Service Controls to mitigate data exfiltration risks. Utilize BigQuery Row-Level Security to ensure that regional facility managers can only query prediction data relevant to their specific sites.
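To give a feel for what a drift check measures, the sketch below compares the distribution of a feature at training time with its distribution in recent serving traffic using Jensen-Shannon divergence, one common distance for this purpose. It illustrates the idea only and is not a description of how any managed monitoring service computes its scores; the bin edges, sample values, and alert threshold are made up.

```python
import math
from typing import List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Normalized counts per bin; values outside [edges[0], edges[-1]) are ignored.

    Raises ZeroDivisionError if no value falls inside the bins.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            if edges[i] <= v < edges[i + 1]:
                counts[i] += 1
                break
    total = sum(counts)
    return [c / total for c in counts]


def js_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon divergence in bits: 0 for identical, 1 for disjoint distributions."""

    def kl(a: Sequence[float], b: Sequence[float]) -> float:
        # Terms with a zero probability in `a` contribute nothing.
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)

    m = [(x + y) / 2 for x, y in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


# Made-up vibration RMS readings and bin edges.
edges = [0.0, 2.5, 5.0]
training = histogram([1.0, 2.0, 3.0, 4.0], edges)
serving = histogram([4.0, 4.0, 4.0, 4.5], edges)
drift_score = js_divergence(training, serving)
needs_retraining = drift_score > 0.1  # hypothetical threshold
```

A score near zero means recent traffic looks like the training data; a score that stays above the chosen threshold is a reasonable trigger for the retraining pipeline.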

Next Steps and Expert Consultation

Building the machine learning architecture is only half the battle; the true value of a predictive maintenance agent is realized when its insights drive immediate human action.

As a next step, consider integrating your Vertex AI outputs directly into your operational workflows using Google Workspace. For instance, you can use Google Cloud Functions to intercept high-confidence failure predictions and automatically trigger a Google Chat webhook, alerting the on-call engineering team. Alternatively, you can leverage AppSheet to build a custom, no-code mobile application that reads prediction data directly from BigQuery, allowing field technicians to view maintenance schedules, update task statuses, and log repair notes directly from the factory floor.

If you are ready to move your predictive maintenance initiatives out of the sandbox and into production, the path forward requires careful planning around data governance, MLOps maturity, and change management.

Whether you need assistance designing a high-throughput streaming pipeline, optimizing your BigQuery storage costs, or establishing a secure, automated Vertex AI lifecycle, expert guidance can significantly accelerate your time-to-value. Reach out for a comprehensive architecture review and expert consultation to ensure your Google Cloud and Google Workspace environments are aligned to support your next-generation maintenance operations.

