Implementing Data as Code: Syncing Financial Sheets to Firestore for Real-Time Dashboards

Discover how the Data as Code pattern is revolutionizing FinTech by applying software engineering rigor to critical financial datasets. Learn how combining the everyday familiarity of Google Sheets with the robust power of Google Cloud Platform transforms your financial records into versioned, fully auditable assets.

Understanding the Data as Code Pattern in FinTech

Data as Code (DaC) is revolutionizing how engineering teams manage critical datasets, applying the same rigorous lifecycle management to data that we have long applied to application source code. In the FinTech sector, where precision, auditability, and governance are non-negotiable, DaC provides a framework to treat financial records, configurations, and schemas as versioned, testable, and deployable assets.

When we apply this pattern at the intersection of Google Workspace and Google Cloud Platform (GCP), we unlock a remarkably powerful paradigm. Finance teams naturally gravitate toward Google Sheets: it is highly collaborative, flexible, and universally understood by business units. However, spreadsheets are not production databases. By implementing a DaC pattern, we can treat a Google Sheet as a human-readable “source code” repository for financial data. When a financial model or ledger is updated, the edit acts as a commit. This action triggers an event-driven pipeline that validates the data against strict schemas and syncs it to a highly scalable NoSQL database like Firestore. This architecture ensures that the data driving your production applications remains strictly governed, historically trackable, and quickly available to downstream services.

The Challenge of Scaling Real Time Financial Reporting

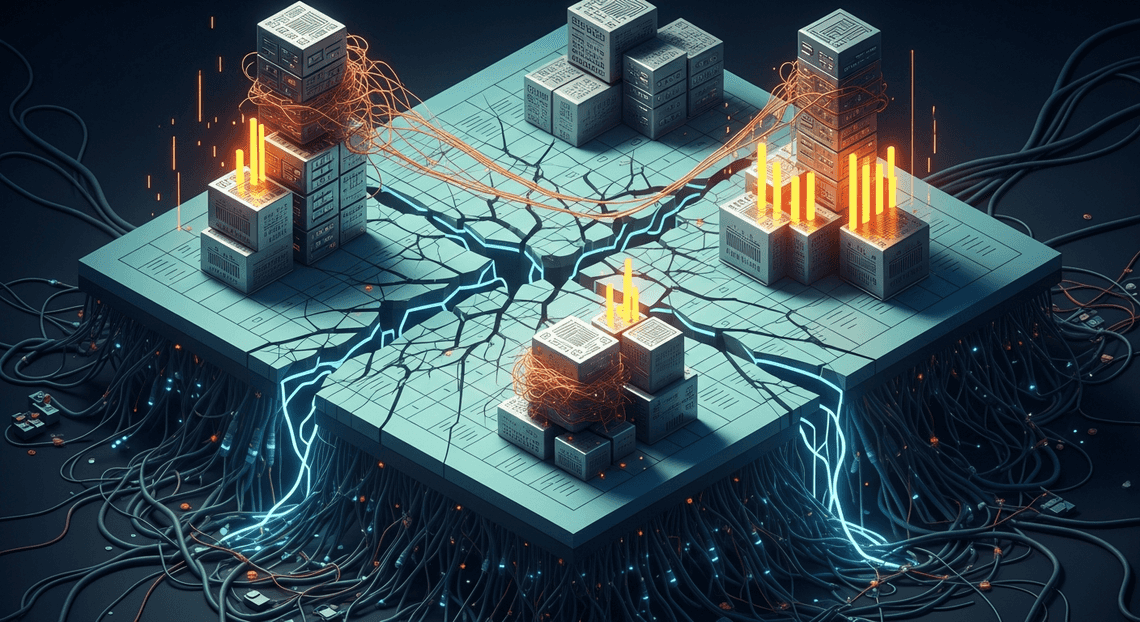

Historically, scaling financial reporting has been a brittle and frustrating endeavor. Finance departments often rely on complex, heavily formulated spreadsheets that inadvertently serve as both the database and the presentation layer. As an organization scales, this monolithic approach quickly breaks down under the weight of its own operational overhead.

From a cloud engineering perspective, attempting to power live, high-traffic dashboards directly from raw spreadsheets introduces severe technical bottlenecks. Querying the Google Sheets API directly for real-time application state will inevitably lead to exhausted API quotas and significant latency. The Google Workspace ecosystem is optimized for human collaboration, but it is not designed for high-throughput, programmatic reads from thousands of concurrent dashboard users.

To circumvent this, many organizations fall back on traditional batch processing. However, Extract, Transform, Load (ETL) jobs that run nightly—or even hourly—introduce unacceptable delays. In a modern FinTech environment, batch processing means downstream dashboards are perpetually stale. When dealing with live transaction volumes, dynamic currency fluctuations, or real-time budget tracking, operating on stale data can lead to catastrophic strategic missteps. Furthermore, without a DaC approach, tracking state changes across distributed financial models becomes a forensic nightmare, entirely lacking the automated testing, validation, and rollback capabilities inherent to modern cloud-native architectures.

Why Low Latency Architecture Matters for Stakeholders

In the fast-paced financial technology sector, the velocity of data directly correlates with business agility. Stakeholders—ranging from C-suite executives monitoring operational burn rates to risk managers evaluating live market exposure—cannot afford to wait for the next batch job to complete. They require a low-latency architecture that reflects financial realities the exact moment they occur.

This is precisely where syncing Workspace data to Firestore becomes a structural game-changer. Firestore is a globally distributed, highly scalable NoSQL document database purpose-built for real-time synchronization. By bridging Google Sheets to Firestore, you effectively decouple the data entry interface (the spreadsheet) from the high-performance data consumption layer (the database).

When a financial analyst updates a forecast or logs a transaction in Sheets, an event-driven microservice (such as a Google Cloud Function or Cloud Run service) can process, validate, and push that payload to Firestore. Because Firestore natively supports real-time listeners over a persistent streaming connection, any connected client typically receives the update within a fraction of a second. A custom React dashboard subscribed to those documents updates without requiring a page refresh. This low latency means decision-makers are looking at the latest synced figures, so they can react to market shifts quickly, mitigate risks proactively, and trust the metrics driving the business.

System Architecture and Tech Stack Overview

To build a robust “Data as Code” pipeline for financial metrics, we need an architecture that respects the daily workflows of business teams while delivering the high-throughput performance required by modern web applications. The solution lies in decoupling the data entry interface from the application database, creating a unidirectional data flow. By leveraging the deep integration between Google Workspace and Google Cloud, we can construct a serverless, event-driven architecture. Let’s break down the core components of this stack.

Google Sheets as a Validated Financial Data Source

In the financial sector, the spreadsheet is the undisputed king. Rather than forcing finance teams and analysts to learn a bespoke CMS or interact directly with a database, this architecture embraces Google Sheets as the primary data entry point and ultimate source of truth. By treating the spreadsheet as our “codebase” for data, we empower non-technical stakeholders to maintain their existing, highly collaborative workflows.

However, raw spreadsheets are notoriously prone to human error. To elevate Google Sheets to a validated data source, we must enforce strict data governance directly within the UI. We achieve this by utilizing built-in Data Validation rules, protected ranges, and standardized drop-downs. This ensures that the data being entered (whether it is quarterly revenue projections, expense categorizations, or dynamic budget adjustments) adheres to our expected schema before it ever triggers a downstream sync. In this pipeline, Google Sheets acts as the secure mutation layer, providing a familiar environment with built-in version history and granular access management via Google Workspace access controls.

Firestore for High Speed Web App Consumption

While Google Sheets is unparalleled for collaborative data entry, it is fundamentally not a database designed for high-concurrency, low-latency web consumption. Relying directly on the Google Sheets API for dashboard queries will inevitably lead to rate-limiting bottlenecks and unacceptable load times. This is where Google Cloud’s Firestore enters the stack as our dedicated read layer.

Firestore is a fully managed, serverless NoSQL document database engineered for massive scalability and real-time synchronization. By mirroring our validated financial data from Sheets into Firestore collections, we create a highly performant backend for our web dashboards. Frontend applications (built in React, Angular, or Vue) can subscribe to Firestore documents through real-time snapshot listeners. This means any financial update pushed to the database is reflected on the client side, typically within a fraction of a second. This separation of concerns ensures that our dashboard remains fast and capable of scaling to thousands of concurrent users without putting any load on the underlying financial spreadsheet.

Connecting Systems with Apps Script and Firebase Admin SDK

The critical link in this pipeline is the synchronization mechanism that bridges the Google Workspace environment with Google Cloud. To achieve this, we use Google Apps Script as our serverless integration glue. Embedded directly within the Google Sheet, Apps Script can listen for specific user actions using simple or installable triggers, such as an onEdit event firing when an analyst updates a cell, or a time-driven trigger executing a batch sync at the end of the trading day. Note that simple triggers cannot call services that require authorization, so a trigger that pushes data to Firestore must be an installable one.

When a change is detected, the Apps Script captures the updated row, transforms the tabular data into a structured JSON document, and prepares it for transmission. Because Apps Script runs in a trusted, server-side environment, we can securely authenticate with Google Cloud using a dedicated Service Account. By utilizing Google’s OAuth2 libraries alongside the Firestore REST API—effectively replicating the capabilities of the Firebase Admin SDK within the Apps Script runtime—we can perform secure, authenticated CRUD operations directly against our Firestore database. This creates a fully automated, real-time pipeline: a financial metric is updated in Sheets, Apps Script catches the event, authenticates the payload, and pushes the document to Firestore, instantly driving the live dashboards.
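The row-to-document transformation described above can be sketched as follows. This is a minimal illustration, assuming the Firestore REST API's typed `Value` encoding (`stringValue`, `integerValue`, `doubleValue`, `timestampValue`, and so on); the helper names `toFirestoreValue` and `rowToFirestoreDocument` and the sample row are made up for this example and are not part of any library.

```javascript
// Convert one spreadsheet cell value into a Firestore REST API `Value` object.
function toFirestoreValue(value) {
  if (value === null || value === undefined || value === "") {
    return { nullValue: null };
  }
  if (value instanceof Date) {
    // Timestamps are sent as RFC 3339 strings.
    return { timestampValue: value.toISOString() };
  }
  if (typeof value === "boolean") {
    return { booleanValue: value };
  }
  if (typeof value === "number") {
    // The REST API encodes 64-bit integers as strings.
    return Number.isSafeInteger(value)
      ? { integerValue: String(value) }
      : { doubleValue: value };
  }
  return { stringValue: String(value) };
}

// Zip a header row and a data row into a Firestore REST document body.
function rowToFirestoreDocument(headers, row) {
  const fields = {};
  headers.forEach((header, index) => {
    fields[header] = toFirestoreValue(row[index]);
  });
  return { fields };
}

// Illustrative sample data only.
const headers = ["transactionId", "revenue", "operatingCost", "timestamp"];
const row = ["TX-1001", 125000, 310.75, new Date("2024-01-31T17:00:00Z")];
console.log(JSON.stringify(rowToFirestoreDocument(headers, row), null, 2));
```

The resulting object is the shape a REST write expects as its request body. A library that wraps the REST API would normally perform this conversion for you, so a helper like this is mainly useful when calling the API directly with UrlFetchApp.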

Step by Step Implementation Guide

Bridging the gap between Google Workspace and Google Cloud requires a strategic approach to data extraction, transformation, and loading (ETL). By treating our spreadsheet as a configuration file (essentially “Data as Code”), we can establish a robust pipeline that feeds our real-time dashboards. Let’s dive into the technical execution.

Configuring the Google Sheets Environment

Before writing any code, the source environment must be structured predictably. Financial data requires strict schemas, even within a flexible interface like Google Sheets, because the structure here dictates the NoSQL document schema in Firestore.

- Establish a Strict Schema: Open your Google Sheet and dedicate a specific tab for the sync (e.g., Financial_Data). Row 1 must act as your schema definition. Use exact, machine-readable headers in camelCase or snake_case (e.g., transactionId, revenue, operatingCost, timestamp). Avoid spaces or special characters.

- Enforce Data Integrity: Use Google Sheets Data Validation rules on your columns. For instance, ensure the revenue column only accepts valid numbers and timestamp enforces a strict date-time format. This prevents malformed data from breaking your downstream dashboard.

- Initialize the Workspace: Navigate to Extensions > Apps Script. This opens the cloud-based integrated development environment (IDE) bound to your spreadsheet, where our synchronization logic will execute.

Writing the Apps Script Sync Logic

Inside the Apps Script editor, our primary goal is to extract the two-dimensional array of spreadsheet data and transform it into an array of JSON objects suitable for a NoSQL database.

First, we write a function to parse the sheet:

    /**
     * Reads the Financial_Data tab and returns one object per data row,
     * keyed by the header names in row 1.
     */
    function getFinancialData() {
      const sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("Financial_Data");
      if (!sheet) {
        throw new Error('Sheet "Financial_Data" not found');
      }

      const data = sheet.getDataRange().getValues();

      // Extract headers to use as JSON keys
      const headers = data.shift();

      // Map rows to objects
      const financialRecords = data.map(row => {
        const record = {};
        headers.forEach((header, index) => {
          // Store empty cells as null so the field is still written explicitly
          record[header] = row[index] !== "" ? row[index] : null;
        });
        return record;
      });

      return financialRecords;
    }

To make this pipeline “real-time” (or near real-time), we need to trigger the sync automatically. While you can use an onEdit(e) trigger for instant updates on individual cell changes, financial sheets often undergo bulk updates. A more resilient approach for dashboards is a time-driven trigger (e.g., running every 5 minutes) or a custom menu button that allows financial analysts to manually push the data once they have reconciled the sheet.
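As a hedged sketch of the two options just described, the snippet below installs a time-driven trigger once and adds a custom menu for manual pushes. It relies on the Apps Script `ScriptApp` and `SpreadsheetApp` services, so it is meant to run inside the bound script; the menu labels and the `installSyncTrigger` helper are names chosen for this example, and the handler name is assumed to match your sync function.

```javascript
// Name of the function the trigger and menu should call. Assumed to match
// the sync function defined elsewhere in the bound script.
const SYNC_HANDLER = "syncToFirestore";

// Create a time-driven trigger for the sync handler unless one already exists.
// Returns true when a new trigger was created, false when it was a no-op.
function installSyncTrigger(intervalMinutes) {
  const alreadyInstalled = ScriptApp.getProjectTriggers()
    .some(trigger => trigger.getHandlerFunction() === SYNC_HANDLER);
  if (alreadyInstalled) return false;

  ScriptApp.newTrigger(SYNC_HANDLER)
    .timeBased()
    .everyMinutes(intervalMinutes) // Apps Script accepts 1, 5, 10, 15 or 30
    .create();
  return true;
}

// Add a menu so analysts can push a reconciled sheet on demand.
function onOpen() {
  SpreadsheetApp.getUi()
    .createMenu("Finance Sync")
    .addItem("Push to Firestore now", SYNC_HANDLER)
    .addToUi();
}
```

Run `installSyncTrigger(5)` once from the editor to schedule the sync. Checking for an existing trigger first keeps repeated runs from stacking duplicate schedules.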

Authenticating and Pushing Data to Firestore

This is where Google Cloud Engineering comes into play. Apps Script operates outside your GCP project by default, so it needs explicit, secure authorization to write to your Firestore database.

1. Set up a GCP Service Account:

Navigate to your Google Cloud Console, go to IAM & Admin > Service Accounts, and create a new service account. Grant it the Cloud Datastore User role (which encompasses Firestore). Generate a JSON key and copy the project_id, client_email, and private_key.

2. Import the Firestore Library:

Writing a custom OAuth2 flow in Apps Script is tedious. Instead, leverage the open-source FirestoreApp library. In the Apps Script editor, go to Libraries, add the script ID 1VUSl4b1r1eoNcRWotZM3mO7ZGOq20X15mQ1hjP04HKJzGPD-rlhIuP-d, and select the latest version. Confirm the script ID against the library's own README before adding it, since a mistyped ID will fail the lookup.

3. Execute the Sync:

Now, write the logic to authenticate and upsert the data. To maintain idempotency—ensuring we don’t create duplicate records if the script runs twice—we will use the transactionId from our sheet as the Firestore Document ID.

    function syncToFirestore() {
      // Service account credentials copied from the JSON key.
      // Placeholders only: outside of a demo, load these from Script Properties
      // or Secret Manager rather than hardcoding them in the script.
      const email = "your-service-account@your-gcp-project-id.iam.gserviceaccount.com";
      const key = "-----BEGIN PRIVATE KEY-----\nYOUR_PRIVATE_KEY...\n-----END PRIVATE KEY-----\n";
      const projectId = "your-gcp-project-id";

      // Authenticate with Firestore
      const firestore = FirestoreApp.getFirestore(email, key, projectId);

      // Retrieve the parsed data
      const records = getFinancialData();
      const collectionName = "financial_metrics";

      records.forEach(record => {
        if (!record.transactionId) return; // Skip rows without a document ID

        try {
          // Upsert: creates the document if it doesn't exist, updates it if it does.
          // Using transactionId as the document ID keeps repeated runs idempotent.
          firestore.updateDocument(`${collectionName}/${record.transactionId}`, record, true);
          Logger.log(`Successfully synced record: ${record.transactionId}`);
        } catch (error) {
          Logger.log(`Error syncing record ${record.transactionId}: ${error.message}`);
        }
      });
    }

By utilizing this architecture, every time syncToFirestore() executes, your Google Sheet state is mirrored directly into Firestore. Your frontend real-time dashboards can now listen to this Firestore collection via snapshot listeners, instantly reflecting the financial data managed by your operations team in Google Sheets.

Best Practices for Financial Data Synchronization

When treating your financial spreadsheets as a primary data source—effectively embracing a “Data as Code” philosophy—the pipeline syncing Google Sheets to Firestore must be robust, resilient, and secure. Financial data is unforgiving; a single dropped row or type mismatch can lead to wildly inaccurate real-time dashboards. To build a production-grade synchronization engine, you must adhere to strict cloud engineering best practices across validation, quota management, and security.

Ensuring Data Integrity and Validation

In a Data as Code paradigm, you wouldn’t deploy uncompiled or syntactically incorrect code to production. Similarly, you should never push unvalidated spreadsheet data into your Firestore database. Google Sheets is inherently flexible, which is great for human input but dangerous for automated pipelines. A user might accidentally type a string into a revenue column or leave a critical date field blank.

To guarantee data integrity, implement a strict validation layer between Sheets and Firestore:

- Schema Enforcement: Utilize schema validation libraries (like Zod or Joi if you are routing data through a Node.js Cloud Function) to verify that every row extracted from the Sheet matches your expected data types. Ensure that currency values are parsed as numbers (preferably integer cents, to avoid floating-point rounding errors) and dates are converted to standard ISO strings or Firestore Timestamps.

- Atomic Operations: Never write financial records to Firestore one by one in a loop. If the script fails halfway through, your dashboard will display partial, inconsistent data. Instead, use Firestore Batched Writes. Batched writes ensure that your data operations are atomic: either all the financial records in the batch update together, or none of them do.

- Data Hashing for State Comparison: To avoid unnecessary writes, compute a hash (e.g., MD5 or SHA-256) of the row data and store it alongside the document. On the next sync, compare the new hash against the stored one. If the hash hasn’t changed, skip the write. This keeps the sync idempotent while ensuring your dashboard reflects the exact state of the spreadsheet.
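The validation and hashing steps above can be sketched in plain JavaScript without a schema library. This is an illustration under stated assumptions: the field names come from the example headers used earlier, the `revenueCents` and `operatingCostCents` output fields and the helper names (`toCents`, `validateRecord`, `rowHash`, `needsWrite`) are invented for this example, and the hash uses Node's `crypto` module. Inside Apps Script you would swap in `Utilities.computeDigest` for the digest.

```javascript
const crypto = require("crypto");

// Convert a decimal currency amount to integer cents.
function toCents(amount) {
  if (typeof amount !== "number" || !Number.isFinite(amount)) {
    throw new TypeError(`Expected a finite number, got ${JSON.stringify(amount)}`);
  }
  return Math.round(amount * 100);
}

// Check one parsed row and return either a normalized record or a list of errors.
function validateRecord(record) {
  const errors = [];
  if (typeof record.transactionId !== "string" || record.transactionId.trim() === "") {
    errors.push("transactionId must be a non-empty string");
  }
  for (const field of ["revenue", "operatingCost"]) {
    if (typeof record[field] !== "number" || !Number.isFinite(record[field])) {
      errors.push(`${field} must be a finite number`);
    }
  }
  const time = new Date(record.timestamp);
  if (record.timestamp == null || Number.isNaN(time.getTime())) {
    errors.push("timestamp must be a valid date");
  }
  if (errors.length > 0) return { ok: false, errors };

  return {
    ok: true,
    value: {
      transactionId: record.transactionId.trim(),
      revenueCents: toCents(record.revenue),
      operatingCostCents: toCents(record.operatingCost),
      timestamp: time.toISOString(),
    },
  };
}

// SHA-256 over a key-sorted serialization, so key order does not change the hash.
function rowHash(value) {
  const canonical = JSON.stringify(
    Object.keys(value).sort().map(key => [key, value[key]])
  );
  return crypto.createHash("sha256").update(canonical).digest("hex");
}

// True when the row differs from what was last written (or was never written).
function needsWrite(value, storedHash) {
  return rowHash(value) !== storedHash;
}
```

A sync loop would call `validateRecord` on each row, collect the failures for review, and write only the rows for which `needsWrite` returns true, storing the new hash with the document.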

Handling Rate Limits and Execution Quotas

Both Google Workspace and Google Cloud have strict API quotas designed to protect their infrastructure. When syncing large financial ledgers, you can easily hit these ceilings, resulting in 429 Too Many Requests errors and stalled dashboards.

To build a resilient pipeline, you must architect your sync logic to respect these boundaries:

- Google Sheets API Read Limits: The Sheets API enforces a quota (typically 300 read requests per minute per project). If you are using Apps Script triggers (onEdit or onChange) to power real-time updates, a user making rapid, successive edits can exhaust this quota. Implement a debouncing mechanism or a message queue (like Google Cloud Pub/Sub) to aggregate rapid sheet changes into a single, scheduled read operation.

- Firestore Write Limits and Chunking: Firestore is incredibly scalable, but Batched Writes have long been documented with a limit of 500 operations per batch (check the current Firestore limits for your SDK version). If your financial sheet contains 2,500 rows, you must programmatically chunk your payload into arrays of 500 records and commit them sequentially.

- Exponential Backoff: Network anomalies and temporary quota exhaustion are inevitable in distributed systems. Wrap your Google Sheets API calls and Firestore commits in an exponential backoff retry block. If a request fails due to rate limiting, the script should pause for a short duration (e.g., 1 second), retry, and increase the wait time after each subsequent failure.
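The chunking and backoff rules above can be sketched together. This is a minimal, synchronous version written to fit Apps Script's blocking execution model: `sleep` is injected so that you can pass `Utilities.sleep` in Apps Script, and `commitBatch` is a hypothetical callback standing in for whatever performs the actual Firestore commit. The sketch retries on every error; real code would likely retry only on rate-limit and transient server errors.

```javascript
// Split an array into consecutive slices of at most `size` items.
function chunk(items, size) {
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError("size must be a positive integer");
  }
  const chunks = [];
  for (let i = 0; i < items.length; i += size) {
    chunks.push(items.slice(i, i + size));
  }
  return chunks;
}

// Run `operation`, retrying with exponentially growing waits on failure.
// `sleep(ms)` must block for the given time (e.g. Utilities.sleep in Apps Script).
function withBackoff(operation, sleep, maxAttempts = 5, baseDelayMs = 1000) {
  let lastError;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return operation();
    } catch (error) {
      lastError = error;
      if (attempt < maxAttempts - 1) {
        // Waits of roughly 1 s, 2 s, 4 s, ... plus up to 250 ms of random jitter.
        sleep(baseDelayMs * 2 ** attempt + Math.floor(Math.random() * 250));
      }
    }
  }
  throw lastError;
}

// Commit records in batches of 500, retrying each batch independently.
// `commitBatch` is a placeholder for the real Firestore batch commit.
function commitInChunks(records, commitBatch, sleep) {
  let committed = 0;
  for (const batch of chunk(records, 500)) {
    withBackoff(() => commitBatch(batch), sleep);
    committed += batch.length;
  }
  return committed;
}
```

Note that chunking trades away atomicity across the whole sheet: each batch of 500 commits atomically, but a failure on a later batch leaves earlier batches written, so the retry logic and idempotent document IDs matter.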

Securing Your Financial Data Pipeline

Financial data is highly sensitive. Moving it from a restricted Google Sheet to a NoSQL database requires a watertight security posture to prevent unauthorized access, both at the pipeline level and the dashboard level.

- Principle of Least Privilege (IAM): If you are using a Google Cloud Function or Cloud Run service to broker the sync, assign it a dedicated Service Account. Do not use the default Compute Engine service account. Grant this specific Service Account only the Cloud Datastore User role for Firestore and restrict its Google Workspace scopes to https://www.googleapis.com/auth/spreadsheets.readonly. It should only have the permissions necessary to read the specific financial sheet and write to the specific Firestore collection.

- Protecting API Credentials: Never hardcode API keys, spreadsheet IDs, or service account JSON keys in your synchronization scripts. Leverage Google Cloud Secret Manager to store these sensitive values, injecting them into your execution environment only at runtime.

- Firestore Security Rules: Securing the pipeline is only half the battle; you must also secure the destination. Because your real-time dashboards will likely query Firestore directly from the client side, you must implement robust Firestore Security Rules. Ensure that read access to the financial collections is restricted to authenticated users (via Firebase Authentication) who possess the correct custom claims or role-based access control (RBAC) attributes. Explicitly deny all client-side write access, as Firestore should only ever be mutated by your secure, backend synchronization pipeline.

Scaling Your Architecture for Future Growth

As your organization’s financial operations expand, the volume and velocity of your data will inevitably increase. What begins as a straightforward Apps Script pushing a few hundred rows from Google Sheets to Firestore can quickly evolve into a mission-critical pipeline handling thousands of daily transactions across multiple departmental ledgers. To ensure your “Data as Code” architecture remains resilient, you must transition from a simple point-to-point sync to a decoupled, enterprise-grade architecture.

By introducing intermediary services like Google Cloud Pub/Sub and Cloud Functions, you can buffer incoming data bursts, bypass Apps Script execution timeouts, and handle Firestore batch writes asynchronously. This event-driven approach ensures that even if a massive end-of-month financial reconciliation is triggered in Workspace, your downstream real-time dashboards remain highly available and perfectly synchronized.

Monitoring Sync Performance and Latency

You cannot confidently scale a system without deep observability. When financial data is the lifeblood of your dashboard, delayed or dropped syncs are unacceptable. To maintain a robust pipeline, you need to implement comprehensive monitoring using Google Cloud’s Operations Suite (formerly Stackdriver).

First, focus on Cloud Logging. Ensure that your sync scripts—whether running in Apps Script or Cloud Functions—are emitting structured logs. Track the exact payload sizes, the number of documents written, and the execution duration of each sync event.

Next, leverage Cloud Monitoring to keep a pulse on system latency and throughput. Key metrics to track include:

- Execution Time: Monitor your serverless functions to ensure they aren’t creeping toward their maximum timeout limits (e.g., the 6-minute limit for Apps Script).

- Firestore Write Latency: Keep an eye out for “hotspotting”, a scenario where sequentially increasing document IDs (like timestamps) cause performance bottlenecks on a single Firestore partition.

- Error Rates and Retries: Set up custom log-based metrics to detect API quota limits (like the Google Sheets API read limits) or failed Firestore batch commits.

Tie these metrics together by configuring Alerting Policies. If the sync latency exceeds your defined Service Level Objective (SLO) or if a dead-letter queue (DLQ) starts filling up with failed sync payloads, your data engineering team should receive an immediate notification via Google Chat, Slack, or PagerDuty.
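As a rough sketch of the structured logging described in this section, the helper below builds one JSON log entry per sync run. It assumes the common Cloud Logging convention that a JSON line written to standard output by a Cloud Function or Cloud Run service is parsed into a structured entry, with `severity` and `message` treated as special fields. The remaining field names and the `buildSyncLogEntry` helper are invented for this example.

```javascript
// Build one structured log entry summarizing a sync run.
// `startedAt` and `finishedAt` are millisecond timestamps (e.g. from Date.now()).
function buildSyncLogEntry({ documentsWritten, payloadBytes, startedAt, finishedAt, errors = [] }) {
  const durationMs = finishedAt - startedAt;
  return {
    severity: errors.length > 0 ? "ERROR" : "INFO",
    message: `sync finished: ${documentsWritten} documents in ${durationMs} ms`,
    documentsWritten,
    payloadBytes,
    durationMs,
    errorCount: errors.length,
  };
}

// Example usage around a sync run (the sync itself is elided here).
const startedAt = Date.now();
const entry = buildSyncLogEntry({
  documentsWritten: 0,
  payloadBytes: 0,
  startedAt,
  finishedAt: Date.now(),
});
console.log(JSON.stringify(entry));
```

Numeric fields such as `durationMs` and `errorCount` can then back log-based metrics, which in turn feed the alerting policies.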

Expanding Your Web Dashboard Capabilities

Once your financial data is reliably flowing into Firestore at scale, the potential for your web dashboards is virtually limitless. Firestore’s native real-time synchronization (onSnapshot listeners) already provides a live view of your financial health, but you can elevate this by integrating additional Google Cloud capabilities.

- Historical Analytics with BigQuery: Firestore is optimized for fast, real-time document retrieval, but it is not designed for complex, multi-year financial aggregations. By deploying the official “Stream Firestore to BigQuery” Firebase Extension, you can automatically mirror your real-time financial data into a data warehouse. This allows your dashboard to serve real-time metrics from Firestore while querying BigQuery for deep historical trends and predictive analytics.

- Granular Security and Access Control: Financial data requires strict governance. Expand your dashboard’s security posture by implementing Firebase Authentication paired with advanced Firestore Security Rules. You can restrict data access down to the document or field level based on a user’s custom claims (e.g., ensuring regional managers only see their specific territorial sheets). Additionally, implementing Firebase App Check will protect your backend from unauthorized API abuse.

- Edge Caching for Global Teams: If your dashboard is being accessed by a globally distributed executive team, consider placing Firebase Hosting and Cloud CDN in front of your static assets, and utilize Firestore’s offline persistence and local caching to minimize database reads and reduce latency for end-users.

Book a GDE Discovery Call with Vo Tu Duc

Designing a real-time, scalable data pipeline between Google Workspace and Google Cloud requires a deep understanding of both ecosystems. If you are looking to implement a “Data as Code” architecture, optimize your current Firestore sync performance, or design a custom enterprise dashboard, expert guidance can save you months of trial and error.

Vo Tu Duc, a recognized Google Developer Expert (GDE) in Google Cloud, specializes in architecting high-performance integrations between Google Workspace and GCP. Whether you need an architectural review of your current syncing mechanism, advice on mitigating API quota limits, or a strategic roadmap for scaling your cloud infrastructure, a discovery call is the perfect next step.

Book a GDE Discovery Call with Vo Tu Duc today to discuss your specific use case, uncover optimization opportunities, and ensure your financial data pipelines are built for the future.
