Automating Course Feedback Analysis with Vertex AI and Looker Studio

Student feedback is a goldmine of insights, but manually processing thousands of free-text responses quickly becomes a logistical nightmare. Discover how to overcome this massive data engineering challenge and efficiently scale your survey analysis to improve educational outcomes.

The Challenge of Scaling Student Feedback Analysis

Every semester, educational institutions sit on a goldmine of unstructured data: student feedback. Whether collected through Google Forms, learning management systems, or custom survey tools, these end-of-module evaluations and mid-semester check-ins hold the key to improving curriculum quality, instructor effectiveness, and overall student retention. However, as enrollment numbers grow and digital learning platforms expand, the sheer volume of this data transforms from a valuable asset into a logistical nightmare. Scaling the analysis of thousands—or tens of thousands—of qualitative, free-text responses is a massive data engineering challenge that traditional administrative workflows simply were not built to handle.

The Bottleneck of Manual Course Survey Processing

In a typical university setup, course survey processing relies heavily on manual intervention. Data is often collected seamlessly via Google Workspace tools like Google Forms, but it inevitably ends up dumped into a static Google Sheet or exported as a CSV file. From there, faculty or administrative staff are tasked with reading through hundreds of rows of unstructured text to gauge student sentiment, identify recurring themes, and flag critical issues.

This manual pipeline introduces severe operational bottlenecks:

-

High Latency: Processing end-of-term surveys manually can take weeks. By the time actionable insights are compiled and distributed, the semester has concluded, and the students have moved on.

-

Subjectivity and Fatigue: Human analysis is inherently subjective. Two different administrators might interpret the same piece of constructive criticism in entirely different ways, and the accuracy of manual tagging degrades as reviewer fatigue sets in.

-

Data Silos: The manual approach traps valuable data in isolated spreadsheets. Performing cross-course comparisons, identifying departmental trends, or conducting historical sentiment analysis becomes nearly impossible without tedious, error-prone data wrangling.

When your analytics engine is limited by human reading speed, scaling up requires hiring more staff—a costly, inefficient proposition that fails to leverage the true potential of the data.

Why University Admins Need Real Time Data Visibility

The modern educational landscape moves fast, and relying on post-mortem analytics is no longer sufficient. University administrators, department heads, and instructional designers require real-time data visibility to make proactive, rather than reactive, decisions.

When feedback analysis is instantaneous, the benefits cascade throughout the entire institution. Administrators can immediately identify “at-risk” courses where students are collectively struggling with the syllabus pacing or material, allowing for mid-flight pedagogical corrections before midterms or finals. Real-time visibility also empowers faculty to adapt their teaching methodologies on the fly, addressing specific pain points highlighted in weekly or bi-weekly pulse surveys rather than waiting for the end of the year.

Furthermore, having a centralized, live view of student sentiment across all departments enables institutional leadership to allocate resources dynamically. Whether that means deploying additional teaching assistants to a struggling cohort, adjusting cloud lab quotas for a computer science track, or upgrading facilities consistently flagged in survey responses, speed is the critical factor. To achieve this level of operational agility, institutions must bridge the gap between raw, unstructured survey data and live executive dashboards—a gap that manual processing can never close.

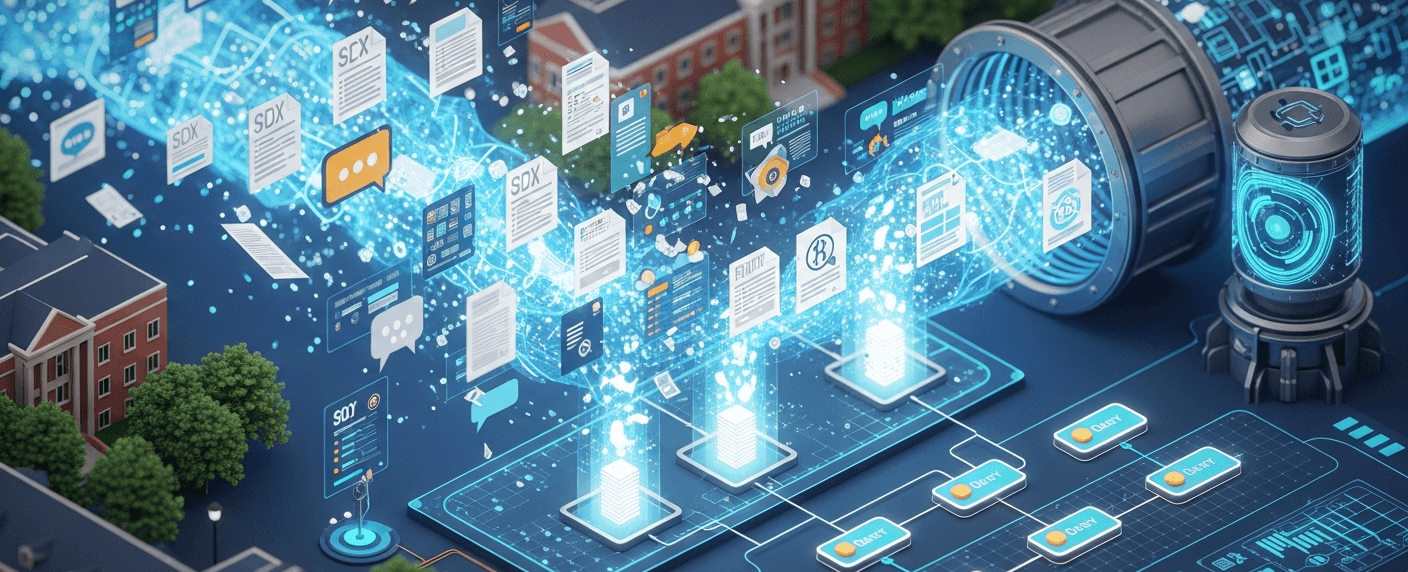

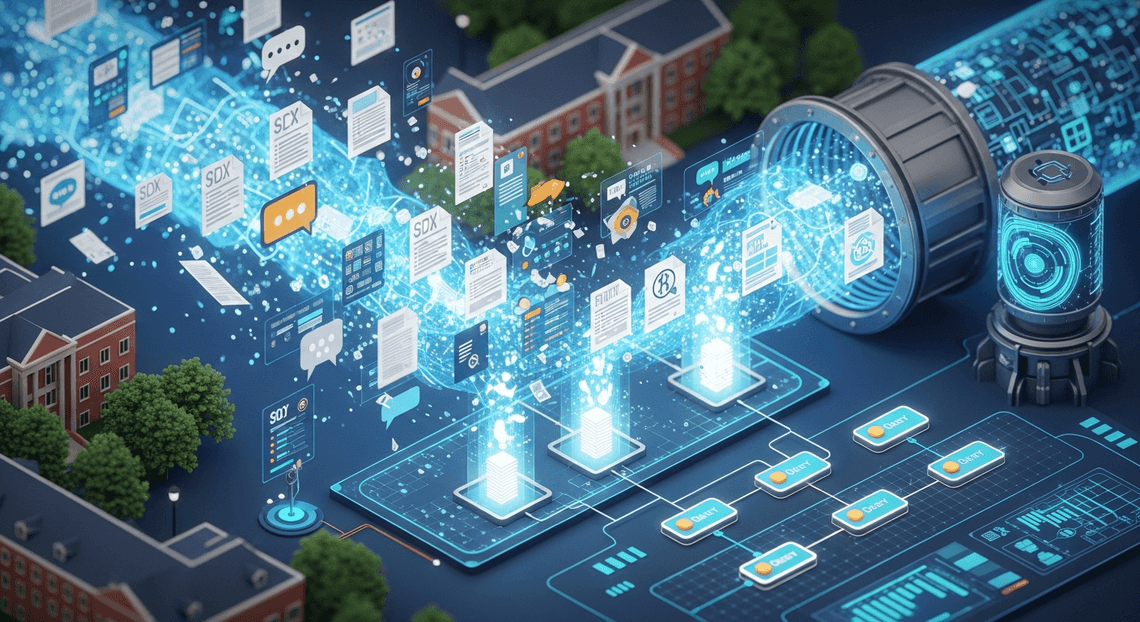

Designing an Automated Insight Reporting Architecture

To transform raw, unstructured student feedback into actionable intelligence, we need an architecture that is resilient, scalable, and entirely hands-off once deployed. By leveraging the native integration between Google Workspace and Google Cloud, we can build an event-driven system that processes qualitative data as soon as it is submitted. The goal is to eliminate the manual toil of reading through hundreds of survey responses and instead rely on an automated reporting pipeline that surfaces insights in near real time.

Connecting Google Sheets, Vertex AI, and Looker Studio

The foundation of this architecture relies on integrating three distinct platforms, each serving a specialized role: ingestion, processing, and presentation.

-

Google Sheets (The Ingestion & Storage Layer): Acting as the primary database for our lightweight architecture, Google Sheets natively captures responses from Google Forms. It provides an accessible, structured environment to hold both the raw input and the processed AI output.

-

Vertex AI (The Intelligence Layer): This is where the heavy lifting happens. By tapping into Vertex AI, specifically Google’s Gemini foundation models, we can perform complex natural language processing tasks such as sentiment analysis, topic extraction, and summarization on the raw feedback.

-

Looker Studio (The Presentation Layer): Looker Studio serves as the visualization engine. Using its native Google Sheets connector, it reads the structured data and re-fetches it according to the data source’s data freshness setting, keeping dynamic, interactive dashboards current.

The “glue” binding these three components together is Google Apps Script. As a serverless JavaScript runtime built into Google Workspace, Apps Script is well positioned to act as the orchestrator. It can listen for new data in Sheets, authenticate with Google Cloud using the executing user’s OAuth token (ScriptApp.getOAuthToken()) or a service account, construct the payload, make REST API calls to Vertex AI, and write the resulting insights back into the spreadsheet.

Mapping the Data Pipeline from Collection to Visualization

To understand how this architecture operates in production, let’s walk through the end-to-end data pipeline. This event-driven flow ensures that within seconds of a student hitting “Submit,” their feedback is analyzed and written back to the sheet, where the reporting dashboard picks it up on its next data refresh.

-

Data Collection (Event Trigger): The pipeline initiates when a student completes a course evaluation via Google Forms. The form automatically appends a new row to the linked Google Sheet containing the timestamp, student metadata, and their open-ended feedback.

-

Orchestration (Apps Script Execution): An installable onFormSubmit trigger configured in Google Apps Script detects the new row. The script extracts the raw text from the feedback column and formats it into a JSON payload, combining it with a carefully engineered prompt (e.g., “Analyze the following course feedback. Return a JSON object containing a sentiment score between -1.0 and 1.0, a sentiment label, and up to three topics.”).

-

AI Inference (Vertex AI Processing): The Apps Script makes an authenticated POST request to the Vertex AI endpoint. The Gemini model processes the prompt, evaluates the nuances of the student’s text, and returns a structured JSON response containing the requested analytical data points.

-

Data Structuring (Write-Back): Upon receiving the response from Vertex AI, the script parses the JSON and writes the extracted insights (Sentiment Score, Sentiment Label, and Topics such as “Curriculum,” “Pacing,” or “Instructor”) back to the spreadsheet, either into empty columns beside the original response or, as in the implementation below, as a new row in a dedicated results tab.

-

Visualization (Looker Studio Refresh): Looker Studio is connected directly to this Google Sheet. Each time the data source refreshes (on its data freshness schedule, which for Google Sheets can be as often as every 15 minutes, or when an editor refreshes the report manually), the new rows appear in the dashboard’s time-series charts, sentiment gauges, and thematic heatmaps.

By mapping the pipeline this way, we create a closed-loop system. Unstructured human language enters at the beginning, and highly structured, quantifiable business intelligence emerges at the end, ready to drive curriculum improvements.
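The collection-to-write-back steps above can be condensed into one orchestration function. This is a minimal sketch under stated assumptions, not the article’s production code: processSubmission is a hypothetical name, the form is assumed to have questions titled “Timestamp” and “Course Feedback”, and the AI call and sheet write are passed in as functions so the flow can be exercised with stubs (for example in Node.js) before wiring in UrlFetchApp and SpreadsheetApp.

```javascript
// Hypothetical orchestration sketch: one form submission in, one processed row out.
// `analyze` and `write` are injected so the flow can be tested with stubs.
function processSubmission(namedValues, analyze, write) {
  // e.namedValues maps each question title to an array of answers
  const first = (key) => (namedValues[key] && namedValues[key][0]) || "";

  const feedback = first("Course Feedback").trim();
  if (!feedback) {
    return { status: "skipped" }; // nothing to analyze
  }

  const timestamp = first("Timestamp") || new Date().toISOString();
  const insights = analyze(feedback);   // AI inference step
  write(timestamp, feedback, insights); // write-back step
  return { status: "processed", insights };
}
```

Inside Apps Script, a form-submit handler could call processSubmission(e.namedValues, analyzeWithVertexAI, logProcessedData), reusing the two functions defined later in this article. Injecting the dependencies keeps the control flow testable without a live Vertex AI endpoint.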

Building the Sentiment Analysis Pipeline

To transform qualitative course feedback into actionable, quantitative insights, we need a robust pipeline that bridges Google Workspace and Google Cloud. This pipeline acts as the central nervous system of our architecture: it automatically detects new survey responses, evaluates the nuanced sentiment and core topics of the text, and formats the output for seamless visualization.

Parsing Raw Survey Data Using Google Apps Script

When students submit course feedback via Google Forms, the raw data is naturally aggregated into a Google Sheet. To automate the ingestion of this data, we use Google Apps Script (in particular its installable triggers and the SpreadsheetApp service) as our lightweight, serverless extraction layer.

Instead of running batch jobs on a schedule, the most efficient approach is to use an onFormSubmit installable trigger. This event-driven architecture ensures that our pipeline processes data in near real-time. When a trigger fires, the script captures the event object, extracting the newly appended row.
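Installable triggers can be created from the Triggers page of the Apps Script editor or in code. Below is a minimal sketch of the code route, assuming the script is bound to the response spreadsheet and the handler is the onFeedbackSubmit function shown next; installFeedbackTrigger is a hypothetical name. It uses ScriptApp.newTrigger(...).forSpreadsheet(...).onFormSubmit().create() and checks the existing project triggers first, since a duplicate trigger would analyze every submission twice.

```javascript
// Hypothetical setup helper: installs the onFormSubmit trigger once.
// Run manually from the Apps Script editor; repeated runs are a no-op.
function installFeedbackTrigger() {
  const handler = "onFeedbackSubmit";

  // Skip creation if a trigger for this handler already exists
  const alreadyInstalled = ScriptApp.getProjectTriggers()
    .some((trigger) => trigger.getHandlerFunction() === handler);
  if (alreadyInstalled) {
    return false;
  }

  // Fire the handler whenever the bound spreadsheet receives a form response
  ScriptApp.newTrigger(handler)
    .forSpreadsheet(SpreadsheetApp.getActive())
    .onFormSubmit()
    .create();
  return true;
}
```

Run it once from the editor and approve the authorization prompt; later runs return false and leave the existing trigger untouched.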

Here is how you can isolate and parse the raw text data:

function onFeedbackSubmit(e) {
  // Extract the submitted values from the event object
  const responses = e.namedValues;

  // Assuming the form has a question titled "Course Feedback"
  const rawFeedback = responses["Course Feedback"] ? responses["Course Feedback"][0] : "";
  const timestamp = responses["Timestamp"] ? responses["Timestamp"][0] : new Date().toISOString();

  if (!rawFeedback || rawFeedback.trim() === "") {
    console.warn("Empty feedback received. Skipping pipeline execution.");
    return;
  }

  // Pass the parsed text to the AI processing function
  const aiInsights = analyzeWithVertexAI(rawFeedback);

  // Route to the structuring function
  logProcessedData(timestamp, rawFeedback, aiInsights);
}

By utilizing e.namedValues, we decouple our script from specific column indices, making the pipeline resilient to changes in the Google Form structure (such as adding new questions later), as long as the title of the “Course Feedback” question itself is not renamed.
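Because e.namedValues maps each question title to an array of answer strings, lookups still depend on the exact title text. As a minimal sketch (getAnswer is a hypothetical helper, not part of Apps Script), a lookup that ignores case and stray whitespace in titles guards against small edits made in the form editor:

```javascript
// Hypothetical helper: find an answer by question title, ignoring case and
// surrounding whitespace. Returns `fallback` if the question is missing.
function getAnswer(namedValues, questionTitle, fallback = "") {
  const wanted = questionTitle.trim().toLowerCase();
  const key = Object.keys(namedValues).find(
    (k) => k.trim().toLowerCase() === wanted
  );
  if (key === undefined || !namedValues[key].length) {
    return fallback;
  }
  // Each value is an array; the first entry holds the submitted answer
  return namedValues[key][0];
}
```

In onFeedbackSubmit, const rawFeedback = getAnswer(e.namedValues, "Course Feedback"); could then replace the direct property access.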

Applying Vertex AI for Sentiment and Topic Extraction

With the raw text parsed, the next step is to pass it to Google Cloud’s Vertex AI. For this task, Vertex AI’s Gemini models are highly effective, as they excel at natural language understanding, zero-shot classification, and returning strictly formatted JSON.

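To call Vertex AI from Apps Script, we use the UrlFetchApp service together with a Google Cloud project that has the Vertex AI API enabled. Link your Apps Script project to that standard GCP project, and add the https://www.googleapis.com/auth/cloud-platform scope to the oauthScopes list in appsscript.json so that the token returned by ScriptApp.getOAuthToken() is accepted by Vertex AI. Once you declare oauthScopes explicitly, Apps Script stops inferring scopes, so list every scope the script needs (including spreadsheets and script.external_request). The account that authorizes the trigger also needs permission to call Vertex AI in that project, for example through the Vertex AI User role.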

The key to reliable extraction is prompt engineering. We instruct the model to output only a JSON object containing a sentiment score (from -1.0 to 1.0), a sentiment label (Positive, Neutral, or Negative), and an array of key topics.

function analyzeWithVertexAI(feedbackText) {
  const projectId = 'YOUR_GCP_PROJECT_ID';
  const location = 'us-central1';
  // Use a Gemini model version that is currently available in your project and region
  const modelId = 'gemini-1.5-pro';
  const endpoint = `https://${location}-aiplatform.googleapis.com/v1/projects/${projectId}/locations/${location}/publishers/google/models/${modelId}:generateContent`;

  const prompt = `
Analyze the following course feedback.
Return a valid JSON object with exactly three keys:
1. "sentiment_score": A float between -1.0 (highly negative) and 1.0 (highly positive).
2. "sentiment_label": "Positive", "Neutral", or "Negative".
3. "topics": An array of 1 to 3 short strings identifying the main subjects discussed (e.g., "Pacing", "Assignments", "Instructor").
Feedback: "${feedbackText}"
`;

  const payload = {
    contents: [{ role: "user", parts: [{ text: prompt }] }],
    generationConfig: { responseMimeType: "application/json" }
  };

  const options = {
    method: 'post',
    contentType: 'application/json',
    headers: { Authorization: 'Bearer ' + ScriptApp.getOAuthToken() },
    payload: JSON.stringify(payload),
    muteHttpExceptions: true // return error responses instead of throwing
  };

  const response = UrlFetchApp.fetch(endpoint, options);

  // muteHttpExceptions suppresses exceptions, so check the status explicitly
  if (response.getResponseCode() !== 200) {
    throw new Error('Vertex AI request failed (' + response.getResponseCode() + '): ' + response.getContentText());
  }

  const responseData = JSON.parse(response.getContentText());

  // A missing candidate usually means the response was blocked or empty
  const candidate = responseData.candidates && responseData.candidates[0];
  if (!candidate || !candidate.content || !candidate.content.parts) {
    throw new Error('Vertex AI returned no content: ' + response.getContentText());
  }

  // Extract the JSON string returned by Gemini
  const rawJsonString = candidate.content.parts[0].text;
  return JSON.parse(rawJsonString);
}

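Expert Tip: Notice the use of responseMimeType: "application/json" in the generationConfig. This Vertex AI setting tells the model to return raw JSON rather than Markdown-wrapped text, so you no longer need regex parsing to strip code-fence backticks in Apps Script. It does not guarantee that the JSON matches your expected keys (and a response cut off by the output token limit can still be invalid), so keep the JSON.parse call inside error handling.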
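Vertex AI can go a step further: generationConfig also accepts a responseSchema that constrains the structure of the JSON, not just its format. The sketch below is a hypothetical variant of the payload built in analyzeWithVertexAI (buildFeedbackRequest is an illustrative name). It assumes a Gemini model version that supports controlled generation; support for individual schema keywords such as enum can vary by model, so check the Vertex AI structured-output documentation for the model you deploy.

```javascript
// Hypothetical payload builder: same request as analyzeWithVertexAI, plus a
// responseSchema describing the three keys the pipeline expects.
function buildFeedbackRequest(prompt) {
  return {
    contents: [{ role: "user", parts: [{ text: prompt }] }],
    generationConfig: {
      responseMimeType: "application/json",
      responseSchema: {
        type: "OBJECT",
        properties: {
          sentiment_score: { type: "NUMBER" },
          // Restrict the label to the three categories used in the dashboard
          sentiment_label: { type: "STRING", enum: ["Positive", "Neutral", "Negative"] },
          topics: { type: "ARRAY", items: { type: "STRING" } }
        },
        required: ["sentiment_score", "sentiment_label", "topics"]
      }
    }
  };
}
```

Passing JSON.stringify(buildFeedbackRequest(prompt)) as the payload option would replace the inline payload object; the prompt can then focus on the analysis rather than on describing the output format.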

Structuring the Processed Data for Analytics

Looker Studio thrives on flat, well-typed, tabular data. The nested JSON object returned by Vertex AI is incredibly useful, but it cannot be natively digested by Looker Studio without proper structuring. The final stage of our pipeline flattens this AI-generated payload and writes it to a designated “Processed Data” repository—either a separate tab in our Google Sheet or directly into a BigQuery table.

For a Sheets-based architecture, we want to append a single row per feedback submission. Arrays (like our extracted topics) should be joined into a comma-separated string, which Looker Studio can later parse using calculated fields or regex extractors.

function logProcessedData(timestamp, originalText, aiInsights) {
  const ss = SpreadsheetApp.getActiveSpreadsheet();

  // Ensure we are writing to a dedicated sheet for Looker Studio ingestion
  let processedSheet = ss.getSheetByName("Processed_Insights");
  if (!processedSheet) {
    processedSheet = ss.insertSheet("Processed_Insights");
    // Initialize headers if the sheet was just created
    processedSheet.appendRow(["Timestamp", "Original Feedback", "Sentiment Score", "Sentiment Label", "Topics"]);
  }

  // Flatten the topics array into a single string
  const topicsString = aiInsights.topics ? aiInsights.topics.join(", ") : "None";

  // Construct the flat row
  const newRow = [
    timestamp,
    originalText,
    aiInsights.sentiment_score,
    aiInsights.sentiment_label,
    topicsString
  ];

  // Append the structured data
  processedSheet.appendRow(newRow);
}

By structuring the data this way, we establish a clear schema: Timestamp serves as our time-series dimension, Sentiment Score acts as a continuous metric for calculating averages and trends, Sentiment Label provides a categorical dimension for pie charts or bar graphs, and Topics allows for word clouds or categorical filtering. This clean separation ensures that when Looker Studio connects to this sheet, the data modeling phase is virtually effortless.
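That schema only holds if every row matches it. Even with JSON mode, a response can carry a score outside the -1.0 to 1.0 range, a number encoded as a string, or an unexpected label, and stray text values in the Sentiment Score column can make Looker Studio treat it as text. A minimal sketch of a guard, where normalizeInsights is a hypothetical helper and the "Unknown" label and empty-cell fallback are assumptions:

```javascript
// Hypothetical guard: coerce the model's JSON into the column types that the
// Processed_Insights schema promises before the row is appended.
function normalizeInsights(raw) {
  const allowedLabels = ["Positive", "Neutral", "Negative"];
  const rawScore = raw ? raw.sentiment_score : undefined;
  // Accept numbers or numeric strings such as "0.4"; anything else becomes NaN
  const score = typeof rawScore === "number" ? rawScore : parseFloat(rawScore);
  const rawTopics = raw && Array.isArray(raw.topics) ? raw.topics : [];
  return {
    // Clamp to [-1, 1]; write an empty cell rather than a misleading number
    sentiment_score: Number.isFinite(score) ? Math.max(-1, Math.min(1, score)) : "",
    // Anything outside the three expected labels is marked "Unknown"
    sentiment_label: raw && allowedLabels.includes(raw.sentiment_label) ? raw.sentiment_label : "Unknown",
    // Trimmed, non-empty, at most three topics (matching the prompt)
    topics: rawTopics.map((t) => String(t).trim()).filter((t) => t.length > 0).slice(0, 3)
  };
}
```

Calling aiInsights = normalizeInsights(aiInsights); at the top of logProcessedData would keep the Processed_Insights columns consistently typed.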

Visualizing Student Insights with Looker Studio

Data is only as valuable as the decisions it empowers you to make. After using Vertex AI’s natural language processing capabilities to categorize and score raw student feedback, the next critical step is making those insights accessible. Raw JSON outputs or spreadsheet rows aren’t particularly useful to course instructors or curriculum designers. This is where Looker Studio bridges the gap, transforming the enriched data in the Processed_Insights sheet (or in BigQuery, if you chose that route) into an interactive, highly visual narrative.

By connecting Looker Studio directly to that data source, you can build a dynamic reporting layer that picks up new course evaluations and feedback forms as your Vertex AI pipeline processes them.

Creating a Centralized Feedback Dashboard

To build a truly effective feedback loop, educators and administrators need a single pane of glass. Creating a centralized feedback dashboard in Looker Studio begins with connecting a data source: the Processed_Insights sheet via the Google Sheets connector, or your BigQuery table via the BigQuery connector. Both are built-in Google connectors, so the integration requires no ETL middleware, just a few clicks to authorize the data source.

When designing the layout of your centralized dashboard, structure is everything. A well-architected Looker Studio dashboard should follow a “top-down” analytical approach:

-

Global Controls: At the very top, add drop-down lists and a date range control. This allows users to filter the entire dashboard dynamically by parameters such as Course ID, Department, Instructor Name, or Semester, provided those fields are captured in your form or joined into the sheet.

-

Executive Scorecards: Below the filters, use scorecard charts to display high-level aggregated metrics such as “Average Overall Sentiment” and “Total Reviews Processed” (or “Net Promoter Score (NPS)” if your form also asks a 0-10 recommendation question). Looker Studio’s calculated fields can translate Vertex AI’s numerical sentiment scores (-1.0 to 1.0) into an intuitive 0-100 scale with a formula such as (Sentiment Score + 1) * 50, or bucket them into categorical labels (Positive/Neutral/Negative).

-

Thematic Breakdown: Utilize pie charts or tree maps to visualize the distribution of topics extracted by Vertex AI. If the LLM categorized feedback into themes like “Course Pacing,” “Material Clarity,” or “Assignment Difficulty,” a quick visual breakdown instantly highlights what students are talking about the most.
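For teams that would rather precompute the dashboard’s 0-100 score and sentiment buckets in Apps Script than maintain Looker Studio calculated fields, here is a minimal sketch. Both function names are hypothetical, and the ±0.2 neutral band is an assumed cut-off, not a standard.

```javascript
// Hypothetical helper: linear map from [-1, 1] to [0, 100].
// -1.0 -> 0, 0.0 -> 50, 1.0 -> 100
function toHundredScale(score) {
  return Math.round((score + 1) * 50);
}

// Hypothetical helper: scores within +/- threshold of zero count as Neutral.
function toSentimentBucket(score, threshold = 0.2) {
  if (score > threshold) return "Positive";
  if (score < -threshold) return "Negative";
  return "Neutral";
}
```

Writing these as extra columns keeps every report on the same definitions; calculated fields remain the better choice when different dashboards need different thresholds.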

Tracking Key Metrics and Sentiment Trends Over Time

While a snapshot of current feedback is helpful, the real power of this pipeline is revealed through temporal analysis. Tracking key metrics and sentiment trends over time allows educational institutions to measure the direct impact of curriculum adjustments, pedagogical shifts, or even changes in course delivery formats.

To achieve this in Looker Studio, time-series charts are your best asset. By mapping the Submission Date on the X-axis and the Average Sentiment Score on the Y-axis, you can visualize the emotional trajectory of a course. For instance, if you notice a sharp dip in sentiment during week four of a semester, instructors can investigate whether a specific module or assignment was particularly frustrating for students.
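Looker Studio can group timestamps by calendar week natively, but a dip “during week four of a semester” is relative to the term start date. One way to get that dimension is to compute it before charting. Here is a minimal sketch, assuming rows of [timestamp, sentimentScore] read from the Processed_Insights sheet and a termStart date you supply (averageSentimentByWeek is a hypothetical name):

```javascript
// Hypothetical helper: average sentiment per week of term, where week 1
// begins at termStart. Rows are [timestamp, sentimentScore] pairs.
function averageSentimentByWeek(rows, termStart) {
  const MS_PER_WEEK = 7 * 24 * 60 * 60 * 1000;
  const buckets = new Map(); // week number -> { sum, count }

  for (const [timestamp, score] of rows) {
    if (typeof score !== "number") continue; // skip blank or invalid scores
    const week = Math.floor((new Date(timestamp) - termStart) / MS_PER_WEEK) + 1;
    const bucket = buckets.get(week) || { sum: 0, count: 0 };
    bucket.sum += score;
    bucket.count += 1;
    buckets.set(week, bucket);
  }

  // Sorted by week so the result plots directly as a time series
  return [...buckets.entries()]
    .sort((a, b) => a[0] - b[0])
    .map(([week, { sum, count }]) => ({ week, average: sum / count, count }));
}
```

Because it works on millisecond differences, week boundaries can shift by an hour across daylight-saving changes. For per-course charts, writing a week-of-term column into the sheet and letting Looker Studio do the averaging is usually simpler.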

You can further enhance these visualizations by utilizing Looker Studio’s advanced charting features:

-

Stacked Bar Charts for Sentiment by Topic: Create a stacked bar chart with the weeks of the course on the X-axis and Sentiment Label as the breakdown dimension, color-coded green for Positive and red for Negative. Pair it with a filter on topic (e.g., “Lectures,” “Labs”) so the chart reveals not just when students were frustrated, but what they were frustrated about.

-

Scatter Plots for Correlation: If your survey also collects a numeric “Course Difficulty Rating,” plot it against “Overall Sentiment” to see whether harder courses consistently yield lower sentiment, or whether there is a sweet spot of challenging yet rewarding coursework.

-

Word Clouds and Table Heatmaps: For the actual text data, use a table with a heatmap applied to the sentiment column. This allows instructors to read the most critical (or most glowing) raw feedback directly within the dashboard, providing qualitative context to the quantitative trends.
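Because logProcessedData stores topics as one comma-separated string, a row tagged “Pacing, Labs” cannot directly serve as a per-topic breakdown dimension. One option is to reshape the data into one row per topic in a separate tab. Here is a minimal sketch, assuming input rows in the Processed_Insights column order (Timestamp, Original Feedback, Sentiment Score, Sentiment Label, Topics); explodeTopics is a hypothetical name:

```javascript
// Hypothetical reshaping helper: one output row per (submission, topic).
// Output columns: Timestamp, Topic, Sentiment Score, Sentiment Label.
function explodeTopics(rows) {
  const out = [];
  for (const [timestamp, , score, label, topics] of rows) {
    const list = String(topics || "")
      .split(",")
      .map((t) => t.trim())
      .filter((t) => t && t !== "None"); // drop blanks and the "None" placeholder
    for (const topic of list) {
      out.push([timestamp, topic, score, label]);
    }
  }
  return out;
}
```

In Apps Script, the input could come from sheet.getDataRange().getValues().slice(1) (skipping the header row), and the output could be written with setValues to a separate tab, for example a hypothetical Topic_Long sheet that Looker Studio uses as a second data source.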

By visualizing these Vertex AI-generated metrics dynamically, you transition from a reactive model—where feedback is only reviewed at the end of a semester—to a proactive, agile educational environment where student needs can be addressed in near real-time.

Transforming University Operations with Data

Higher education institutions generate massive amounts of data every semester, particularly through student feedback and course evaluations. Traditionally, analyzing this qualitative data has been a manual, time-consuming process, often resulting in delayed insights that arrive too late to benefit the current student cohort. By leveraging modern cloud architecture—specifically Google Cloud’s Vertex AI and Looker Studio—universities can fundamentally transform their operational models.

Instead of letting end-of-term surveys gather digital dust in isolated spreadsheets, educational leaders can automate the ingestion, processing, and visualization of student sentiment. This shift from reactive to proactive data management empowers universities to optimize resource allocation, enhance student retention, and streamline administrative workflows. When feedback is processed at scale using advanced Natural Language Processing (NLP) and machine learning models, university operations become agile, data-driven, and highly responsive to the evolving needs of the student body.

Driving Curriculum Improvements Through Analytics

The true power of automated feedback analysis lies in its ability to directly influence the quality of education. When Vertex AI parses thousands of open-ended student comments, it goes far beyond calculating a generic positive or negative sentiment score; it extracts nuanced entities, key phrases, and recurring themes. For example, a well-configured Looker Studio dashboard can highlight specific modules where students consistently struggle with pacing, or flag a recurring demand for more hands-on lab exercises in a particular engineering course.
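Flagging modules where students “consistently struggle” needs a concrete rule. One simple, assumed rule: flag a topic when its average sentiment is at or below a threshold and it has enough mentions to be more than noise. Here is a minimal sketch, where flagLowSentimentTopics is a hypothetical name and the -0.2 threshold and five-mention minimum are placeholders to tune, not recommendations:

```javascript
// Hypothetical helper: flag topics with low average sentiment.
// Input is a list of [topic, score] pairs, one per topic mention.
function flagLowSentimentTopics(pairs, { maxAverage = -0.2, minCount = 5 } = {}) {
  const stats = new Map(); // topic -> { sum, count }
  for (const [topic, score] of pairs) {
    if (typeof score !== "number") continue; // ignore blank scores
    const s = stats.get(topic) || { sum: 0, count: 0 };
    s.sum += score;
    s.count += 1;
    stats.set(topic, s);
  }
  return [...stats.entries()]
    .filter(([, s]) => s.count >= minCount && s.sum / s.count <= maxAverage)
    .map(([topic, s]) => ({ topic, average: s.sum / s.count, count: s.count }))
    .sort((a, b) => a.average - b.average); // worst topics first
}
```

Requiring a minimum count keeps a single harsh comment from flagging a whole module; the right minimum depends on class size.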

Educators, department heads, and instructional designers can use these granular, AI-generated insights to make targeted curriculum improvements. Rather than overhauling an entire syllabus based on anecdotal evidence or gut feeling, academic departments can pinpoint exactly which weeks, assignments, or topics require revision. This continuous, analytics-driven feedback loop ensures that course materials remain relevant, engaging, and aligned with student learning objectives, ultimately elevating the academic standard and reputation of the institution.

Book a GDE Discovery Call with Vo Tu Duc

Implementing enterprise-grade AI and data analytics solutions requires strategic planning and deep technical expertise. If your institution is ready to modernize its feedback systems, break down data silos, and harness the full potential of Google Cloud and Google Workspace, it is time to consult with an industry expert.

Vo Tu Duc, a recognized Google Developer Expert (GDE), is available to help you navigate this digital transformation. Whether your engineering team needs guidance on architecting a scalable Vertex AI pipeline, integrating complex data sources, or designing high-impact Looker Studio dashboards, a discovery call with Duc can provide the clarity and technical direction your project needs. Book a GDE Discovery Call with Vo Tu Duc today to discuss your specific operational challenges, evaluate your current cloud infrastructure, and map out a tailored engineering strategy that drives measurable educational impact.

