Omnichannel Product Feed Automation With Google Apps Script

Selling across multiple platforms is essential for e-commerce growth, but keeping inventory and pricing perfectly synced is a massive distributed systems challenge. Discover how to master omnichannel synchronization and prevent stale data from eroding your bottom line.

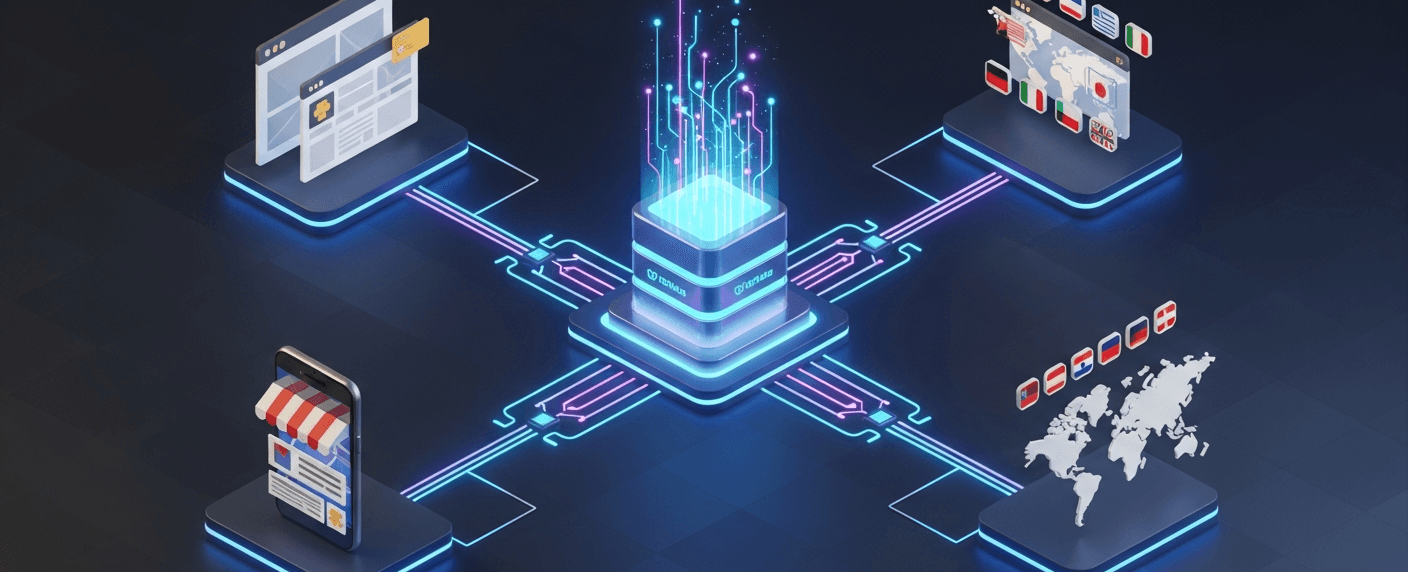

Solving Omnichannel Synchronization Challenges

In today’s hyper-connected retail landscape, merchants rarely rely on a single storefront. A modern e-commerce architecture might simultaneously push product data to a primary web store, Google Merchant Center, Amazon, Meta Commerce Manager, and various affiliate networks. While expanding reach is a proven strategy for revenue growth, it introduces a massive data orchestration problem: omnichannel synchronization.

Keeping pricing, inventory levels, and product metadata perfectly aligned across disparate APIs, data formats, and platforms is fundamentally a complex distributed systems challenge. When these systems fall out of sync—even by just a few minutes—the friction cascades from the underlying database directly to the end consumer.

The Impact of Stale Inventory Data on E-commerce

Data latency in an omnichannel environment is more than just a technical hiccup; it directly erodes the bottom line. When product feeds rely on slow, batch-processed updates (such as a once-a-day manual CSV export), the resulting stale inventory data creates several critical failure points:

- Overselling and Fulfillment Failures: If a high-demand item sells out on Amazon, but the primary storefront and Google Shopping feeds aren’t updated immediately, customers will continue to place orders. This leads to forced cancellations, negative customer reviews, and severe algorithmic penalties from marketplace platforms.

- Wasted Ad Spend: Routing paid traffic to out-of-stock product pages is a rapid way to burn through a marketing budget. Advertising platforms require near real-time accuracy; failing to provide it not only wastes money on dead clicks but can also result in automated account suspensions for policy violations.

- Missed Revenue Opportunities (Underselling): Conversely, when new stock is ingested into the warehouse management system, delays in propagating that data to the product feeds mean those items sit invisible to buyers, artificially suppressing conversion rates.

- Brand Erosion: Modern consumers expect a seamless, unified experience. Discrepancies in pricing, variants, or availability across different channels destroy trust and drive buyers directly to competitors.

Why DevOps Teams Need Automated Sync Agents

Historically, managing product feeds fell into a gray area between marketing operations and IT. This often resulted in brittle, monolithic cron jobs, spaghetti code, or worse—manual spreadsheet uploads. For modern DevOps and Cloud Engineering teams, this legacy approach is highly unsustainable. Engineering resources are simply too valuable to be spent untangling hardcoded API integrations or debugging failed batch jobs every time the marketing team adds a new sales channel.

This is where the deployment of Automated Sync Agents becomes critical. By utilizing lightweight, serverless sync agents, DevOps teams can effectively decouple the core inventory database from the edge sales channels.

Integrating automated sync agents into your cloud architecture provides several distinct advantages:

- Elimination of Operational Toil: Serverless agents handle the extraction, transformation, and loading (ETL) of feed data automatically. This frees cloud engineers from babysitting legacy infrastructure and managing manual data pipelines.

- Event-Driven Agility: Instead of relying on rigid, time-based schedules, modern sync agents can be triggered by webhooks or Google Workspace events (such as an edit in a master Google Sheet). This ensures near real-time data propagation across the entire omnichannel network.

- Resilience and Error Handling: A well-architected agent doesn’t just push data; it handles the complexities of external networks. It can implement exponential backoff for API rate limits, log payload failures directly to Google Cloud Logging, and alert on-call teams via Google Chat or Slack before a marketplace feed gets suspended.

- Zero-Infrastructure Overhead: Utilizing tools like Google Apps Script as the execution environment means there are no virtual machines to provision, patch, or scale. It acts as serverless glue between Google Workspace, where merchandisers and product managers often collaborate, and external REST APIs.

By treating product feed synchronization as a legitimate cloud engineering challenge and deploying automated agents, DevOps teams can transform a fragile operational bottleneck into a robust, highly available data pipeline.

Architecture of the Apps Script Sync Agent

To achieve true omnichannel automation, you need a robust, event-driven middleware that bridges your raw product data with your external sales channels. In our serverless architecture, Google Apps Script acts as this central “Sync Agent.” Rather than relying on heavy, continuously running virtual machines, the Sync Agent leverages the native integrations within Google Workspace and Google Cloud to execute code only when necessary. This architecture is designed around three core pillars: detecting changes, validating data integrity, and pushing updates via APIs.

Utilizing SpreadsheetApp for Event Triggers

At the heart of the Sync Agent’s responsiveness is the SpreadsheetApp class, which transforms a standard Google Sheet from a static database into a dynamic, event-driven interface. When a merchandiser updates a price or a warehouse system logs a change in stock levels, the Sync Agent needs to know immediately.

We achieve this by implementing Apps Script triggers. For real-time, granular updates, we utilize the onEdit(e) simple trigger or an installable edit trigger. By capturing the event object (e), the script can intelligently determine exactly what changed without needing to parse the entire product catalog.

For example, the agent inspects e.range.getColumn() and e.range.getRow() to verify if the modified cell belongs to a critical column—such as “Price” or “Availability.” If a non-critical field (like an internal note) is changed, the script terminates early, saving execution time and quota. For larger, catalog-wide synchronizations, we complement these event-driven triggers with time-driven triggers generated via ScriptApp.newTrigger(), allowing the Sync Agent to process bulk updates during off-peak hours.
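The early-exit check described above can be sketched as follows. The column numbers (3 for Price, 4 for Availability) and the `runBulkSync` handler name are assumptions made for illustration, not part of the original design.

```javascript
// Hypothetical layout: column 3 = Price, column 4 = Availability (1-based).
const CRITICAL_COLUMNS = [3, 4];

/**
 * Returns true when an edit event touches a data row in a critical column.
 * @param {{range: {getRow: function(): number, getColumn: function(): number}}} e
 */
function isCriticalEdit(e) {
  if (!e || !e.range) return false;
  const row = e.range.getRow();
  const column = e.range.getColumn();
  if (row < 2) return false; // row 1 is assumed to hold the headers
  return CRITICAL_COLUMNS.includes(column);
}

/** Simple trigger: returns immediately for edits that do not affect the feed. */
function onEdit(e) {
  if (!isCriticalEdit(e)) return;
  console.log(`Critical edit at row ${e.range.getRow()}, column ${e.range.getColumn()}`);
}

/** Creates a daily time-driven trigger for bulk syncs around 02:00 (script time zone). */
function scheduleNightlyBulkSync() {
  ScriptApp.newTrigger('runBulkSync') // hypothetical handler name
    .timeBased()
    .everyDays(1)
    .atHour(2)
    .create();
}
```

Note that `getRow()` and `getColumn()` report the top-left cell of the edited range, so a paste that spans several columns would need a wider check than this sketch performs.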

Validating State Against the Core Inventory Database

While Google Sheets serves as an excellent operational frontend, enterprise architectures typically rely on a more robust backend—such as Google Cloud SQL, BigQuery, or an external ERP—as the ultimate source of truth. Before the Sync Agent broadcasts any changes to your omnichannel feeds, it must ensure that the data it is about to push is accurate and up-to-date.

This is where the validation layer of the architecture comes into play. When an update is triggered, the Sync Agent temporarily halts the outbound push to cross-reference the new state against the core inventory database. Using Apps Script’s native Jdbc service, the agent can securely connect directly to a Cloud SQL instance. Alternatively, if your inventory data resides in a data warehouse, the BigQuery Advanced Service can be invoked to run a rapid validation query.
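For the BigQuery route, a validation lookup might look like the sketch below. It assumes the BigQuery advanced service is enabled, and the table `inventory.products` and its columns are placeholders rather than names taken from a real schema.

```javascript
/**
 * Builds a parameterized standard-SQL query request for a single SKU.
 * The table and column names are placeholders.
 */
function buildInventoryQuery(sku) {
  return {
    query: 'SELECT stock_level, price FROM `inventory.products` WHERE sku = @sku LIMIT 1',
    useLegacySql: false,
    parameterMode: 'NAMED',
    queryParameters: [{
      name: 'sku',
      parameterType: { type: 'STRING' },
      parameterValue: { value: String(sku) }
    }]
  };
}

/** Runs the query through the BigQuery advanced service and returns one record or null. */
function fetchInventoryRecord(projectId, sku) {
  const result = BigQuery.Jobs.query(buildInventoryQuery(sku), projectId);
  if (!result.jobComplete) {
    throw new Error('Query did not complete within the default timeout');
  }
  if (!result.rows || result.rows.length === 0) return null;
  const fields = result.rows[0].f; // each row arrives as { f: [{ v: value }, ...] }
  return { stockLevel: Number(fields[0].v), price: Number(fields[1].v) };
}
```

Passing the SKU as a named query parameter, rather than concatenating it into the SQL string, keeps the lookup safe against malformed cell values.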

The agent compares the SKU’s state in the spreadsheet against the database record. If the database confirms the stock level or price change, the validation passes. If there is a discrepancy (e.g., a race condition where the database shows the item as out-of-stock despite the spreadsheet indicating otherwise), the agent rejects the push, logs the anomaly, and automatically reverts the spreadsheet cell to match the core database. This strict validation state guarantees that you never publish phantom inventory or incorrect pricing to your sales channels.
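The comparison step can be expressed as a small pure function that decides whether to push or revert. This is a minimal sketch; the field names (`price`, `stock`) and the half-cent price tolerance are illustrative assumptions.

```javascript
/**
 * Compares the spreadsheet's view of a SKU with the database record.
 * @param {{sku: string, price: number, stock: number}} sheetState
 * @param {{price: number, stock: number}|null} dbRecord
 * @returns {{ok: boolean, reason: string, revertTo: Object|null}}
 */
function reconcile(sheetState, dbRecord) {
  if (!dbRecord) {
    return { ok: false, reason: 'SKU missing from database', revertTo: null };
  }
  // Treat prices as equal when they differ by less than half a cent.
  const priceMatches = Math.abs(Number(sheetState.price) - dbRecord.price) < 0.005;
  const stockMatches = Number(sheetState.stock) === dbRecord.stock;
  if (priceMatches && stockMatches) {
    return { ok: true, reason: 'in sync', revertTo: null };
  }
  return {
    ok: false,
    reason: `mismatch (price ok: ${priceMatches}, stock ok: ${stockMatches})`,
    revertTo: { price: dbRecord.price, stock: dbRecord.stock }
  };
}
```

When `ok` is false and `revertTo` is set, the caller would write those values back to the edited row and skip the outbound push.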

Connecting the Google Content API for Shopping

Once the product data is captured and validated, the final step in the architecture is syndicating that data to the broader web. For Google-owned surfaces, this means integrating directly with the Google Merchant Center via the Google Content API for Shopping.

Because Apps Script is a first-party Google product, authenticating and connecting to the Content API is straightforward. Enabling the “Content API for Shopping” Advanced Service in the Apps Script editor exposes the ShoppingContent service object, and Apps Script handles authorization for the signed-in user, so there is no need to manage OAuth2 flows or service account keys by hand.

The Sync Agent takes the validated row data and maps it to the strict JSON schema required by Google Merchant Center. It constructs a product resource object containing mandatory attributes like offerId, title, condition, price, and availability. Depending on the volume of the update, the agent will either use ShoppingContent.Products.insert for a single, high-priority SKU update, or it will construct a batch request using ShoppingContent.Products.custombatch to update hundreds of items in a single API call. By handling the API responses and parsing any errors or warnings returned by Merchant Center, the Sync Agent ensures that your omnichannel feed remains healthy, compliant, and perfectly synchronized with your backend operations.
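A batch request of the kind described above could be assembled as in the following sketch. It assumes the Content API advanced service is enabled; the helper names `buildCustomBatch` and `pushBatch` are hypothetical.

```javascript
/**
 * Wraps product resources in the entry format used by products.custombatch.
 * @param {Object[]} products Product resources (offerId, title, price, ...).
 * @param {string} merchantId
 */
function buildCustomBatch(products, merchantId) {
  return {
    entries: products.map((product, index) => ({
      batchId: index, // echoed back so each result can be matched to its input
      merchantId: merchantId,
      method: 'insert',
      product: product
    }))
  };
}

/** Sends the batch and returns the offerIds whose entries came back with errors. */
function pushBatch(products, merchantId) {
  const response = ShoppingContent.Products.custombatch(buildCustomBatch(products, merchantId));
  return (response.entries || [])
    .filter(entry => entry.errors)
    .map(entry => products[entry.batchId].offerId);
}
```

Using the array index as `batchId` makes it easy to trace a rejected entry back to the spreadsheet row that produced it.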

Constructing the Automation Pipeline

With the foundational architecture in place, it is time to build the core execution engine of our omnichannel strategy. A robust automation pipeline ensures that any product modification—whether it is a price adjustment, a title optimization, or an inventory update—flows seamlessly and securely from your operational spreadsheet to your customer-facing channels. We will break this pipeline down into three distinct phases: detecting changes, validating data integrity against our backend, and pushing the finalized payload to Google Merchant Center.

Setting Up Google Sheets Watchers

To make our pipeline reactive, we need to monitor the Google Sheet for changes in real time. While Google Apps Script provides simple triggers like onEdit(e), simple triggers cannot call services that require authorization, which rules out the external APIs and databases this pipeline depends on. Therefore, we will programmatically set up an installable trigger.

The watcher’s job is to capture the event object (e), verify that the edit occurred in the correct worksheet and specific columns (e.g., avoiding triggers when someone just changes a header), and extract the modified row’s data.

/**
 * Run this function once to authorize and create the installable trigger.
 */
function setupInstallableWatcher() {
  const spreadsheet = SpreadsheetApp.getActiveSpreadsheet();
  ScriptApp.newTrigger('processFeedUpdate')
    .forSpreadsheet(spreadsheet)
    .onEdit()
    .create();
}

/**
 * The callback function triggered on spreadsheet edits.
 * @param {GoogleAppsScript.Events.SheetsOnEdit} e
 */
function processFeedUpdate(e) {
  if (!e || !e.range) return;
  const sheet = e.range.getSheet();
  // Only watch the 'Master_Feed' sheet
  if (sheet.getName() !== 'Master_Feed') return;
  const row = e.range.getRow();
  // Ignore header edits
  if (row < 2) return;
  // Fetch the entire row's data for processing
  const rowData = sheet.getRange(row, 1, 1, sheet.getLastColumn()).getValues()[0];
  // Map row data to a structured object (assuming predefined column order)
  const productData = {
    sku: rowData[0],
    title: rowData[1],
    price: rowData[2],
    availability: rowData[3]
  };
  // Pass to the validation logic
  validateAgainstDatabase(productData, row);
}
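One practical wrinkle with installable triggers is that every call to create() registers another trigger, so running the setup function twice would fire the callback twice per edit. A guarded variant might look like this sketch; `ensureInstallableWatcher` and `hasTriggerFor` are hypothetical helper names.

```javascript
/**
 * Returns true if any trigger in the list already calls the given handler.
 * @param {{getHandlerFunction: function(): string}[]} triggers
 * @param {string} handlerName
 */
function hasTriggerFor(triggers, handlerName) {
  return triggers.some(trigger => trigger.getHandlerFunction() === handlerName);
}

/** Creates the edit trigger only when the project does not already have one. */
function ensureInstallableWatcher() {
  const handler = 'processFeedUpdate';
  if (hasTriggerFor(ScriptApp.getProjectTriggers(), handler)) return;
  ScriptApp.newTrigger(handler)
    .forSpreadsheet(SpreadsheetApp.getActiveSpreadsheet())
    .onEdit()
    .create();
}
```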

By leveraging the event object’s range, we avoid reading the entire spreadsheet into memory, which keeps each execution comfortably within Google Apps Script’s runtime limits.
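The watcher above assumes a fixed column order. If merchandisers are likely to rearrange columns, one alternative is to resolve fields by header name, as in this sketch. The header labels (sku, title, price, availability) are assumptions about the sheet.

```javascript
/**
 * Builds a product object by looking columns up by header name.
 * @param {string[]} headers Values from row 1 of the sheet.
 * @param {Array} rowData Values from the edited row.
 */
function rowToProduct(headers, rowData) {
  const indexOf = name => {
    const i = headers.findIndex(h => String(h).trim().toLowerCase() === name);
    if (i === -1) throw new Error(`Missing required column: ${name}`);
    return i;
  };
  return {
    sku: String(rowData[indexOf('sku')]),
    title: String(rowData[indexOf('title')]),
    price: Number(rowData[indexOf('price')]),
    availability: String(rowData[indexOf('availability')])
  };
}
```

The header row costs one extra single-row read per edit, which is a small price for not breaking the feed when a column is moved.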

Writing the Database Validation Logic

In an omnichannel environment, your Google Sheet is often a staging area for marketing and merchandising teams, but it should rarely be treated as the absolute source of truth for inventory. Before we push any updates to Google Merchant Center, we must validate the spreadsheet data against our backend database (e.g., Cloud SQL) to prevent selling out-of-stock items or publishing incorrect pricing.

Google Apps Script’s Jdbc service allows us to connect directly to Google Cloud SQL. The following logic intercepts the product data, queries the database for the specific SKU, and validates the business rules.

/**
 * Validates spreadsheet product data against Cloud SQL inventory.
 * @param {Object} productData
 * @param {number} rowIndex
 */
function validateAgainstDatabase(productData, rowIndex) {
  // Placeholders: replace with your instance connection name and credentials
  const dbUrl = 'jdbc:google:mysql://YOUR_GCP_PROJECT:REGION:INSTANCE_ID/inventory_db';
  const user = 'db_user';
  const userPwd = 'db_password';
  let conn, stmt, results;
  try {
    conn = Jdbc.getCloudSqlConnection(dbUrl, user, userPwd);
    stmt = conn.prepareStatement('SELECT stock_level, minimum_price FROM products WHERE sku = ?');
    stmt.setString(1, String(productData.sku));
    results = stmt.executeQuery();
    if (!results.next()) {
      throw new Error('SKU not found in master database.');
    }
    const dbStock = results.getInt('stock_level');
    const dbMinPrice = results.getFloat('minimum_price');
    // Business Logic: Override availability if DB stock is 0
    if (dbStock <= 0) {
      productData.availability = 'out of stock';
    }
    // Business Logic: Prevent underpricing
    if (parseFloat(productData.price) < dbMinPrice) {
      throw new Error(`Price ${productData.price} is below DB minimum of ${dbMinPrice}`);
    }
    // If validation passes, proceed to API Push
    executeMerchantCenterPush(productData);
  } catch (error) {
    console.error(`Validation failed for SKU ${productData.sku}: ${error.message}`);
    // Optional: Write error back to a "Status" column (column 10 here) in the Sheet
    SpreadsheetApp.getActiveSpreadsheet().getSheetByName('Master_Feed')
      .getRange(rowIndex, 10).setValue(`ERROR: ${error.message}`);
  } finally {
    // Close JDBC resources even when validation throws
    if (results) results.close();
    if (stmt) stmt.close();
    if (conn) conn.close();
  }
}

This validation layer acts as a critical safeguard. By utilizing prepared statements (prepareStatement), we also protect our Cloud SQL instance from SQL injection attacks, maintaining strict security standards within our cloud architecture.
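In the same spirit, connection details are usually better kept out of source code. The sketch below reads them from Script Properties. The property keys (DB_URL, DB_USER, DB_PASSWORD) and the helper names are assumptions for illustration.

```javascript
/**
 * Reads Cloud SQL settings from a properties store instead of source code.
 * @param {{getProperty: function(string): (string|null)}} props
 * @returns {{url: string, user: string, password: string}}
 */
function loadDbConfig(props) {
  const read = key => {
    const value = props.getProperty(key);
    if (!value) throw new Error(`Missing script property: ${key}`);
    return value;
  };
  return { url: read('DB_URL'), user: read('DB_USER'), password: read('DB_PASSWORD') };
}

/** Opens a connection using values saved in the project's Script Properties. */
function openInventoryConnection() {
  const config = loadDbConfig(PropertiesService.getScriptProperties());
  return Jdbc.getCloudSqlConnection(config.url, config.user, config.password);
}
```

Failing fast on a missing property gives a clearer error than a JDBC connection failure with an undefined password.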

Executing the Merchant Center API Push

Once the product data is formatted and validated against our database, the final step is to synchronize it with Google Merchant Center. Instead of managing raw HTTP requests and OAuth2 flows manually, we will utilize the Content API for Shopping, which is available as an Advanced Google Service in Apps Script. (Note: You must enable the “Shopping Content API” in the Apps Script Services menu before running this code).

The Content API requires a highly specific JSON payload. We will construct this payload dynamically and use the Products.insert method to upsert the data into Merchant Center.

/**
 * Pushes validated product data to Google Merchant Center.
 * @param {Object} productData
 */
function executeMerchantCenterPush(productData) {
  const merchantId = 'YOUR_MERCHANT_CENTER_ID'; // Replace with your actual Merchant ID
  // Construct the Product resource payload
  const productResource = {
    offerId: productData.sku,
    title: productData.title,
    description: productData.description || 'Standard product description',
    link: `https://www.yourstore.com/product/${productData.sku}`,
    imageLink: `https://www.yourstore.com/images/${productData.sku}.jpg`,
    contentLanguage: 'en',
    targetCountry: 'US',
    channel: 'online',
    availability: productData.availability,
    condition: 'new',
    price: {
      value: String(productData.price),
      currency: 'USD'
    }
  };
  const maxAttempts = 3;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      // Upsert the product via the Content API
      const response = ShoppingContent.Products.insert(productResource, merchantId);
      console.log(`Successfully pushed SKU: ${response.offerId}. Product ID: ${response.id}`);
      return;
    } catch (error) {
      console.error(`Merchant Center API Push failed for SKU ${productData.sku}: ${error.message}`);
      // Retry only quota/rate-limit (429) errors, waiting 2s and then 4s between attempts
      if (!error.message.includes('quota') || attempt === maxAttempts) return;
      Utilities.sleep(Math.pow(2, attempt) * 1000);
    }
  }
}

By using the insert method, the Content API will automatically add the product if it does not exist, or update it if a product with the same channel, contentLanguage, targetCountry, and offerId (SKU) is already present in your feed. This idempotent behavior is well suited to an automated pipeline, ensuring that our Google Workspace environment remains synchronized with our live omnichannel advertising efforts.
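To make the upsert key concrete, the sketch below derives the identifier that the Content API conventionally assigns to a product, in the form channel:contentLanguage:targetCountry:offerId. The helper name `buildProductId` is hypothetical, and accounts that use feed labels may see the label in place of the country code.

```javascript
/**
 * Builds the REST id that identifies a product in the Content API.
 * Two payloads that produce the same id address the same catalog entry.
 * @param {{channel: string, contentLanguage: string, targetCountry: string, offerId: string}} product
 */
function buildProductId(product) {
  const parts = [product.channel, product.contentLanguage, product.targetCountry, product.offerId];
  if (parts.some(part => !part)) {
    throw new Error('channel, contentLanguage, targetCountry and offerId are all required');
  }
  return parts.join(':');
}
```

Changing any of the four fields, for example pushing the same SKU with a different target country, creates a separate catalog entry instead of updating the existing one.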

Optimizing for Reliability and Scale

Building a Google Apps Script that successfully syncs a few dozen products is a straightforward task. However, scaling that same script to handle thousands of SKUs, dynamic pricing updates, and real-time inventory across multiple sales channels requires a cloud engineering mindset. As your omnichannel strategy grows, your automation will inevitably collide with execution timeouts, API restrictions, and unexpected data anomalies. To transform a basic script into an enterprise-grade integration, you must architect for resilience.

Managing API Quotas and Rate Limits

When automating product feeds, you are constantly negotiating between two sets of constraints: Google Apps Script’s internal quotas and the rate limits of your external omnichannel endpoints (like Shopify, Amazon Selling Partner API, or Google Merchant Center).

Google Apps Script enforces a 6-minute execution limit per script run (a 30-minute limit has been cited for Google Workspace Enterprise accounts, but verify it against the current quotas documentation before relying on it). Simultaneously, external APIs often utilize “leaky bucket” algorithms or strict requests-per-second (RPS) limits. Hitting these limits without a mitigation strategy results in partial feed uploads and 429 Too Many Requests errors.

To ensure reliable data synchronization at scale, implement the following strategies:

- Stateful Execution and Pagination: Never assume a large product catalog will sync in a single execution. Use PropertiesService.getScriptProperties() to save your script’s state (e.g., the last processed SKU index or a pagination cursor). If the script approaches the 5-minute mark, gracefully terminate the process and set up a time-driven trigger to resume exactly where it left off.

- Batch Operations: Minimize HTTP overhead by utilizing batch endpoints whenever the target API supports them. Within Apps Script, leverage UrlFetchApp.fetchAll(requests) to issue multiple requests concurrently, drastically reducing overall execution time compared to sequential loops.

- Exponential Backoff: Network hiccups and temporary API throttling are inevitable. Wrap your API calls in a robust retry mechanism using exponential backoff. If a request fails, the script should pause (using Utilities.sleep()), retry, and progressively increase the wait time between subsequent failures before finally throwing an exception.

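// Example: Simple Exponential Backoff for API Calls
function fetchWithBackoff(url, options, maxRetries = 5) {
  // Mute HTTP exceptions so non-2xx responses can be inspected instead of thrown
  const requestOptions = Object.assign({}, options, { muteHttpExceptions: true });
  let lastError = 'no attempts made';
  for (let attempt = 1; attempt <= maxRetries; attempt++) {
    try {
      const response = UrlFetchApp.fetch(url, requestOptions);
      const code = response.getResponseCode();
      if (code >= 200 && code < 300) return response;
      lastError = `HTTP ${code}`;
    } catch (e) {
      lastError = e.message; // network-level failure (DNS, timeout, etc.)
    }
    if (attempt < maxRetries) {
      // Wait roughly 2s, 4s, 8s, etc., plus up to 1s of random jitter
      Utilities.sleep((Math.pow(2, attempt) * 1000) + Math.round(Math.random() * 1000));
    }
  }
  throw new Error(`Failed after ${maxRetries} attempts: ${lastError}`);
}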
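The stateful-execution strategy from the list above can be sketched in a similar way. The property key `FEED_SYNC_CURSOR` and the helpers `loadAllSkus` and `syncToChannel` are hypothetical, and the clock is injectable only to make the logic easy to test.

```javascript
const CURSOR_KEY = 'FEED_SYNC_CURSOR'; // hypothetical property name

/**
 * Processes items from a saved cursor until the list ends or the time budget runs out.
 * @param {Array} items Full ordered list of SKUs or rows.
 * @param {{getProperty: Function, setProperty: Function, deleteProperty: Function}} store
 * @param {function(*): void} handler Called once per item.
 * @param {number} budgetMs Time allowed for this run.
 * @param {function(): number} now Clock function, injectable for testing.
 * @returns {boolean} true when the whole list has been processed.
 */
function processWithCursor(items, store, handler, budgetMs, now = Date.now) {
  const startedAt = now();
  let index = Number(store.getProperty(CURSOR_KEY) || 0);
  while (index < items.length) {
    if (now() - startedAt >= budgetMs) {
      store.setProperty(CURSOR_KEY, String(index)); // resume here on the next run
      return false;
    }
    handler(items[index]);
    index++;
  }
  store.deleteProperty(CURSOR_KEY); // finished: the next run starts from the top
  return true;
}

/** Apps Script entry point: stops near the 5-minute mark and schedules a follow-up run. */
function resumeFeedSync() {
  const store = PropertiesService.getScriptProperties();
  const done = processWithCursor(loadAllSkus(), store, syncToChannel, 5 * 60 * 1000);
  if (!done) {
    // One-off trigger about a minute from now; clean up fired triggers periodically.
    ScriptApp.newTrigger('resumeFeedSync').timeBased().after(60 * 1000).create();
  }
}
```

Saving the cursor before returning, rather than after every item, keeps property writes to one per run and stays well clear of the Properties Service quotas.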

Implementing Error Logging and Alerting Mechanisms

In omnichannel retail, silent failures are catastrophic. If your script fails to update an out-of-stock product due to an unhandled exception, you risk overselling, customer dissatisfaction, and account penalties on platforms like Google Shopping or Amazon.

Moving beyond the basic Logger.log(), your automation needs a comprehensive observability strategy to track feed health and alert stakeholders immediately when critical syncs fail.

- Leverage Google Cloud Logging: Every Apps Script project is backed by a Google Cloud project; switching from the default project to a standard one gives you full access to its logs in the Google Cloud console. Use the console class (console.log, console.info, console.warn, console.error) instead of Logger. These logs are routed to Google Cloud Logging (formerly Stackdriver), allowing you to filter by severity, search through historical executions, and set up log-based metrics for your product feeds.

- Granular Try/Catch Blocks: Do not wrap your entire script in a single try/catch. Implement granular error handling at the item level. If a single SKU contains malformed data (e.g., a missing image URL), catch the error, log the specific product ID, skip it, and allow the rest of the feed to process successfully.

- Automated Webhook Alerting: When a critical failure occurs (such as an API authentication token expiring or a feed dropping below a certain product count threshold), your script should proactively notify the team. Integrating a simple webhook to Google Chat, Slack, or Microsoft Teams ensures that cloud engineers or e-commerce managers are alerted in real time.

// Example: Granular Error Handling and Alerting
// syncToChannel and sendSlackAlert are assumed to be defined elsewhere in the project
function processProductFeed(products) {
  let errorCount = 0;
  products.forEach(product => {
    try {
      // Attempt to sync individual product
      syncToChannel(product);
      console.info(`Successfully synced SKU: ${product.sku}`);
    } catch (error) {
      errorCount++;
      console.error(`Failed to sync SKU: ${product.sku}. Error: ${error.message}`);
    }
  });
  // Trigger an alert if any product failed to sync
  if (errorCount > 0) {
    sendSlackAlert(`⚠️ *Feed Sync Warning:* ${errorCount} products failed to sync across omnichannel endpoints. Check Google Cloud Logs for details.`);
  }
}
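The example above calls sendSlackAlert without defining it. One possible shape is sketched here, assuming an incoming-webhook URL stored in a script property named `ALERT_WEBHOOK_URL` (a hypothetical key). Slack and Google Chat incoming webhooks both accept a JSON body with a `text` field.

```javascript
/**
 * Builds the UrlFetchApp options for a plain-text webhook message.
 * @param {string} text Message to post.
 */
function buildAlertRequest(text) {
  return {
    method: 'post',
    contentType: 'application/json',
    payload: JSON.stringify({ text: text }),
    muteHttpExceptions: true // inspect the status code instead of throwing
  };
}

/** Posts the alert to the configured webhook and logs a non-2xx response. */
function sendSlackAlert(text) {
  const url = PropertiesService.getScriptProperties().getProperty('ALERT_WEBHOOK_URL');
  if (!url) throw new Error('ALERT_WEBHOOK_URL is not configured');
  const response = UrlFetchApp.fetch(url, buildAlertRequest(text));
  if (response.getResponseCode() >= 300) {
    console.error(`Alert webhook returned ${response.getResponseCode()}`);
  }
}
```

Logging a failed alert instead of throwing keeps a webhook outage from masking the sync errors that triggered the alert in the first place.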

By strictly managing your quotas and establishing a proactive logging and alerting pipeline, your Google Apps Script transforms from a fragile internal tool into a highly resilient, scalable engine capable of driving your entire omnichannel product strategy.

Next Steps for Your E-commerce Infrastructure

Automating your omnichannel product feeds with Google Apps Script is a massive leap forward in operational efficiency, but it is rarely the final destination. As your catalog grows, your sales channels multiply, and your update frequencies increase, your underlying infrastructure must evolve to keep pace. Treating your e-commerce architecture as a dynamic, scalable ecosystem is crucial for maintaining a competitive edge and ensuring high availability across all your storefronts.

Evaluating Your Current Sync Architecture

Before building new features or adding more sales channels to your automated pipeline, it is essential to audit your existing synchronization architecture. While Google Apps Script and Google Sheets provide an incredibly agile and cost-effective foundation, enterprise-level scaling often introduces new technical requirements.

To determine if your current setup is future-proof, consider the following architectural checkpoints:

- Execution Limits and Quotas: Are your Apps Script functions consistently brushing up against the 6-minute execution limit? If your SKU count has grown significantly, you might be experiencing timeout errors or hitting the daily URL Fetch Service quotas when pushing payloads to platforms like Shopify, Amazon, or Meta.

- Data Volume and Latency: Google Sheets is an excellent lightweight database, but performance degrades as you approach millions of cells. If your product feed processing is experiencing high latency, it may be time to transition your data layer to a more robust solution like Google Cloud SQL or BigQuery.

- Event-Driven vs. Schedule-Driven: If parts of your pipeline still rely on time-driven triggers (e.g., syncing every hour), ask whether your business now requires near real-time inventory updates to prevent overselling. If so, evaluate migrating toward an event-driven architecture using Google Cloud Pub/Sub and Cloud Run.

- Error Handling and Observability: How quickly do you know if a feed sync fails? A mature architecture requires comprehensive logging and alerting. Moving beyond basic Apps Script email alerts to Google Cloud Logging and Monitoring ensures you catch API payload rejections before they impact your bottom line.

If your evaluation reveals bottlenecks in any of these areas, the next logical step is to bridge your Google Workspace automation with the heavy-lifting capabilities of Google Cloud Platform (GCP).

Book a GDE Discovery Call with Vo Tu Duc

Navigating the transition from a lightweight Google Apps Script automation to a fully decoupled, enterprise-grade Google Cloud architecture can be complex. You don’t have to figure out the optimal scaling strategy through trial and error.

To ensure your e-commerce infrastructure is built on best practices, you can Book a GDE Discovery Call with Vo Tu Duc. As a recognized Google Developer Expert (GDE) in Google Cloud and Google Workspace, Vo Tu Duc specializes in designing high-performance, scalable cloud architectures tailored specifically for complex e-commerce operations.

During this discovery call, you will:

- Review Your Current Pipeline: Walk through your existing Apps Script code, API integrations, and data flows to identify immediate bottlenecks and technical debt.

- Explore GCP Modernization: Discover how to offload heavy processing to serverless solutions like Google Cloud Functions or Cloud Run, while maintaining the accessibility of Google Workspace for your non-technical team members.

- Define a Scaling Roadmap: Map out a concrete, step-by-step strategy to upgrade your omnichannel feed automation, ensuring it can handle holiday traffic spikes, massive catalog expansions, and real-time inventory syncing.

Don’t let infrastructure limitations throttle your omnichannel growth. Reach out today to schedule your consultation and transform your product feed automation into a resilient, enterprise-ready engine.
