Building a Source of Truth Blueprint Sync with AppSheet and Google Drive

Fragmented communication between the design office and the job site skyrockets the risk of field crews building from outdated blueprints. Discover why manual version control is failing project engineers and how to bridge the gap with a centralized source of truth.

The Danger of Outdated Blueprints in the Field

In the highly dynamic environment of a construction site, information is just as critical as concrete and steel. While the architectural and engineering teams in the back office are constantly iterating on designs, resolving clashes, and issuing change orders, the field teams are executing the physical work. The critical vulnerability in this lifecycle lies in the handoff. When the pipeline between the design office and the job site relies on fragmented communication, the risk of a field crew working off an outdated blueprint skyrockets. Without a centralized, synchronized source of truth, the gap between what is being designed and what is being built widens by the hour.

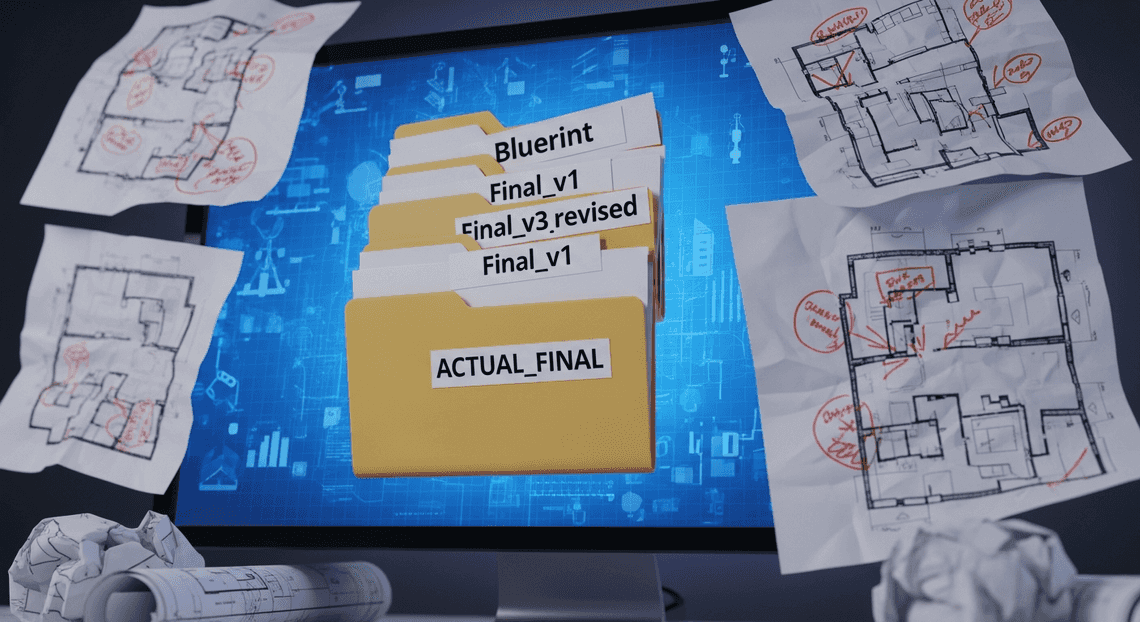

Why Manual Version Control Fails Project Engineers

Project engineers are often tasked with the heavy lifting of bridging the gap between the design team and the field contractors. Historically, this has meant relying on manual version control—a process that is fundamentally broken for modern, fast-paced projects.

Manual version control typically manifests as a chaotic web of email attachments, localized hard drive folders, and physical paper prints. This approach fails project engineers for several key reasons:

- The “Final_v3_revised” Naming Sprawl: Relying on file naming conventions to dictate the current version is a recipe for disaster. When multiple stakeholders download, annotate, and re-upload files, it becomes nearly impossible to track which document represents the actual approved design.

- Synchronization Latency: When an architect updates a floor plan, a manual process requires someone to explicitly notify the field, distribute the new file, and ensure the old file is destroyed or deleted. This latency means there is often a dangerous window of time where the field is actively building based on obsolete data.

- Offline Access Failures: Job sites are notorious for having poor or non-existent cellular and Wi-Fi coverage. If a project engineer manually downloads a blueprint to their tablet on Monday, and a critical structural revision is published on Tuesday, that engineer remains blissfully unaware while working offline on Wednesday.

- Data Silos: Manual distribution inevitably leads to fragmented data silos. The electrical subcontractor might be looking at a PDF emailed last week, while the HVAC team is looking at a printed sheet from yesterday. When trades are not aligned on the exact same version, clashes are inevitable.

The Real Cost of Construction and Architectural Errors

Working off outdated blueprints isn’t just an administrative annoyance; it translates directly into catastrophic project impacts. The construction industry operates on tight margins and strict timelines, both of which are easily obliterated by version control failures.

1. The Financial Drain of Rework

The most immediate and painful cost of an outdated blueprint is rework. If a framing crew builds a wall according to Revision A, but Revision B had moved that wall two feet to the left to accommodate a new HVAC duct, that wall must be torn down and rebuilt. Rework not only wastes expensive raw materials but also doubles the labor costs for that specific task. Industry studies commonly put rework at roughly 5% to 9% of total project costs, a massive hit to profitability on tight margins.

2. Cascading Schedule Delays

Construction is a highly sequential process. A delay in one trade cascades into delays for everyone else. If plumbing needs to be re-routed because the field team was looking at an old architectural layout, the drywallers cannot close the walls, the painters cannot paint, and the entire project schedule slips. These delays often trigger contractual penalties and liquidated damages.

3. Safety and Compliance Risks

Beyond budgets and schedules, outdated blueprints pose severe safety and compliance risks. Engineering revisions are frequently made to address structural integrity, fire safety codes, or load-bearing requirements. If the field executes an outdated design that lacks these critical safety updates, the building may fail municipal inspections, requiring invasive and costly remediation. In the worst-case scenario, it can lead to catastrophic structural failures and endanger lives.

4. The Erosion of Trust

Constant errors and rework breed frustration on the job site. When field crews lose faith in the accuracy of the documents provided by the project engineers, they begin to second-guess instructions, leading to a spike in Requests for Information (RFIs) that further bog down the administrative workflow. A lack of a reliable source of truth ultimately damages the working relationship between the office and the field.

Designing a Single Source of Truth Architecture

In complex operational environments (construction, manufacturing, or field engineering), working off an outdated blueprint is not just an inconvenience; it is a costly liability. Designing a Single Source of Truth (SSOT) architecture ensures that every stakeholder, from the lead architect in the office to the technician on the ground, is referencing the exact same, most up-to-date documentation. By leveraging the deep integration capabilities of Google Cloud and Google Workspace, we can engineer a resilient architecture where data silos are eliminated, version control is automated, and governance is centralized.

Core Components of an Automated Sync System

To build a robust SSOT blueprint sync, we must decouple the storage of the physical files from the metadata that governs them, while seamlessly tying them back together in the user interface. An effective automated sync system relies on four foundational pillars:

- The Storage Layer (Google Drive): Google Drive acts as the secure, scalable repository for the actual blueprint files (PDFs, DWGs, or image files). Instead of relying on static folder paths, the architecture utilizes unique Google Drive File IDs. This ensures that even if a file is renamed or moved within the Drive hierarchy, the system’s link to the asset remains unbroken.

- The Metadata Database (Google Sheets or Cloud SQL): While Drive holds the heavy files, a structured database is required to manage the metadata, such as blueprint version numbers, project associations, approval statuses, and timestamps. For lightweight deployments, Google Sheets serves as an excellent, highly responsive backend. For enterprise-scale operations, Google Cloud SQL provides the relational rigor needed to manage thousands of concurrent blueprint records.

- The Orchestration Engine (Google Apps Script & AppSheet Automation): This is the connective tissue of the sync system. Using Google Apps Script, we can set up time-driven or event-driven triggers (e.g., a scheduled job that checks a specific Drive folder for new files). The script extracts the new file’s ID and metadata, automatically updating the database layer. AppSheet Automation then handles the downstream logic, such as sending push notifications to field workers when a critical blueprint is updated.

- The Frontend Application (AppSheet): AppSheet serves as the dynamic presentation layer. It reads the metadata database and dynamically renders the associated Google Drive files directly within a mobile or web interface, providing a unified portal for end-users.
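To make the File ID idea concrete, here is a minimal sketch of one metadata row keyed by the Drive File ID. The helper name `buildBlueprintRecord`, the column names, and the default status value are illustrative assumptions, not a schema that AppSheet or Drive requires.

```javascript
// Hypothetical helper: builds one metadata row for a blueprint.
// The row is keyed by the Drive File ID, so renaming or moving the
// file in Drive does not break the link stored here.
function buildBlueprintRecord(file, projectId, version) {
  return {
    BlueprintID: file.id,         // stable Drive File ID (assumed key column)
    ProjectID: projectId,
    FileName: file.name,
    Version: version,
    Status: 'Pending Approval',   // assumed default status value
    // Link derived from the ID rather than from a folder path
    DocumentLink: `https://drive.google.com/file/d/${file.id}/view`,
    LastModified: new Date(file.modifiedTime).toISOString()
  };
}

const record = buildBlueprintRecord(
  { id: 'abc123', name: 'A-101 Floor Plan.pdf', modifiedTime: '2024-05-01T09:30:00Z' },
  'PRJ-001',
  3
);
console.log(record.DocumentLink);
```

Because the link is built from the ID, the same record stays valid if the file is later renamed; only the FileName column would need refreshing.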

Connecting Google Workspace to Field Operations

The true power of this architecture lies in its ability to bridge the gap between back-office environments and remote field operations. Traditionally, connecting these two domains required complex VPNs, clunky enterprise software, or manual paper trails. By utilizing AppSheet on top of Google Workspace, we transform standard office tools into a ruggedized field solution.

Seamless Workflow Integration

Designers and engineers can continue working in their native Google Workspace environment. When an engineer approves a new blueprint, they simply drop the revised file into a designated Google Drive folder. The orchestration engine picks up this change on its next scheduled run, updates the metadata, and pushes the new blueprint to the AppSheet application. The field worker does not need to navigate complex folder structures or request access; the updated blueprint simply appears in their app, tied to their specific daily task.

Offline Capabilities and Caching

Field operations frequently occur in environments with poor or non-existent network connectivity. AppSheet bridges this gap through intelligent offline caching. When a field worker’s device is connected to Wi-Fi or cellular data, the app syncs the latest blueprint metadata and caches the necessary Google Drive files locally. The worker can view high-resolution blueprints deep underground or in remote locations, with any field notes or markup data syncing back to the Google Cloud backend the moment connectivity is restored.

Role-Based Access and Security

Connecting the field to the back office requires strict governance. Leveraging Google Workspace’s identity and access controls, the architecture ensures that field personnel only have read-access to approved blueprints, preventing accidental deletions or unauthorized modifications. AppSheet’s user-based filtering ensures that a technician only sees the blueprints relevant to their assigned site, reducing cognitive load and securing sensitive intellectual property.
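One way to enforce the read-only side of this in Drive is sketched below, assuming the approved file IDs and the field team's email addresses come from your own metadata table. The function name `grantFieldReadAccess` and both parameters are hypothetical; `DriveApp.getFileById` and `addViewers` are standard Apps Script calls.

```javascript
// Grants view-only access on approved blueprints to field staff.
// `approvedFileIds` and `fieldEmails` are placeholders for values
// you would read from your own metadata table.
function grantFieldReadAccess(approvedFileIds, fieldEmails) {
  approvedFileIds.forEach(fileId => {
    const file = DriveApp.getFileById(fileId);
    // Viewers can open the file but cannot edit or delete it
    file.addViewers(fieldEmails);
  });
  return approvedFileIds.length; // number of files processed
}
```

Site-level filtering inside the app is a separate concern and would still be handled by AppSheet's own user-based filters.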

Building the Automated Sync Logic

To establish a true single source of truth, your architecture requires a reliable bridge between your file repository and your application interface. In this case, we need Google Drive to communicate seamlessly with AppSheet whenever a blueprint is added, modified, or removed. Because Google Drive does not natively push folder-level event webhooks directly to AppSheet, we will leverage Google Apps Script as our middleware.

By writing a custom Apps Script, we can periodically poll our target Drive folder, detect any delta changes, and push those updates directly into our AppSheet data tables using the AppSheet API.

Monitoring the Blueprints Folder with Apps Script

The first step in our automation logic is to create a script that watches our designated “Blueprints” folder. To do this efficiently, we want to avoid processing every single file every time the script runs. Instead, we will use the PropertiesService in Apps Script to store a timestamp of the last successful execution. During each run, the script will only flag files that have been modified after this timestamp.

Open the Apps Script editor (via Google Drive or script.google.com) and implement the following logic:

```javascript
function checkBlueprintUpdates() {
  // Replace with your specific Google Drive Folder ID
  const folderId = 'YOUR_DRIVE_FOLDER_ID';
  const folder = DriveApp.getFolderById(folderId);

  // Retrieve the last run time from Script Properties (0 on the first run)
  const scriptProperties = PropertiesService.getScriptProperties();
  const lastRun = parseInt(scriptProperties.getProperty('LAST_RUN_TIME'), 10) || 0;
  const currentTime = new Date().getTime();

  const files = folder.getFiles();
  const updatedBlueprints = [];

  // Iterate through files to find recent modifications
  while (files.hasNext()) {
    const file = files.next();
    const lastUpdated = file.getLastUpdated();

    if (lastUpdated.getTime() > lastRun) {
      updatedBlueprints.push({
        id: file.getId(),
        name: file.getName(),
        url: file.getUrl(),
        mimeType: file.getMimeType(),
        lastUpdated: lastUpdated
      });
    }
  }

  // If changes are detected, trigger the AppSheet sync
  if (updatedBlueprints.length > 0) {
    const synced = syncToAppSheet(updatedBlueprints);
    // If the sync explicitly reports failure, keep the old timestamp
    // so the same files are picked up again on the next run
    if (synced === false) {
      return;
    }
  }

  // Update the LAST_RUN_TIME property for the next execution
  scriptProperties.setProperty('LAST_RUN_TIME', currentTime.toString());
}
```

This function forms the heartbeat of our sync process. To automate it, you will need to set up a Time-driven trigger within the Apps Script interface (the clock icon on the left sidebar). Configuring this trigger to run every 5 to 15 minutes ensures your AppSheet application remains closely synchronized with your Drive folder without exhausting your Apps Script and Google Workspace API quotas.
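The same trigger can also be created from code instead of the sidebar. The sketch below assumes the handler is named `checkBlueprintUpdates` as above and picks a 10-minute interval as an example; `installSyncTrigger` is a hypothetical name for a function you would run once from the editor.

```javascript
// Installs a time-driven trigger for checkBlueprintUpdates, removing
// any existing trigger for the same handler first so that repeated
// runs of this function do not stack duplicate triggers.
function installSyncTrigger() {
  const handler = 'checkBlueprintUpdates';

  ScriptApp.getProjectTriggers()
    .filter(trigger => trigger.getHandlerFunction() === handler)
    .forEach(trigger => ScriptApp.deleteTrigger(trigger));

  // everyMinutes accepts 1, 5, 10, 15, or 30
  ScriptApp.newTrigger(handler)
    .timeBased()
    .everyMinutes(10)
    .create();
}
```

Deleting the old trigger before creating a new one keeps the project at a single schedule, which matters because every extra trigger multiplies the number of Drive and AppSheet calls.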

Triggering the AppSheet API for Cloud File Updates

Once our Apps Script has identified a batch of new or modified blueprints, it needs to hand that data over to AppSheet. AppSheet provides a robust REST API that allows you to programmatically Add, Edit, or Delete records in your app’s underlying tables.

Before writing the code, ensure that you have enabled the API for your AppSheet application. Navigate to your AppSheet Editor, go to Settings > Integrations > IN: from cloud services to your app, enable the API, and generate an Application Access Key.

With your App ID and Access Key in hand, we can build the syncToAppSheet function. This function takes the array of updated files, formats them into a JSON payload that AppSheet expects, and sends a POST request using UrlFetchApp.

```javascript
function syncToAppSheet(blueprints) {
  // Placeholders only: keep real credentials out of source code
  const appId = 'YOUR_APPSHEET_APP_ID';
  const accessKey = 'YOUR_APPSHEET_ACCESS_KEY';
  const tableName = 'Blueprints'; // The exact name of your AppSheet table
  const apiUrl = `https://api.appsheet.com/api/v2/apps/${appId}/tables/${tableName}/Action`;

  // Map the Apps Script file data to your AppSheet column names
  const rows = blueprints.map(bp => ({
    "BlueprintID": bp.id, // Assuming File ID is your Key column
    "FileName": bp.name,
    "DocumentLink": bp.url,
    "LastModified": bp.lastUpdated, // Date objects serialize to ISO 8601 strings
    "FileType": bp.mimeType
  }));

  // Construct the AppSheet API Payload
  const payload = {
    // Assumes "Add" also updates rows whose key already exists;
    // verify this against a test table before relying on it
    "Action": "Add",
    "Properties": {
      "Locale": "en-US",
      "Timezone": "UTC"
    },
    "Rows": rows
  };

  const options = {
    'method': 'post',
    'contentType': 'application/json',
    'headers': {
      'ApplicationAccessKey': accessKey
    },
    'payload': JSON.stringify(payload),
    'muteHttpExceptions': true
  };

  try {
    const response = UrlFetchApp.fetch(apiUrl, options);
    const responseCode = response.getResponseCode();

    if (responseCode >= 200 && responseCode < 300) {
      console.log(`Successfully synced ${rows.length} blueprints to AppSheet.`);
      return true;
    }
    console.error(`AppSheet API Error (${responseCode}): ` + response.getContentText());
    return false;
  } catch (e) {
    console.error('Network or execution error syncing to AppSheet: ' + e.message);
    return false;
  }
}
```

Key architectural notes on this payload:

- The “Add” Action: This walkthrough sends an “Add” action for every row and relies on AppSheet treating it as an “Edit” (an upsert) when the Key column value (in our case, BlueprintID) already exists in the table. If that holds for your app, the script doesn’t need to query AppSheet first to determine whether a file is brand new or just recently modified. Confirm the behavior against a test table before relying on it; if a duplicate key is rejected instead, send new files with “Add” and changed files with “Edit”.

- Column Mapping: Ensure that the keys in the rows mapping (e.g., "BlueprintID", "FileName") exactly match the column names in your AppSheet table.

- Mute HTTP Exceptions: Setting muteHttpExceptions: true in the UrlFetchApp options prevents the script from throwing on a 400 or 500 error, allowing you to log the exact error response from AppSheet for easier debugging.
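Column mismatches are easy to miss because a misspelled key is still valid JSON. A small pre-flight check along these lines can catch them before the request is sent; `findUnknownColumns` and the `expectedColumns` list are hypothetical and would need to mirror your own table.

```javascript
// Returns every payload key that is not in the expected column list.
// An empty result means the rows only use known column names.
function findUnknownColumns(rows, expectedColumns) {
  const expected = new Set(expectedColumns);
  const unknown = new Set();
  rows.forEach(row => {
    Object.keys(row).forEach(key => {
      if (!expected.has(key)) unknown.add(key);
    });
  });
  return [...unknown];
}

// Assumed column list: copy the real names from your AppSheet table
const expectedColumns = ['BlueprintID', 'FileName', 'DocumentLink', 'LastModified', 'FileType'];
const sampleRows = [{ BlueprintID: 'abc123', Filename: 'A-101.pdf' }];

// 'Filename' is flagged because the column is spelled 'FileName'
console.log(findUnknownColumns(sampleRows, expectedColumns));
```

Running the check just before the fetch call and logging any unknown keys gives a clearer message than the raw API error.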

By combining these two functions, you have created a serverless, highly efficient synchronization engine. Google Drive acts as the secure, version-controlled repository for your blueprints, while the Apps Script automatically pushes metadata updates into AppSheet, ensuring your field workers or project managers always see the latest documents in their mobile or web app.

Implementing the Technical Stack

With our architectural strategy defined, it is time to build the engine that will drive our source of truth. To bridge the unstructured storage of Google Drive with the structured, relational environment of AppSheet, we will rely on Google Apps Script (GAS) running on the V8 engine. This serverless middleware will act as our orchestration layer, querying Drive for blueprint updates and securely pushing those changes into our AppSheet data model.

Configuring DriveApp for Seamless File Management

Google Apps Script provides the DriveApp service, a built-in class that allows us to programmatically interact with Google Drive. For a blueprint synchronization system, our primary goal is to target specific directories, extract file metadata (such as file names, unique IDs, web-viewable URLs, and modification timestamps), and format this data for our frontend.

When dealing with architectural blueprints or engineering schematics, you are typically managing PDF, CAD, or image files. To ensure our sync is performant and doesn’t waste execution time parsing irrelevant documents, we should filter our queries by MIME type and specific folder IDs.

Here is a robust approach to extracting blueprint metadata using DriveApp:

```javascript
/**
 * Retrieves metadata for blueprint files within a designated Source of Truth folder.
 * @param {string} folderId - The Google Drive Folder ID containing the blueprints.
 * @returns {Array<Object>} An array of blueprint metadata objects.
 */
function getBlueprintMetadata(folderId) {
  const folder = DriveApp.getFolderById(folderId);

  // Targeting PDFs as the standard blueprint distribution format
  const files = folder.getFilesByType(MimeType.PDF);
  const blueprints = [];

  while (files.hasNext()) {
    const file = files.next();
    blueprints.push({
      Blueprint_ID: file.getId(),
      File_Name: file.getName(),
      Drive_URL: file.getUrl(),
      Last_Updated: file.getLastUpdated().toISOString(),
      Status: 'Active'
    });
  }

  return blueprints;
}
```
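If blueprints arrive in more than one format, a folder search with a combined MIME type query is one option. This is a sketch under assumptions: the list in `BLUEPRINT_MIME_TYPES` is only an example, CAD formats are left out because Drive may report them under varying types, and `buildMimeTypeQuery` and `getBlueprintFiles` are hypothetical helper names.

```javascript
// Builds a Drive search query that matches any of the given MIME types.
function buildMimeTypeQuery(mimeTypes) {
  return mimeTypes.map(type => `mimeType = '${type}'`).join(' or ');
}

// Assumed set of blueprint formats; adjust to what your team uploads
const BLUEPRINT_MIME_TYPES = ['application/pdf', 'image/png', 'image/jpeg'];

// Returns a file iterator limited to the target folder and formats.
function getBlueprintFiles(folderId) {
  const folder = DriveApp.getFolderById(folderId);
  // searchFiles on a folder only returns files directly inside it
  return folder.searchFiles(buildMimeTypeQuery(BLUEPRINT_MIME_TYPES));
}
```

The returned iterator can be consumed with the same hasNext() and next() loop used above.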

Cloud Engineering Tip: If your blueprint repository contains thousands of files, the while (files.hasNext()) loop might hit the Apps Script 6-minute execution limit. For enterprise-scale repositories, consider implementing a continuation token mechanism or utilizing the advanced Drive API service to perform paginated batch requests.
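A continuation token approach could look roughly like the following. The property key `BLUEPRINT_CONTINUATION_TOKEN`, the 4.5-minute budget, and the function name `collectBlueprintsResumable` are assumptions made for this sketch; `getContinuationToken` and `DriveApp.continueFileIterator` are the built-in calls it relies on.

```javascript
// Assumed names and budget, chosen to stay under the execution limit
const TOKEN_KEY = 'BLUEPRINT_CONTINUATION_TOKEN';
const TIME_BUDGET_MS = 4.5 * 60 * 1000;

// Walks a large folder across several executions by saving the
// iterator position in Script Properties between runs.
function collectBlueprintsResumable(folderId) {
  const props = PropertiesService.getScriptProperties();
  const savedToken = props.getProperty(TOKEN_KEY);
  const start = Date.now();

  // Resume where the previous run stopped, or start from the beginning
  const files = savedToken
    ? DriveApp.continueFileIterator(savedToken)
    : DriveApp.getFolderById(folderId).getFilesByType(MimeType.PDF);

  const batch = [];
  while (files.hasNext()) {
    if (Date.now() - start > TIME_BUDGET_MS) {
      // Out of time: remember the position so the next run continues here
      props.setProperty(TOKEN_KEY, files.getContinuationToken());
      return { blueprints: batch, done: false };
    }
    const file = files.next();
    batch.push({ Blueprint_ID: file.getId(), File_Name: file.getName() });
  }

  // Finished the whole folder: clear the token so the next run starts fresh
  props.deleteProperty(TOKEN_KEY);
  return { blueprints: batch, done: true };
}
```

Each call returns the batch it managed to collect plus a `done` flag, so a time-driven trigger can keep calling it and syncing each batch until the folder is exhausted.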

Securing the AppSheet API Connection

Once we have extracted the blueprint metadata from Google Drive, the next step is to push this data into AppSheet. AppSheet exposes a robust REST API (v2) that allows us to perform CRUD operations directly against our application’s tables. However, connecting to this API requires strict adherence to security best practices.

Hardcoding API keys in your Apps Script files is a massive security vulnerability. Instead, we will leverage the Apps Script PropertiesService to store our AppSheet Application ID and Access Key securely in the script’s environment variables.

To configure this securely, first manually add your APPSHEET_APP_ID and APPSHEET_ACCESS_KEY to the Script Properties in the Apps Script project settings. Then, we can use UrlFetchApp to construct a secure POST request to the AppSheet endpoint.

```javascript
/**
 * Securely pushes blueprint metadata to the AppSheet API.
 * @param {Array<Object>} blueprintData - The formatted array of blueprint records.
 * @returns {boolean} True if AppSheet accepted the request, false otherwise.
 */
function syncToAppSheet(blueprintData) {
  // Retrieve credentials from Script Properties instead of hardcoding them
  const scriptProperties = PropertiesService.getScriptProperties();
  const appId = scriptProperties.getProperty('APPSHEET_APP_ID');
  const accessKey = scriptProperties.getProperty('APPSHEET_ACCESS_KEY');

  if (!appId || !accessKey) {
    throw new Error("Missing AppSheet API credentials in Script Properties.");
  }

  // Define the AppSheet API v2 endpoint for the 'Blueprints' table
  const apiUrl = `https://api.appsheet.com/api/v2/apps/${appId}/tables/Blueprints/Action`;

  // Construct the AppSheet REST API payload
  const payload = {
    // 'Edit' updates rows matched on the key column; this walkthrough
    // assumes it also inserts unknown keys, so verify that in a test table
    "Action": "Edit",
    "Properties": {
      "Locale": "en-US",
      "Timezone": "UTC"
    },
    "Rows": blueprintData
  };

  const options = {
    'method': 'post',
    'contentType': 'application/json',
    'headers': {
      'ApplicationAccessKey': accessKey
    },
    'payload': JSON.stringify(payload),
    'muteHttpExceptions': true // Allows us to handle errors gracefully
  };

  try {
    const response = UrlFetchApp.fetch(apiUrl, options);
    const responseCode = response.getResponseCode();

    if (responseCode >= 200 && responseCode < 300) {
      console.log(`Successfully synced ${blueprintData.length} blueprints to AppSheet.`);
      return true;
    }
    console.error(`AppSheet API Error (${responseCode}): ${response.getContentText()}`);
    return false;
  } catch (error) {
    console.error(`Failed to execute AppSheet sync: ${error.toString()}`);
    return false;
  }
}
```

Notice the use of the "Action": "Edit" parameter in the payload. Paired with the key column (in our case, the Blueprint_ID mapped from the Drive File ID), “Edit” updates the metadata of a blueprint that already exists, so re-syncing a file never creates a duplicate record. This walkthrough also assumes that an “Edit” for a key that is not yet in the table inserts the row (an upsert). Verify that against a test table: if the API rejects unknown keys, new blueprints must be sent with an “Add” action instead.
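If testing shows that your app does not upsert, one fallback is to split the rows yourself and send two requests. The helper below is a sketch with hypothetical names; it assumes you already have the list of keys present in the table, for example from a "Find" action against the same endpoint.

```javascript
// Splits rows into those whose key is new (send with "Add") and those
// whose key already exists in the table (send with "Edit").
function partitionByExistingKey(rows, existingKeys, keyColumn) {
  const known = new Set(existingKeys);
  const toAdd = [];
  const toEdit = [];
  rows.forEach(row => {
    if (known.has(row[keyColumn])) {
      toEdit.push(row);
    } else {
      toAdd.push(row);
    }
  });
  return { toAdd, toEdit };
}

const split = partitionByExistingKey(
  [{ Blueprint_ID: 'f1' }, { Blueprint_ID: 'f2' }],
  ['f1'],            // keys already present in the table
  'Blueprint_ID'
);
console.log(split.toAdd.length, split.toEdit.length);
```

This costs one extra read per sync, which is the trade-off the single-action approach is trying to avoid.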

Ensuring Reliability and Version Control

When dealing with architectural blueprints or engineering schematics, working off an outdated file isn’t just an inconvenience—it can lead to catastrophic project delays and massive financial losses. A robust “Source of Truth” architecture must guarantee that every user is looking at the absolute latest iteration of a document, while preserving the historical lineage of past designs. Achieving this requires a deep understanding of how AppSheet interacts with Google Drive’s file system and how to safeguard data integrity against the unpredictable nature of mobile field operations.

Handling File Overwrites and Document Revisions

By default, when AppSheet captures a file or image, it generates a unique filename (often appending a timestamp or a unique ID) and saves it to a designated folder in Google Drive. While this prevents accidental overwrites, it can quickly turn a project folder into a chaotic repository of duplicated files, making it impossible to identify the true “current” blueprint.

To build a reliable version control system, you must design your AppSheet data model to handle revisions systematically. There are two primary architectural approaches to this within the Google Workspace ecosystem:

- The Immutable Ledger Approach (Recommended): Instead of overwriting files, create a one-to-many relationship in your AppSheet database (e.g., a Projects table and a child Blueprint_Revisions table). Every time a new blueprint is uploaded, it creates a new child record with a timestamp, the user’s ID, and the new file path. You can then use AppSheet’s MAXROW() expression or a slice to ensure that the parent project record always displays the most recent child record. This provides a complete, auditable history of every blueprint version directly within the app UI.

- The Native Drive Versioning Approach: If your workflow demands a single, static file link (for instance, if external contractors are accessing a shared Google Drive link), you can leverage Google Apps Script to intercept the AppSheet upload. When AppSheet saves a new file, an automation triggers a webhook to an Apps Script endpoint. The script uses the Google Drive API to upload the new content as a new revision of the existing file ID (using the files.update method with media content, since the revisions resource has no create method). This keeps the Google Drive file ID constant, preserving shared links while cleanly stacking the historical versions in Drive’s native “Manage Versions” interface.

Whichever method you choose, ensure that your AppSheet app is configured to clean up orphaned files if a record is deleted, keeping your Google Drive storage optimized and your source of truth pristine.
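For the immutable ledger approach, the "latest child record per project" rule is simple enough to sketch outside AppSheet, which can help when validating exported data. The field names `ProjectID` and `UploadedAt` and the function name are assumptions for illustration.

```javascript
// Picks the newest revision for each project, mirroring what a
// MAXROW()-style lookup does inside the app.
function latestRevisionByProject(revisions) {
  const latest = {};
  revisions.forEach(rev => {
    const current = latest[rev.ProjectID];
    if (!current || new Date(rev.UploadedAt) > new Date(current.UploadedAt)) {
      latest[rev.ProjectID] = rev;
    }
  });
  return latest;
}

const newest = latestRevisionByProject([
  { ProjectID: 'P1', Revision: 'A', UploadedAt: '2024-05-01T08:00:00Z' },
  { ProjectID: 'P1', Revision: 'B', UploadedAt: '2024-05-03T08:00:00Z' },
  { ProjectID: 'P2', Revision: 'A', UploadedAt: '2024-05-02T08:00:00Z' }
]);
console.log(newest.P1.Revision);
```

Older revisions are never touched, so the full history stays available for audits while the app surfaces only the newest row.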

Testing the Sync Workflow for Mobile Field Teams

Field teams operate in notoriously hostile network environments—from deep inside concrete basements to remote construction sites with zero cellular reception. A blueprint sync solution is only as reliable as its offline capabilities. AppSheet excels at offline data collection, but syncing heavy blueprint files (often large PDFs or high-resolution images) requires rigorous, real-world testing.

To ensure your sync workflow is bulletproof, your testing protocol must simulate the exact conditions your field teams will face:

- Payload and Caching Verification: Blueprints are data-heavy. In AppSheet, navigate to Settings > Offline Mode and ensure that “Store content for offline use” is enabled. Test the app by loading it on a mobile device, turning on Airplane Mode, and attempting to open a blueprint. You must verify that the high-resolution files are successfully caching to the device’s local storage and remain legible without a network connection.

- Simulating Sync Conflicts: What happens if a project manager in the office uploads a new blueprint revision at the exact same time a field engineer is annotating the older version offline? You must test AppSheet’s conflict resolution. By default, AppSheet uses a “last writer wins” model. For critical blueprints, it is highly recommended to implement a check-out/check-in system or use AppSheet’s ChangeTimestamp columns to detect if the underlying record was modified while the field worker was offline. If a conflict is detected, surface a warning to the user rather than blindly overwriting the data.

- Background Sync Resiliency: Test the transition from offline to online. While in Airplane Mode, upload a new field annotation or blueprint revision. Reconnect to a weak network (you can simulate 3G throttling using developer tools on your device). Monitor the AppSheet sync queue to ensure the large file payload uploads successfully in the background without timing out or crashing the app.

By aggressively testing these edge cases, you ensure that the AppSheet and Google Drive integration remains a resilient, unbreakable source of truth, regardless of where your team is working.
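The conflict check described in the list comes down to comparing two timestamps: the modification time the device last saw and the one currently on the server. A minimal sketch, with a hypothetical function name and ISO 8601 strings assumed for both values:

```javascript
// Returns true when the server copy changed after the version the
// field device last synced, meaning an offline edit would overwrite
// newer data if it were applied blindly.
function hasSyncConflict(deviceBaseTimestamp, serverTimestamp) {
  return new Date(serverTimestamp).getTime() > new Date(deviceBaseTimestamp).getTime();
}

// Device synced Monday morning; the office published a revision Tuesday
const conflict = hasSyncConflict('2024-05-06T08:00:00Z', '2024-05-07T14:30:00Z');
console.log(conflict);
```

When the check returns true, the safer path is to warn the user and keep both versions rather than let the later write win.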

Scale Your Project Architecture

Once your foundational Source of Truth Blueprint is operational and syncing seamlessly between AppSheet and Google Drive, the next critical phase is anticipating and engineering for growth. A localized, department-level application behaves very differently than an enterprise-wide deployment. Scaling your project architecture means moving beyond the constraints of basic file storage and standard spreadsheet backends to embrace the full power of the Google Cloud ecosystem.

To achieve high availability, low latency, and enterprise-grade security, you must evolve your architecture. This often involves migrating your AppSheet backend to robust relational databases like Cloud SQL, leveraging BigQuery for massive data analytics, and utilizing Google Cloud Functions to handle complex, event-driven automations that extend beyond AppSheet’s native capabilities.

Audit Your Specific Business Needs

Scaling is never a one-size-fits-all endeavor; it requires a highly targeted approach based on your operational realities. Before provisioning new Google Cloud resources or refactoring your AppSheet data models, you must conduct a comprehensive audit of your specific business needs.

A thorough architectural audit should evaluate the following core pillars:

- Data Volume and Velocity: Are you approaching the row limits or performance thresholds of Google Sheets? If your blueprint generates thousands of records daily or requires complex relational queries, it is time to map out a migration to a true SQL backend. Furthermore, assess how your Google Drive folder structures are handling automated file generation, since deeply nested or overloaded folders can severely impact sync times.

- User Concurrency and Access: How many users will interact with the AppSheet application simultaneously? Evaluate your need for offline capabilities, granular security filters, and dynamic user roles. As your user base grows, you must ensure that your Google Workspace access policies are aligned with your AppSheet domain settings.

- Security and Compliance: Does your blueprint manage sensitive intellectual property or PII? You need to assess whether your current setup meets organizational compliance. This might involve implementing Google Workspace Data Loss Prevention (DLP) rules, configuring VPC Service Controls in Google Cloud, or ensuring strict regional data residency.

- Integration Complexity: Look beyond the immediate AppSheet-to-Drive sync. Do you need to push this blueprint data into Looker for advanced business intelligence? Do you need to trigger external APIs via Cloud Run when a new blueprint is approved? Identifying these integration points early prevents technical debt down the road.
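For the data volume question, a quick measurement can replace guesswork. The sketch below estimates how much of the Google Sheets 10 million cell limit a backend spreadsheet already occupies; the function name is hypothetical and the spreadsheet ID is whatever backs your AppSheet tables.

```javascript
const SHEETS_CELL_LIMIT = 10000000; // per-spreadsheet cell limit in Google Sheets

// Sums the grid size of every tab. Empty cells count toward the limit,
// so the grid dimensions are used rather than the filled range.
function estimateCellUsage(spreadsheetId) {
  const sheets = SpreadsheetApp.openById(spreadsheetId).getSheets();
  const cells = sheets.reduce(
    (sum, sheet) => sum + sheet.getMaxRows() * sheet.getMaxColumns(),
    0
  );
  return { cells, percentOfLimit: (cells * 100) / SHEETS_CELL_LIMIT };
}
```

A result that keeps climbing toward the limit is a practical signal that the SQL migration mentioned above should be scheduled.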

Book a GDE Discovery Call with Vo Tu Duc

Navigating the intricacies of Google Cloud, Google Workspace, and AppSheet requires deep technical foresight. Designing an architecture that is both cost-effective today and scalable tomorrow is a complex balancing act. To ensure your infrastructure is built on proven methodologies and industry best practices, expert guidance is an invaluable investment.

Take the guesswork out of your scaling strategy by booking a Discovery Call with Vo Tu Duc, a recognized Google Developer Expert (GDE).

During this focused, high-impact session, you will get the opportunity to:

- Deconstruct Your Current Setup: Walk through your existing AppSheet and Google Drive blueprint to identify hidden bottlenecks and performance liabilities.

- Map a Custom Architecture: Receive tailored recommendations on which Google Cloud services (Cloud SQL, Cloud Storage, Cloud Functions) will best serve your specific data load and workflow requirements.

- Mitigate Technical Debt: Learn how to structure your data models and Google Workspace permissions correctly from day one, avoiding costly and time-consuming refactoring in the future.

Whether you are looking to optimize sync speeds, enforce rigorous security protocols, or integrate advanced cloud engineering solutions into your AppSheet environment, a GDE consultation provides the strategic roadmap you need to scale with absolute confidence.
