Building a Secure Private Vertex AI Environment for Factory Data

While artificial intelligence is unlocking unprecedented efficiency in manufacturing, relying on public AI models exposes your proprietary factory data to severe security risks. Discover why the convenience of public AI APIs isn’t worth the threat to your operational lifeblood.

The Security Challenge of Public AI Models in Manufacturing

The manufacturing sector is undergoing a massive transformation driven by Artificial Intelligence. From predictive maintenance on the assembly line to real-time quality assurance using computer vision, AI is unlocking unprecedented operational efficiencies. However, integrating these advanced capabilities introduces a critical friction point: security. Relying on public AI models or standard multi-tenant SaaS endpoints creates an unacceptable attack surface for industrial organizations. When you are dealing with the lifeblood of a factory—its operational data—the convenience of public AI APIs is vastly outweighed by the severe security challenges they present.

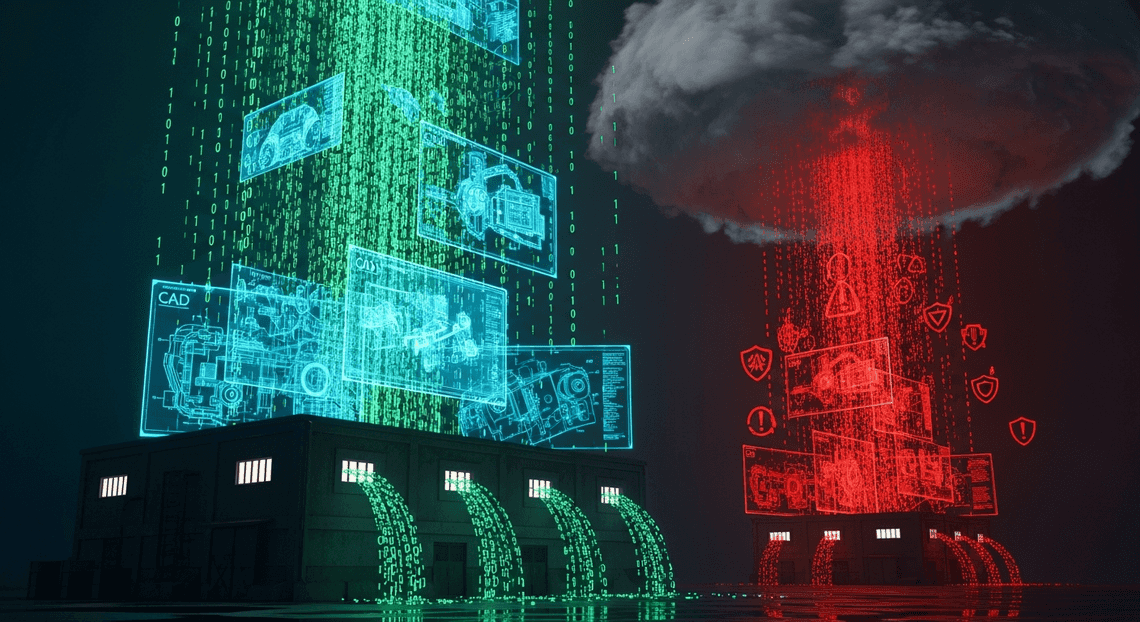

Risks of Exposing Proprietary Factory Data

Modern factories generate terabytes of highly sensitive information daily. This encompasses IoT telemetry from Programmable Logic Controllers (PLCs), proprietary CAD schematics, production yield metrics, defect rates, and intricate supply chain logistics. Sending this data to a public AI model exposes an organization to profound risks.

First and foremost is the threat of data leakage and intellectual property (IP) theft. Many public AI services explicitly state in their terms of service that user prompts and payloads may be used to train and refine their foundational models. If a factory feeds its proprietary production line data into a public model to generate an anomaly detection algorithm, those trade secrets could inadvertently become part of the model’s weights, potentially surfacing to competitors who prompt the same public model.

Furthermore, exposing factory data to the public internet increases the risk of interception. For industries bound by strict regulatory frameworks, such as defense manufacturing under ITAR or regional data privacy laws like GDPR, routing sensitive Operational Technology (OT) data outside of a geographically and logically controlled environment can itself constitute a compliance violation. A single breach or unauthorized data exfiltration event can result in crippling regulatory fines, loss of government contracts, and catastrophic damage to a manufacturer’s competitive advantage.

Why Enterprise IT Requires Strict Perimeter Security

To mitigate these risks, Enterprise IT and Cloud Engineering teams operate under a paradigm of strict perimeter security and Zero Trust architecture. In a traditional manufacturing IT/OT environment, critical systems are either air-gapped or heavily shielded behind firewalls, demilitarized zones (DMZs), and strictly controlled private networks.

When extending these environments into the cloud to leverage AI, enterprise IT demands the same—if not greater—level of isolation. This is exactly where standard public AI endpoints fail the enterprise architecture test. Transmitting sensitive telemetry data over the public internet to reach an AI service inherently breaks the secure perimeter, creating blind spots in network monitoring and bypassing internal data loss prevention (DLP) controls.

To maintain architectural integrity, IT requires robust, cloud-native isolation mechanisms. In the Google Cloud ecosystem, this translates to leveraging VPC Service Controls (VPC SC), private IP addressing, and dedicated network connections. A secure cloud perimeter ensures that data flows exclusively through private channels (such as Cloud Interconnect or HA VPN, with encryption layered onto Interconnect where required, since Interconnect traffic is not encrypted by default) and interacts only with AI services deployed within the organization’s isolated Virtual Private Cloud (VPC). By enforcing strict perimeter security, organizations can control data residency, enforce granular Identity and Access Management (IAM) policies, and utilize Customer-Managed Encryption Keys (CMEK), so that the factory’s data never traverses the public internet or leaves the organization’s control.

Architecting a Private AI Environment on Google Cloud

When dealing with highly sensitive factory data (such as proprietary manufacturing tolerances, predictive maintenance telemetry, or high-resolution quality assurance imagery), security cannot be an afterthought. Architecting a private AI environment on Google Cloud requires a defense-in-depth approach. The goal is to construct an isolated ecosystem where Vertex AI can ingest, process, and serve insights from this critical data without ever exposing it to the public internet or unauthorized internal actors. This involves weaving together advanced network security, identity management, and cryptographic controls into a cohesive, enterprise-grade architecture.

Core Components of a Secure AI Architecture

To build a robust and private Vertex AI environment, we must move beyond default configurations and rely on a specific combination of foundational Google Cloud services working in tandem. The core components of this architecture include:

- Virtual Private Cloud (VPC) & Custom Subnets: The bedrock of the architecture. A custom-mode VPC provides an isolated network space where we can enforce granular firewall rules and segment traffic between data ingestion, model training, and model serving layers.

- VPC Service Controls (VPC SC): This is the crown jewel for data exfiltration defense. VPC SC allows us to define a strict, logical security perimeter around Google-managed services. By placing Vertex AI, Cloud Storage, and BigQuery within the same perimeter, we ensure these services can communicate securely while blocking access from outside the boundary.

- Private Services Access (PSA) & Private Service Connect (PSC): Because Vertex AI is a managed service, the underlying infrastructure resides in a Google-managed tenant project. PSA and PSC allow your custom VPC to communicate with Vertex AI APIs and training clusters using internal IP addresses, keeping all traffic entirely off the public internet.

- Cloud Key Management Service (KMS): For proprietary factory data, default Google-managed encryption is often insufficient for strict compliance. Implementing Customer-Managed Encryption Keys (CMEK) via Cloud KMS ensures you retain cryptographic control over your datasets, Vertex AI training pipelines, and deployed models. If the keys are disabled or destroyed, the data and models they protect become inaccessible once the change propagates.

- Granular Identity and Access Management (IAM): Implementing the principle of least privilege is critical. Instead of relying on default compute service accounts, we provision dedicated, workload-specific Service Accounts. A training pipeline, for example, operates under a service account that only has read access to a specific raw data bucket and write access to a specific model artifact bucket.

Ensuring Data Never Leaves the GCP Perimeter

The primary concern for plant managers and industrial data engineers when moving factory data to the cloud is preventing accidental or malicious data exfiltration. To guarantee that your intellectual property never leaves the Google Cloud perimeter, we must enforce strict network boundaries and access policies.

First, the implementation of VPC Service Controls (VPC SC) is non-negotiable. By placing our project and data services inside a VPC SC perimeter, we explicitly block any API requests originating from outside the defined boundary. Even if a malicious actor or careless employee possesses valid IAM credentials, they cannot access the factory data, download model weights, or query Vertex AI endpoints from an unauthorized network (such as a public Wi-Fi network or an unmanaged device).

Second, we configure Private Google Access for on-premises connectivity. Factory edge devices, PLCs, and on-premises servers communicate with the cloud via dedicated Cloud Interconnect or HA VPN. Private Google Access ensures that this on-premises telemetry data can reach Google APIs (like Vertex AI Prediction or Cloud Storage) using internal routing, completely bypassing the public internet.

Furthermore, when provisioning Vertex AI Workbench notebooks or custom training pipelines, we strictly disable public IP addresses. All model training, evaluation, and inference traffic is forced to route internally. To handle scenarios where data scientists need to download specific packages or ML libraries, we configure Cloud NAT alongside strict egress firewall rules or Secure Web Proxy. This allows compute instances to pull dependencies from approved, mirrored repositories (like Artifact Registry) while explicitly blocking all other outbound internet access.

Finally, to prove compliance and maintain continuous visibility, we enable comprehensive audit logging. Cloud Audit Logs (Data Access logs) and VPC Flow Logs are routed to a centralized, secure logging project. By feeding this telemetry into Security Command Center, cloud engineers can monitor real-time access patterns, detect anomalous behavior, and ensure that the perimeter remains uncompromised and data sovereignty is strictly maintained.
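As a rough illustration of the Private Google Access step above, the following sketch checks whether the addresses that an on-premises DNS resolver returns for Google APIs fall inside 199.36.153.4/30, the IPv4 range Google documents for restricted.googleapis.com (the virtual IP intended for use with VPC Service Controls). The function name and the sample addresses are hypothetical, and the check assumes you have already collected the resolved IPs by some other means.

```python
import ipaddress

# IPv4 range documented by Google for restricted.googleapis.com.
RESTRICTED_VIP = ipaddress.ip_network("199.36.153.4/30")


def uses_restricted_vip(resolved_ips):
    """Return True only if every resolved address lies inside the restricted VIP range.

    An empty list returns False, so a failed DNS lookup is not mistaken for success.
    """
    addresses = [ipaddress.ip_address(ip) for ip in resolved_ips]
    return bool(addresses) and all(addr in RESTRICTED_VIP for addr in addresses)


# Hypothetical resolver results gathered from a factory edge gateway.
inside_range = uses_restricted_vip(["199.36.153.4", "199.36.153.7"])
outside_range = uses_restricted_vip(["199.36.153.8"])
```

A check like this could run as part of a periodic network validation job, flagging any gateway whose DNS configuration has drifted back to public Google API addresses.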

Implementing VPC Service Controls for Vertex AI

When dealing with proprietary factory data—such as manufacturing tolerances, IoT telemetry, and predictive maintenance logs—Identity and Access Management (IAM) alone is not enough. While IAM dictates who can access your resources, it does not restrict from where they can access them. If a developer’s credentials are compromised, an attacker could theoretically download your highly sensitive factory datasets from any internet-connected device.

This is where VPC Service Controls (VPC SC) becomes the cornerstone of your security architecture. VPC SC allows you to define a logical security perimeter around your Google Cloud services, mitigating the risk of data exfiltration. By enforcing VPC SC, you ensure that Vertex AI APIs and the underlying data storage can only be accessed from trusted networks, such as your on-premises factory floor via Cloud Interconnect or secure developer workstations.

Defining the Service Perimeter for Machine Learning Workloads

A successful VPC SC implementation requires a holistic view of your machine learning architecture. Vertex AI does not operate in a vacuum; it relies heavily on other Google Cloud services to train, tune, and deploy models. Therefore, your service perimeter must encapsulate the entire ML lifecycle.

To secure your factory’s ML workloads, you need to add the relevant projects to a regular service perimeter and explicitly restrict the following APIs:

- aiplatform.googleapis.com (Vertex AI)

- storage.googleapis.com (Cloud Storage, holding raw factory data and model artifacts)

- bigquery.googleapis.com (BigQuery, for structured telemetry analytics)

- artifactregistry.googleapis.com (Artifact Registry, for custom training and serving containers)

By restricting these APIs within the perimeter, any API request originating from outside the boundary will be denied, even if the user has the correct IAM permissions.

Pro-Tip for Cloud Engineers: Always deploy your service perimeter in Dry Run mode first. Machine learning pipelines often have complex, hidden dependencies. Dry Run mode logs violations to Cloud Logging without actually blocking the traffic, allowing you to identify legitimate cross-perimeter API calls and adjust your configuration before enforcing the boundary and accidentally breaking your factory’s automated training pipelines.
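The perimeter and Dry Run advice above can be sketched as a small helper that assembles a service perimeter body in the shape used by the Access Context Manager API, where `spec` carries the dry-run configuration and `status` carries the enforced one. This is a minimal sketch rather than a complete deployment script: the function name, policy ID, perimeter name, and project number are hypothetical, and the resulting dictionary would still need to be submitted through your tooling of choice.

```python
# Services the article restricts inside the ML perimeter.
RESTRICTED_SERVICES = [
    "aiplatform.googleapis.com",
    "storage.googleapis.com",
    "bigquery.googleapis.com",
    "artifactregistry.googleapis.com",
]


def build_dry_run_perimeter(policy_id, perimeter_name, project_numbers):
    """Build a regular service perimeter whose configuration exists only as a dry run."""
    return {
        "name": f"accessPolicies/{policy_id}/servicePerimeters/{perimeter_name}",
        "title": perimeter_name,
        "perimeterType": "PERIMETER_TYPE_REGULAR",
        # With this flag set, `spec` is evaluated in dry-run mode: violations are
        # logged but not blocked. No `status` block means nothing is enforced yet.
        "useExplicitDryRunSpec": True,
        "spec": {
            # Perimeter resources are referenced by project number, not project ID.
            "resources": [f"projects/{number}" for number in project_numbers],
            "restrictedServices": list(RESTRICTED_SERVICES),
        },
    }


# Hypothetical identifiers for illustration only.
perimeter = build_dry_run_perimeter("123456789", "factory_ml", [111111111111])
```

Once the dry-run logs show no unexpected violations, the same configuration can be promoted to the enforced `status` block.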

Configuring Secure Ingress and Egress Rules

By default, a VPC SC perimeter acts as a strict firewall for Google APIs, blocking all traffic crossing the boundary. However, a completely isolated environment is rarely functional. Your factory edge devices need to push new telemetry data into the perimeter, and your data scientists need to access Vertex AI Workbench from their corporate laptops. This is achieved through carefully crafted Ingress and Egress rules.

Ingress Rules allow API clients outside the perimeter to access resources inside it. For a factory environment, you typically configure ingress based on Access Levels defined in Access Context Manager.

For example, you can create an ingress rule that allows access to aiplatform.googleapis.com and storage.googleapis.com only if the request originates from:

- The specific IP ranges of your factory’s on-premises network (routed through a dedicated Cloud Interconnect).

- Corporate devices that pass BeyondCorp Enterprise device posture checks (e.g., ensuring the data scientist’s laptop is company-issued and fully patched).

Here is a conceptual example of an ingress policy block that allows a specific service account from an external factory data-ingestion project to write to Cloud Storage within your ML perimeter:

```yaml
ingressPolicies:
- ingressFrom:
    identities:
    - serviceAccount:[email protected]
    sources:
    - accessLevel: accessPolicies/123456789/accessLevels/factory_interconnect_ips
  ingressTo:
    operations:
    - serviceName: storage.googleapis.com
      methodSelectors:
      - method: "*"
    resources:
    - projects/your-secure-ml-project
```

Egress Rules, conversely, allow identities inside the perimeter to access resources outside of it. In a secure Vertex AI environment, egress should be heavily restricted. However, you might need an egress rule if your Vertex AI training pipeline (running inside the perimeter) needs to pull a specific, pre-approved public dataset from an external Google Cloud project, or if a newly trained predictive maintenance model needs to be pushed to a separate, isolated “Production Serving” project perimeter.
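To make the egress case concrete, the sketch below builds an egress policy in the same conceptual shape as the ingress example, using the `egressFrom` and `egressTo` fields of the Access Context Manager API. The service account email, target project number, and function name are hypothetical, and the wildcard method is used only to mirror the ingress example; in practice you would likely narrow it.

```python
def build_egress_policy(service_account_email, target_project_number, service_name, methods):
    """Build one egress policy letting a single identity reach one external project."""
    if not methods:
        raise ValueError("at least one method selector is required")
    return {
        "egressFrom": {
            # Restrict the rule to one named identity instead of any identity.
            "identities": [f"serviceAccount:{service_account_email}"],
        },
        "egressTo": {
            # The external project the identity may reach, by project number.
            "resources": [f"projects/{target_project_number}"],
            "operations": [
                {
                    "serviceName": service_name,
                    "methodSelectors": [{"method": method} for method in methods],
                }
            ],
        },
    }


# Hypothetical: a training service account publishing artifacts to a serving project.
egress_policy = build_egress_policy(
    "trainer@example-ml-project.iam.gserviceaccount.com",
    222222222222,
    "storage.googleapis.com",
    ["*"],
)
```

Keeping each rule bound to one identity, one service, and one target project makes the rule set easier to audit as it grows.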

By meticulously defining these directional rules, you create a Vertex AI environment that is highly collaborative for your engineering teams and seamlessly integrated with your physical factory networks, yet closed to unauthorized external access and data exfiltration.

Enforcing Least Privilege with GCP IAM

When dealing with proprietary factory data—such as manufacturing tolerances, IoT sensor telemetry, and production line metrics—security is paramount. In Google Cloud, Identity and Access Management (IAM) is your first line of defense. Enforcing the Principle of Least Privilege (PoLP) ensures that human users, automated pipelines, and Vertex AI services have exactly the permissions they need to perform their tasks, and absolutely nothing more. Relying on broad, primitive roles like Editor or even predefined roles like Vertex AI Administrator across your entire project introduces unnecessary risk. Instead, a robust security posture requires a granular, purpose-built IAM strategy tailored to your specific MLOps lifecycle.

Structuring Custom IAM Roles for AI Operations

Google Cloud provides excellent predefined roles, but in a highly regulated factory environment, these often bundle permissions that violate least privilege. For instance, a data scientist might need to trigger a model training job but shouldn’t have the authority to delete a deployed endpoint, modify the underlying VPC peering configurations, or access raw, unanonymized HR data that might reside in the same project.

To solve this, you must structure Custom IAM Roles mapped directly to your operational personas and automated workflows:

- The Factory Data Scientist: Create a custom role that includes aiplatform.models.create, aiplatform.trainingPipelines.create, and storage.objects.get. This allows them to read sanitized factory datasets from specific Cloud Storage buckets and initiate training, without granting destructive permissions like aiplatform.models.delete or aiplatform.endpoints.delete.

- The MLOps Pipeline: An automated CI/CD pipeline requires a different custom role, focusing on deployment permissions such as aiplatform.endpoints.deploy and aiplatform.models.upload, completely stripped of any permissions to alter the raw data lakes or BigQuery datasets.

When defining these custom roles, leverage the principle of separation of duties. By explicitly selecting only the necessary aiplatform.*, storage.*, and bigquery.* permissions, you drastically reduce the blast radius if an identity is ever compromised. Furthermore, you should regularly audit these custom roles using the IAM Recommender, which utilizes machine learning to suggest permission reductions based on actual historical usage patterns within your GCP environment.
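The two personas above could be expressed as custom role definitions along these lines. The sketch produces dictionaries with the `title`, `description`, `stage`, and `includedPermissions` fields used by IAM custom role definition files. The permission names are copied from the text and should be verified against the IAM permissions reference before use; the helper name and the rule that rejects any permission ending in `.delete` are illustrative assumptions, not an official check.

```python
# Permission names taken from the personas described above (verify before use).
DATA_SCIENTIST_PERMISSIONS = [
    "aiplatform.models.create",
    "aiplatform.trainingPipelines.create",
    "storage.objects.get",
]
MLOPS_PIPELINE_PERMISSIONS = [
    "aiplatform.endpoints.deploy",
    "aiplatform.models.upload",
]

# Illustrative guard: treat any *.delete permission as destructive.
DESTRUCTIVE_SUFFIXES = (".delete",)


def build_custom_role(title, description, permissions):
    """Return a custom role definition, refusing destructive permissions."""
    destructive = [p for p in permissions if p.endswith(DESTRUCTIVE_SUFFIXES)]
    if destructive:
        raise ValueError(f"destructive permissions not allowed: {destructive}")
    return {
        "title": title,
        "description": description,
        "stage": "GA",
        "includedPermissions": sorted(permissions),
    }


data_scientist_role = build_custom_role(
    "Factory Data Scientist",
    "Read sanitized datasets and start training jobs.",
    DATA_SCIENTIST_PERMISSIONS,
)
mlops_role = build_custom_role(
    "Factory MLOps Pipeline",
    "Upload models and deploy them to endpoints.",
    MLOPS_PIPELINE_PERMISSIONS,
)
```

Generating role definitions from code like this keeps them reviewable in version control, which supports the separation of duties described above.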

Securing Service Accounts and Resource Access

In Vertex AI, the identities executing your training jobs, batch predictions, and model deployments are Service Accounts (SAs). A common, yet dangerous, anti-pattern is relying on the Default Compute Engine Service Account, which is granted the broad Editor role on the project by default unless an organization policy disables that automatic grant.

For a secure factory AI environment, you must implement purpose-built Service Accounts for each distinct Vertex AI operation:

- Training Service Account: Dedicated solely to Vertex AI Custom Training jobs. It should only have read access to the specific Cloud Storage buckets containing training data and write access to the model artifact output bucket.

- Prediction Service Account: Used by Vertex AI Endpoints. It requires permissions to read the model artifacts and write inference logs to Cloud Logging, but zero access to the raw factory data lakes.

To further secure resource access, enforce IAM Conditions. You can restrict a service account’s access so it can only interact with specific resources—like a BigQuery dataset containing sensitive factory telemetry—if specific conditions are met, such as matching a resource name prefix or operating within a designated time window.
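As a minimal sketch of such a condition, the helper below builds a single IAM policy binding whose Common Expression Language (CEL) expression combines a resource name prefix with an expiry time. The bucket name, object prefix, service account, and helper name are hypothetical. The example uses a Cloud Storage object prefix because that resource name format is well documented; whether a given service supports `resource.name` conditions should be checked per service.

```python
def build_conditional_binding(role, member, resource_prefix, expires_at):
    """Build one IAM binding limited to a resource name prefix and an expiry time."""
    expression = (
        f'resource.name.startsWith("{resource_prefix}") && '
        f'request.time < timestamp("{expires_at}")'
    )
    return {
        "role": role,
        "members": [member],
        "condition": {
            "title": "scoped-factory-telemetry-access",
            "description": "Prefix-scoped, time-limited access to telemetry objects.",
            "expression": expression,
        },
    }


# Policies that contain conditional bindings must be written with policy version 3.
policy = {
    "version": 3,
    "bindings": [
        build_conditional_binding(
            "roles/storage.objectViewer",
            "serviceAccount:trainer@example-ml-project.iam.gserviceaccount.com",
            "projects/_/buckets/factory-telemetry/objects/line-a/",
            "2026-01-01T00:00:00Z",
        )
    ],
}
```

The time bound is useful for temporary investigations: access lapses on its own rather than relying on someone remembering to remove the binding.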

Finally, strictly control who can act as these service accounts. Grant the Service Account User role (roles/iam.serviceAccountUser) only to the specific developers or CI/CD pipelines that need to attach these SAs to Vertex AI jobs. For factory edge devices (like PLCs, SCADA systems, or edge gateways) that need to push data into this environment or trigger inference, utilize Workload Identity Federation rather than exporting long-lived service account JSON keys. This allows your on-premises factory systems to authenticate with GCP using short-lived tokens, effectively eliminating the risk of leaked static credentials on the factory floor.

Deploying the Private Vertex AI Workshop

With our foundational network architecture and identity policies firmly established, it is time to bring the machine learning environment to life. Deploying a “Private Vertex AI Workshop” means creating a secure, isolated enclave where data scientists can interact with highly sensitive factory telemetry, quality control images, and production logs without risking data exfiltration. In this phase, we transition from infrastructure to application, configuring the specific Vertex AI components required to build, train, and deploy models securely.

Provisioning Secure Notebooks and Training Pipelines

The core of any data scientist’s workflow in Google Cloud is Vertex AI Workbench. To handle proprietary factory data, we must provision these notebook instances with a strict “defense-in-depth” approach.

First, when deploying Vertex AI Workbench (whether the older user-managed notebooks or the current Workbench instances), you must explicitly disable public IP addresses. The notebooks should be deployed directly into your private VPC subnetwork. To allow these instances to interact with other Google Cloud services, such as pulling datasets from Cloud Storage or querying BigQuery, without traversing the public internet, you must enable Private Google Access on the subnetwork. Furthermore, to protect factory data at rest, ensure that the boot and data disks of these notebook instances are encrypted using Customer-Managed Encryption Keys (CMEK) via Cloud KMS, rather than relying solely on Google’s default encryption.

Once the data scientists have explored the data and developed their models, the next step is operationalizing these models using Vertex AI Training Pipelines. Standard training jobs run in Google-managed tenant projects, which can pose a security concern if they need to access private factory data. To solve this, we provision Private Worker Pools.

By setting up a Private Worker Pool, you establish a VPC Network Peering connection between your factory’s VPC and the Google-managed VPC hosting the training infrastructure. This allows your custom training jobs to securely access private datasets, container registries (Artifact Registry), and internal APIs using internal IP addresses. When defining your pipeline via the Vertex AI SDK, you simply specify the network parameter to point to your peered VPC, ensuring that the entire training lifecycle remains completely isolated from the public internet.
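The `network` value mentioned above has a specific format that is easy to get wrong: Vertex AI expects the full network name built from the project number, not the project ID. The sketch below assembles a custom job specification as a plain dictionary using the REST field names (`workerPoolSpecs`, `network`, `serviceAccount`) so it can run without the SDK installed. The image URI, network name, service account, and machine type are hypothetical placeholders.

```python
def peered_network_name(project_number, network):
    """Return the full network name Vertex AI expects for a peered VPC."""
    if not str(project_number).isdigit():
        # A project ID such as "my-project" is rejected; the number is required.
        raise ValueError("project_number must be the numeric project number")
    return f"projects/{project_number}/global/networks/{network}"


def build_custom_job_spec(image_uri, project_number, network, service_account,
                          machine_type="n1-standard-8"):
    """Build a custom job spec that runs on the peered VPC as a dedicated identity."""
    return {
        "workerPoolSpecs": [
            {
                "machineSpec": {"machineType": machine_type},
                "replicaCount": 1,
                "containerSpec": {"imageUri": image_uri},
            }
        ],
        # Peers the job with the private VPC instead of using public networking.
        "network": peered_network_name(project_number, network),
        # Purpose-built training identity rather than a default service account.
        "serviceAccount": service_account,
    }


job_spec = build_custom_job_spec(
    "us-docker.pkg.dev/example-ml-project/training/anomaly-trainer:latest",
    123456789012,
    "factory-vpc",
    "trainer@example-ml-project.iam.gserviceaccount.com",
)
```

When using the Python SDK instead, the same network string and service account are passed as the `network` and `service_account` arguments when the job is run.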

Validating Perimeter Security and Data Isolation

Deployment is only half the battle; rigorous validation is where we prove our security posture. Because we are dealing with critical factory data, we must empirically verify that our VPC Service Controls (VPC SC) and network policies are functioning exactly as intended.

To validate perimeter security, you should actively attempt to breach the boundaries you just built. Start by simulating an exfiltration attempt: log into a secure Vertex AI Workbench instance via Identity-Aware Proxy (IAP)—which allows secure SSH/TCP access without public IPs—and attempt to copy a sensitive factory dataset to an external Cloud Storage bucket that resides outside your VPC SC perimeter. If your perimeter is configured correctly, this action will be immediately blocked.

You can verify these blocks by querying Cloud Logging. Look for logs where the protoPayload.metadata.violationReason indicates a VPC Service Controls violation. This confirms that the API call was intercepted and denied at the perimeter level.
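A query for these entries might be assembled as follows. The sketch builds a Cloud Logging filter string that targets the policy-denied audit log and the VPC Service Controls metadata type. The log name and `@type` value reflect the author's understanding of the documented audit log format and should be confirmed against a real denial entry in your project; the function name and project ID are hypothetical.

```python
def vpc_sc_violation_filter(project_id, service_name=None):
    """Build a Cloud Logging filter for VPC Service Controls denials.

    Clauses on separate lines are implicitly combined with AND.
    """
    clauses = [
        f'logName="projects/{project_id}/logs/cloudaudit.googleapis.com%2Fpolicy"',
        'protoPayload.metadata.@type='
        '"type.googleapis.com/google.cloud.audit.VpcServiceControlAuditMetadata"',
    ]
    if service_name:
        # Optionally narrow the results to one restricted service.
        clauses.append(f'protoPayload.serviceName="{service_name}"')
    return "\n".join(clauses)


# Hypothetical project ID; paste the result into Logs Explorer or pass it to a client.
log_filter = vpc_sc_violation_filter("example-ml-project", "storage.googleapis.com")
```

Each matching entry should carry the violation reason in its metadata, which tells you which perimeter rule denied the exfiltration test.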

Next, validate data isolation from the outside in. Attempt to access the Vertex AI API or the secure Cloud Storage buckets from a machine outside your authorized corporate network or without the proper context-aware access credentials. Again, these requests should result in HTTP 403 Forbidden errors. Finally, review your firewall rules and route tables to ensure that no default internet gateways are inadvertently allowing egress traffic from your notebook subnets. By systematically testing both ingress and egress paths, you guarantee that your factory’s intellectual property remains strictly confined within the Private Vertex AI Workshop.

Next Steps for Scaling Your Secure AI Architecture

Now that we have established the foundational blueprint for a private Vertex AI environment, the journey is far from over. Manufacturing environments are highly dynamic—new IoT sensors come online, production lines evolve, and the volume of operational technology (OT) and telemetry data grows exponentially. Scaling this architecture requires a proactive approach to maintain the delicate balance between high-performance machine learning and zero-trust security.

To transition from a localized pilot to a global, multi-factory AI deployment, you must operationalize your security controls, automate your MLOps pipelines within secure boundaries, and establish a clear, compliant roadmap for enterprise-wide expansion.

Auditing Your Current Cloud Security Posture

You cannot scale what you haven’t secured, and you cannot secure what you haven’t measured. Before extending your Vertex AI footprint across additional manufacturing sites, conducting a comprehensive audit of your existing Google Cloud environment is non-negotiable. Factory data—ranging from proprietary CAD models and supply chain logistics to real-time SCADA telemetry—is highly sensitive and often subject to strict regulatory compliance.

A robust audit of your AI infrastructure should focus on the following critical areas:

- Service Perimeter Integrity: Utilize VPC Service Controls (VPC SC) troubleshooting tools to verify that your perimeters are tightly configured. Ensure there are no unauthorized egress paths from your Vertex AI Workbench instances or training pipelines that could lead to data exfiltration.

- Continuous Threat Detection: Leverage Security Command Center (SCC) Premium to continuously monitor your Google Cloud assets. SCC will help you identify misconfigurations, such as publicly exposed Cloud Storage buckets containing raw factory data or unencrypted Vertex AI model endpoints.

- IAM and Least Privilege: Conduct a rigorous review of your Identity and Access Management (IAM) policies. Use the IAM Recommender to identify and strip away over-provisioned roles from service accounts and human users interacting with your ML pipelines. Ensure that data scientists only have access to the specific datasets required for their current models.

- Data Protection and Governance: Confirm that Customer-Managed Encryption Keys (CMEK) via Cloud Key Management Service (KMS) are uniformly enforced across all Vertex AI datasets, models, and endpoints. Additionally, implement Cloud Data Loss Prevention (DLP) to automatically scan and mask any sensitive intellectual property or PII before it enters your model training phases.

By rigorously auditing these components, you ensure that your underlying infrastructure is resilient enough to support advanced, scaled AI workloads without introducing new attack vectors.

Booking a Discovery Call with Vo Tu Duc

Navigating the complexities of Google Cloud security, OT/IT network convergence, and enterprise-grade machine learning is rarely a solo endeavor. If you are ready to transition your factory’s AI initiatives from a secure proof-of-concept to a highly scalable production reality, expert guidance is your most valuable asset.

To ensure your architecture is built to industry best practices, you can book a one-on-one discovery call with Vo Tu Duc. As an expert in Google Cloud and Cloud Engineering, Vo Tu Duc specializes in architecting bespoke, high-security environments tailored for sensitive industrial workloads.

During this strategic discovery session, you will cover:

- Architecture Review: A high-level evaluation of your current data ingestion methods, network topology, and Vertex AI pipelines.

- Security Gap Analysis: Identification of potential security bottlenecks, compliance risks, or networking vulnerabilities within your current factory data flows.

- Custom Scaling Roadmap: Actionable insights on implementing advanced controls like Private Service Connect, automated MLOps CI/CD pipelines, and integrations for secure team collaboration.

Scaling your AI capabilities shouldn’t mean compromising on the security of your proprietary manufacturing data. Reach out to book your discovery call with Vo Tu Duc today, and take the next definitive step toward securing your factory’s AI-driven future.
