Mastering Chain of Thought Prompting for Google Workspace Agents

Step into the future of enterprise automation, where rigid rules are replaced by intelligent AI agents capable of real reasoning. Discover how integrating Large Language Models can empower your workspace to dynamically analyze emails, cross-reference calendars, and draft complex proposals on the fly.

Introduction to Reasoning in Workspace Automations

The integration of Large Language Models (LLMs) into Automatically create new folders in Google Drive, generate templates in new folders, fill out text automatically in new files, and save info in Google Sheets has fundamentally shifted how we approach enterprise Automated Job Creation in Jobber from Gmail. We are no longer limited to the rigid, rule-based triggers of traditional AI Powered Cover Letter Automation Engine or third-party integration platforms. Today, we are building AI agents—systems capable of reading an email thread in Gmail, extracting structured data, cross-referencing availability in Google Calendar, and dynamically generating a proposal in Google Docs.

However, transitioning from simple text generation to autonomous task execution requires a critical capability: reasoning. For an AI agent to reliably interact with the AC2F Streamline Your Google Drive Workflow ecosystem, it cannot simply guess the final output. It must be able to break down complex workflows, evaluate intermediate states, and make logical decisions based on the data it retrieves from Workspace APIs. Understanding how to engineer this reasoning process is the key to building robust, enterprise-grade automations.

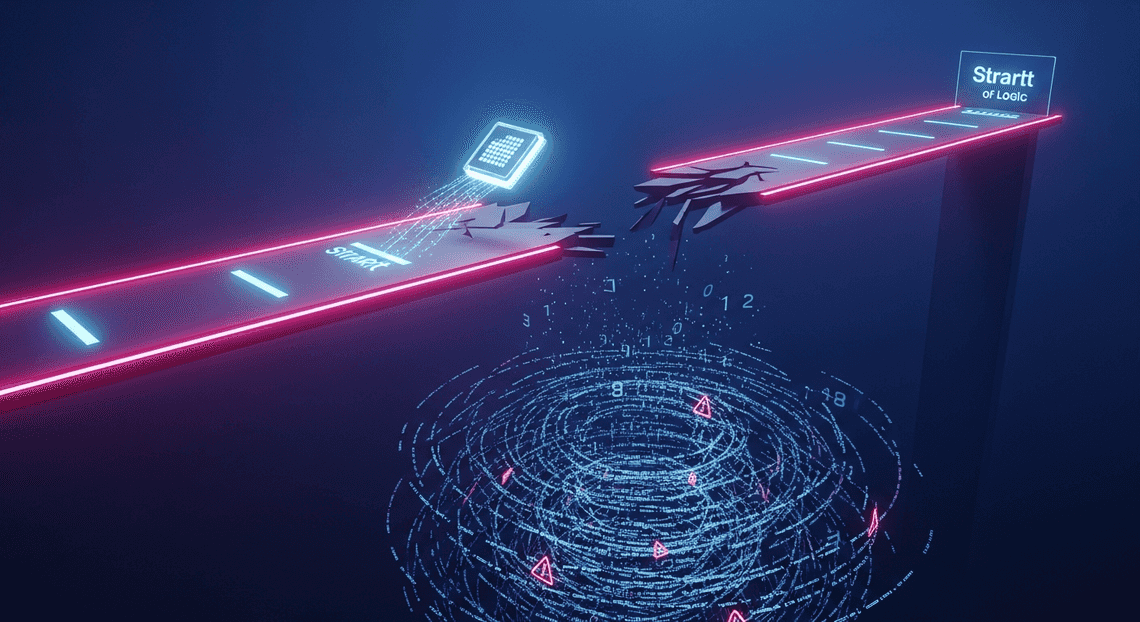

The Limitations of Standard Prompting

When developers first begin building AI-driven automations in Google Cloud—often leveraging Vertex AI to interact with Workspace data—they typically rely on standard, or “zero-shot,” prompting. A standard prompt asks the model to go directly from the input to the final output.

For example, a standard prompt might look like this:

“Read this customer email, check my Google Calendar for tomorrow, and draft a reply proposing a meeting time.”

While modern LLMs like Gemini are incredibly powerful, standard prompting falls short in complex automation scenarios for several reasons:

-

The “Leap of Logic” Fallacy: Standard prompts force the model to compute multiple hidden variables simultaneously. The model must parse the email intent, extract constraints, formulate a calendar query, and write the response all in one hidden computational pass. This often leads to hallucinations, such as the model suggesting a meeting time without actually verifying calendar availability.

-

Poor Error Recovery: If an API call to Google Drive or Sheets fails, or returns unexpected data, a standard prompt lacks the framework to pause, evaluate the error, and try an alternative approach. It simply crashes or outputs a flawed final response.

-

**Lack of Auditability: In enterprise environments, understanding why an automation took a specific action is just as important as the action itself. Standard prompting acts as a black box; if the agent drafts an inappropriate response, the developer has no visibility into the logical missteps that led to that outcome.

-

Context Window Dilution: When dealing with massive payloads—such as analyzing a 50-page Google Doc alongside a lengthy Gmail thread—standard prompts often cause the model to lose track of the core objective, leading to generic or inaccurate outputs.

Defining Chain of Thought for AI Agents

To overcome the fragility of standard prompting, Cloud Engineers turn to Chain of Thought (CoT) prompting. At its core, Chain of Thought is a technique that forces the LLM to articulate its intermediate reasoning steps before it generates a final answer or takes a final action. Instead of demanding an immediate solution, CoT asks the model to “think aloud.”

In the context of Automated Client Onboarding with Google Forms and Google Drive. agents, Chain of Thought acts as the cognitive glue between different Google APIs. It transforms the model from a simple text predictor into a methodical problem solver. When utilizing CoT, the agent’s internal process looks less like a single command and more like a structured internal monologue:

-

Thought: I need to propose a meeting time based on the customer’s email.

-

Thought: First, I must extract the customer’s timezone and preferred dates from the Gmail thread.

-

Action: [Extract Date/Time constraints]

-

Observation: The customer prefers tomorrow afternoon, EST.

-

Thought: Now, I need to check the user’s Google Calendar for tomorrow afternoon, converting EST to the user’s local timezone (PST).

-

Action: [Query Google Calendar API]

-

Observation: The user has a 30-minute opening at 1:00 PM PST (4:00 PM EST).

-

Thought: The time aligns with the constraints. I will now draft the email.

By explicitly defining this Chain of Thought, we drastically reduce the cognitive load on the model for any single step. If the Calendar API returns a conflict, the agent’s “Thought” process allows it to pivot and search for the next available slot rather than hallucinating a false opening. For developers building on Google Cloud, implementing CoT—often in tandem with frameworks like ReAct (Reasoning and Acting)—is the definitive standard for creating Workspace agents that are deterministic, reliable, and highly auditable.

Architectural Foundations of Chain of Thought

At its core, Chain of Thought (CoT) prompting is more than just a clever phrasing technique; it represents a fundamental shift in how we architect interactions with Large Language Models (LLMs) like Google’s Gemini. In a traditional zero-shot architecture, an LLM is treated as a direct function: you provide an input, and it attempts a single probabilistic leap to generate the final output. While sufficient for simple tasks, this architecture breaks down when applied to the multifaceted, context-heavy environment of Automated Discount Code Management System.

By integrating CoT, we introduce a sequential reasoning layer into the agent’s architecture. Instead of demanding an immediate final answer, the architecture forces the model to generate intermediate reasoning steps. For a Automated Email Journey with Google Sheets and Google Analytics agent—which often needs to orchestrate actions across Gmail, Google Drive, Docs, and Calendar—this foundation transforms the LLM from a basic text generator into a state-aware cognitive engine capable of executing complex, multi-stage workflows.

How Step by Step Logic Enhances Accuracy

The primary mechanism by which Chain of Thought enhances accuracy lies in token allocation and computational depth. When an LLM is forced to articulate its reasoning step-by-step, it effectively spends more computational “time” (represented by generated tokens) processing the logic before arriving at a conclusion.

In the context of Automated Google Slides Generation with Text Replacement, this drastically reduces hallucinations and logical leaps. Consider an agent tasked with reading an email thread, summarizing the action items, and scheduling a follow-up meeting. Without step-by-step logic, the model might conflate dates mentioned in the email or assign tasks to the wrong stakeholders.

By enforcing a CoT structure, the agent’s internal logic is serialized:

-

Extraction: Identify all individuals in the email thread.

-

Analysis: Isolate the specific commitments made by each individual.

-

Temporal Grounding: Extract proposed dates and cross-reference them with standard formatting (e.g., converting “next Friday” to a specific ISO date).

-

Synthesis: Formulate the final Calendar API payload and the summary text.

This deterministic stepping-stone approach grounds the model’s output in the provided context. Furthermore, it provides observability; if the agent schedules a meeting on the wrong day, developers can inspect the generated “thought process” to pinpoint exactly where the logical failure occurred, making debugging highly efficient.

Integrating the Gemini API with Apps Script

To bring this architectural concept to life within Automated Order Processing Wordpress to Gmail to Google Sheets to Jobber, we must bridge the cognitive power of the Gemini API with the orchestration capabilities of Genesis Engine AI Powered Content to Video Production Pipeline. Apps Script serves as the perfect serverless execution environment, allowing us to seamlessly authenticate and interact with Workspace services while offloading the heavy reasoning to Gemini.

Integrating Gemini requires making HTTP POST requests to the Gemini endpoint using Apps Script’s native UrlFetchApp service. The key to implementing CoT here is structuring the payload so that the prompt explicitly demands the step-by-step format before returning the final actionable data (like a JSON object meant for a Calendar insertion).

Here is a practical example of how to architect this integration within Apps Script:

function processWorkspaceTaskWithCoT(emailContent) {

// Retrieve the API key securely stored in Apps Script Properties

const apiKey = PropertiesService.getScriptProperties().getProperty('GEMINI_API_KEY');

const endpoint = `https://generativelanguage.googleapis.com/v1beta/models/gemini-1.5-pro:generateContent?key=${apiKey}`;

// The Chain of Thought Prompt

const prompt = `

You are an intelligent <a href="https://votuduc.com/Automated-Payment-Transaction-Ledger-with-Google-Sheets-and-PayPal-p291971">Automated Payment Transaction Ledger with Google Sheets and PayPal</a> agent. Process the following email content.

You MUST use a step-by-step Chain of Thought approach before providing the final answer.

Step 1: Identify the primary sender and the main objective of the email.

Step 2: Extract any specific dates, times, or deadlines mentioned.

Step 3: Determine the necessary <a href="https://votuduc.com/Google-Docs-to-Web-p230029">Google Docs to Web</a> action (e.g., Create Calendar Event, Draft Gmail Reply).

Step 4: Based on the previous steps, generate a strict JSON payload containing the parameters needed to execute the action.

Email Content:

"${emailContent}"

Output your response starting with "Thought Process:" followed by the steps, and end with "Final JSON:".

`;

const payload = {

"contents": [{

"parts": [{"text": prompt}]

}],

"generationConfig": {

"temperature": 0.2 // Lower temperature for more deterministic, logical outputs

}

};

const options = {

"method": "post",

"contentType": "application/json",

"payload": JSON.stringify(payload),

"muteHttpExceptions": true

};

try {

const response = UrlFetchApp.fetch(endpoint, options);

const jsonResponse = JSON.parse(response.getContentText());

// Extracting the text generated by Gemini

const generatedText = jsonResponse.candidates[0].content.parts[0].text;

// Further Apps Script logic would go here to parse out the "Final JSON"

// and execute the corresponding Workspace API calls.

return generatedText;

} catch (error) {

console.error("Error communicating with Gemini API:", error);

return null;

}

}

In this integration, Apps Script handles the secure credential management and network requests, while the prompt design forces Gemini into a CoT framework. By setting a lower temperature in the generationConfig, we further optimize the model for analytical tasks, ensuring that the step-by-step logic remains focused, deterministic, and highly accurate for Workspace automation.

Structuring System Prompts for Complex Logic

When building autonomous agents for SocialSheet Streamline Your Social Media Posting 123, you are moving beyond simple conversational AI and entering the realm of deterministic task orchestration. An agent tasked with cross-referencing a Google Drive document, summarizing a Gmail thread, and subsequently scheduling a Google Meet via the Calendar API requires a highly structured system prompt. Complex logic demands an architectural blueprint—a prompt structure that explicitly defines the agent’s persona, available tools, and the exact cognitive steps it must take to process multi-modal Workspace data.

To achieve high reliability and prevent hallucinated API calls, cloud engineers must treat system prompts as code. This involves establishing strict boundaries for dynamic data and enforcing a rigorous reasoning framework before the model is allowed to execute any external actions.

Designing the Prompt Injection Pattern

In the context of agent architecture, the “Prompt Injection Pattern” refers to the systematic method of injecting dynamic Workspace context (like email bodies, document text, or calendar availability) and reasoning frameworks into the base system prompt without degrading the model’s instruction-following capabilities.

When a Gemini model processes an unformatted, 50-message Gmail thread alongside its core instructions, the risk of instruction drift is high. To mitigate this, your prompt injection pattern must utilize strict delimiters—typically XML tags or Markdown blocks—to separate the agent’s core logic from the injected Workspace data.

A robust prompt injection pattern for a Workspace agent typically follows this structure:

-

Core Directives: The immutable rules of the agent (e.g., “You are a SocialSheet Streamline Your Social Media Posting scheduling assistant”).

-

Tool Definitions: Explicit descriptions of available APIs (e.g.,

search_drive,create_calendar_event). -

Dynamic Context Windows: Safely delimited areas where real-time Workspace data is injected.

-

The CoT Framework: The step-by-step reasoning template the model must follow.

Here is an example of how to structure this pattern using XML delimiters:

<system_directives>

You are an intelligent <a href="https://votuduc.com/Speech-to-Text-Transcription-Tool-with-Google-Workspace-p133052">Speech-to-Text Transcription Tool with Google Workspace</a> agent. Your goal is to manage the user's schedule and communications. You have access to the Gmail and Google Calendar APIs.

</system_directives>

<workspace_context>

<current_time>2023-10-27T09:00:00Z</current_time>

<injected_email_thread>

\{\{DYNAMIC_GMAIL_CONTENT\}\}

</injected_email_thread>

</workspace_context>

<execution_rules>

1. Always evaluate the <injected_email_thread> for actionable meeting requests.

2. You must follow the Chain of Thought process defined below before invoking any API.

</execution_rules>

By encapsulating the \{\{DYNAMIC_GMAIL_CONTENT\}\} within specific tags, you create a semantic firewall. The model understands that the text inside those tags is data to be processed, not instructions to be followed, drastically improving the reliability of complex logical operations.

Forcing Internal Reasoning Before API Output

The most critical failure point in Workspace agents occurs when the LLM attempts to generate an API payload (like a JSON object for the Google Calendar API) before it has fully processed the context. For example, an agent might try to schedule a meeting without checking for timezone discrepancies or verifying attendee email addresses from the Gmail thread.

To master Chain of Thought prompting, you must explicitly force the model to engage in internal reasoning before it outputs any actionable API command. This is achieved by requiring the model to utilize a <scratchpad> or <thinking> block.

By instructing the model to write out its thought process, you are effectively forcing it to compute the intermediate steps required for complex Workspace tasks.

Consider the following instruction block added to your system prompt:

Before you output a JSON payload to call a Google Workspace API, you MUST outline your logic inside a `<scratchpad>` block.

Inside the `<scratchpad>`, you must:

1. Identify the core objective requested by the user.

2. Extract all necessary entities (email addresses, dates, times, Drive file IDs).

3. Identify missing information and determine which API tool is needed to retrieve it.

4. Formulate the exact parameters needed for the final API call.

Only after closing the `</scratchpad>` tag may you output the final JSON tool call.

When this logic is applied, the agent’s response transforms from a risky, zero-shot API guess into a verifiable, deterministic process:

<scratchpad>

1. Objective: Schedule a follow-up meeting based on the email thread.

2. Entities extracted:

- Attendees: [email protected], [email protected].

- Time mentioned: "Next Tuesday at 2 PM EST".

3. Conversions: "Next Tuesday" is Nov 2nd. 2 PM EST is 19:00 UTC.

4. Action required: I need to call the `create_calendar_event` tool with the converted UTC times and the extracted attendee emails.

</scratchpad>

{

"tool": "create_calendar_event",

"parameters": {

"summary": "Follow-up Sync",

"start_time": "2023-11-02T19:00:00Z",

"attendees": ["[email protected]", "[email protected]"]

}

}

Forcing this internal reasoning ensures that the Gemini model leverages its full contextual window to resolve ambiguities—such as relative dates or implicit permissions—resulting in highly accurate, production-ready Google Workspace API executions.

Building the Apps Script Implementation

Now that we understand the theoretical mechanics of Chain of Thought (CoT) prompting, it is time to bring our Google Workspace agent to life. Google Apps Script (GAS) serves as the perfect connective tissue, bridging the gap between everyday Workspace applications—like Gmail, Docs, and Drive—and Google’s powerful generative AI models. In this section, we will engineer a robust Apps Script backend capable of communicating with the Gemini API, dissecting complex CoT responses, and executing decisions safely within your Workspace environment.

Setting Up the Gemini API Connection

To empower our Workspace agent with Gemini’s reasoning capabilities, we first need to establish a secure and efficient connection. Whether you are routing through Google AI Studio for rapid prototyping or leveraging Vertex AI for enterprise-grade IAM controls, the integration relies on the UrlFetchApp service native to Apps Script.

As a best practice in Cloud Engineering, never hardcode your API keys. Instead, store them securely using the Apps Script PropertiesService. Here is how you construct the HTTP request to pass your CoT prompts to the Gemini model:

function callGeminiAPI(prompt) {

const scriptProperties = PropertiesService.getScriptProperties();

const apiKey = scriptProperties.getProperty('GEMINI_API_KEY');

// Using the Gemini 1.5 Pro model for advanced reasoning

const endpoint = `https://generativelanguage.googleapis.com/v1beta/models/gemini-1.5-pro:generateContent?key=${apiKey}`;

const payload = {

"contents": [{

"parts": [{"text": prompt}]

}],

"generationConfig": {

"temperature": 0.2, // Low temperature for more deterministic reasoning

"response_mime_type": "application/json" // Enforcing JSON output for easier parsing

}

};

const options = {

'method': 'post',

'contentType': 'application/json',

'payload': JSON.stringify(payload),

'muteHttpExceptions': true

};

const response = UrlFetchApp.fetch(endpoint, options);

return response;

}

By setting the response_mime_type to application/json and keeping the temperature low, we ensure the model focuses on logical deduction rather than creative deviation, which is critical for an agent executing tasks in a corporate Workspace environment.

Parsing the Chain of Thought Payload

The true power of a CoT prompt lies in its output structure. Unlike standard prompts that return a single conversational string, a CoT-instructed model returns a structured payload containing its step-by-step internal reasoning alongside its final execution command.

Assuming you have instructed the model to output a specific JSON schema (e.g., containing thought_process and workspace_action keys), you need a reliable way to extract this data. Because LLMs occasionally wrap JSON in markdown code blocks, your parsing logic must be resilient enough to strip these out before evaluation.

function parseCoTPayload(apiResponse) {

const responseCode = apiResponse.getResponseCode();

const responseBody = apiResponse.getContentText();

if (responseCode !== 200) {

throw new Error(`API Error ${responseCode}: ${responseBody}`);

}

const jsonResponse = JSON.parse(responseBody);

let rawText = jsonResponse.candidates[0].content.parts[0].text;

// Strip markdown formatting if the model wraps the JSON

rawText = rawText.replace(/^```json\n/, '').replace(/\n```$/, '');

try {

const agentDecision = JSON.parse(rawText);

// Log the reasoning for auditability and debugging

console.log("Agent Reasoning: ", agentDecision.thought_process);

return agentDecision;

} catch (e) {

throw new Error("Failed to parse the CoT JSON structure: " + e.message);

}

}

By isolating the thought_process, you gain full observability into why the agent chose a specific action. This is invaluable for debugging complex workflows, such as an agent deciding whether an incoming email requires a calendar invite or a Google Drive folder creation.

Handling Edge Cases in Decision Making

When building autonomous agents for Google Workspace, you must engineer for unpredictability. Even with rigorous CoT prompting, the LLM might occasionally hallucinate an unsupported API action, return malformed JSON, or get stuck in a logic loop. Handling these edge cases gracefully prevents your agent from wreaking havoc on a user’s inbox or Drive.

Robust edge-case handling requires a combination of try-catch blocks, schema validation, and safe fallback mechanisms. Before executing any Workspace action, the script must verify that the requested action is both valid and permitted.

function executeAgentAction(agentDecision) {

const allowedActions = ['CREATE_DOC', 'DRAFT_EMAIL', 'ADD_CALENDAR_EVENT', 'NO_ACTION'];

try {

// 1. Validate the action exists in our allowed list

if (!allowedActions.includes(agentDecision.workspace_action.type)) {

console.warn(`Hallucinated action detected: ${agentDecision.workspace_action.type}. Defaulting to NO_ACTION.`);

agentDecision.workspace_action.type = 'NO_ACTION';

}

// 2. Execute the validated action

switch (agentDecision.workspace_action.type) {

case 'DRAFT_EMAIL':

if (!agentDecision.workspace_action.recipient || !agentDecision.workspace_action.body) {

throw new Error("Missing required fields for DRAFT_EMAIL");

}

GmailApp.createDraft(

agentDecision.workspace_action.recipient,

agentDecision.workspace_action.subject,

agentDecision.workspace_action.body

);

console.log("Successfully drafted email.");

break;

case 'CREATE_DOC':

// Implementation for Docs API

break;

case 'NO_ACTION':

console.log("Agent determined no action was necessary.");

break;

}

} catch (error) {

// 3. Fallback mechanism: Log to Stackdriver and notify admin

console.error(`Agent Execution Failed: ${error.message}`);

console.error(`Failed State Reasoning: ${agentDecision.thought_process}`);

// Optional: Send a fallback email to the user indicating the agent encountered an issue

GmailApp.sendEmail(

Session.getEffectiveUser().getEmail(),

"Workspace Agent Alert: Manual Intervention Required",

`The agent failed to execute a task. \n\nError: ${error.message}\nAgent's last thought: ${agentDecision.thought_process}`

);

}

}

By implementing strict validation against an allowedActions array and wrapping the execution in a try-catch block, we create a “sandbox” for the agent. If the Chain of Thought process leads to a flawed conclusion, the system gracefully degrades, logging the exact reasoning that led to the failure. This approach transforms unpredictable AI behavior into manageable, auditable Cloud Engineering workflows.

Real World Application and Performance Scaling

Transitioning a Google Workspace agent from a localized sandbox to a production environment requires more than just a well-crafted prompt. When your agent is interacting with live Gmail inboxes, orchestrating complex Google Calendar schedules, or synthesizing data across terabytes of Google Drive documents, the stakes are significantly higher. In these real-world scenarios, Chain of Thought (CoT) prompting must be rigorously managed to ensure that the agent remains both highly accurate and performant under load. Scaling these agents effectively means striking the perfect balance between deep, multi-step reasoning and the hard constraints of cloud infrastructure.

Benchmarking Reasoning Accuracy

How do you empirically prove that your CoT prompt is actually improving your Workspace agent’s performance? In production, “it looks right” is not a scalable metric. Benchmarking reasoning accuracy requires a systematic approach to evaluating the intermediate steps your agent takes before executing a Google Workspace API call.

To build a robust benchmarking pipeline, you should establish a “Golden Dataset” of complex Workspace tasks. For example, a test case might involve an agent tasked with: “Find the latest project proposal in Drive, cross-reference the budget figures with the spreadsheet attached to yesterday’s email from the finance director, and draft a summary email.”

When benchmarking CoT against this dataset, consider the following strategies:

-

Vertex AI AutoSxS (Side-by-Side): Leverage Google Cloud’s LLM-as-a-judge capabilities. You can pit a standard zero-shot prompt against your CoT prompt and have a larger model (like Gemini 1.5 Pro) evaluate which response followed the logical constraints of the Workspace environment more accurately.

-

Step-Level Validation: Don’t just measure the final output. Parse the generated “thought process” (the

<thinking>orStep 1, Step 2blocks). If the agent correctly identifies the Drive file ID but hallucinates the Gmail message ID during its reasoning phase, the accuracy score must reflect that point of failure. -

Deterministic API Execution Tracking: In a Workspace context, reasoning accuracy directly correlates with API success rates. Track the HTTP error rates (e.g., 400 Bad Request, 404 Not Found) of the Google Workspace API calls generated by the agent. A drop in error rates is a strong quantitative indicator that your CoT prompting is successfully guiding the model to format valid API payloads.

Optimizing Token Usage and Latency

The inherent trade-off of Chain of Thought prompting is overhead. By forcing the model to “think out loud,” you are intentionally increasing the number of output tokens generated. In a high-traffic Google Workspace Add-on or a busy Google Chat app, this translates directly to higher API costs and increased Time to First Action (TTFA) or Time to First Token (TTFT).

To scale CoT without ballooning your Google Cloud billing or frustrating users with slow response times, you must implement aggressive optimization techniques:

-

Dynamic CoT Routing: Not every query requires deep reasoning. Implement a lightweight intent-classification layer. If a user asks, “What’s my next meeting?”, route this to a standard, low-latency prompt. Reserve your heavy CoT prompts for complex, multi-hop queries like, “Reschedule all my 1:1s this afternoon to tomorrow morning and notify the attendees.”

-

Leveraging Gemini Context Caching: When your agent needs to reason over massive context windows—such as analyzing a 50-message Gmail thread or a 200-page Google Doc—the input token processing can cause significant latency. By utilizing Vertex AI’s Context Caching feature, you can cache the underlying Workspace data. The model then only needs to process the CoT instructions and the user’s immediate query, drastically reducing both latency and input costs.

-

Prompt Distillation and Few-Shot Pruning: Over time, analyze the reasoning paths your model takes. You will often find that a verbose 5-step CoT example can be condensed into a tighter 3-step example without losing accuracy. Pruning your few-shot examples reduces the input payload size, saving tokens on every single execution.

-

Streaming and UI/UX Mitigation: From a user experience perspective, latency can be masked. If your agent is operating within Google Chat or a custom web interface, always stream the model’s response. You can even choose to stream the “reasoning” steps to the UI (e.g., “Searching Drive…”, “Reading email thread…”, “Drafting response…”) so the user perceives active progress while the model is consuming output tokens for its CoT process.

Next Steps in AI Transformation

Mastering Chain of Thought (CoT) prompting is a critical milestone, but it is merely the first step in a much broader AI transformation journey. Once you understand how to guide large language models through complex reasoning pathways, the next logical phase is to operationalize this capability across your entire organization. True AI transformation happens when these intelligent, reasoning agents are no longer confined to a chat interface but are seamlessly woven into the fabric of your daily operations.

To achieve this, organizations must bridge the gap between advanced Prompt Engineering for Reliable Autonomous Workspace Agents and robust cloud architecture. This involves leveraging the deep synergy between Google Workspace and Google Cloud. Imagine moving beyond a manual CoT prompt in Gemini to deploying automated, event-driven workflows: a background process that monitors a shared Gmail inbox, utilizes a CoT-powered Vertex AI endpoint to analyze complex customer inquiries, breaks down the required resolution steps, and automatically generates a comprehensive briefing document in Google Docs while updating a tracking pipeline in Google Sheets.

Scaling these solutions requires a shift from isolated experimentation to systematic cloud engineering. It demands a solid understanding of API integrations, identity and access management (IAM) within Google Cloud, and the deployment of serverless functions to orchestrate these intelligent Workspace agents securely and efficiently. The future belongs to those who can transform advanced cognitive prompts into reliable, enterprise-grade automated systems.

Join the Automation Workshop

Theory and prompt design will only take you so far; the real magic happens when you start writing code and connecting systems. If you are ready to transition from conceptualizing intelligent workflows to actually building them, I invite you to join our upcoming Automation Workshop.

In this intensive, hands-on session, we will take the Chain of Thought principles we’ve explored and apply them directly within a live Google Workspace and Google Cloud environment. Designed for forward-thinking IT admins, developers, and cloud engineers, this workshop will provide you with the practical skills needed to architect your own custom AI agents.

During the workshop, we will cover:

-

Architecting the Agent: Setting up a secure Google Cloud Project and configuring the Gemini API (Vertex AI) for enterprise use.

-

Programmatic Prompting: Translating complex CoT frameworks into dynamic, code-generated prompts using Google Apps Script and JSON-to-Video Automated Rendering Engine.

-

Cross-App Orchestration: Building a fully functional agent that triggers from a Google Form submission, reasons through the data using CoT, and executes actions across Google Drive, Docs, and Gmail.

-

Error Handling & Guardrails: Implementing best practices for validating LLM outputs and ensuring your automated agents act reliably within organizational boundaries.

This is your opportunity to look under the hood, ask highly technical questions, and leave with a working prototype of a CoT-powered Google Workspace agent. Space is limited to ensure a highly interactive environment, so secure your spot today and take the definitive next step in your organization’s AI transformation.

Stop Doing Manual Work. Scale with AI.

Want to turn these blog concepts into production-ready reality for your team?

Table Of Contents

Portfolios

Related Posts

Quick Links

Legal Stuff