Automating Terraform Infrastructure Generation with Google Sheets and Gemini AI

While Infrastructure as Code revolutionized cloud deployments, manually writing every line of Terraform is quickly becoming a major bottleneck for growing environments. Discover how to rethink the provisioning lifecycle to seamlessly bridge the gap between high-level architectural intent and automated execution.

Rethinking Infrastructure Provisioning

The shift to Infrastructure as Code (IaC) was arguably the most significant leap forward in modern cloud engineering. Tools like Terraform allowed us to move away from fragile, click-ops deployments in the Google Cloud Console toward version-controlled, repeatable, and declarative infrastructure. However, as cloud environments grow in complexity, the way we generate and manage this code is ripe for its own evolution. We are reaching a point where writing every line of HashiCorp Configuration Language (HCL) by hand is no longer the most efficient use of a cloud engineer’s time. To truly scale cloud operations, we must rethink the provisioning lifecycle and bridge the gap between high-level architectural intent and low-level code execution.

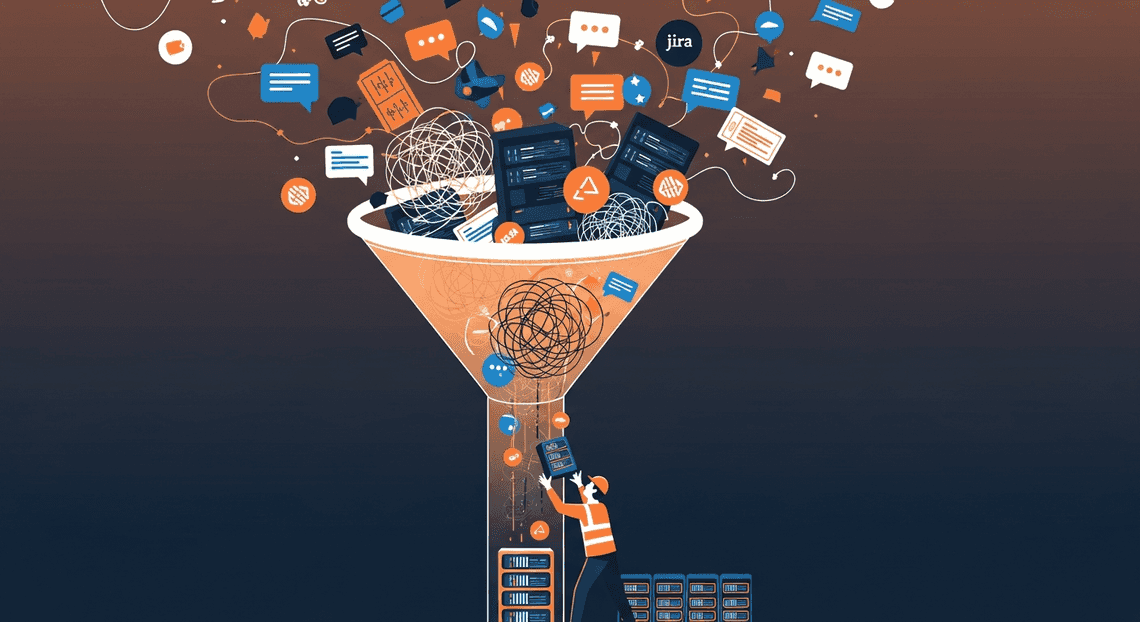

The Bottleneck of Manual Configuration

Despite the power of Terraform, the day-to-day reality for many cloud engineering teams involves a significant amount of friction. When a development team needs a new environment—perhaps a Google Kubernetes Engine (GKE) cluster, a few Cloud Storage buckets, and a Cloud SQL instance—the request usually arrives as a Jira ticket or a Slack message.

From there, the manual configuration bottleneck begins. Cloud engineers must translate these human-readable requirements into precise HCL. This involves:

- Writing repetitive boilerplate code.
- Scouring Terraform registry documentation for the latest Google Cloud provider syntax.
- Manually mapping variables, outputs, and IAM bindings.
- Cross-referencing organizational naming conventions and tagging strategies.

This translation layer is inherently flawed. It is time-consuming, highly susceptible to copy-paste errors, and creates a heavy dependency on a small pool of specialized engineers. It also creates a disconnect between the stakeholders who request the infrastructure (who often plan and track resources in tabular formats) and the engineers who build it. The cognitive load of maintaining thousands of lines of Terraform configuration, and the state behind it, slows down deployment velocity and turns infrastructure provisioning from an enabler into an organizational roadblock.

Introducing Infrastructure as Spreadsheet

To eliminate this bottleneck, we need to meet stakeholders where they already work while maintaining the rigorous standards of IaC. Enter a novel concept: Infrastructure as Spreadsheet.

By leveraging Google Workspace, specifically Google Sheets, we can provide a universally understood, highly collaborative, and structured front-end for infrastructure requests. Everyone from project managers to data scientists understands how to fill out a spreadsheet row that specifies a resource type, a region (e.g., us-central1), and a required capacity.

However, the true paradigm shift happens when we pair this accessible interface with the reasoning capabilities of Gemini AI. Instead of building rigid, brittle parsing scripts that map CSV columns onto Terraform modules, we can use Gemini as an intelligent translation engine. Gemini can ingest the structured data from Google Sheets, interpret the intent behind each infrastructure request, and automatically generate Terraform code tailored for Google Cloud. That code is then ready to enter your normal review and CI process.

This approach democratizes infrastructure provisioning. It transforms Google Sheets into a dynamic intake portal and uses generative AI to instantly output the required HCL. By abstracting away the syntax without losing the declarative power of Terraform, cloud engineers are freed from writing boilerplate and can refocus their efforts on security, architecture, and governance.

Architecting the Sheets-to-Terraform Pipeline

To turn a simple spreadsheet into a powerful infrastructure-as-code (IaC) engine, we need a robust, event-driven architecture. The pipeline bridges human intent, captured in Google Sheets, and machine execution, handled by Terraform. By chaining together Google Workspace APIs, serverless compute, and large language models, we create a workflow that is both accessible to product teams and compliant with cloud engineering standards. Let’s break down the core components of this architecture.

Connecting Google Workspace to Cloud Deployments

The journey begins in Google Sheets, which serves as our user-friendly frontend. Instead of expecting developers or product managers to write HashiCorp Configuration Language (HCL) from scratch, we allow them to input their infrastructure requirements—such as region, environment type, and resource specifications (e.g., “Standard GKE cluster with a dedicated Cloud SQL Postgres instance”)—directly into a structured spreadsheet.

To connect this Workspace environment to our Google Cloud backend, we use Google Apps Script. Within the Sheet, we can configure a custom menu, a time-driven trigger, or an installable onEdit trigger that captures the row data as soon as a user marks an infrastructure request as “Ready for Provisioning.” A simple onEdit trigger is not enough here, because simple triggers cannot call services that require authorization, such as UrlFetchApp.

Apps Script acts as the initial orchestrator. It formats the row data into a structured JSON payload and securely transmits it to a Google Cloud Function or Cloud Run endpoint. Security is paramount here; the Apps Script project is bound to a Google Cloud Project, utilizing an IAM Service Account with tightly scoped permissions to invoke the serverless endpoint via OAuth 2.0. This serverless layer serves as the crucial middleware that validates the incoming request, handles state management, and prepares the data for the AI engine.
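The following is a minimal sketch of the row-to-payload step. It is written as plain JavaScript so it runs in both Apps Script and Node. The `Status` column name, the `Ready for Provisioning` value, and the `requestId` scheme are assumptions for illustration. In the Sheet, an installable onEdit handler would call something like this with the header row and the edited row, and then POST the result to the serverless endpoint with UrlFetchApp.

```javascript
// Hypothetical status value that marks a row as ready to hand off.
const READY_STATUS = "Ready for Provisioning";

/**
 * Converts one spreadsheet row into the JSON payload sent to the
 * serverless middleware. Returns null when the row is not ready.
 * headers:   column names from row 1
 * row:       cell values for the edited row
 * rowNumber: 1-based sheet row, kept so results can be written back later
 */
function buildRequestPayload(headers, row, rowNumber) {
  const record = {};
  headers.forEach((header, i) => {
    // Skip blank header cells so stray columns do not produce "" keys.
    const name = String(header).trim();
    if (name !== "") {
      record[name] = row[i];
    }
  });

  if (record.Status !== READY_STATUS) {
    return null; // Ignore edits to rows that are not ready yet.
  }

  return {
    requestId: `row-${rowNumber}`, // hypothetical ID scheme
    submittedAt: new Date().toISOString(),
    resource: record,
  };
}
```

Keeping the trigger handler this small leaves validation and state management to the middleware, which matches the split of responsibilities described above.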

Leveraging Gemini AI for Code Generation

Once the serverless middleware receives the structured infrastructure request, it hands the baton to the brain of our pipeline: Gemini AI via the Vertex AI API. This is where plain-English business requirements are translated into deployable infrastructure.

The Cloud Function constructs a highly specific prompt that combines the user’s input from the Google Sheet with strict system instructions. As cloud engineers, we know that AI-generated code can be unpredictable without guardrails. Therefore, the system prompt must enforce Terraform best practices. We instruct Gemini to:

- Use specific (pinned) versions of the hashicorp/google and hashicorp/google-beta providers.
- Implement modular design patterns, separating configurations logically into main.tf, variables.tf, and outputs.tf.
- Apply mandatory organizational tagging, secure networking defaults (like Private Google Access), and least-privilege IAM bindings.
- Return strictly valid HCL without conversational filler or markdown code blocks.
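The second and fourth instructions pull in different directions, because a single stream of raw HCL cannot carry three separate files. One way to reconcile them is to ask Gemini for a JSON object whose keys are file names and whose values are HCL strings. The Gemini API can be asked for JSON output by setting `generationConfig.responseMimeType` to `application/json`. You would then parse and check that object before committing anything. This is an assumption layered on the design above, not part of it. The function name is hypothetical, and the required file list mirrors the main.tf/variables.tf/outputs.tf split.

```javascript
// Files the system prompt asks Gemini to produce (mirrors the split above).
const REQUIRED_FILES = ["main.tf", "variables.tf", "outputs.tf"];

/**
 * Parses a model response expected to be a JSON object such as
 * {"main.tf": "...", "variables.tf": "...", "outputs.tf": "..."}.
 * Throws if the JSON is malformed, a required file is missing or empty,
 * or a key is not a plain "<name>.tf" file name.
 */
function parseGeneratedFiles(responseText) {
  let files;
  try {
    files = JSON.parse(responseText);
  } catch (err) {
    throw new Error(`Model response is not valid JSON: ${err.message}`);
  }
  if (files === null || typeof files !== "object" || Array.isArray(files)) {
    throw new Error("Model response must be a JSON object of file names to HCL.");
  }

  for (const name of REQUIRED_FILES) {
    if (typeof files[name] !== "string" || files[name].trim() === "") {
      throw new Error(`Missing or empty file: ${name}`);
    }
  }

  // Only accept simple *.tf names so the model cannot write arbitrary paths.
  for (const name of Object.keys(files)) {
    if (!/^[A-Za-z0-9_-]+\.tf$/.test(name)) {
      throw new Error(`Unexpected file name: ${name}`);
    }
  }
  return files;
}
```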

Gemini processes this context and synthesizes the exact Terraform configurations required to build the requested architecture. Once the Cloud Function receives the generated HCL payload from Vertex AI, it programmatically commits the code to a version control system (such as Cloud Source Repositories, GitHub, or GitLab) via their respective REST APIs. This commit acts as the final trigger, kicking off a standard GitOps CI/CD pipeline—such as Google Cloud Build—to execute terraform plan and terraform apply, ultimately bringing the infrastructure to life.

Designing the Master Configuration Sheet

The success of any AI-driven automation hinges on the quality and predictability of its input. In this architecture, Google Sheets acts as the primary user interface: a low-barrier entry point where developers, architects, or even product managers can declare their infrastructure needs without writing a single line of HashiCorp Configuration Language (HCL). However, designing this Master Configuration Sheet requires a delicate balance. It must remain intuitive for human operators while keeping strict structural integrity, so that Gemini can parse the data, understand the context, and generate correct Terraform code.

Structuring Resource Requirements for AI Parsing

When bridging tabular data with Large Language Models (LLMs) like Gemini, ambiguity is your biggest enemy. To ensure Gemini accurately translates spreadsheet rows into Google Cloud resources (like Compute Engine instances, Cloud SQL databases, or GKE clusters), the sheet must be designed with machine readability in mind.

Here are the core principles for structuring your resource requirements:

- Flat Data Structures: Avoid merged cells, nested tables, or complex formatting (like color-coding to denote status). When Google Apps Script extracts this data to feed into the Gemini API, it will typically do so as a JSON array or CSV string. Merged cells produce empty values, because only the top-left cell of a merged range holds the value, and this breaks the parsing logic. Keep the data strictly flat and tabular.
- Explicit Column Headers: Your headers serve as the foundational schema for Gemini’s prompt. Use clear, explicit names such as Resource_Type, Resource_Name, GCP_Region, Machine_Tier, and VPC_Network.
- Data Validation for Core Fields: Use Google Sheets Data Validation (dropdowns) for standard Google Cloud parameters. For example, the GCP_Region column should only allow valid regions (e.g., us-central1, europe-west4), and Resource_Type should be restricted to supported Terraform resources (e.g., google_storage_bucket, google_compute_instance). This prevents typos that could lead Gemini to generate invalid resource types or arguments.
- The “Natural Language” Column: This is where the magic of using an LLM shines. Include a column titled Architecture_Notes or Specific_Requirements. Instead of creating a dozen columns for every possible Terraform argument, let users write plain-English instructions here, such as: “Ensure this bucket has uniform bucket-level access enabled and a lifecycle rule to transition to Coldline after 30 days.” Gemini excels at parsing these natural language constraints and injecting the correct HCL arguments into the generated output.
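Dropdowns stop most typos at entry time. However, invalid values can still slip through, for example when a validation rule is set to show a warning instead of rejecting input. For that reason, it is worth re-checking rows in code before they reach the prompt. The sketch below assumes the column names above and a hand-maintained allow-list. The specific regions and resource types listed are only examples, and the function name is hypothetical.

```javascript
// Example allow-lists; extend them to match what your modules support.
const ALLOWED_REGIONS = new Set(["us-central1", "europe-west4", "asia-southeast1"]);
const ALLOWED_RESOURCE_TYPES = new Set([
  "google_storage_bucket",
  "google_compute_instance",
  "google_sql_database_instance",
  "google_container_cluster",
]);
const REQUIRED_COLUMNS = ["Resource_Type", "Resource_Name", "GCP_Region"];

/**
 * Returns a list of human-readable problems for one row object
 * (header -> value). An empty array means the row can be sent to Gemini.
 */
function validateRow(row) {
  const problems = [];
  for (const column of REQUIRED_COLUMNS) {
    if (row[column] === undefined || String(row[column]).trim() === "") {
      problems.push(`${column} is empty`);
    }
  }
  if (row.GCP_Region && !ALLOWED_REGIONS.has(row.GCP_Region)) {
    problems.push(`Unsupported region: ${row.GCP_Region}`);
  }
  if (row.Resource_Type && !ALLOWED_RESOURCE_TYPES.has(row.Resource_Type)) {
    problems.push(`Unsupported resource type: ${row.Resource_Type}`);
  }
  return problems;
}
```

Rows that return problems can be written back to a status column instead of being sent to the model. This keeps obviously bad input out of the prompt entirely.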

Standardizing Cloud Environments and Variables

Infrastructure is rarely deployed in a vacuum; it spans across multiple environments like Development, Staging, and Production. If your Master Configuration Sheet doesn’t account for environment standardization, Gemini will likely generate hardcoded, monolithic Terraform files rather than dynamic, reusable modules.

To enforce cloud engineering best practices directly from Google Sheets, you should structure your environment variables systematically:

- The “Globals” Tab: Create a dedicated sheet (tab) within your workbook specifically for global variables. It should operate as a key-value store containing base configurations like Billing_Account_ID, Organization_ID, Default_Project_Prefix, and State_Bucket_Name. When querying Gemini, pass this tab as global context so the AI knows exactly which GCP organization it is writing code for.
- Environment-Specific Scoping: You can handle environments in one of two ways. The first is an Environment column in your main resource sheet, where a user specifies whether a row belongs to dev, uat, or prod. The second (and often cleaner) approach is to have separate tabs for each environment. When the automation runs, it can instruct Gemini to generate a main.tf for the resources, alongside environment-specific terraform.tfvars files based on the active tab.
- Enforcing Naming Conventions: Prompt engineering starts in the spreadsheet. You can use hidden columns with Google Sheets formulas (e.g., =CONCATENATE(Environment, "-", AppName, "-", ResourceType), where each name stands for the corresponding cell in the same row, such as A2, B2, and C2) to automatically generate standardized GCP resource names. Passing these pre-calculated, standardized names to Gemini helps ensure that the generated Terraform follows your organization’s cloud naming conventions. It also reduces the cognitive load on the AI and limits naming drift across your cloud environments.
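The same conventions can also be enforced in script, instead of or in addition to hidden formula columns. The sketch below does two things. It turns the Globals tab's key-value rows into an object for the prompt context. It also builds resource names that match the naming pattern used by many Compute Engine resources: lowercase letters, digits, and hyphens, starting with a letter, and at most 63 characters. Both function names are hypothetical. Some resources, such as Cloud Storage buckets, follow different rules, so treat the pattern as a starting point.

```javascript
/**
 * Converts rows from the "Globals" tab, e.g. [["Organization_ID", "123"], ...],
 * into a plain object. Blank keys are skipped; later duplicates win.
 */
function globalsToObject(rows) {
  const globals = {};
  for (const [key, value] of rows) {
    const name = String(key == null ? "" : key).trim();
    if (name !== "") {
      globals[name] = value;
    }
  }
  return globals;
}

/**
 * Builds "<env>-<app>-<type>" names that must match the pattern
 * ^[a-z]([-a-z0-9]{0,61}[a-z0-9])?$ used by many Compute Engine resources.
 */
function buildResourceName(environment, appName, resourceType) {
  const name = [environment, appName, resourceType]
    .map((part) =>
      String(part)
        .toLowerCase()
        .replace(/[^a-z0-9]+/g, "-") // collapse spaces, underscores, etc. to "-"
        .replace(/^-+|-+$/g, "")     // trim leading/trailing hyphens per part
    )
    .filter((part) => part !== "")
    .join("-")
    .slice(0, 63)
    .replace(/-+$/, ""); // truncation must not leave a trailing hyphen

  if (!/^[a-z]([-a-z0-9]{0,61}[a-z0-9])?$/.test(name)) {
    throw new Error(`Cannot build a valid resource name from: ${name}`);
  }
  return name;
}
```

For example, `buildResourceName("Prod", "Billing API", "vm")` produces `prod-billing-api-vm`. A name that starts with a digit is rejected rather than silently altered.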

Building the Generation Engine

The true power of this architecture lies in the generation engine: a Google Apps Script project that acts as the connective tissue between your spreadsheet frontend, the Gemini AI brain, and your version control system. By combining built-in Google Workspace services with external APIs, we can create a seamless, event-driven pipeline that transforms plain-text infrastructure requirements into deployable code. In this simplified variant, Apps Script calls the Gemini API directly instead of routing requests through the serverless middleware described earlier.

Extracting Data with SpreadsheetApp

To begin, we need to pull the infrastructure specifications defined by the user out of Google Sheets. In the Google Apps Script environment, we utilize the SpreadsheetApp service to programmatically interact with our workbook.

The goal here is to read the structured data—such as project IDs, resource types, regions, and instance sizes—and format it into a clean JSON object that our AI model can easily digest. We typically bind this script to a custom menu button in the Google Sheet, allowing users to trigger the extraction manually once they have filled out their requirements.

Here is a streamlined example of how to extract this data:

```javascript
function extractInfrastructureData() {
  const sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("Infra_Requests");
  if (!sheet) {
    throw new Error('Sheet "Infra_Requests" not found');
  }
  const values = sheet.getDataRange().getValues();

  // Assuming row 1 contains headers
  const headers = values[0];
  const requests = [];

  for (let i = 1; i < values.length; i++) {
    const rowObject = {};
    for (let j = 0; j < headers.length; j++) {
      rowObject[headers[j]] = values[i][j];
    }

    // Only process rows that are marked as 'Pending'
    if (rowObject['Status'] === 'Pending') {
      requests.push(rowObject);
    }
  }

  return requests;
}
```

By parsing the rows into an array of JSON objects, we create a standardized payload. This payload serves as the dynamic context we will inject into our prompt for Gemini.
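One refinement worth considering is to keep each request's sheet row number alongside its data. A later step, such as the status write-back described in the validation section, can then update the right row. The core loop does not need SpreadsheetApp at all, which makes it easy to test outside Apps Script. The sketch below assumes the same Status column and a hypothetical `_row` field.

```javascript
/**
 * Pure version of the extraction loop: takes the 2D array returned by
 * getDataRange().getValues() and returns pending requests, each tagged
 * with its 1-based sheet row number in a hypothetical "_row" field.
 */
function extractPendingRequests(values, statusColumn = "Status", pendingValue = "Pending") {
  if (!values || values.length < 2) {
    return []; // header only, or empty sheet
  }
  const headers = values[0];
  const requests = [];
  for (let i = 1; i < values.length; i++) {
    const row = {};
    headers.forEach((header, j) => {
      row[header] = values[i][j];
    });
    if (row[statusColumn] === pendingValue) {
      row._row = i + 1; // values[0] is sheet row 1, so values[i] is row i + 1
      requests.push(row);
    }
  }
  return requests;
}
```

In Apps Script, this would be called as `extractPendingRequests(sheet.getDataRange().getValues())`. The stored row number can later be used with `sheet.getRange(row, column).setValue(...)`.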

Prompting Gemini for Valid Terraform HCL

With our data extracted, the next step is to pass it to the Gemini API. This is where prompt engineering becomes critical. Large Language Models (LLMs) are naturally conversational; if you simply ask Gemini to “write Terraform for this data,” it will likely return the code wrapped in Markdown formatting, accompanied by a polite greeting and a detailed explanation of how the code works.

For an automated pipeline, this conversational fluff is fatal. We need strict, raw, and syntactically valid HashiCorp Configuration Language (HCL). To achieve this, we must craft a rigid system prompt and utilize UrlFetchApp to call the Gemini API.

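```javascript
function generateTerraformWithGemini(infraData) {
  const apiKey = PropertiesService.getScriptProperties().getProperty('GEMINI_API_KEY');
  // Model names are versioned and retired over time; check the current model list.
  const endpoint = `https://generativelanguage.googleapis.com/v1beta/models/gemini-1.5-pro:generateContent?key=${apiKey}`;

  const prompt = `
You are an expert Google Cloud Infrastructure as Code engineer.
Based on the following JSON payload, generate the corresponding Terraform HCL code.

REQUIREMENTS:
- Use the official 'google' provider.
- Output ONLY valid HCL code.
- DO NOT wrap the output in markdown code blocks (e.g., \`\`\`hcl).
- DO NOT include any explanations, greetings, or comments outside the HCL.

PAYLOAD:
${JSON.stringify(infraData, null, 2)}
`;

  const payload = {
    "contents": [{ "parts": [{ "text": prompt }] }]
  };

  const options = {
    "method": "post",
    "contentType": "application/json",
    "payload": JSON.stringify(payload),
    "muteHttpExceptions": true // inspect the status code below instead of throwing
  };

  const response = UrlFetchApp.fetch(endpoint, options);
  if (response.getResponseCode() !== 200) {
    throw new Error(`Gemini API error (${response.getResponseCode()}): ${response.getContentText()}`);
  }
  const jsonResponse = JSON.parse(response.getContentText());

  // Extract the raw text from the first candidate; it can be missing
  // (e.g. a blocked response), so fail loudly instead of returning undefined.
  const candidate = jsonResponse.candidates && jsonResponse.candidates[0];
  if (!candidate || !candidate.content || !candidate.content.parts) {
    throw new Error(`No usable candidate in Gemini response: ${response.getContentText()}`);
  }
  return candidate.content.parts[0].text.trim();
}
```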

By explicitly forbidding Markdown blocks and explanations, we make it much more likely that the returned string is pure HCL, ready to be saved as a .tf file. Prompt instructions are not a strict guarantee, however.
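As a defensive measure in case the model adds a fence anyway, a small post-processing step before saving costs very little. The sketch below uses a hypothetical function name. It removes a single surrounding Markdown fence (three backticks with an optional language tag) and leaves already-clean HCL untouched apart from trimming.

```javascript
/**
 * Removes one surrounding Markdown code fence (three backticks, with an
 * optional language tag such as hcl) from a model response.
 * Text without a surrounding fence is returned trimmed.
 */
function stripMarkdownFences(text) {
  const trimmed = String(text).trim();
  // Opening fence + optional tag + newline, body, optional newline, closing fence.
  const match = trimmed.match(/^`{3}[A-Za-z0-9_-]*\s*\n([\s\S]*?)\n?`{3}$/);
  return match ? match[1].trim() : trimmed;
}
```

In the pipeline, this would wrap the return value of the Gemini call, for example `stripMarkdownFences(generateTerraformWithGemini(requests))`.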

Exporting to DriveApp and GitHub

The final phase of the generation engine routes the newly minted Terraform code to the appropriate destinations. We want to achieve two things: keep an easily accessible backup in Google Drive, and push the code to a Git repository to trigger our CI/CD pipeline (such as Terraform Cloud or GitHub Actions).

First, we use DriveApp to save a copy of the generated .tf file in a designated Google Drive folder. This provides a quick audit trail for non-developers who might not have GitHub access.

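```javascript
function saveToDrive(fileName, hclContent) {
  const folderId = PropertiesService.getScriptProperties().getProperty('DRIVE_FOLDER_ID');
  const folder = DriveApp.getFolderById(folderId);
  const fullName = `${fileName}.tf`;
  // createFile() never overwrites (Drive allows duplicate names), so reuse a match.
  const existing = folder.getFilesByName(fullName);
  if (existing.hasNext()) {
    existing.next().setContent(hclContent);
  } else {
    folder.createFile(fullName, hclContent, MimeType.PLAIN_TEXT);
  }
}
```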

Next, we integrate with GitHub. Pushing a file to GitHub via Apps Script requires interacting with the GitHub REST API. Because GitHub expects file content to be Base64 encoded, we must use the Utilities.base64Encode() method before making our PUT request. You will also need a Personal Access Token (PAT) stored securely in your Apps Script Properties.

```javascript
function pushToGitHub(fileName, hclContent) {
  const githubToken = PropertiesService.getScriptProperties().getProperty('GITHUB_PAT');
  const repoOwner = "your-org";
  const repoName = "terraform-infra-repo";
  const filePath = `generated/${fileName}.tf`;
  const apiUrl = `https://api.github.com/repos/${repoOwner}/${repoName}/contents/${filePath}`;

  const payload = {
    "message": `Auto-generated infrastructure for ${fileName} via Sheets & Gemini`,
    // GitHub expects Base64; encode as UTF-8 so non-ASCII characters survive.
    "content": Utilities.base64Encode(hclContent, Utilities.Charset.UTF_8),
    "branch": "main"
  };

  const options = {
    "method": "put",
    "headers": {
      "Authorization": `Bearer ${githubToken}`,
      "Accept": "application/vnd.github.v3+json"
    },
    "contentType": "application/json",
    "payload": JSON.stringify(payload),
    "muteHttpExceptions": true // inspect the status code instead of throwing
  };

  const response = UrlFetchApp.fetch(apiUrl, options);
  const code = response.getResponseCode();
  // 201 = file created, 200 = file updated; anything else is a failure.
  if (code !== 200 && code !== 201) {
    throw new Error(`GitHub push failed (${code}): ${response.getContentText()}`);
  }
  Logger.log(`Pushed ${filePath} (HTTP ${code})`);
}
```

(Note: If the file already exists in GitHub, the API requires you to provide the file’s current sha hash in the payload to update it. For simplicity, this snippet assumes a new file creation.)
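To cover the update case, you can first call the same contents endpoint with GET, adding `?ref=<branch>` when you target a non-default branch. If the file exists, the JSON response includes a `sha` field, and that value goes into the PUT body. The payload-building part is sketched below as a plain function; its name and parameters are hypothetical. The content argument is expected to be Base64 already, for example from `Utilities.base64Encode` in Apps Script.

```javascript
/**
 * Builds the request body for GitHub's "create or update file contents"
 * endpoint (PUT /repos/{owner}/{repo}/contents/{path}).
 * existingSha should be the "sha" from a prior GET on the same path,
 * or null/undefined when the file does not exist yet.
 */
function buildContentsPayload(message, base64Content, branch, existingSha) {
  const payload = {
    message: message,
    content: base64Content,
    branch: branch,
  };
  if (existingSha) {
    payload.sha = existingSha; // required by GitHub when updating an existing file
  }
  return payload;
}
```

A GET that returns 404 means the file is new. In that case `existingSha` stays null, and the same PUT creates the file.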

By chaining these functions together (extraction, generation, Drive backup, and GitHub push), you create a serverless engine. A user simply fills out a row in Google Sheets and clicks a button. Moments later, Gemini generates the Terraform code, which is backed up to Google Drive and committed directly to your infrastructure repository, where the CI pipeline can validate it.
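The sketch below shows one way to orchestrate these steps. It assumes the functions are passed in as dependencies, so the chaining logic can be tested without live Google or GitHub services. The `runPipeline` name, the `deps` object, and the use of Resource_Name as the file name are illustrative choices, not part of the original design. Each request is handled independently, so one failure does not block the rest.

```javascript
/**
 * Runs extraction -> generation -> Drive backup -> GitHub push.
 * deps: { extract, generate, saveToDrive, pushToGitHub } (hypothetical wiring;
 * in Apps Script these would be the functions defined above).
 * Returns one result object per request.
 */
function runPipeline(deps) {
  const results = [];
  for (const request of deps.extract()) {
    // Hypothetical file-naming rule: reuse the sheet's Resource_Name column.
    const fileName = String(request.Resource_Name || "unnamed")
      .toLowerCase()
      .replace(/[^a-z0-9_-]+/g, "-");
    try {
      const hcl = deps.generate([request]);
      deps.saveToDrive(fileName, hcl);
      deps.pushToGitHub(fileName, hcl);
      results.push({ fileName, ok: true });
    } catch (err) {
      results.push({ fileName, ok: false, error: String(err.message || err) });
    }
  }
  return results;
}
```

Failures only surface here if the injected functions throw. Any step that merely logs HTTP errors should therefore be changed to throw, or to return a status that the loop checks.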

Expanding the Deployment Ecosystem

While automating Terraform generation streamlines the provisioning of foundational infrastructure, a robust cloud engineering workflow encompasses much more than just the base layer. The integration of Google Sheets, Apps Script, and Gemini AI is not limited to .tf files; it can be treated as a generalized, AI-driven configuration engine. By expanding this architecture, we can extend our automation further up the cloud-native stack to handle application-layer deployments and implement stringent, automated quality gates.

Automating Kubernetes Manifest Generation

If your generated Terraform code is provisioning a Google Kubernetes Engine (GKE) cluster, the next logical step is deploying workloads onto it. Writing Kubernetes YAML manifests manually can be just as tedious and error-prone as writing infrastructure code, making it a perfect candidate for our Gemini-powered pipeline.

We can use the exact same Google Sheets, Apps Script, and Gemini integration to handle application deployments. Imagine adding a new worksheet titled “GKE Workloads” to your Google Sheet. In this sheet, developers input high-level application requirements: service name, container image URL (hosted in Google Artifact Registry), desired replica count, exposed ports, environment variables, and CPU/memory resource limits.

Using Google Apps Script, we extract these rows and construct a targeted prompt for the Gemini API. For example:

“Given the following microservice parameters from the provided JSON array, generate production-ready Kubernetes Deployment and Service manifests. Ensure cloud-native best practices, including the definition of readiness and liveness probes, resource quotas, and use the apps/v1 API.”

Gemini processes this structured data and outputs standard, declarative YAML. This shields application developers from much of Kubernetes' steep learning curve. They simply fill out a familiar spreadsheet, and the pipeline generates the necessary Deployment and Service manifests. With the prompt extended accordingly, it can also generate Ingress and HorizontalPodAutoscaler manifests. These files can then be automatically committed to a repository. From there, a GitOps controller such as Argo CD can sync them to the GKE cluster, or a delivery service such as Google Cloud Deploy can roll them out.
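The following sketch shows how the “GKE Workloads” rows might be turned into that prompt. The column names (Service_Name, Image, Replicas, Port, CPU_Limit, Memory_Limit) and the default limits are hypothetical. The prompt wording adapts the example above. Asking for documents separated by `---` keeps multiple manifests in one response.

```javascript
/**
 * Builds a Gemini prompt from "GKE Workloads" rows.
 * Each row is an object keyed by hypothetical column names.
 */
function buildWorkloadPrompt(rows) {
  const workloads = rows.map((row) => ({
    name: String(row.Service_Name).trim(),
    image: String(row.Image).trim(),
    replicas: Number(row.Replicas) || 1, // default to 1 if blank or not a number
    port: Number(row.Port),
    cpuLimit: String(row.CPU_Limit || "500m"),       // hypothetical default
    memoryLimit: String(row.Memory_Limit || "512Mi"), // hypothetical default
  }));

  return [
    "Given the following microservice parameters from the provided JSON array,",
    "generate production-ready Kubernetes Deployment and Service manifests.",
    "Ensure cloud-native best practices, including readiness and liveness probes",
    "and resource limits, and use the apps/v1 API for Deployments.",
    "Separate YAML documents with a line containing only ---.",
    "Output ONLY YAML, with no Markdown fences or explanations.",
    "",
    "WORKLOADS:",
    JSON.stringify(workloads, null, 2),
  ].join("\n");
}
```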

Validating and Testing Generated Code

Trusting a Large Language Model (LLM) to generate infrastructure and deployment code drastically accelerates development, but blind trust is a recipe for production outages. AI models can occasionally hallucinate, reference deprecated API versions, or introduce subtle syntax errors. Therefore, integrating a robust validation and testing phase is a non-negotiable requirement for this architecture.

Instead of applying the generated code directly, the Apps Script should be configured to commit the Gemini-generated Terraform and Kubernetes files to a version control system, such as Google Cloud Source Repositories or GitHub. This commit should immediately trigger a Continuous Integration (CI) pipeline—like Google Cloud Build—to act as an automated quality gate.

For the Terraform generation, the Cloud Build pipeline should execute a series of strict checks:

- terraform fmt -check to ensure stylistic consistency across the generated codebase.
- terraform validate to catch syntax errors, invalid references, and missing variable definitions.
- tflint (with the Google Cloud ruleset plugin) to enforce Google Cloud provider best practices and catch potential errors that native Terraform commands miss.
- terraform plan to generate a speculative execution plan. This step is crucial, because it lets cloud engineers review exactly which GCP resources will be created, modified, or destroyed before any actual changes occur.

For the Kubernetes manifests, the pipeline can run:

- yamllint to catch basic YAML formatting and indentation issues.
- kubeconform to validate the manifests against the Kubernetes OpenAPI schemas, and pluto to proactively flag deprecated or removed API versions.
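These tools belong in CI. Still, a cheap pre-flight check in Apps Script can catch a common LLM mistake, an outdated apiVersion, before anything is committed. The sketch below scans generated YAML for apiVersion values from a small, non-exhaustive list of API group versions that Kubernetes no longer serves. For example, the extensions/v1beta1, apps/v1beta1, and apps/v1beta2 workload APIs were removed in 1.16, and networking.k8s.io/v1beta1 was removed in 1.22. It is a line-based heuristic, not a YAML parser, and it does not replace kubeconform or pluto. The function name is hypothetical.

```javascript
// Non-exhaustive list of API group versions removed from Kubernetes
// (e.g. apps/v1beta2 in 1.16, networking.k8s.io/v1beta1 in 1.22,
// batch/v1beta1 and policy/v1beta1 in 1.25, autoscaling/v2beta2 in 1.26).
const REMOVED_API_VERSIONS = new Set([
  "extensions/v1beta1",
  "apps/v1beta1",
  "apps/v1beta2",
  "networking.k8s.io/v1beta1",
  "batch/v1beta1",
  "policy/v1beta1",
  "autoscaling/v2beta1",
  "autoscaling/v2beta2",
]);

/**
 * Returns the removed apiVersion values found in a (multi-document) YAML string.
 * Heuristic: only inspects top-level "apiVersion:" lines.
 */
function findRemovedApiVersions(yamlText) {
  const found = [];
  for (const line of String(yamlText).split("\n")) {
    const match = line.match(/^apiVersion:\s*["']?([^"'\s#]+)["']?/);
    if (match && REMOVED_API_VERSIONS.has(match[1])) {
      found.push(match[1]);
    }
  }
  return found;
}
```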

To create a truly seamless developer experience, you can close the automation loop. If the Cloud Build pipeline fails any of these checks, it can trigger a webhook back to an Apps Script endpoint. This script can then update a “Deployment Status” column directly in the Google Sheet to “Validation Failed,” appending the error logs. If it passes, the status updates to “Ready for Review,” keeping the entire workflow visible and manageable from the original spreadsheet interface.
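The following sketches the webhook side. It assumes the CI step sends the output of `terraform validate -json`, which reports a top-level `valid` flag, `error_count` and `warning_count`, and a `diagnostics` array whose entries carry `severity` and `summary`. The function name is hypothetical. The status strings mirror the column values described above; the Apps Script handler would write the returned text into the Deployment Status column.

```javascript
/**
 * Turns `terraform validate -json` output into a short status string
 * for the "Deployment Status" column.
 */
function summarizeValidation(validateJsonText, maxMessages = 3) {
  const result = JSON.parse(validateJsonText);
  if (result.valid) {
    const warnings = result.warning_count || 0;
    return warnings > 0
      ? `Ready for Review (${warnings} warning${warnings === 1 ? "" : "s"})`
      : "Ready for Review";
  }
  // Keep only the first few error summaries so the cell stays readable.
  const errors = (result.diagnostics || [])
    .filter((d) => d.severity === "error")
    .slice(0, maxMessages)
    .map((d) => d.summary);
  return `Validation Failed: ${errors.join("; ")}`;
}
```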

Scale Your Architecture with Confidence

When your organization experiences rapid growth, your cloud infrastructure must scale seamlessly without introducing fragility or operational bottlenecks. By combining the accessible interface of Google Sheets with the generative capabilities of Gemini AI and the robust state management of Terraform, you are essentially building an intelligent, highly scalable infrastructure engine.

This automated approach removes much of the traditional friction of hand-writing HCL (HashiCorp Configuration Language). Instead of getting bogged down by syntax errors, complex module dependencies, or misconfigured Google Cloud resources, your engineering team can focus on high-level architectural design and strategic growth. Gemini AI acts as an expert translator, converting the scaling parameters defined in your spreadsheet into Terraform code. Meanwhile, CI validation and human review catch what the model gets wrong. The result is an infrastructure lifecycle that scales on demand, backed by automated code generation and automated verification.

Empowering DevOps Teams with Standardized Workflows

The true magic of this integration lies in how it fundamentally transforms daily DevOps operations. Standardization is the bedrock of reliable cloud engineering, and this architecture enforces it by design. By utilizing Google Sheets as the single source of truth for resource parameters—such as compute instance sizing, IAM role bindings, and VPC subnet configurations—you create a standardized, easily auditable intake process.

Gemini AI then ingests these structured inputs and generates consistent Terraform configurations that follow the security and compliance guardrails encoded in your prompts and enforced by your CI checks. This workflow bridges the gap between technical and non-technical teams. Product managers or application developers can request infrastructure through a familiar spreadsheet interface, while DevOps engineers maintain strict governance by reviewing the AI-generated code before it is merged into the Git repository and deployed via CI/CD pipelines.

Ultimately, this empowers your DevOps teams by:

- Eliminating Boilerplate: Freeing engineers from writing repetitive Terraform configurations from scratch.
- Minimizing Configuration Drift: Ensuring that every environment is provisioned using the exact same standardized logic.
- Accelerating Time-to-Market: Drastically reducing the lead time between an infrastructure request and its actual deployment in Google Cloud.

Book a Solution Discovery Call with Vo Tu Duc

Ready to revolutionize how your organization provisions and manages its Google Cloud infrastructure? Implementing an AI-driven, spreadsheet-to-Terraform pipeline requires careful architectural planning, deep Google Workspace integration, and robust Cloud Engineering expertise.

Take the guesswork out of your automation journey. Book a Solution Discovery Call with Vo Tu Duc to explore how this innovative workflow can be custom-tailored to your specific business requirements. Whether you are looking to optimize your current DevOps pipelines, securely integrate Gemini AI into your daily operations, or build a highly scalable Google Cloud environment from the ground up, Vo Tu Duc provides the strategic guidance and technical mastery necessary to turn your infrastructure goals into reality. Reach out today to engineer your next operational breakthrough.
